The AWS Console Is Hiding Your Savings From You

Not intentionally. But effectively.

AWS has hundreds of pricing dimensions, dozens of storage classes, multiple commitment programs with overlapping rules, and optimization features that stay off until you opt in. The engineers who use AWS every day know three or four of these levers. The teams that cut their AWS bills by 30 to 40% know all of them.

This playbook covers 14 specific techniques organized by AWS service. Not the generic advice you already know (right-size your instances, delete unused snapshots). The techniques buried three levels deep in AWS documentation that your team has almost certainly not implemented, each with the exact numbers so you can calculate your own savings before you spend a single hour implementing them.

Start with the ones in your highest-cost services. For most AWS accounts, EC2 and related compute costs represent 50 to 65% of the total bill. Start there.

EC2 and Compute

1. Migrate EBS Volumes from gp2 to gp3 Today

This is the most consistently overlooked optimization in AWS, and it is genuinely baffling that more teams have not done it.

Amazon EBS gp3 is 20% cheaper than gp2 per GB. It also provides 3,000 IOPS baseline at no extra cost (gp2 gives you 3 IOPS per GB, meaning a 100GB gp2 volume gets 300 IOPS). And gp3 provides 125 MB/s baseline throughput regardless of volume size.

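In other words: gp3 is cheaper AND better in almost every performance dimension. The exception is larger gp2 volumes. Above 1TB, gp2's 3 IOPS per GB baseline exceeds 3,000 IOPS, and gp2 volumes larger than about 170GB can deliver up to 250 MB/s of throughput. Matching that performance on gp3 means provisioning extra IOPS or throughput at additional cost, so check those volumes before you migrate them.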

The migration requires zero downtime. A single AWS CLI command modifies the volume in place:

aws ec2 modify-volume --volume-id vol-xxxxxxxxx --volume-type gp3

For a company with 10TB of gp2 volumes at $0.10/GB/month, migration to gp3 at $0.08/GB/month saves $200/month, or $2,400/year. Scale this across a larger environment and the savings compound quickly.
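The arithmetic above can be sketched as a small calculator. This is a minimal sketch, not an official pricing tool: the per-GB prices come from the text, while the charges for extra gp3 IOPS and throughput are assumed us-east-1 list prices that you should verify for your region. The function names are illustrative.

```python
# Prices in $/month. The per-GB rates come from the article; the extra
# IOPS and throughput rates are assumptions (us-east-1 list prices).
GP2_PER_GB = 0.10
GP3_PER_GB = 0.08
GP3_PER_EXTRA_IOPS = 0.005   # per provisioned IOPS above the 3,000 baseline
GP3_PER_EXTRA_MBPS = 0.04    # per MB/s above the 125 MB/s baseline


def gp2_monthly_cost(size_gb: float) -> float:
    """gp2 is billed on size alone."""
    return size_gb * GP2_PER_GB


def gp3_monthly_cost(size_gb: float, iops: int = 3000, throughput_mbps: int = 125) -> float:
    """gp3 is billed on size plus anything provisioned above the baseline."""
    extra_iops = max(0, iops - 3000)
    extra_mbps = max(0, throughput_mbps - 125)
    return (size_gb * GP3_PER_GB
            + extra_iops * GP3_PER_EXTRA_IOPS
            + extra_mbps * GP3_PER_EXTRA_MBPS)


def monthly_savings(size_gb: float, iops: int = 3000, throughput_mbps: int = 125) -> float:
    """Positive means gp3 is cheaper for this volume."""
    return gp2_monthly_cost(size_gb) - gp3_monthly_cost(size_gb, iops, throughput_mbps)


if __name__ == "__main__":
    # The 10TB example from the text, at gp3 baseline performance.
    print(f"10TB at baseline: ${monthly_savings(10_000):,.2f}/month")
    # A 2TB gp2 volume has a 6,000 IOPS baseline; match it on gp3.
    print(f"2TB at 6,000 IOPS: ${monthly_savings(2_000, iops=6000):,.2f}/month")
```

Running the second case shows why large gp2 volumes deserve a look before migration: the saving shrinks once you pay to match their higher baseline.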

Run this to find all gp2 volumes in your account:

aws ec2 describe-volumes \
  --filters Name=volume-type,Values=gp2 \
  --query 'Volumes[*].[VolumeId,Size,State]' \
  --output table

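For volumes that fit within gp3's baseline, there is no performance downgrade and no downtime. Issuing the change takes about 10 minutes, the volume optimizes in the background, and the savings are permanent. The only reason not to have done it already is not knowing it existed.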

2. AWS Compute Savings Plans vs EC2 Instance Savings Plans: Choose Deliberately

Most teams either avoid commitment programs entirely (paying full on-demand) or default to whichever Savings Plan is recommended first in the console. Neither approach is optimal.

There are two fundamentally different AWS Savings Plans, and the choice between them is worth real money:

Compute Savings Plans: Commit to a dollar-per-hour spend. The discount applies across any EC2 instance family, size, OS, tenancy, and region. Also applies to Fargate and Lambda. Maximum flexibility. Typical savings: 29 to 46% vs on-demand.

EC2 Instance Savings Plans: Commit to a specific instance family in a specific region (e.g., m5 in us-east-1). Any size and OS within that family qualifies. More restrictive than Compute SPs but gives 2 to 5 percentage points more savings on the committed instances. Typical savings: 32 to 51% vs on-demand.

The right choice depends on your workload predictability:

- Workloads running the same instance family for the next year: EC2 Instance Savings Plans capture the maximum discount

- Workloads where you expect to change instance families (Graviton migration, right-sizing): Compute Savings Plans protect you from over-committing to a family you will leave

The worst choice: committing to EC2 Instance Savings Plans for a specific family and then migrating to Graviton six months later. You keep paying for the commitment but the discount no longer applies to your running instances.

Always right-size before committing. Then calculate your stable baseline (the compute spend that will not change regardless of what you optimize). Commit to 70% of that baseline with Compute Savings Plans, leaving the remaining 30% on-demand for flexibility.
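To see why a stranded commitment hurts, here is a rough sketch of the 12-month math. It is a deliberate simplification: the discount rates are illustrative values picked from the ranges quoted above, the commitment is sized to the whole workload, and the lower price of the instances you migrate to is ignored. The function name and parameters are hypothetical.

```python
def twelve_month_cost(on_demand_monthly: float, discount: float, months_covered: int) -> float:
    """Total 12-month spend for a 1-year commitment sized to the full workload.

    For the first `months_covered` months the discount applies. After that the
    workload runs on instances the plan does not cover, so each month costs
    the unused commitment plus full on-demand for the new instances.
    """
    if not 0 <= months_covered <= 12:
        raise ValueError("months_covered must be between 0 and 12")
    commitment = on_demand_monthly * (1 - discount)
    covered = commitment * months_covered
    stranded = (commitment + on_demand_monthly) * (12 - months_covered)
    return covered + stranded


if __name__ == "__main__":
    workload = 10_000  # on-demand equivalent, $/month (illustrative)

    # EC2 Instance SP at an assumed 40% discount, family abandoned after 6 months.
    print(f"Instance SP, migrate at month 6: ${twelve_month_cost(workload, 0.40, 6):,.0f}")
    # Compute SP at an assumed 36% discount keeps applying after the migration.
    print(f"Compute SP, any migration:       ${twelve_month_cost(workload, 0.36, 12):,.0f}")
    # Instance SP with no migration, for reference.
    print(f"Instance SP, no migration:       ${twelve_month_cost(workload, 0.40, 12):,.0f}")
```

Under these assumptions the extra four points of discount are worth a few thousand dollars a year, while a mid-term family change costs many times that.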

Our 7-step cloud cost optimization guide explains why right-sizing before committing is the sequencing rule most teams get backwards.

3. Graviton Migration Is Not All-or-Nothing

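AWS Graviton (ARM-based) instances are roughly 20 to 40% cheaper than equivalent x86 instances, depending on the instance family and how much of the performance gain you count. On hourly price alone, m7g.xlarge vs m7i.xlarge is $0.1632/hr vs $0.2016/hr, a 19% savings (put the other way, the x86 instance costs about 23% more).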

What stops most teams: "our application is not ARM-compatible." This is often assumed rather than tested. Most modern applications compiled with standard toolchains (Go, Node.js, Python, Java, .NET 6+) run on Graviton without any code changes. Docker images need to be rebuilt for ARM64, but that is a CI/CD change, not an application change.

The practical path: pick one non-production service. Rebuild its Docker image for ARM64 (add --platform linux/arm64 to your build). Deploy on a Graviton instance. Run it for a week. If it works, migrate production.

For databases, RDS on Graviton (db.r6g and db.m6g on Graviton2, or the newer db.r8g and db.m8g families) is advertised as delivering up to 35% better price-performance than comparable x86 instances. Lambda functions configured for the arm64 architecture run on Graviton2, and arm64 functions cost 20% less per GB-second than x86.

One thing to verify before migrating: any native dependencies compiled from C/C++ source need to be rebuilt for ARM64. Most common ones (OpenSSL, libpq, etc.) are fine. Anything with unusual native extensions needs testing.

4. Fargate Spot Is Not The Same As EC2 Spot (And Most Teams Don't Know It Exists)

AWS Fargate Spot provides up to 70% discount on Fargate container tasks, similar to EC2 Spot but for serverless containers. Tasks can be interrupted with a 2-minute warning.

Most teams assume spot pricing is an EC2-only concept. It is not. For ECS workloads on Fargate, you can configure a capacity provider strategy that routes eligible tasks to Fargate Spot automatically.

The workloads that work well on Fargate Spot: batch processing, data transformation jobs, CI/CD pipeline tasks, non-critical background workers, and any workload that handles graceful shutdown. Tasks that receive the 2-minute SIGTERM notification can checkpoint their work and restart safely.
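A minimal sketch of what "handles graceful shutdown" can look like inside a batch task, assuming a Python worker that processes a list of items. The `work` and `save_checkpoint` callbacks are hypothetical placeholders for your own processing step and your own checkpoint store; nothing here is specific to an AWS SDK.

```python
import signal


class GracefulStop:
    """Tracks whether the task has been asked to stop."""

    def __init__(self):
        self.requested = False

    def request(self, signum=None, frame=None):
        # Keep the handler tiny: set a flag and let the work loop react.
        self.requested = True

    def install(self):
        # Must be called from the main thread of the task's process.
        signal.signal(signal.SIGTERM, self.request)


def process_items(items, work, save_checkpoint, stop):
    """Run `work` on each item; on a stop request, checkpoint and return early.

    `work` and `save_checkpoint` are hypothetical callbacks supplied by the
    caller (for example, a transform step and a write to durable storage).
    Returns the index of the first unprocessed item.
    """
    for index, item in enumerate(items):
        if stop.requested:
            save_checkpoint(index)
            return index
        work(item)
    return len(items)


if __name__ == "__main__":
    stop = GracefulStop()
    stop.install()
    resume_at = process_items(range(1000), work=lambda item: None,
                              save_checkpoint=print, stop=stop)
```

The design choice is to check the flag between items rather than interrupt work in progress, so each item should be short enough to finish well inside the interruption window.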

For a company spending $8,000/month on Fargate for batch and background workloads, moving 70% of those tasks to Fargate Spot at a 70% discount saves roughly $3,920/month, or $47,040/year.

S3 and Storage

5. S3 Intelligent-Tiering Has a Hidden Cost Most Teams Ignore

S3 Intelligent-Tiering sounds like a free automatic optimizer: objects move between access tiers based on usage patterns, saving you money without any management. Many teams enable it across all buckets.

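The catch: S3 Intelligent-Tiering charges $0.0025 per 1,000 monitored objects per month for monitoring and automation. This fee is independent of storage cost. Objects smaller than 128KB are exempt from it, but they are also never moved to a cheaper tier, so they gain nothing from the storage class.

For buckets containing millions of small objects, the economics fall apart. If you have 50 million objects averaging 10KB in size (total 500GB), none of them are eligible for tiering and the savings are zero. If those 50 million objects average 150KB instead (about 7.5TB), the monitoring fee is $125/month, while moving every single object to the Infrequent Access tier would save at most about $79/month at us-east-1 list prices ($0.023 vs $0.0125 per GB). The monitoring fee exceeds the best-case storage savings.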

Intelligent-Tiering is cost-effective only when:

- Average object size is comfortably above 128KB (smaller objects are never tiered)

- Objects regularly go 30 or more consecutive days without access (the threshold for moving to the lower-cost Infrequent Access tier)

- The monitoring cost is less than the estimated storage savings
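The conditions above can be checked with a rough calculator. This sketch makes several assumptions that are not in the text: us-east-1 list prices for S3 Standard and the Infrequent Access tier, a 128KB minimum size below which objects are neither monitored nor tiered, and a single average object size standing in for the real size distribution. All names are illustrative.

```python
MONITORING_PER_1000_OBJECTS = 0.0025  # $/month, from the text
STANDARD_PER_GB = 0.023               # $/GB-month, assumed S3 Standard rate
INFREQUENT_PER_GB = 0.0125            # $/GB-month, assumed Infrequent Access tier rate
MIN_TIERED_OBJECT_KB = 128            # assumption: smaller objects are not monitored or tiered


def net_monthly_benefit(object_count: int, avg_object_kb: float, cold_fraction: float) -> float:
    """Best-case tiering savings minus the monitoring fee, in $/month.

    `cold_fraction` is the share of data that ends up in Infrequent Access.
    A negative result means Intelligent-Tiering costs more than it saves.
    """
    if avg_object_kb < MIN_TIERED_OBJECT_KB:
        return 0.0  # no fee, but no tiering either
    total_gb = object_count * avg_object_kb / 1_000_000  # KB to GB (decimal)
    savings = total_gb * cold_fraction * (STANDARD_PER_GB - INFREQUENT_PER_GB)
    fee = object_count / 1000 * MONITORING_PER_1000_OBJECTS
    return savings - fee


def break_even_object_kb(cold_fraction: float) -> float:
    """Average object size at which savings equal the monitoring fee."""
    if cold_fraction <= 0:
        raise ValueError("cold_fraction must be positive")
    fee_per_object = MONITORING_PER_1000_OBJECTS / 1000
    savings_per_kb = (STANDARD_PER_GB - INFREQUENT_PER_GB) * cold_fraction / 1_000_000
    return fee_per_object / savings_per_kb


if __name__ == "__main__":
    print(f"50M x 150KB, all cold: ${net_monthly_benefit(50_000_000, 150, 1.0):,.2f}/month")
    print(f"50M x 1MB, all cold:   ${net_monthly_benefit(50_000_000, 1000, 1.0):,.2f}/month")
    print(f"Break-even size, half the data cold: {break_even_object_kb(0.5):,.0f}KB")
```

Even in the best case where everything goes cold, objects need to be well above the 128KB eligibility threshold before the fee pays for itself under these assumed prices.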

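For buckets with many small objects (thumbnails, metadata files, event records), use explicit lifecycle policies instead. Lifecycle rules are free to configure, but each transition is billed as a request and S3 Standard-IA bills every object as if it were at least 128KB, so run the numbers per bucket before transitioning very small objects. For data you no longer need, an expiration rule is the cheapest option of all.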

6. The S3 Transfer Acceleration Trap

S3 Transfer Acceleration routes uploads through CloudFront edge locations for faster transfer speeds from geographically distant clients. It costs $0.04 to $0.08 per GB extra on top of standard PUT/GET costs.

Many teams enable this at bucket creation and never think about it again. The surcharge applies to every transfer that clients send through the bucket's accelerate endpoint. If your users are primarily uploading from the same region as your S3 bucket, Transfer Acceleration provides little or no benefit and the surcharge is wasted spend. If your users are distributed globally, it provides meaningful upload speed improvement but at a real cost that should be justified by user experience requirements.

Check which buckets have Transfer Acceleration enabled:

aws s3api get-bucket-accelerate-configuration --bucket YOUR_BUCKET_NAME

For each bucket with acceleration enabled, ask: are users uploading from a region far from this bucket, and does the speed improvement justify $0.04 to $0.08/GB extra? If the answer is not a clear yes, disable it.

7. EBS Snapshot Accumulation Is Costing More Than You Think

EBS snapshots are incremental after the first, which makes each individual snapshot cheap. What is not cheap is the compounding effect of keeping snapshots for months or years without lifecycle management.

Snapshots in us-east-1 cost $0.05/GB/month. A 500GB database with daily snapshots and no lifecycle policy generates:

- Day 1: full snapshot ~100GB (assuming 20% initial data) = $5/month

- Day 30: 30 snapshots (1 full plus 29 incremental); at about 8GB of daily changes, total stored data is ~330GB = about $16.50/month

- Month 6: at the same ~8GB of daily changes, total snapshot storage is ~1,550GB = $77.50/month

A lifecycle policy deleting snapshots older than 14 days and keeping weekly snapshots for 90 days brings that same database's snapshot cost to roughly $12/month. The difference compounds across every database and volume in your environment.
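Snapshot growth is easy to model roughly. The sketch below assumes every day's changed blocks are unique and that a retention window simply caps how many days of increments you keep; real EBS snapshot accounting is more subtle, because blocks still referenced by newer snapshots survive deletion. The inputs are illustrative, and the function names are hypothetical.

```python
SNAPSHOT_PER_GB = 0.05  # $/GB-month in us-east-1, from the text


def snapshot_storage_gb(first_full_gb: float, daily_change_gb: float, days_retained: int) -> float:
    """Approximate stored data: one full snapshot plus one incremental per later day."""
    if days_retained < 1:
        return 0.0
    return first_full_gb + daily_change_gb * (days_retained - 1)


def snapshot_monthly_cost(first_full_gb: float, daily_change_gb: float, days_retained: int) -> float:
    return snapshot_storage_gb(first_full_gb, daily_change_gb, days_retained) * SNAPSHOT_PER_GB


if __name__ == "__main__":
    # Sensitivity check: the daily change rate dominates the bill.
    for change_gb, days in [(50, 30), (8, 180), (8, 14)]:
        cost = snapshot_monthly_cost(100, change_gb, days)
        print(f"{change_gb}GB/day kept {days} days: ${cost:,.2f}/month")
```

Plugging in your own change rate and retention window gives a quick estimate of what a Data Lifecycle Manager policy would be worth per volume.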

Use AWS Data Lifecycle Manager to enforce retention policies automatically. It is free.

Lambda and Serverless

8. Lambda Memory Is Not What You Think It Is (And AWS Has a Tool to Find the Right Setting)

Lambda pricing is based on two dimensions: number of invocations and GB-seconds of compute (memory allocation x duration). Engineers instinctively set memory low to save cost. This is often wrong.

Lambda allocates CPU proportional to memory. A function at 1,769MB gets the equivalent of one full vCPU; a function at 256MB gets roughly one-seventh of that. Doubling memory can cut duration by 50 to 70% for CPU-bound functions, and whenever duration falls by more than half, the doubled memory setting actually reduces cost.

AWS Lambda Power Tuning is an open-source tool, deployable from the Serverless Application Repository, that tests your function across memory configurations and shows the cost-optimal setting. It runs against your actual function with real invocations and reports cost and duration at each memory level.

Most teams that run Lambda Power Tuning find their functions are misconfigured. CPU-bound functions are under-allocated (more memory would be cheaper). I/O-bound functions are over-allocated (less memory would be cheaper without affecting duration). The tool takes 30 minutes to run and typically identifies savings of 15 to 40% on Lambda costs.
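The memory-versus-duration trade-off is simple enough to sanity-check by hand before running the tool. A minimal sketch, using the on-demand GB-second price quoted in this article and an assumed request price of $0.20 per million invocations; the workload numbers are made up for illustration.

```python
PRICE_PER_GB_SECOND = 0.0000166667    # on-demand x86 rate quoted in this article
PRICE_PER_REQUEST = 0.20 / 1_000_000  # assumed request charge


def lambda_monthly_cost(memory_mb: int, avg_duration_ms: float, invocations: int) -> float:
    """Compute charge (GB-seconds) plus request charge, in $/month."""
    gb_seconds = (memory_mb / 1024) * (avg_duration_ms / 1000) * invocations
    return gb_seconds * PRICE_PER_GB_SECOND + invocations * PRICE_PER_REQUEST


if __name__ == "__main__":
    invocations = 10_000_000

    # CPU-bound: doubling memory cuts duration by 60% (800ms to 320ms).
    print(f"512MB at 800ms:  ${lambda_monthly_cost(512, 800, invocations):,.2f}")
    print(f"1024MB at 320ms: ${lambda_monthly_cost(1024, 320, invocations):,.2f}")

    # I/O-bound: duration does not change, so more memory only costs more.
    print(f"1024MB at 800ms: ${lambda_monthly_cost(1024, 800, invocations):,.2f}")
```

The break-even is a duration cut of exactly half: anything better than that makes the larger memory setting cheaper, anything worse makes it more expensive.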

9. Lambda Provisioned Concurrency: Know the Break-Even

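Lambda Provisioned Concurrency eliminates cold starts by keeping function instances warm. You pay $0.015 per GB-hour to keep that capacity provisioned, whether or not it is invoked, plus a reduced duration rate of $0.0000097222 per GB-second while the function runs. On-demand Lambda compute costs $0.0000166667 per GB-second.

The break-even calculation: the keep-warm charge is $0.015/GB/hour = $0.0000041667/GB/second. A provisioned instance that is busy 100% of the time therefore costs $0.0000138889/GB/second, about 17% less than on-demand. Below roughly 60% utilization, the keep-warm charge outweighs the cheaper duration rate and provisioned concurrency costs more than on-demand.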

If your function handles sustained traffic where invocations are continuous (more than a few requests per second for hours at a time), provisioned concurrency reduces cost while eliminating cold starts. If your function handles sporadic requests with long gaps, provisioned concurrency costs money to keep instances warm that are never invoked.

The practical rule: if your function averages more than 5 concurrent executions for most of the day, provisioned concurrency for the baseline concurrent count will save money and improve latency. Below that threshold, on-demand invocations are cheaper.
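A short sketch of the break-even arithmetic, assuming us-east-1 x86 list prices. Note the assumption that provisioned instances pay two charges: the keep-warm rate for every provisioned second and a reduced duration rate while executing. The duration rate and all function names are assumptions for illustration; check current pricing before relying on the result.

```python
ON_DEMAND_PER_GB_S = 0.0000166667   # on-demand rate quoted in this article
PC_KEEP_WARM_PER_GB_S = 0.015 / 3600  # $0.015 per GB-hour, quoted in this article
PC_DURATION_PER_GB_S = 0.0000097222   # assumed duration rate on provisioned instances


def hourly_cost_on_demand(memory_gb: float, concurrency: int, utilization: float) -> float:
    """On-demand pays only for the seconds actually spent executing."""
    return memory_gb * concurrency * utilization * 3600 * ON_DEMAND_PER_GB_S


def hourly_cost_provisioned(memory_gb: float, concurrency: int, utilization: float) -> float:
    """Provisioned pays to stay warm all hour, plus a cheaper rate while executing."""
    per_gb_second = PC_KEEP_WARM_PER_GB_S + utilization * PC_DURATION_PER_GB_S
    return memory_gb * concurrency * 3600 * per_gb_second


def break_even_utilization() -> float:
    """Utilization above which provisioned concurrency is the cheaper option."""
    return PC_KEEP_WARM_PER_GB_S / (ON_DEMAND_PER_GB_S - PC_DURATION_PER_GB_S)


if __name__ == "__main__":
    print(f"Break-even utilization: {break_even_utilization():.0%}")
    for utilization in (0.2, 0.6, 1.0):
        od = hourly_cost_on_demand(1.0, 5, utilization)
        pc = hourly_cost_provisioned(1.0, 5, utilization)
        print(f"{utilization:.0%} busy: on-demand ${od:.4f}/hr, provisioned ${pc:.4f}/hr")
```

Here `utilization` means the fraction of each hour the provisioned instances spend executing, which is the number to pull from your concurrency metrics.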

RDS and Databases

10. Multi-AZ vs Read Replicas: Many Teams Pay for Both and Need Only One

RDS Multi-AZ provides automatic failover by maintaining a standby instance in a different availability zone. It does not serve read traffic. It costs approximately 2x the single-instance price.

RDS Read Replicas replicate data asynchronously to one or more instances that serve read queries. They can also be promoted to a primary in a disaster recovery scenario (with manual intervention). They cost approximately the same as the primary instance per replica.

Many teams run both: Multi-AZ for automatic failover plus one or more Read Replicas for read scaling. This is paying 3x or more for scenarios that could be addressed differently.

If your primary need is read scaling and you can tolerate a few minutes of manual failover time, Read Replicas serve both purposes at 2x cost instead of 3x. If you need automatic sub-minute failover with no manual steps, Multi-AZ is essential. If you need both high read throughput AND zero-touch failover, you need both, but verify this requirement explicitly rather than running both by default.

For development and staging databases, Multi-AZ is almost never justified. A single-AZ RDS instance for non-production environments is 50% cheaper.

11. Aurora Serverless v2 Has a Minimum Cost That Surprises Teams

Aurora Serverless v2 scales Aurora database capacity between a configured minimum and maximum number of ACUs (Aurora Capacity Units) based on demand. It sounds perfect for variable workloads.

What the documentation does not lead with: a cluster configured with the long-standing 0.5 ACU minimum is billed for that capacity continuously, even when no queries are running. At $0.12 per ACU-hour, the minimum cost is $0.06/hour, or $43.80/month. This is the always-on floor charge, before any actual query processing.

Aurora Serverless v1 used to be the escape hatch: it scaled to zero ACUs when idle, at the price of a 30 to 60 second resume from zero. AWS has since retired v1, so it is no longer an option for new clusters. On engine versions that support it, v2 can instead be configured with a 0 ACU minimum and automatic pause, which removes the compute floor for idle databases in exchange for a resume delay on the first connection. Verify that your engine version supports this before you plan around it.

The decision:

- Development databases that are idle most of the time: Aurora Serverless v2 with a 0 ACU minimum and auto-pause where your engine version supports it, or a small provisioned instance with scheduling

- Production databases with variable but never-zero traffic: Aurora Serverless v2 (instant scaling, reasonable minimum floor)

- High-traffic production databases: Provisioned Aurora with predictable instances, supplemented with Reserved Instances for 30 to 60% savings

Networking

12. VPC Endpoints Save More Than You Expect

Every time an EC2 instance in a private subnet calls an AWS service (S3, DynamoDB, SSM, Secrets Manager, ECR), that traffic by default routes through a NAT Gateway, incurring NAT Gateway data processing fees at $0.045/GB.

VPC Endpoints route traffic from your VPC directly to AWS services through AWS's private network, bypassing the NAT Gateway entirely. Gateway endpoints for S3 and DynamoDB are completely free. Interface endpoints for other services cost $0.01/AZ/hour plus $0.01/GB processed, which is usually far less than the NAT Gateway processing cost they replace.

For a workload pulling container images from ECR (2GB per deployment, 20 deployments per day):

- Without VPC Endpoint: 40GB/day x $0.045 = $1.80/day = $54/month through NAT Gateway

- With ECR VPC Endpoint: $7.20/AZ/month (interface endpoint at $0.01/hour x 720 hours) + 1,200GB x $0.01/GB = $19.20/month total in one AZ

Savings: about $35/month from one interface endpoint for one service. Most VPC environments can benefit from endpoints for S3, DynamoDB, ECR, Secrets Manager, SSM, and STS. Adding all of these typically saves $100 to $500/month on NAT Gateway processing costs alone.
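The comparison can be sketched as a small function so you can plug in your own traffic. It uses the rates quoted in this section and assumes a 720-hour (30-day) month; the `endpoints` and `az_count` parameters are there because some services need more than one interface endpoint and each AZ is billed separately. Function names are illustrative.

```python
NAT_PROCESSING_PER_GB = 0.045  # from the text
ENDPOINT_PER_AZ_HOUR = 0.01    # from the text
ENDPOINT_PER_GB = 0.01         # from the text


def nat_processing_cost(gb_per_month: float) -> float:
    return gb_per_month * NAT_PROCESSING_PER_GB


def interface_endpoint_cost(gb_per_month: float, az_count: int = 1,
                            endpoints: int = 1, hours: int = 720) -> float:
    """Hourly charge per endpoint per AZ, plus per-GB processing."""
    fixed = endpoints * az_count * hours * ENDPOINT_PER_AZ_HOUR
    return fixed + gb_per_month * ENDPOINT_PER_GB


def break_even_gb(az_count: int = 1, endpoints: int = 1, hours: int = 720) -> float:
    """Monthly traffic above which the endpoint is cheaper than NAT processing."""
    fixed = endpoints * az_count * hours * ENDPOINT_PER_AZ_HOUR
    return fixed / (NAT_PROCESSING_PER_GB - ENDPOINT_PER_GB)


if __name__ == "__main__":
    traffic = 40 * 30  # 40GB/day for 30 days
    print(f"NAT Gateway processing: ${nat_processing_cost(traffic):.2f}/month")
    print(f"One endpoint, one AZ:   ${interface_endpoint_cost(traffic):.2f}/month")
    print(f"One endpoint, three AZs: ${interface_endpoint_cost(traffic, az_count=3):.2f}/month")
    print(f"Break-even traffic (1 AZ): {break_even_gb():.0f}GB/month")
```

The fixed hourly charge is what decides low-traffic cases, so the break-even figure is worth checking per service before adding endpoints everywhere.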

Check your NAT Gateway data processing costs in Cost Explorer. If they exceed $50/month, a VPC endpoint audit will likely pay for the time invested within the first month.

13. NAT Gateway: One Per AZ Often Costs More Than the Cross-AZ Fees It Avoids

This is a configuration that appears architecturally correct but hides a significant cost.

A common pattern: three availability zones, one NAT Gateway in each AZ, each private subnet routing to the NAT Gateway in its own AZ. This looks right for high availability.

The alternative is consolidation. If you keep a single NAT Gateway in AZ-B, an EC2 instance in AZ-A has to cross an AZ boundary to reach it, and that traffic incurs $0.01/GB each way in cross-AZ transfer fees.

The counter-intuitive insight: running one NAT Gateway (not per AZ) and accepting that some traffic crosses AZs for internet egress often costs less than running three NAT Gateways for high availability, because NAT Gateway baseline cost ($0.045/hour x 3 = $0.135/hour vs $0.045/hour) often exceeds the cross-AZ traffic cost. For most environments, the math favors one NAT Gateway per VPC in the AZ where most of your compute lives, with cross-AZ charges for the small subset of instances in other AZs. The trade-off is availability: with a single NAT Gateway, internet egress for every AZ depends on the health of one AZ, so make that choice deliberately.

For non-production environments, replace NAT Gateways with NAT Instances (a t4g.nano or t4g.small at $3 to $12/month versus a NAT Gateway at about $33/month baseline, which is $0.045/hour before any data processing). NAT Instances have lower throughput limits but non-production workloads rarely hit them.
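To decide how many NAT Gateways to run, compare the hourly charge you save against the cross-AZ transfer you take on. A rough sketch using the rates quoted in this section, assuming a 730-hour month and treating every GB that crosses an AZ boundary (in either direction) as billed at $0.01. The function names are illustrative.

```python
NAT_HOURLY = 0.045        # $/hour per NAT Gateway, from the text
CROSS_AZ_PER_GB = 0.01    # $/GB crossing an AZ boundary, from the text
HOURS_PER_MONTH = 730     # assumption


def nat_fixed_monthly(gateway_count: int) -> float:
    return gateway_count * NAT_HOURLY * HOURS_PER_MONTH


def consolidation_savings(current_gateways: int, cross_az_gb: float) -> float:
    """Net monthly saving from dropping to one gateway.

    `cross_az_gb` is the monthly traffic (both directions added together)
    that would start crossing an AZ boundary after consolidation.
    """
    saved_fixed = nat_fixed_monthly(current_gateways) - nat_fixed_monthly(1)
    return saved_fixed - cross_az_gb * CROSS_AZ_PER_GB


def break_even_cross_az_gb(current_gateways: int) -> float:
    """Cross-AZ traffic at which consolidation stops saving money."""
    saved_fixed = nat_fixed_monthly(current_gateways) - nat_fixed_monthly(1)
    return saved_fixed / CROSS_AZ_PER_GB


if __name__ == "__main__":
    print(f"Three gateways, fixed cost: ${nat_fixed_monthly(3):.2f}/month")
    print(f"Saving with 1,000GB cross-AZ: ${consolidation_savings(3, 1000):.2f}/month")
    print(f"Break-even cross-AZ traffic: {break_even_cross_az_gb(3):,.0f}GB/month")
```

This only prices the traffic; it says nothing about the availability cost of depending on a single AZ for egress, which has to be weighed separately.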

CloudWatch and Observability

14. CloudWatch Has Four Separate Cost Dimensions and Most Teams Watch Only One

Amazon CloudWatch charges for log ingestion, log storage, metrics, and API calls. Teams who "monitor their CloudWatch costs" typically look at log ingestion because that is the most visible spike. The others accumulate quietly.

Log ingestion: $0.50/GB. The main lever is filtering at the source (Lambda, ECS, EC2) before logs reach CloudWatch. Dropping DEBUG and INFO logs from high-volume services can cut ingestion by 50 to 80%.

Log storage: $0.03/GB/month. Logs with no retention policy store indefinitely. A single Lambda function generating 5GB/day with no retention policy accumulates 1,800GB in a year at a cost of $54/month in storage alone. Set a retention policy on every log group: 7 days for high-volume dev/staging, 30 days for production operational logs, 90+ days for audit logs.

Custom metrics: $0.30 per metric per month for the first 10,000 metrics, with lower per-metric rates beyond that. Applications that emit custom metrics per user, per request, or per unique dimension create high-cardinality metric explosions. A single custom metric emitted per user session for an application with 10,000 daily users generates 10,000 unique metric streams at $0.30 each = $3,000/month in a metric that should have been a log query.

CloudWatch Container Insights: An optional add-on for EKS and ECS clusters that generates detailed container-level metrics and logs. It costs $0.0225 per vCPU per month (for enhanced observability) plus increased log ingestion. For a 100-node EKS cluster with 4 vCPUs each, Container Insights costs $9/month in metrics plus significantly increased log volume. Many teams enable it for debugging and leave it running. Disable it on clusters where you do not need per-container CPU and memory metrics.

To find log groups without retention policies:

aws logs describe-log-groups \
  --query 'logGroups[?!retentionInDays].[logGroupName,storedBytes]' \
  --output table

Any log group without a retention policy is a candidate for cost creep.
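The log-storage and custom-metric arithmetic from this section can be sketched in a few lines. It uses the rates quoted above and makes two simplifying assumptions: stored volume equals ingested volume (no compression), and all custom metrics are billed at the first-tier rate. Function names are illustrative.

```python
LOG_STORAGE_PER_GB = 0.03  # $/GB-month, from the text
CUSTOM_METRIC_PRICE = 0.30  # $/metric-month at the first pricing tier, from the text


def stored_log_gb(daily_gb: float, days_elapsed: int, retention_days=None) -> float:
    """Log data held after `days_elapsed`; with no retention, nothing is ever dropped."""
    days_kept = days_elapsed if retention_days is None else min(days_elapsed, retention_days)
    return daily_gb * days_kept


def log_storage_monthly_cost(daily_gb: float, days_elapsed: int, retention_days=None) -> float:
    return stored_log_gb(daily_gb, days_elapsed, retention_days) * LOG_STORAGE_PER_GB


def custom_metric_monthly_cost(unique_metric_streams: int) -> float:
    """Every unique name-plus-dimension combination is billed as its own metric."""
    return unique_metric_streams * CUSTOM_METRIC_PRICE


if __name__ == "__main__":
    print(f"5GB/day, no retention, 360 days: ${log_storage_monthly_cost(5, 360):.2f}/month")
    print(f"5GB/day, 30-day retention:       ${log_storage_monthly_cost(5, 360, 30):.2f}/month")
    print(f"10,000 per-user metric streams:  ${custom_metric_monthly_cost(10_000):,.2f}/month")
```

The first two lines show what a retention policy is worth on a single chatty log group; the third shows why per-user dimensions belong in logs rather than metrics.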

The Implementation Priority Order

Not all of these optimizations are equal in effort or impact. Here is the order we recommend:

| Priority | Optimization | Effort | Expected Monthly Savings |
|---|---|---|---|
| 1 | gp2 to gp3 EBS migration | 30 minutes | $200-2,000+ |
| 2 | Add VPC Endpoints for S3, DynamoDB, ECR | 2-4 hours | $100-500+ |
| 3 | Set CloudWatch log retention policies | 1-2 hours | $100-1,000+ |
| 4 | Enable Lambda Power Tuning on top functions | 4-8 hours | $50-500+ |
| 5 | Migrate Fargate batch workloads to Fargate Spot | 4-8 hours | $500-5,000+ |
| 6 | Review Multi-AZ vs Read Replica configuration | 2-4 hours | $100-500+ |
| 7 | Disable unused S3 Transfer Acceleration | 30 minutes | $50-500+ |
| 8 | Implement EBS snapshot lifecycle policies | 1-2 hours | $100-500+ |
| 9 | Migrate one service to Graviton | 1 day | $200-2,000+ |
| 10 | Audit Compute vs EC2 Instance Savings Plans | 2-4 hours | $200-2,000+ |

Start with gp2 to gp3. It takes 30 minutes, saves money immediately, and has zero risk. Once you have that win, the case for prioritizing the rest becomes easy to make.

For the complete guide to finding and eliminating the most common categories of AWS waste, read our post on AWS bill spikes and weekly cost investigations.

Implementation Priority Matrix: Where to Start and in What Order

The priority table above gives you the sequence. This matrix gives you the full picture: every technique ranked by effort, annual savings, risk, and the implementation window you should slot it into. Use this to build your implementation plan.

Do This Week (< 1 Hour Each, > $1,000/Year Savings)

| # | Technique | Effort | Annual Savings | Risk | Notes |
|---|---|---|---|---|---|
| 1 | gp2 to gp3 EBS migration | 10 min per volume | $2,400-24,000 | Zero | No downtime, objectively better in every metric |
| 7 | Disable unused S3 Transfer Acceleration | 15 min | $600-6,000 | Zero | One API call per bucket |
| 3 | Set CloudWatch log retention policies | 30 min | $1,200-12,000 | Low | Logs are still queryable within retention window |

Total Week 1 savings potential: $4,200-42,000/year with under 1 hour of work.

Do This Month (< 8 Hours Each, > $5,000/Year Savings)

| # | Technique | Effort | Annual Savings | Risk | Notes |
|---|---|---|---|---|---|
| 12 | Add VPC Endpoints (S3, DynamoDB, ECR) | 2-4 hrs | $6,000-60,000 | Low | Free for Gateway Endpoints, cheap for Interface |
| 8 | Implement EBS snapshot lifecycle policies | 1-2 hrs | $6,000-60,000 | Low | Use Data Lifecycle Manager (free) |
| 4 | Enable Lambda Power Tuning on top 10 functions | 4-8 hrs | $6,000-60,000 | Low | Non-destructive testing, no production impact |
| 5 | Migrate Fargate batch to Fargate Spot | 4-8 hrs | $6,000-60,000 | Medium | Requires graceful shutdown handling |
| 6 | Review Multi-AZ vs Read Replica config | 2-4 hrs | $6,000-60,000 | Medium | Only change non-prod immediately |

Total Month 1 savings potential: $30,000-300,000/year with 2-3 days of focused work.

Plan This Quarter (> 1 Week, > $20,000/Year Savings)

| # | Technique | Effort | Annual Savings | Risk | Notes |
|---|---|---|---|---|---|
| 9 | Migrate first service to Graviton | 3-5 days | $24,000-240,000 | Medium | Start with stateless services, test in staging |
| 10 | Audit and purchase Compute Savings Plans | 2-4 hrs analysis + finance approval | $24,000-240,000 | Medium | Right-size FIRST, then commit to 70% of baseline |
| 14 | Audit CloudWatch custom metrics + Container Insights | 1-2 days | $36,000-360,000 | Low | High-cardinality metrics are the hidden killer |

Total Quarter 1 savings potential: $84,000-840,000/year with proper planning.

How to Read This Matrix

Risk levels explained:

- Zero: Cannot cause any production impact. No downside scenario exists.

- Low: Reversible within minutes. Worst case is a brief performance change you can roll back.

- Medium: Requires testing in staging first. Could affect availability if done incorrectly.

The implementation rule: Always do zero-risk items first, regardless of savings size. A $2,400/year savings with zero risk is better than a $24,000/year savings that requires a week of planning. Quick wins build momentum and political capital for the larger changes.

The compounding effect: If you complete all three tiers within 90 days on a $100K/month AWS environment, expect total savings of 25-40%. That is $25,000-40,000/month, or $300,000-480,000/year. The time investment: roughly 10 engineering days spread across a quarter. The ROI per engineering day: $30,000-48,000 in annual savings.

The Optimization You Keep Skipping Is Your Most Expensive Habit

Every month you run gp2 volumes instead of gp3 costs you 25% more on EBS storage for zero reason. Every Fargate batch job running on on-demand instead of Fargate Spot is paying full price for work that could run at up to a 70% discount. Every Lambda function running at default memory settings is probably misconfigured in one direction or the other.

None of these are complex optimizations. They do not require architecture changes. They require knowing the option exists and spending an afternoon implementing it.

Work through the priority table above. Start with gp2 to gp3. The 30 minutes you spend on that single change will save you money every month for the rest of the time you run that infrastructure.

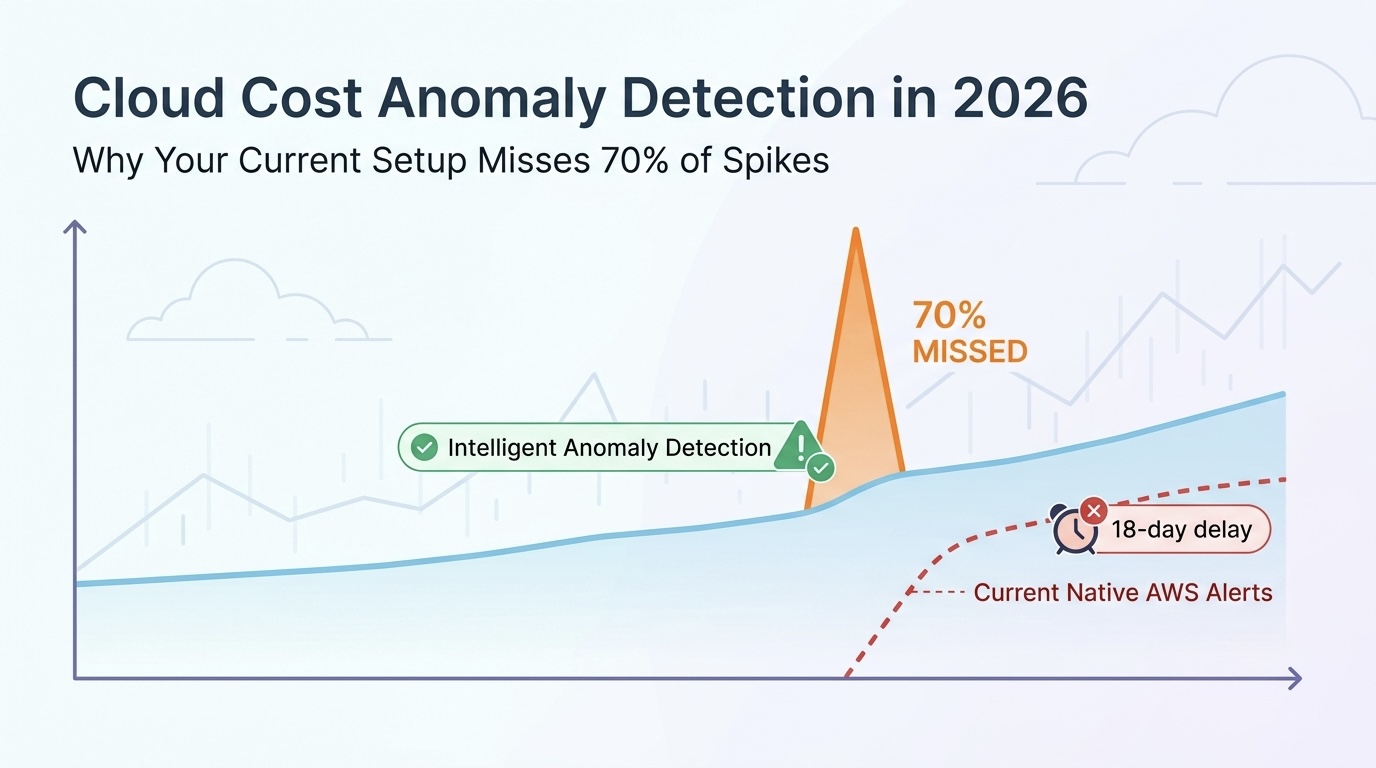

Once you have cleared the service-level optimizations, the bigger picture comes into focus: commitment strategies, right-sizing, and the architectural decisions that permanently reduce your baseline. Our automated cloud cost optimization guide covers how to build the systems that catch new waste automatically before it compounds.

To find out exactly where your AWS environment is overpaying right now, take our free Cloud Waste and Risk Scorecard. And if you want expert help implementing these optimizations at scale, our Cloud Cost Optimization and FinOps team has done this for AWS environments at every stage.

Related reading:

- AWS Bill Spikes: How to Find and Stop Weekly Cost Surprises

- Stop Burning Cloud Dollars: 7 Proven Steps to Detect Waste and Modernize Infrastructure

- 7 Proven Ways Automated Cloud Cost Optimization Transforms Modern Infrastructure

- Cloud Cost Optimization for Modern Infrastructure: Containers, Kubernetes, and Serverless

- Kubernetes Cost Optimization: The 2026 Guide to Cutting Your K8s Bill

- Stop Paying for Ghost Servers: 12 Strategies to Eliminate Cloud Waste
