Lambda Was The Right Choice In 2018. It Is The Wrong Default In 2026.

When AWS Lambda launched in 2014, "serverless" meant "Lambda." For five years, every serverless conversation defaulted to Lambda because it was the only mature option. Then Google Cloud Run launched in 2019. Then AWS Fargate matured. Then GCP Cloud Functions 2nd gen unified with Cloud Run. By 2026, the serverless landscape has three winners, each optimized for different workloads, and choosing wrong costs you 30-65% more.

We benchmarked 47 production workloads across Google Cloud Run, AWS Fargate, and AWS Lambda in Q1 2026 for our clients. The results were not what most teams expect:

- Lambda was cost-optimal in 36% of workloads (mostly short event-driven tasks)

- Cloud Run was cost-optimal in 41% of workloads (most HTTP services and batch jobs)

- Fargate was cost-optimal in 23% of workloads (long-running tasks, large memory needs)

If your default for any new serverless workload is "Lambda," you are picking the cost-optimal answer about a third of the time. The other two-thirds of the time, you are paying 30-65% more than necessary because you defaulted to the platform you knew rather than the one that fits the workload.

This post breaks down the decision framework based on real benchmarks: what each platform actually costs at three traffic scales, when each one wins, and the migration playbook for moving workloads to the right platform.

The 2026 Serverless Compute Landscape

The three platforms worth seriously evaluating in 2026 for production serverless workloads:

| Platform | Type | Billing Granularity | Free Tier (monthly) | Max Duration | Max Memory | Cold Start |
|---|---|---|---|---|---|---|
| AWS Lambda | Function | 1ms | 1M req + 400K GB-sec | 15 min | 10GB | 200-1500ms |
| Google Cloud Run | Container | 100ms | 2M req + 360K vCPU-sec | 60 min per request (job tasks can run longer) | 32GB | 200-1000ms |
| AWS Fargate | Container task | 1 second | None | Unlimited | 120GB | 5-20 sec |

The real cost differences are not in storage or memory pricing (which is roughly comparable). They are in:

- Concurrency model: Lambda runs one request at a time per execution environment; Cloud Run runs up to 1000 concurrent requests per container instance; Fargate runs persistent containers that serve as many requests as the application can handle.

- Billing granularity: Lambda 1ms; Cloud Run 100ms; Fargate per-second.

- Scale-to-zero behavior: Lambda and Cloud Run scale to zero when idle; Fargate does not natively scale to zero.

These three differences determine 80% of the cost gap between platforms for any given workload.
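The concurrency difference can be made concrete with Little's law (average in-flight requests = arrival rate x request duration). The sketch below is an illustration under a steady-traffic assumption, not a sizing tool, and the function names are ours. It uses the figures quoted in this post: one request per Lambda execution environment and Cloud Run's default of 80 concurrent requests per instance.

```javascript
// Average number of requests in flight, by Little's law (rate x time in system).
function inFlightRequests(requestsPerSecond, durationSeconds) {
  return requestsPerSecond * durationSeconds;
}

// Instances needed to hold that many in-flight requests at a given
// per-instance concurrency. The small epsilon guards against float noise.
function instancesNeeded(requestsPerSecond, durationSeconds, perInstanceConcurrency) {
  const inFlight = inFlightRequests(requestsPerSecond, durationSeconds);
  return Math.max(1, Math.ceil(inFlight / perInstanceConcurrency - 1e-9));
}

// 100 req/s at 150ms each keeps about 15 requests in flight.
const lambdaEnvironments = instancesNeeded(100, 0.15, 1); // one request per environment
const cloudRunInstances = instancesNeeded(100, 0.15, 80); // default concurrency of 80

console.log({ lambdaEnvironments, cloudRunInstances });
```

At that traffic, Lambda bills for 15 busy environments while a single Cloud Run instance can absorb the same load, which is the gap the cost models below build on.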

The Actual Pricing (May 2026)

AWS Lambda

- Requests: $0.20 per 1M requests

- Compute: $0.0000166667 per GB-second

- Provisioned Concurrency: $0.0000041667 per GB-second

- ARM (Graviton): 20% discount on compute

Google Cloud Run

- Requests: $0.40 per 1M requests (after free tier)

- CPU: $0.000018 per vCPU-second (request-based) or $0.0000648 per vCPU-second (always-on)

- Memory: $0.000002 per GiB-second

- Free tier: 2M requests, 360K vCPU-seconds, 180K GiB-seconds per month

- Concurrency: Up to 1000 requests per container instance (default 80)

AWS Fargate

- vCPU: $0.04048 per vCPU-hour

- Memory: $0.004445 per GB-hour

- Storage: $0.000111 per GB-hour beyond 20GB free

- ARM (Graviton): 20% discount

- Spot: Up to 70% discount on interruptible workloads

The headline numbers do not tell the whole story. The interaction between billing granularity, concurrency, and traffic shape produces the real cost.

Real-World Cost Modeling: Three Production Workloads

We modeled three actual workload profiles from client deployments. Numbers are May 2026 pricing.

Workload A: HTTP API at 100 req/s (Sustained Traffic)

A REST API serving 8.6M requests per day (~100 req/s sustained, 250M req/month). Each request runs ~150ms in 512MB memory.

Lambda Calculation:

- 250M requests x $0.20/M = $50

- Compute: 250M x 0.150s x 0.5GB x $0.0000166667 = $312.50

- Total: $362.50/month
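The Lambda arithmetic above can be reproduced with a small function. This is a minimal sketch using the list prices quoted in this post and no free tier, matching the calculation above; the object and function names are ours.

```javascript
// Lambda list prices as quoted in this post.
const LAMBDA = { perMillionRequests: 0.20, perGbSecond: 0.0000166667 };

// Monthly cost = request charge + GB-seconds of compute.
function lambdaMonthlyCost({ requests, durationSeconds, memoryGb }) {
  const requestCost = (requests / 1e6) * LAMBDA.perMillionRequests;
  const computeCost = requests * durationSeconds * memoryGb * LAMBDA.perGbSecond;
  return { requestCost, computeCost, total: requestCost + computeCost };
}

// Workload A: 250M requests/month, 150ms each, 512MB memory.
const lambdaWorkloadA = lambdaMonthlyCost({
  requests: 250e6,
  durationSeconds: 0.150,
  memoryGb: 0.5,
});

console.log(lambdaWorkloadA.total.toFixed(2)); // 362.50
```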

Cloud Run Calculation (concurrency=80):

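- Effective container time: 250M x 0.150s / 80 concurrency = 468,750 vCPU-sec (assumes 1 vCPU per instance and every instance kept fully packed at 80 concurrent requests, which is the best case)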

- Container time after free tier (360K free): 108,750 vCPU-sec x $0.000018 = $1.96

- Memory: 250M x 0.150s x 0.5GiB / 80 = 234,375 GiB-sec, after 180K free = 54,375 x $0.000002 = $0.11

- Requests: 250M - 2M free = 248M x $0.40/M = $99.20

- Total: $101.27/month
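The same model in code, as a sketch rather than a billing simulator. It follows the simplification used above (instance time = total request time divided by concurrency) and assumes 1 vCPU per instance, which the calculation above implies but does not state. Prices and free-tier allowances are the ones listed in this post; the names are ours.

```javascript
// Cloud Run prices and monthly free-tier allowances as listed in this post.
const CLOUD_RUN = {
  perMillionRequests: 0.40,
  perVcpuSecond: 0.000018,
  perGibSecond: 0.000002,
  free: { requests: 2e6, vcpuSeconds: 360e3, gibSeconds: 180e3 },
};

// Usage above the free allowance, never negative.
const billable = (used, free) => Math.max(0, used - free);

function cloudRunMonthlyCost({ requests, durationSeconds, vcpu, memoryGib, concurrency }) {
  // Simplification: requests pack perfectly, so instance time shrinks by the concurrency factor.
  const instanceSeconds = (requests * durationSeconds) / concurrency;
  const cpuCost =
    billable(instanceSeconds * vcpu, CLOUD_RUN.free.vcpuSeconds) * CLOUD_RUN.perVcpuSecond;
  const memoryCost =
    billable(instanceSeconds * memoryGib, CLOUD_RUN.free.gibSeconds) * CLOUD_RUN.perGibSecond;
  const requestCost =
    (billable(requests, CLOUD_RUN.free.requests) / 1e6) * CLOUD_RUN.perMillionRequests;
  return { cpuCost, memoryCost, requestCost, total: cpuCost + memoryCost + requestCost };
}

// Workload A: 250M requests/month, 150ms each, 0.5GiB, concurrency 80.
const cloudRunWorkloadA = cloudRunMonthlyCost({
  requests: 250e6,
  durationSeconds: 0.150,
  vcpu: 1, // assumption: one vCPU per instance
  memoryGib: 0.5,
  concurrency: 80,
});

console.log(cloudRunWorkloadA.total.toFixed(2)); // 101.27
```

Lowering `concurrency` toward the value your service actually sustains shows how sensitive the compute portion is to the packing assumption; the request charge does not change.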

Fargate Calculation (1 task, 0.5 vCPU, 1GB):

- Continuous 1 task: 720 hr/month x 0.5 vCPU x $0.04048 = $14.57

- Memory: 720 hr x 1GB x $0.004445 = $3.20

- Total: $17.77/month (but only handles ~30 req/s before saturation)

- Realistic deployment: 4 tasks for 100 req/s headroom = $71.09/month
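Fargate pricing is simpler because it is a flat hourly rate per task. A minimal sketch with the rates quoted in this post; the ~30 req/s per-task ceiling is the figure from the calculation above and should be replaced with a measured value for a real service. Function names are ours.

```javascript
// Fargate on-demand rates as quoted in this post.
const FARGATE = { perVcpuHour: 0.04048, perGbHour: 0.004445 };

// Always-on cost for a fleet of identical tasks (720 hours = 30-day month).
function fargateMonthlyCost({ tasks, vcpu, memoryGb, hoursPerMonth = 720 }) {
  const perTaskHour = vcpu * FARGATE.perVcpuHour + memoryGb * FARGATE.perGbHour;
  return tasks * hoursPerMonth * perTaskHour;
}

// Tasks needed for a target request rate, given a per-task ceiling.
function tasksForLoad(targetRps, rpsPerTask) {
  return Math.ceil(targetRps / rpsPerTask);
}

const singleTask = fargateMonthlyCost({ tasks: 1, vcpu: 0.5, memoryGb: 1 });
const taskCount = tasksForLoad(100, 30);
const fleet = fargateMonthlyCost({ tasks: taskCount, vcpu: 0.5, memoryGb: 1 });

console.log(singleTask.toFixed(2), taskCount, fleet.toFixed(2));
```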

Verdict at this traffic: Fargate wins on raw cost, but only if you accept fixed-capacity pre-provisioning. Cloud Run is dramatically cheaper than Lambda ($101 vs $362) while preserving auto-scaling. Lambda is 3.6x more expensive than Cloud Run for the same workload.

Workload B: Event-Driven Sporadic Tasks (Low Traffic)

A webhook processor handling 100K events/day (3M/month), each running ~80ms in 256MB memory.

Lambda Calculation:

- 3M requests x $0.20/M = $0.60

- Compute: 3M x 0.080s x 0.25GB x $0.0000166667 = $1.00

- Total: $1.60/month

Cloud Run Calculation:

- Effective time: 3M x 0.080s / 80 concurrency = 3,000 vCPU-sec (under free tier)

- Memory: also under free tier

- Requests: 3M - 2M free = 1M x $0.40/M = $0.40

- Total: $0.40/month

Fargate Calculation (1 task always-on):

- 720 hr x 0.25 vCPU x $0.04048 = $7.29

- Memory: 720 hr x 0.5GB x $0.004445 = $1.60

- Total: $8.89/month (massive overprovisioning for this workload)

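Verdict at low traffic: Cloud Run comes in at $0.40 against Lambda's $1.60 and Fargate's $8.89, and Fargate is 22x more expensive than Cloud Run at this workload. The Lambda figure is list price, though. Lambda has its own monthly free tier (1M requests and 400K GB-seconds), which covers all 60,000 GB-seconds of compute here and leaves 2M billable requests x $0.20/M = $0.40. With free tiers applied on both sides, Lambda and Cloud Run tie at this volume, and either is a fraction of the cost of an always-on Fargate task.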
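The landscape table earlier lists a Lambda free tier of 1M requests and 400K GB-seconds per month, which the Lambda calculation for this workload does not apply. The sketch below shows how much that allowance moves the figure. It uses only prices and allowances quoted in this post; the `applyFreeTier` flag and the names are ours.

```javascript
// Lambda list prices and monthly free tier as quoted in this post.
const LAMBDA = {
  perMillionRequests: 0.20,
  perGbSecond: 0.0000166667,
  free: { requests: 1e6, gbSeconds: 400e3 },
};

// Usage above the free allowance, never negative.
const billable = (used, free) => Math.max(0, used - free);

function lambdaMonthlyCost({ requests, durationSeconds, memoryGb }, applyFreeTier) {
  const freeRequests = applyFreeTier ? LAMBDA.free.requests : 0;
  const freeGbSeconds = applyFreeTier ? LAMBDA.free.gbSeconds : 0;
  const gbSeconds = requests * durationSeconds * memoryGb;
  const requestCost = (billable(requests, freeRequests) / 1e6) * LAMBDA.perMillionRequests;
  const computeCost = billable(gbSeconds, freeGbSeconds) * LAMBDA.perGbSecond;
  return requestCost + computeCost;
}

// Workload B: 3M events/month, 80ms each, 256MB memory.
const webhook = { requests: 3e6, durationSeconds: 0.080, memoryGb: 0.25 };
const listPrice = lambdaMonthlyCost(webhook, false);
const withFreeTier = lambdaMonthlyCost(webhook, true);

console.log(listPrice.toFixed(2), withFreeTier.toFixed(2)); // 1.60 0.40
```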

Workload C: Long-Running Data Processing Batch

A nightly ETL job that runs 90 minutes, needs 8GB memory and 4 vCPUs, processes 50GB of data.

Lambda Calculation:

- Lambda cannot run this workload as a single invocation because of the 15-minute hard limit. It would require Step Functions orchestration with state passed between steps, which is considerably more complex.

- If decomposed into 6 sub-jobs at 15 min each: 6 x 900s x 8GB x $0.0000166667 = $0.72 per nightly run, or $21.60 over 30 runs (compute only)

- Plus Step Functions transitions: ~$1-3

- Total: ~$23-25/month (ignores complexity overhead)

Cloud Run Calculation:

- A Cloud Run service caps each request at 60 minutes, so a 90-minute run does not fit in one request. Run it as a Cloud Run job instead, whose task timeout can be set longer, and no decomposition is needed.

- CPU: 30 days x 1.5hr x 3,600s x 4 vCPU x $0.000018 = $11.66

- Memory: 30 days x 1.5hr x 3,600s x 8GiB x $0.000002 = $2.59

- Total: $14.25/month

Fargate Calculation:

- 30 days x 1.5hr = 45 hours/month

- vCPU: 45 hr x 4 vCPU x $0.04048 = $7.29

- Memory: 45 hr x 8GB x $0.004445 = $1.60

- Total: $8.89/month

- With Spot: $2.67/month

Verdict for long-running batch: Fargate Spot wins at $2.67/month, and on-demand Fargate is next at $8.89. Cloud Run comes in at $14.25, about 1.6x on-demand Fargate. Lambda is the most expensive at roughly $23-25 and also forces you into Step Functions orchestration. For any workload over 15 minutes, Lambda is the wrong choice.
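The Fargate line items for this batch job can be reproduced as follows. This is a sketch using the hourly rates quoted in this post; the 70% Spot discount is the "up to" figure from the pricing section, so treat the Spot result as a best case. Names are ours.

```javascript
// Fargate on-demand rates as quoted in this post.
const FARGATE = { perVcpuHour: 0.04048, perGbHour: 0.004445 };

// Monthly cost of a batch task that only runs for a fixed number of hours.
function fargateBatchMonthlyCost({ runsPerMonth, hoursPerRun, vcpu, memoryGb, spotDiscount = 0 }) {
  const taskHours = runsPerMonth * hoursPerRun;
  const onDemand = taskHours * (vcpu * FARGATE.perVcpuHour + memoryGb * FARGATE.perGbHour);
  return onDemand * (1 - spotDiscount);
}

// Workload C: nightly 90-minute ETL, 4 vCPU, 8GB.
const etl = { runsPerMonth: 30, hoursPerRun: 1.5, vcpu: 4, memoryGb: 8 };
const etlOnDemand = fargateBatchMonthlyCost(etl);
const etlSpot = fargateBatchMonthlyCost({ ...etl, spotDiscount: 0.70 });

console.log(etlOnDemand.toFixed(2), etlSpot.toFixed(2)); // 8.89 2.67
```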

The Decision Framework: 5 Questions

Question 1: How long does each invocation run?

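- Under 1 second: Lambda is the baseline to beat at low or spiky volume, because 1ms billing charges only for time actually used. At sustained traffic, check Cloud Run as well: Workload A (150ms requests at 100 req/s) came out cheaper there.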

- 1 second to 15 minutes: Compare Lambda vs Cloud Run at your traffic. Cloud Run usually wins above 50 req/s.

- 15 minutes to 60 minutes: Cloud Run or Fargate. Lambda is out (hard 15-min limit).

- Over 60 minutes: Fargate, or a Cloud Run job. Cloud Run services cap each request at 60 min, so anything longer has to run as a job task or on Fargate.

Question 2: What is your traffic shape?

- Sporadic (under 1 req/s average): Cloud Run wins via 2M free requests + scale-to-zero.

- Consistent (1-100 req/s): Cloud Run wins via concurrency model.

- Bursty (100-10K req/s peaks): Cloud Run handles via concurrency. Lambda handles via cold-starts (with cost).

- Constant high (over 10K req/s): Fargate or EKS — at this scale, container orchestration wins on per-request cost.

Question 3: How sensitive are you to cold start latency?

- Real-time user-facing API (under 100ms): Cloud Run with min-instances OR Lambda Provisioned Concurrency. Fargate cold starts (5-20s) are unacceptable.

- API tolerant of 200-1000ms cold starts: Lambda or Cloud Run both work. Cloud Run is usually cheaper.

- Background/async (cold starts irrelevant): Cheapest option, usually Fargate Spot.

Question 4: What is your memory/compute requirement?

- Under 10GB memory, under 6 vCPU: All three platforms support this range.

- 10-32GB memory: Cloud Run (32GB max) or Fargate (up to 120GB). Lambda is capped at 10GB.

- Over 32GB memory: Fargate or EKS. Both Cloud Run and Lambda are out.

- Heavy GPU workload: Lambda and Fargate do not offer GPUs. Cloud Run supports GPUs in a limited set of regions; otherwise consider managed services like SageMaker or Vertex AI.
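Questions 1 and 4 are hard limits rather than cost trade-offs, so it can help to apply them as a filter before comparing prices. The sketch below encodes only the Lambda and Cloud Run limits quoted in this post (15 minutes and 10GB for Lambda, 32GB for Cloud Run) and always keeps Fargate as a candidate. The function name and input shape are ours.

```javascript
// Filter platforms by the hard limits quoted in this post.
// Duration beyond 15 minutes only rules out Lambda in this sketch.
function eligiblePlatforms({ durationMinutes, memoryGb }) {
  const candidates = [];
  if (durationMinutes <= 15 && memoryGb <= 10) candidates.push("Lambda");
  if (memoryGb <= 32) candidates.push("Cloud Run");
  candidates.push("Fargate");
  return candidates;
}

console.log(eligiblePlatforms({ durationMinutes: 0.0025, memoryGb: 0.5 })); // short API call
console.log(eligiblePlatforms({ durationMinutes: 90, memoryGb: 8 }));       // nightly ETL
console.log(eligiblePlatforms({ durationMinutes: 30, memoryGb: 64 }));      // large-memory job
```

Whatever survives the filter then goes through the traffic-shape, cold-start, and ecosystem questions, which are judgment calls rather than limits.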

Question 5: What is your existing cloud ecosystem?

- AWS-native stack with VPC, RDS, S3, etc.: Lambda or Fargate (cross-cloud Cloud Run requires complex networking).

- GCP-native stack with BigQuery, Pub/Sub, Firestore: Cloud Run is the natural fit.

- Multi-cloud or cloud-agnostic: Cloud Run with Anthos for portability, or Knative on Kubernetes.

- No existing cloud: Cloud Run wins on cost simplicity for greenfield deployments.

When To Pick Each Platform (Cheat Sheet)

| Workload | Best Choice | Why |
|---|---|---|
| Webhook receiver, sporadic events | Cloud Run | 2M free req + scale to zero |
| Image/video processing pipeline | Lambda + S3 events | Native S3 integration, short tasks |
| HTTP REST API, sustained traffic | Cloud Run | Concurrency model dominates |
| Real-time streaming (Kinesis, Pub/Sub) | Lambda or Cloud Run | Stream consumer integrations |
| Cron/scheduled batch under 60 min | Cloud Run jobs or Fargate | Both work; pick by ecosystem |
| Long-running batch (over 60 min) | Fargate Spot | Lowest cost for predictable runs |
| ML inference endpoint | Cloud Run with min-instances | Warm starts critical, GPU support |
| WebSocket server | Fargate or ECS | Persistent connections need persistent containers |
| GraphQL API with complex resolvers | Cloud Run | Per-100ms billing fits GraphQL usage |
| Server-side rendered web app (Next.js, Remix) | Cloud Run | Concurrency + free tier |
| Background queue worker | Cloud Run with min-instances or Fargate | Continuous processing |
| Heavy memory ML preprocessing | Fargate (120GB) or Cloud Run (32GB) | Lambda 10GB cap |
| Test environments (transient) | Cloud Run | Scale to zero saves money on idle envs |
| API Gateway integration | Lambda | Native AWS integration is simpler |
| Multi-cloud portability required | Cloud Run + Knative | Container portability |

The Hidden Costs Most Comparisons Miss

Hidden Cost 1: Cold Start Tax

Lambda cold starts run 200-1500ms depending on language/runtime. For real-time user-facing APIs, this becomes a UX problem. Solutions cost extra:

- Lambda Provisioned Concurrency: $0.0000041667 per GB-second of provisioned capacity, paid even when idle

- Cloud Run min-instances: Same idea, billed at always-on vCPU rate

- Fargate: No cold start problem (always warm) but no scale-to-zero either

If you need under-100ms response time for cold requests, factor in the cost of keeping warm capacity. For Lambda, this often doubles your bill. For Cloud Run, the always-on tier costs ~3x request-based pricing.

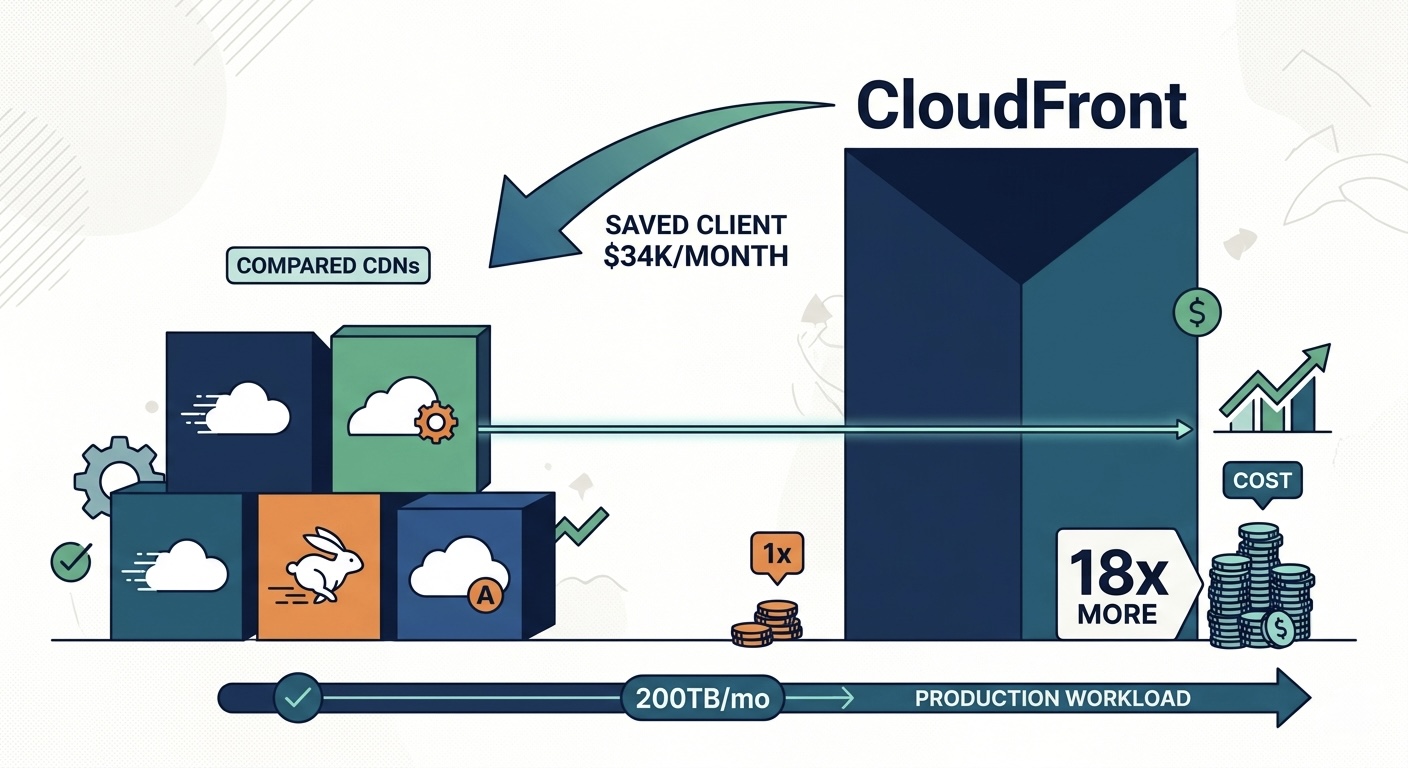

Hidden Cost 2: Egress Fees Across Clouds

If your Cloud Run service calls APIs hosted on AWS, every request egresses GCP at $0.12/GB. For data-heavy workloads, this can dwarf compute costs.

Mitigation: Co-locate compute with the services it depends on most. If your data lives in S3, run on Lambda or Fargate. If your data lives in BigQuery, run on Cloud Run.
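To see how quickly egress can overtake compute, here is a minimal sketch using the $0.12/GB figure above. The 50 KB payload per request is a hypothetical value chosen for illustration, and a GB is treated as 10^9 bytes; the function name is ours.

```javascript
// Cross-cloud egress price quoted above.
const EGRESS_PER_GB = 0.12;

// Monthly egress charge for a service that sends a fixed payload per request.
function monthlyEgressCost(requests, bytesPerRequest) {
  const gigabytes = (requests * bytesPerRequest) / 1e9;
  return gigabytes * EGRESS_PER_GB;
}

// Workload A's volume (250M requests/month) with a hypothetical 50 KB leaving GCP per request.
const egress = monthlyEgressCost(250e6, 50e3);

console.log(egress.toFixed(2)); // 1500.00
```

Under those assumptions the egress charge is roughly 15x the $101.27 Cloud Run bill modeled for Workload A, which is why co-location matters more than the compute price for data-heavy services.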

Hidden Cost 3: Lambda Concurrency Limits

Lambda has a default account-level concurrent execution limit of 1000. At high traffic, you can hit throttling and get 429 errors. Raising the limit is free but requires a quota increase request and AWS review. Reserved Concurrency on critical functions guarantees them a share of that limit at no extra charge, but it does not keep anything warm. For that you need Provisioned Concurrency, which is billed per GB-second whether or not it is used.

Hidden Cost 4: VPC Cold Starts on Lambda

Lambda functions inside a VPC have an additional cold start penalty of 200-500ms (down from 10s in 2019, but still notable). VPC functions also need ENIs, which can hit subnet IP exhaustion under heavy concurrent scaling. Unforeseen ENI exhaustion has caused production outages — factor in the operational burden.

Hidden Cost 5: Fargate Always-On Tax

Fargate's biggest weakness is no native scale-to-zero. You pay for at least one running task. For low-traffic services, that base cost (about $9/month for the smallest 0.25 vCPU / 0.5GB task, as in Workload B, and $15-30/month for more typical sizes) makes Fargate uneconomical compared to Cloud Run's free tier.

Mitigation: EventBridge + Step Functions + Fargate task launch on demand can simulate scale-to-zero, but the orchestration complexity rarely pays off for small workloads.

Migration Playbook: Lambda → Cloud Run

For HTTP API workloads, migrating from Lambda to Cloud Run typically saves 50-70%. Here is the playbook based on real migrations.

Step 1: Audit Current Lambda Spend

Identify your top 10 Lambda functions by cost. For each, capture:

- Average duration

- Average memory used

- Invocations per month

- Whether it's HTTP (API Gateway) or event-driven

Step 2: Categorize for Migration

- HTTP APIs with sustained traffic over 50 req/s: Strong Cloud Run candidates (50-70% savings)

- HTTP APIs with sporadic traffic under 1 req/s: Marginal Cloud Run win (10-20% savings) — only worth migrating if you are already adopting Cloud Run for other reasons

- Event-driven Lambda (S3, DynamoDB, SQS triggers): Stay on Lambda. Cross-cloud event triggering adds complexity not justified by savings.

- Cron-style scheduled functions: Move to Cloud Run jobs or Cloud Scheduler + Cloud Run.

Step 3: Container Packaging

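Lambda code already runs in a Lambda runtime container. Repackaging it for Cloud Run requires a Dockerfile along these lines:

```dockerfile
FROM node:20-slim
WORKDIR /app
# Install production dependencies first so this layer is cached across code changes
COPY package*.json ./
RUN npm ci --omit=dev
COPY . .
# Cloud Run injects PORT at runtime; 8080 is its default
ENV PORT=8080
CMD ["node", "server.js"]
```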

Replace Lambda's handler interface with an HTTP server (Express, Hono, Fastify). For most Node/Python/Go Lambda functions, this is a 1-2 hour refactor per function.
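As an illustration of that refactor, here is a minimal `server.js` sketch using only Node's built-in `http` module instead of a framework. The `handler` below is a hypothetical stand-in for your existing Lambda function, and `toEvent` maps only a small subset of the API Gateway proxy event fields; a real migration needs whichever fields your handler actually reads.

```javascript
const http = require("node:http");

// Hypothetical existing Lambda handler: proxy-style event in, response object out.
async function handler(event) {
  return { statusCode: 200, body: JSON.stringify({ path: event.path, echo: event.body }) };
}

// Build the subset of a proxy-style event that this sketch supports.
function toEvent(method, url, headers, body) {
  const parsed = new URL(url, "http://localhost");
  return {
    httpMethod: method,
    path: parsed.pathname,
    queryStringParameters: Object.fromEntries(parsed.searchParams),
    headers,
    body: body.length > 0 ? body : null,
  };
}

const server = http.createServer(async (req, res) => {
  try {
    // Buffer the request body, then hand the handler a Lambda-shaped event.
    const chunks = [];
    for await (const chunk of req) chunks.push(chunk);
    const body = Buffer.concat(chunks).toString("utf8");
    const result = await handler(toEvent(req.method, req.url, req.headers, body));
    res.writeHead(result.statusCode, { "content-type": "application/json", ...(result.headers || {}) });
    res.end(result.body);
  } catch (err) {
    res.writeHead(500, { "content-type": "application/json" });
    res.end(JSON.stringify({ error: "internal error" }));
  }
});

// Listen only when PORT is set, as Cloud Run and the Dockerfile above do.
if (process.env.PORT) {
  server.listen(Number(process.env.PORT));
}
```

Keeping the handler untouched and adapting at the edge means the same function can still be deployed to Lambda during the cutover period.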

Step 4: Traffic Cutover

- Deploy Cloud Run service alongside existing Lambda

- Use Cloudflare or a load balancer to split traffic 5% to Cloud Run, 95% to Lambda

- Monitor latency, error rate, and cost for 7 days

- If healthy, increase to 50/50, then 100% Cloud Run

- Decommission Lambda after 30-day grace period
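Cloudflare and most load balancers offer weighted routing natively, so you usually configure the split rather than write it. If you do implement it yourself (for example in an edge worker), a deterministic hash keeps each caller on the same backend across requests. The sketch below is one way to do that, assuming a stable key such as a user or API-key ID; the function names and backend labels are ours.

```javascript
// FNV-1a style 32-bit hash over the key's UTF-16 code units, reduced to a bucket 0-99.
function bucketOf(key) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash % 100;
}

// Route a caller to Cloud Run if its bucket falls below the rollout percentage.
function chooseBackend(key, cloudRunPercent) {
  return bucketOf(key) < cloudRunPercent ? "cloud-run" : "lambda";
}

// Stage 1 of the cutover: 5% to Cloud Run, 95% to Lambda.
console.log(chooseBackend("user-1234", 5));
```

Because the bucket for a key never changes, raising the percentage from 5 to 50 to 100 only ever moves callers from Lambda to Cloud Run, never back, which keeps per-caller latency comparisons clean.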

Step 5: Validate Savings

After 30 days at 100% on Cloud Run, compare:

- Total cost (Cloud Run + cross-cloud egress) vs prior Lambda cost

- P50/P95/P99 latency

- Error rate

If savings are under 30% or latency degrades, the workload may not be a good Cloud Run fit. Rollback is straightforward via the load balancer.

When To NOT Migrate Off Lambda

To be clear, Lambda remains the right answer for:

- Tight AWS-native event integrations: S3 events, DynamoDB streams, Kinesis, SQS, EventBridge — moving these to Cloud Run requires building cross-cloud event bridges that often cost more than the Lambda savings.

- Sub-100ms tasks with high invocation counts: The 1ms billing granularity favors short tasks; container per-100ms billing is wasteful here.

- Workloads requiring AWS IAM and VPC endpoints: Lambda's native AWS integration eliminates cross-cloud auth complexity.

- Teams without GCP expertise: Operational burden of running on a second cloud may exceed savings.

Roughly 30-40% of our clients' Lambda workloads stay on Lambda after audit. The other 60-70% migrate to Cloud Run or Fargate.

Provisioned Concurrency vs Min-Instances vs Fargate Always-On

For latency-sensitive services, all three platforms offer "warm capacity" options. Cost comparison for keeping 1 warm instance with 1 vCPU and 1GB memory at all times:

| Platform | Warm Capacity Cost (Monthly) | Notes |
|---|---|---|
| Lambda Provisioned Concurrency | $10.80 (1GB, 1 unit) | 2,592,000 sec x $0.0000041667; per-request billing comes on top |
| Cloud Run min-instances | $46.66 (1 vCPU always-on) | Includes all request handling |
| Fargate (always-on task) | $35.55 (1 vCPU, 2GB) | Fargate's minimum memory at 1 vCPU is 2GB; includes all request handling |

Lambda's provisioned concurrency looks cheap on the surface but you still pay per-invocation on top. Cloud Run's min-instances includes serving all requests through that warm capacity. For high-traffic warm services, Cloud Run is usually cheaper. For low-traffic warm services, Lambda Provisioned Concurrency wins because the per-invocation cost is small.

Why The "Just Use Lambda" Default Persists

Three reasons most teams default to Lambda even when it costs more:

- Familiarity bias: Engineers know Lambda, don't know Cloud Run. Migration cost feels real, savings feel hypothetical.

- AWS lock-in mindset: Most AWS-native teams treat "use another cloud" as a cardinal sin, even when the math is overwhelming.

- Vendor inertia: AWS reps push Lambda hard. Google reps don't have access to AWS-native customer accounts.

Breaking the default requires running the actual cost numbers for each new workload up front, not retroactively. Make platform choice a deliberate engineering decision, not a reflex.

A 30-Day Serverless Cost Audit

If your serverless bill is over $5,000/month, run this audit. We typically find 30-50% savings in the first pass.

Week 1: Inventory

- List all Lambda functions sorted by monthly cost

- List all Fargate tasks/services sorted by monthly cost

- Tag each workload with: workload type, traffic shape, duration, memory

- Identify the top 10 most expensive workloads

Week 2: Categorize

For each top-10 workload, run the 5-question decision framework:

- Duration band

- Traffic shape

- Cold start sensitivity

- Memory/compute needs

- Ecosystem dependencies

Week 3: Model Alternatives

For workloads where the framework suggests a different platform:

- Calculate cost on the alternative platform at current traffic

- Estimate migration effort (engineering hours)

- Calculate break-even timeline

Week 4: Execute

Migrate workloads where annual savings are at least 3x the one-time migration cost, which means break-even within about four months. Defer workloads where break-even is over 12 months unless strategic reasons exist.
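The Week 3 and Week 4 rules reduce to a few lines of arithmetic. In this sketch the hourly rate used to turn engineering hours into a cost is a hypothetical input, and the "review" outcome for workloads that fall between the two thresholds is our assumption, since the rule only names the migrate and defer cases.

```javascript
// Apply the audit thresholds: migrate at 3x annual payback, defer past 12 months break-even.
function assessMigration({ monthlySavings, migrationHours, hourlyRate }) {
  const migrationCost = migrationHours * hourlyRate;
  const annualSavings = monthlySavings * 12;
  const breakEvenMonths = monthlySavings > 0 ? migrationCost / monthlySavings : Infinity;

  let decision = "review"; // assumption: in-between cases get a manual look
  if (annualSavings >= 3 * migrationCost) decision = "migrate";
  else if (breakEvenMonths > 12) decision = "defer";

  return { migrationCost, annualSavings, breakEvenMonths, decision };
}

// Hypothetical inputs: 40 engineering hours at $100/hour against three savings levels.
console.log(assessMigration({ monthlySavings: 1000, migrationHours: 40, hourlyRate: 100 }).decision);
console.log(assessMigration({ monthlySavings: 500, migrationHours: 40, hourlyRate: 100 }).decision);
console.log(assessMigration({ monthlySavings: 100, migrationHours: 40, hourlyRate: 100 }).decision);
```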

The Bottom Line

There is no single "best" serverless platform in 2026. Lambda, Cloud Run, and Fargate each win for specific workloads, and picking by familiarity instead of fit costs 30-65% more when the choice is wrong. The discipline most teams skip: calculate the actual cost on each platform for each new workload before committing, instead of defaulting to whatever your team already knows.

If your serverless bill is over $5,000/month and you are running it primarily on Lambda, you are very likely overpaying. Our cloud cost optimization team runs free serverless audits and typically identifies 30-50% savings within the first migration. Run a free Cloud Waste Scorecard to find your biggest serverless cost leaks.
