The Hidden Cost of Observability in Modern Infrastructure

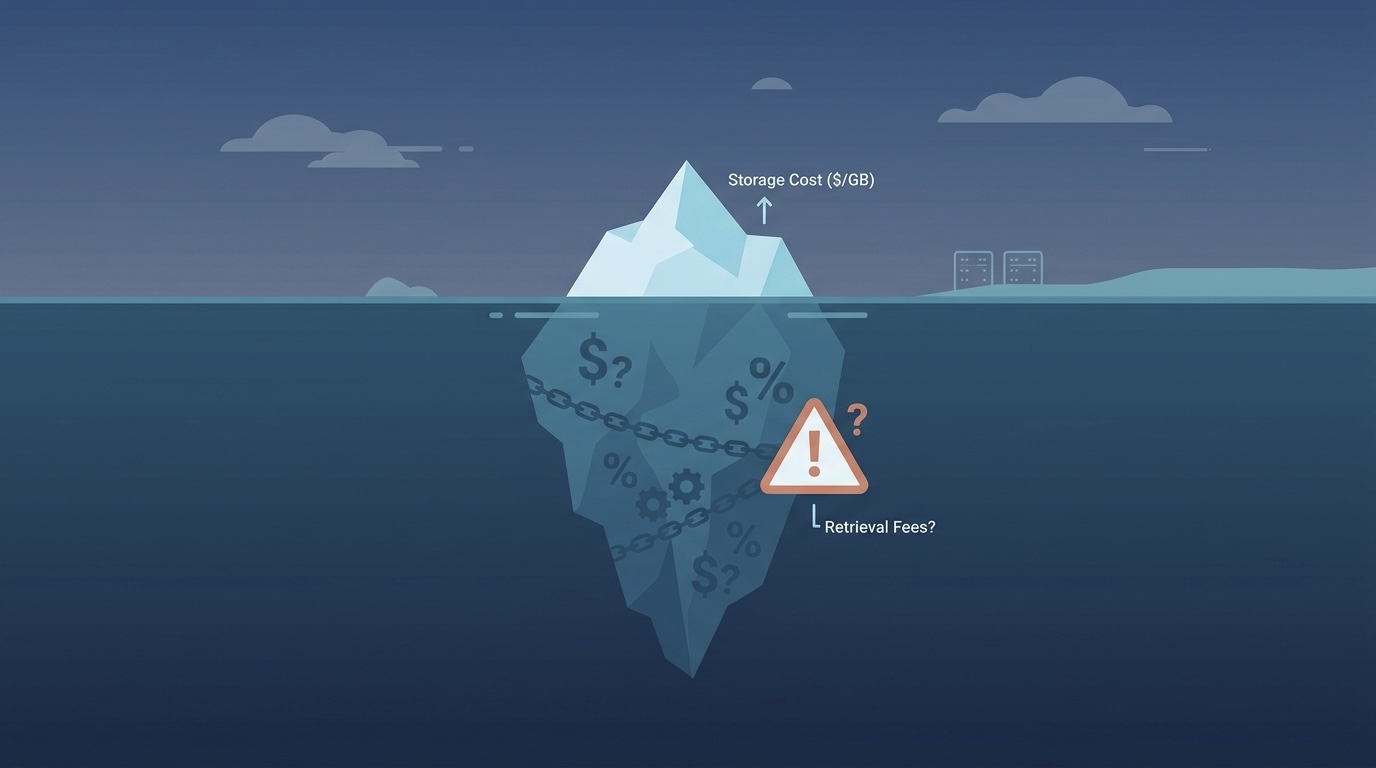

Cloud observability is essential for reliability, but in 2026 it has also become one of the most underestimated cost drivers for SaaS and AI-driven startups. Teams often assume their highest cloud costs come from compute or storage, but a closer look at invoices from AWS CloudWatch, Google Cloud Operations Suite, and Azure Monitor tells a different story. Telemetry volume (logs, metrics, and traces) is quietly consuming budgets and delaying infrastructure modernization initiatives.

Cloud cost optimization is no longer just about trimming EC2 instances or deleting orphaned disks. Observability now requires a practical FinOps lens, because every additional log line, high-cardinality metric, or unbounded trace retention silently multiplies spend across ingestion, storage, and retrieval layers.

In this guide, we will break down:

- The real economics of logs, metrics, and traces

- Five common cloud waste patterns in observability

- Actionable playbooks to reduce cloud costs without sacrificing reliability

- A FinOps framework for telemetry-driven infrastructure modernization

Along the way, you will get checklists, tables, and concrete examples to bring cloud financial management into your engineering culture.

Why Observability Costs Are Exploding

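Traditional monitoring practices were designed for monoliths, not distributed SaaS or AI microservices. Every new microservice adds its own telemetry, and a single request that crosses several services emits logs, metrics, and spans at each hop, so telemetry volume grows much faster than the service count: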

- Logs: Event-rich, often unbounded, and increasingly verbose due to modern frameworks

- Metrics: High cardinality from labels like user IDs, session IDs, or dynamic tags

- Traces: Deeply nested spans across services with long retention periods

With AI pipelines and serverless workloads, observability can account for 25–40% of total cloud spend.

The Cloud Economics of Telemetry

| Telemetry Type | Typical Billing Model | Common Cost Pitfalls |
|---|---|---|
| Logs | Ingestion + storage per GB | Verbose debug logs, duplicate pipelines |
| Metrics | Cardinality-based pricing | Dynamic labels, unbounded dimensions |
| Traces | Sampled ingestion + storage | Retaining all spans, high-frequency sampling |

Cloud providers price observability largely on volume and cardinality, so both translate directly into cost. For example:

- AWS CloudWatch now charges for log ingestion, storage, and queries separately

- Google Cloud Operations bills metrics by ingested volume (bytes or samples, which grow with cardinality) and traces by spans ingested

- Azure Monitor applies tiered pricing for data ingestion and retention

Ignoring these details during an application modernization or hybrid cloud modernization initiative can inflate costs faster than compute growth.
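To make the separate billing components concrete, here is a minimal sketch that splits a month's log bill into ingestion, storage, and query charges. The `LogPricing` class, the `monthly_log_cost` function, and every price and volume below are hypothetical placeholders, not any provider's actual rates; substitute figures from your own price list and usage reports.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class LogPricing:
    """Per-unit prices in USD. Placeholder values only; use your provider's price list."""
    ingest_per_gb: float
    storage_per_gb_month: float
    query_per_gb_scanned: float


def monthly_log_cost(ingested_gb: float, stored_gb: float, scanned_gb: float,
                     pricing: LogPricing) -> dict[str, float]:
    """Split one month's log bill into the three components billed separately."""
    parts = {
        "ingestion": ingested_gb * pricing.ingest_per_gb,
        "storage": stored_gb * pricing.storage_per_gb_month,
        "queries": scanned_gb * pricing.query_per_gb_scanned,
    }
    parts["total"] = sum(parts.values())
    return parts


# Hypothetical workload and prices, for illustration only.
example = monthly_log_cost(
    ingested_gb=2_000, stored_gb=10_000, scanned_gb=50_000,
    pricing=LogPricing(ingest_per_gb=0.50, storage_per_gb_month=0.03,
                       query_per_gb_scanned=0.005),
)
for component, usd in example.items():
    print(f"{component:>9}: ${usd:,.2f}")
```

Storage is charged for every month data is kept, so longer retention grows that line even when ingestion stays flat.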

The Five Most Common Observability Waste Patterns

1. Duplicate or Redundant Logging Pipelines

Teams often ship the same logs to multiple destinations for analysis. This doubles ingestion costs without improving insight.

FinOps Tip: Consolidate pipelines and use routing rules to avoid redundant copies.
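The routing advice above can be sketched as an ordered rule table where the first matching rule picks exactly one destination, so no record is copied to several backends. The destination names (`primary-log-store`, `compliance-archive`, `low-cost-archive`) and the rules themselves are hypothetical; real routing would live in your log shipper or collector configuration.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical routing table. Rules are checked in order and the first match wins,
# so every record is shipped to exactly one destination instead of being copied.
Rule = Tuple[Callable[[dict], bool], str]

ROUTES: List[Rule] = [
    (lambda r: r.get("level") in ("ERROR", "CRITICAL"), "primary-log-store"),
    (lambda r: r.get("source") == "audit", "compliance-archive"),
]
DEFAULT_DESTINATION = "low-cost-archive"


def route(record: dict, routes: List[Rule] = ROUTES) -> str:
    """Return the single destination for a log record."""
    for matches, destination in routes:
        if matches(record):
            return destination
    return DEFAULT_DESTINATION


def ship(records: List[dict]) -> Dict[str, int]:
    """Count records per destination; the counts always sum to len(records)."""
    counts: Dict[str, int] = {}
    for record in records:
        destination = route(record)
        counts[destination] = counts.get(destination, 0) + 1
    return counts
```

Because each record resolves to one destination, ingestion is paid once per record rather than once per pipeline.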

2. High-Cardinality Metrics

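Metrics with dimensions like userId or transactionId explode cardinality: every unique label value creates a separate time series, and providers bill by the number of series or the samples they ingest, so costs rise sharply on GCP, Azure, and AWS alike.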

Action Step: Aggregate or batch metrics at the service level instead of per user.
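A quick way to see why per-user labels hurt is to count the distinct time series a metric produces with and without them. This is a minimal sketch; `series_count` is a hypothetical helper and the three-service, 1,000-user dataset is invented for illustration.

```python
from typing import Dict, Iterable, List


def series_count(points: Iterable[Dict[str, str]], keep_labels: List[str]) -> int:
    """Distinct time series that remain if only `keep_labels` are used as dimensions."""
    return len({tuple(p.get(label) for label in keep_labels) for p in points})


# Hypothetical data: 3 services, each called by 1,000 distinct users.
points = [
    {"service": service, "user_id": f"user-{n}"}
    for service in ("api", "auth", "billing")
    for n in range(1_000)
]

print(series_count(points, ["service", "user_id"]))  # → 3000
print(series_count(points, ["service"]))             # → 3
```

Dropping the `user_id` dimension turns a series count that scales with users into one that scales with services.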

3. Overzealous Trace Sampling

Retaining 100% of traces is rarely necessary. AI inference or high-traffic APIs can generate millions of spans per hour.

Playbook:

- Implement dynamic sampling for high-frequency endpoints

- Retain only 10–20% of traces for production workloads
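One way to implement dynamic sampling is to derive the rate from current traffic and make a deterministic keep/drop decision from the trace ID. The sketch below is head-based sampling under stated assumptions: `sample_rate` and `keep_trace` are hypothetical names, the target throughput is something you would choose, and always keeping error traces is an added assumption rather than part of the playbook above.

```python
import hashlib


def sample_rate(requests_per_second: float, target_traces_per_second: float) -> float:
    """Dynamic rate: keep about `target_traces_per_second` traces whatever the traffic."""
    if requests_per_second <= target_traces_per_second:
        return 1.0
    return target_traces_per_second / requests_per_second


def keep_trace(trace_id: str, rate: float, is_error: bool = False) -> bool:
    """Deterministic head sampling: the same trace ID always gets the same decision."""
    if is_error:
        return True  # assumption: failed requests are always kept for debugging
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # Use the top 53 bits so the division is exact and the bucket stays in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return bucket < rate
```

Because the decision depends only on the trace ID, every service that sees a trace reaches the same verdict without coordination. Tail-based samplers, such as the tail sampling processor in the OpenTelemetry Collector, instead decide after the whole trace has been seen, which lets them keep slow or failed traces more precisely.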

4. Infinite Log Retention

Storing logs indefinitely multiplies storage costs and slows queries.

Checklist:

- Retain critical logs for 30–90 days

- Archive to S3 or GCS for compliance

- Delete noisy debug logs automatically
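The checklist above amounts to a small lifecycle policy. This minimal sketch assumes three hypothetical log categories; the 30- and 90-day windows follow the checklist, while the 7-day debug window and the `POLICY` and `lifecycle_action` names are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy per log category. The 30 and 90 day figures follow the
# checklist above; the 7-day debug window is an assumption.
POLICY = {
    "critical": {"hot_days": 90, "archive": True},
    "standard": {"hot_days": 30, "archive": True},
    "debug": {"hot_days": 7, "archive": False},
}


def lifecycle_action(category: str, created_at: datetime, now: datetime) -> str:
    """Return 'keep', 'archive' (e.g. to S3 or GCS), or 'delete' for a log batch."""
    rule = POLICY[category]
    if now - created_at <= timedelta(days=rule["hot_days"]):
        return "keep"
    return "archive" if rule["archive"] else "delete"


now = datetime(2026, 1, 31, tzinfo=timezone.utc)
print(lifecycle_action("debug", now - timedelta(days=10), now))
print(lifecycle_action("standard", now - timedelta(days=45), now))
```

In practice the same rules are usually expressed as native retention settings and object-storage lifecycle rules rather than application code.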

5. Lack of Cost-Aware Dashboards

Many teams visualize all telemetry without filtering for cost or value, and each chart refresh can trigger billable queries.

Framework: Use metadata tagging and cost attribution dashboards within a cloud financial management strategy.
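Cost attribution by tag can be sketched as a group-by over billing line items. This assumes a hypothetical export format where each row has a `tags` dictionary and a `cost_usd` field; real billing exports differ by provider. Untagged spend gets its own bucket so it stays visible on the dashboard.

```python
from collections import defaultdict
from typing import Dict, Iterable


def cost_by_tag(line_items: Iterable[dict], tag: str = "service") -> Dict[str, float]:
    """Sum observability cost per tag value; untagged spend gets its own bucket."""
    totals: Dict[str, float] = defaultdict(float)
    for item in line_items:
        owner = item.get("tags", {}).get(tag, "untagged")
        totals[owner] += item["cost_usd"]
    # Largest owners first, the order a cost dashboard would usually show.
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical billing-export rows.
items = [
    {"resource": "log-group/checkout", "tags": {"service": "checkout"}, "cost_usd": 120.0},
    {"resource": "traces/checkout", "tags": {"service": "checkout"}, "cost_usd": 80.0},
    {"resource": "metrics/search", "tags": {"service": "search"}, "cost_usd": 40.0},
    {"resource": "log-group/legacy-batch", "tags": {}, "cost_usd": 15.0},
]
print(cost_by_tag(items))  # → {'checkout': 200.0, 'search': 40.0, 'untagged': 15.0}
```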

A FinOps Framework for Observability Cost Control

Successful infrastructure modernization requires aligning telemetry practices with FinOps principles. Here is a repeatable framework:

Step 1: Audit Telemetry Volume and Retention

Run an audit across AWS, Azure, and GCP:

- Identify top log groups by ingestion cost

- Map metric cardinality over time

- Analyze trace sampling rates and spans per request
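The first audit item, ranking log groups by ingestion cost, reduces to a top-N over monthly volumes. In this minimal sketch the volumes are assumed to come from your provider's usage or billing data; the log group names and the per-GB price are hypothetical.

```python
import heapq
from typing import Dict, List, Tuple


def top_log_groups(ingested_gb: Dict[str, float], price_per_gb: float,
                   n: int = 10) -> List[Tuple[str, float]]:
    """Rank log groups by estimated monthly ingestion cost, largest first."""
    costs = ((gb * price_per_gb, name) for name, gb in ingested_gb.items())
    return [(name, cost) for cost, name in heapq.nlargest(n, costs)]


# Hypothetical monthly ingestion in GB per log group.
monthly = {
    "/app/debug": 3_000,
    "/aws/lambda/ingest": 1_200,
    "/app/api": 800,
    "/aws/eks/cluster": 150,
}
print(top_log_groups(monthly, price_per_gb=0.50, n=2))
# → [('/app/debug', 1500.0), ('/aws/lambda/ingest', 600.0)]
```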

Step 2: Map Observability to Business Value

Determine which telemetry sources directly support uptime, SLA adherence, or customer experience.

Table: Telemetry Value Matrix

| Source | Value to Reliability | Monthly Cost | Optimization Action |
|---|---|---|---|
| API trace spans | Medium | $2,500 | Reduce sample rate |
| Debug logs | Low | $3,000 | Move to cold storage |
| System metrics | High | $800 | Maintain |
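One way to turn the value matrix into a work queue is to sort by reliability value first and cost second, so cheap-to-cut, low-value spend rises to the top. The rows below are copied from the table; the numeric ranking of the value labels is an assumption made only for sorting.

```python
# Rows copied from the value matrix above; the numeric ranking of the value
# labels is an assumption made for sorting.
VALUE_RANK = {"Low": 0, "Medium": 1, "High": 2}

matrix = [
    {"source": "API trace spans", "value": "Medium", "monthly_cost": 2_500},
    {"source": "Debug logs", "value": "Low", "monthly_cost": 3_000},
    {"source": "System metrics", "value": "High", "monthly_cost": 800},
]


def optimization_order(rows):
    """Lowest reliability value first; within a value tier, highest cost first."""
    return sorted(rows, key=lambda r: (VALUE_RANK[r["value"]], -r["monthly_cost"]))


total = sum(r["monthly_cost"] for r in matrix)
for row in optimization_order(matrix):
    share = row["monthly_cost"] / total
    print(f"{row['source']:<16} {row['value']:<7} ${row['monthly_cost']:>6,} ({share:.0%})")
```

With these figures, the two low- and medium-value sources account for most of the $6,300 monthly total, which is where optimization effort pays off first.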

Step 3: Apply Cloud-Specific Optimizations

AWS Cost Optimization:

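- Move rarely queried log groups to the CloudWatch Logs Infrequent Access log class

- Send vended logs (logs that AWS services such as VPC Flow Logs publish on your behalf) to the lowest-cost destination that meets your needs; they are billed at volume-tiered rates, and some can be delivered directly to S3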

Azure Cost Management:

- Implement Sampling in Application Insights

- Use Diagnostic Settings for targeted logging

GCP Cost Optimization:

- Apply Log Exclusions for noisy resources

- Reduce metric cardinality with custom aggregations

Step 4: Enforce Policies with Automation

Use Infrastructure as Code and CI/CD pipelines to enforce retention, sampling, and routing policies.
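A policy check that runs in CI can catch retention and sampling drift before it reaches production. This is a minimal sketch: the thresholds, the `policy_violations` and `main` names, and the input shape (dictionaries you would parse from your IaC plan or config files) are all assumptions about your setup.

```python
from typing import Dict, List, Optional

# Hypothetical guardrails, loosely following the retention and sampling
# guidance earlier in this article; tune them to your own requirements.
MAX_RETENTION_DAYS = 90
MAX_TRACE_SAMPLE_RATE = 0.20


def policy_violations(retention_days: Dict[str, Optional[int]],
                      trace_sample_rates: Dict[str, float]) -> List[str]:
    """Return human-readable violations; an empty list means the config passes."""
    problems = []
    for log_group, days in sorted(retention_days.items()):
        if days is None:
            problems.append(f"{log_group}: no retention set, logs never expire")
        elif days > MAX_RETENTION_DAYS:
            problems.append(f"{log_group}: retention {days}d exceeds {MAX_RETENTION_DAYS}d")
    for service, rate in sorted(trace_sample_rates.items()):
        if rate > MAX_TRACE_SAMPLE_RATE:
            problems.append(
                f"{service}: sample rate {rate:.0%} exceeds {MAX_TRACE_SAMPLE_RATE:.0%}")
    return problems


def main(retention_days: Dict[str, Optional[int]],
         trace_sample_rates: Dict[str, float]) -> int:
    """CI entry point: print violations and return a non-zero exit code on failure."""
    problems = policy_violations(retention_days, trace_sample_rates)
    for problem in problems:
        print(f"POLICY VIOLATION: {problem}")
    return 1 if problems else 0
```

A pipeline step would call `sys.exit(main(...))` so that any violation fails the build.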

Step 5: Integrate with FinOps and DevOps Transformation

Regular reviews of observability spend should be part of your FinOps consulting or internal cloud financial management practices. Include these in ongoing DevOps transformation initiatives to prevent regressions.
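Part of a regular review can be automated as a month-over-month regression check. This sketch assumes per-service spend figures are already available; the 15% threshold, the function name, and the sample numbers are hypothetical.

```python
from typing import Dict, Optional


def spend_regressions(previous: Dict[str, float], current: Dict[str, float],
                      max_growth: float = 0.15) -> Dict[str, Optional[float]]:
    """Flag services whose observability spend grew faster than `max_growth`.

    New services (no prior spend) are flagged with a growth value of None so a
    human reviews them rather than letting them slip in unnoticed.
    """
    flagged: Dict[str, Optional[float]] = {}
    for service, cost in current.items():
        before = previous.get(service, 0.0)
        if before <= 0:
            if cost > 0:
                flagged[service] = None
            continue
        growth = (cost - before) / before
        if growth > max_growth:
            flagged[service] = growth
    return flagged


# Hypothetical monthly spend in USD.
previous = {"api": 1000.0, "search": 500.0}
current = {"api": 1100.0, "search": 800.0, "ml-inference": 300.0}
print(spend_regressions(previous, current))
```

Here `api` grew 10% and passes, while `search` (60% growth) and the new `ml-inference` service are flagged for review.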

Practical Playbooks to Reduce Cloud Costs

Here are three actionable playbooks that teams can deploy today.

Playbook 1: Log Ingestion Reduction

- Identify the top 10 largest log groups in AWS, Azure, and GCP

- Implement filters or routing to exclude debug and health check noise

- Apply lifecycle policies to automatically move logs older than 30 days to cold storage
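The filtering step can be sketched as a predicate applied in the log shipper or collector, before records reach a billed ingestion endpoint (an assumption about where it runs). The health-check paths, the level names, and the record shape with `level` and `message` fields are hypothetical; adjust them to your own schema.

```python
import re

# Hypothetical patterns; adjust to your own health-check paths and log schema.
HEALTH_CHECK = re.compile(r"\bGET /(healthz|readyz|livez|health)\b")
DROP_LEVELS = {"DEBUG", "TRACE"}


def should_ship(record: dict) -> bool:
    """Return False for debug-level and health-check records so they are not forwarded."""
    if str(record.get("level", "")).upper() in DROP_LEVELS:
        return False
    if HEALTH_CHECK.search(str(record.get("message", ""))):
        return False
    return True


records = [
    {"level": "debug", "message": "cache miss for key 42"},
    {"level": "INFO", "message": "GET /healthz 200 1ms"},
    {"level": "INFO", "message": "GET /v1/orders 200 35ms"},
    {"level": "ERROR", "message": "payment provider timeout"},
]
shipped = [r for r in records if should_ship(r)]
print(len(shipped), "of", len(records), "records shipped")
```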

Playbook 2: Metric Cardinality Control

- Review metric dimensions for dynamic labels

- Replace per-user metrics with aggregated summaries

- Apply GCP custom metrics and Azure metric aggregation features
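Replacing per-user metrics with aggregated summaries can be sketched as collapsing raw samples into one record per service. The field names and sample data are hypothetical, and mean and max are chosen only for brevity; a production setup would more likely emit histograms so percentiles remain available.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List


def summarize_by_service(samples: Iterable[dict]) -> Dict[str, dict]:
    """Collapse per-user latency samples into one summary per service.

    The user_id label is dropped, so the number of series depends on the number
    of services rather than the number of users.
    """
    latencies: Dict[str, List[float]] = defaultdict(list)
    for sample in samples:
        latencies[sample["service"]].append(sample["latency_ms"])
    return {
        service: {"count": len(values), "mean_ms": mean(values), "max_ms": max(values)}
        for service, values in latencies.items()
    }


# Hypothetical raw samples that would otherwise be emitted per user.
raw = [
    {"service": "api", "user_id": "u-1", "latency_ms": 100},
    {"service": "api", "user_id": "u-2", "latency_ms": 300},
    {"service": "auth", "user_id": "u-1", "latency_ms": 50},
]
print(summarize_by_service(raw))
```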

Playbook 3: Trace Sampling Optimization

- Determine critical versus non-critical services

- Apply 10% sampling for high-traffic, low-priority traces

- Store only sampled spans in long-term storage
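Before changing sampling settings, it helps to estimate how many spans per hour each tier would keep. In this sketch the 10% rate for non-critical services comes from the playbook; keeping 100% of spans for critical services, the service names, and the traffic figures are all hypothetical assumptions.

```python
from typing import Iterable


def stored_spans_per_hour(services: Iterable[dict], critical_rate: float = 1.0,
                          default_rate: float = 0.10) -> float:
    """Spans per hour kept after applying per-tier sampling rates."""
    return sum(
        svc["spans_per_hour"] * (critical_rate if svc["critical"] else default_rate)
        for svc in services
    )


# Hypothetical traffic profile.
services = [
    {"name": "checkout", "critical": True, "spans_per_hour": 200_000},
    {"name": "recommendations", "critical": False, "spans_per_hour": 3_000_000},
    {"name": "search", "critical": False, "spans_per_hour": 1_000_000},
]
before = sum(s["spans_per_hour"] for s in services)
after = stored_spans_per_hour(services)
print(f"{before:,} -> {after:,.0f} spans/hour ({1 - after / before:.0%} fewer)")
```

Because the high-traffic services are the non-critical ones in this profile, most of the reduction comes from them while the critical path stays fully traced.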

Applied together, these playbooks can cut observability spend by 30–50% without reducing reliability.

Real-World Example: SaaS Startup Cloud Cost Optimization

A fast-growing SaaS company running a hybrid cloud modernization project found that 38% of its cloud bill was coming from observability. By applying the FinOps framework:

- Log ingestion dropped by 45% after excluding repetitive debug logs

- Metric cardinality was reduced by 60% by removing session-specific labels

- Trace storage fell by 70% after implementing dynamic sampling

This freed budget for application modernization and an accelerated cloud migration strategy, improving both performance and infrastructure modernization outcomes.

Observability Cost Reduction Checklist

- Audit all telemetry sources and retention policies

- Eliminate duplicate log pipelines

- Aggregate high-cardinality metrics

- Apply dynamic trace sampling

- Enforce lifecycle policies for log storage

- Allocate costs by service using tags and labels

- Automate compliance with FinOps guardrails

Modern Infrastructure Needs Cost-Aware Observability

Modern infrastructure cannot succeed without financial discipline. By embedding observability cost controls into your cloud cost optimization and FinOps practices, your team can:

- Reduce observability waste by 30–50%

- Improve cloud financial management

- Accelerate legacy system modernization

- Support hybrid cloud modernization and cloud migration initiatives

Want to learn more? Explore our Cloud Cost Optimization & FinOps Services to build a sustainable, cost-efficient observability strategy that supports your long-term modernization goals.

For an in-depth resource on modern FinOps practices, check out The FinOps Foundation.