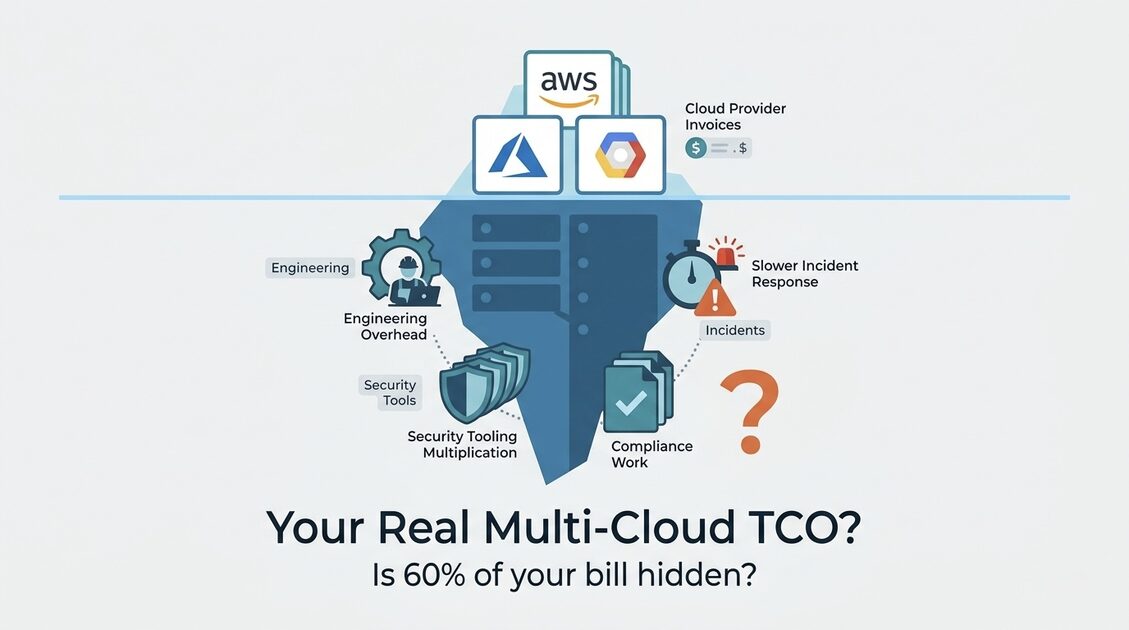

The Cloud Bill Is the Smallest Part of What You Actually Pay

Go find your last three cloud invoices. Add up AWS, Azure, and GCP. That number feels big, right? It might be $80,000 a month. Maybe $200,000. Whatever it is, write it down.

Now here is the thing nobody tells you: that number is probably 40 to 60% of what your multi-cloud strategy actually costs your organization.

The rest is not on any invoice. It does not show up in any billing dashboard. It lives in your salary expenses, your security tooling subscriptions, your HR budget for recruiting engineers who know three different cloud platforms, and the quiet drag on every incident that goes 90 minutes longer than it should because your on-call engineer is not fluent in the provider that just went down.

Most multi-cloud cost guides focus entirely on the infrastructure bill, because that is the only number anyone can easily see. This guide focuses on the full picture. And once you have seen the full picture, the decision about whether to optimize your multi-cloud spend or consolidate your providers becomes much clearer.

How Most Companies Accidentally Became Multi-Cloud

Before we get into numbers, it is worth understanding how most organizations end up multi-cloud in the first place. Because the origin story shapes the solution.

Very few companies sat in a room, weighed the tradeoffs, and deliberately chose to distribute their workloads across three cloud providers. Most of them drifted into it.

Here is how it usually happens.

The acquisition path: A company acquires a startup that runs on GCP. The main company runs on AWS. Nobody wants to tackle a migration during the post-acquisition chaos. Two years later, both environments are still running, both have grown, and the "temporary" multi-cloud setup has become permanent infrastructure.

The team autonomy path: One engineering team picks AWS because that is what they know. A second team spinning up a data platform chooses GCP BigQuery because it is genuinely better for their use case. A third team building Windows-based tooling defaults to Azure. Each decision made sense locally. Together, they created three separate billing accounts, three separate IAM systems, and three separate observability stacks.

The sales demo path: A cloud provider offers significant credits to run a proof of concept. The proof of concept becomes production. The credits run out. The workload stays.

The "best of breed" path: An architect decides each workload should run on its optimal provider. Lambda for serverless, BigQuery for analytics, Azure AD for identity. The architecture is theoretically elegant. Operationally, it is expensive to run and even more expensive to hire for.

In all of these scenarios, nobody calculated the total cost of ownership before committing. They calculated the infrastructure cost. They missed everything else.

The 5 Multi-Cloud Costs That Never Appear on Your Invoice

Cost 1: Engineering Overhead (The Biggest One)

Your engineers are your most expensive infrastructure. A senior cloud engineer costs $150,000 to $200,000 per year in salary alone. Fully loaded with benefits, employer taxes, and overhead, plan for $200,000 to $280,000 per engineer per year.

Running a multi-cloud environment adds meaningful overhead to every one of them. How much? Industry data suggests that 15 to 25% of engineering capacity goes to provider-specific work that would not exist on a single cloud: learning new services, maintaining separate deployment pipelines, building provider-specific monitoring, debugging issues in unfamiliar environments, and keeping certifications current across platforms.

The calculation for a 10-person cloud engineering team at $240,000 fully loaded cost per engineer:

- Total team cost: $2,400,000/year

- Multi-cloud overhead at 20%: $480,000/year

That $480,000 is not on your cloud bill. It is on your payroll. But it is absolutely a cost of your multi-cloud architecture, and it compounds as your team grows.
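The same arithmetic as a small Python sketch, so you can plug in your own numbers. The function name and parameters are illustrative, and the 20% rate is the midpoint assumption used above, not a measured figure.

```python
def multicloud_engineering_overhead(engineers: int,
                                    loaded_cost_per_engineer: float,
                                    overhead_rate: float) -> float:
    """Annual payroll cost attributable to multi-cloud overhead (USD)."""
    if not 0 <= overhead_rate <= 1:
        raise ValueError("overhead_rate must be a fraction between 0 and 1")
    return engineers * loaded_cost_per_engineer * overhead_rate


# Worked example from the text: 10 engineers, $240,000 fully loaded, 20% overhead.
annual_overhead = multicloud_engineering_overhead(10, 240_000, 0.20)
print(f"${annual_overhead:,.0f}/year")
```

Rerun it with 0.15 and 0.25 to see the range implied by the 15 to 25% estimate for your own team size.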

What does that overhead look like in practice? It is the engineer spending a day figuring out why the same Terraform module behaves differently on GCP than it does on AWS. It is the three-hour postmortem that ran long because the on-call engineer had to pull in a specialist for the provider they do not know. It is the onboarding checklist that now takes five days instead of two because new engineers need accounts, permissions, and context on three separate platforms.

Cost 2: Security and Compliance Multiplication

Every cloud provider you add to your environment multiplies your security surface area. This has direct, measurable cost implications.

SIEM and log ingestion: Security information and event management tools (Splunk, Datadog, Elastic Security) typically charge based on data volume ingested. AWS CloudTrail, Azure Monitor, and GCP Cloud Audit Logs each generate separate audit streams. A single provider generates roughly 2 to 5GB of audit log data per day for a medium-sized environment. Three providers generate 6 to 15GB, an extra 4 to 10GB per day. At an illustrative rate of $150 per month for each GB/day of licensed ingest (actual SIEM pricing varies widely by vendor and volume tier), that difference is $600 to $1,500/month in additional SIEM costs, just from having three audit pipelines instead of one.

Identity and access management: Every provider has its own IAM system. Managing consistent access policies, enforcing least privilege, and auditing permissions across AWS IAM, Azure Active Directory (now Microsoft Entra ID), and GCP IAM is not a trivial task. Most organizations either hire dedicated IAM engineers or let it drift, creating security gaps. The cost of dedicated IAM management across three providers: estimate one quarter of a senior security engineer's time, or $50,000 to $75,000/year.

Compliance certifications and audits: If your organization needs SOC 2, HIPAA, or PCI compliance, auditors review your cloud environments. More providers means more environments to audit, more evidence to collect, more time from your security team during audit season. Multi-cloud does not disqualify you from certifications, but it meaningfully increases the time and cost of achieving and maintaining them.

The compliance theater problem: Here is the one that will frustrate you. A significant number of organizations maintain multi-cloud specifically for compliance reasons that turn out to be incorrect. "We use Azure because our EU customers require GDPR compliance on Azure" is a real statement we hear regularly. GDPR does not name or certify a particular cloud provider, and AWS, GCP, and Azure all offer GDPR data processing terms and EU regions. For the common frameworks (GDPR, HIPAA, SOC 2, ISO 27001, FedRAMP Moderate), all three major providers publish comparable attestations and authorizations, although the list of in-scope services differs by provider. If your multi-cloud exists because of a compliance requirement that you have not verified recently, check the current compliance pages of your primary provider, including which services are in scope, before assuming you need a second one.

Cost 3: Tooling Fragmentation and Duplication

Native cloud tools are deeply integrated, well-supported, and often free. The moment you add a second provider, you need cross-cloud tools to maintain visibility, and those tools carry real subscription costs.

But the less obvious cost is the tools you run twice. Consider:

- Infrastructure as code: Your primary provider has excellent native deployment tools (CDK, ARM templates, Deployment Manager). Multi-cloud requires a neutral tool like Terraform or Pulumi, which your team then needs to learn, maintain, and upgrade. If you were already using Terraform, this is a wash. If you were using CloudFormation, this is a migration and a new skill requirement.

- Cost visibility: AWS Cost Explorer is free. GCP Billing Reports are free. Azure Cost Management is free. Vantage, CloudHealth, or Cloudability to aggregate all three runs $500 to $5,000/month.

- Container orchestration: If you run Kubernetes on multiple providers, each cluster's management tooling (logging, monitoring, networking) needs to be provider-agnostic. Standard provider integrations do not work cross-cloud. The cost is either more engineering time to build provider-agnostic tooling or more subscription cost for third-party tools that abstract the differences.

- Secrets management: HashiCorp Vault to replace three native secrets managers (AWS Secrets Manager, Azure Key Vault, GCP Secret Manager) costs $0.03/secret/month at scale and requires operational overhead to maintain the Vault cluster itself.

A realistic tooling overhead for a company running all three major providers: $1,500 to $6,000/month in additional subscriptions plus 10 to 20% engineering capacity to maintain cross-cloud tooling.

Cost 4: Incident Response and MTTR Tax

This one is felt acutely by every on-call engineer and rarely quantified by anyone in finance.

When infrastructure fails, the speed of recovery depends heavily on the responder's familiarity with the failing system. An engineer who uses AWS daily will resolve an AWS incident faster than an engineer who uses it occasionally. The same is true for Azure and GCP.

In multi-cloud environments, your on-call rotation almost always has someone covering infrastructure on a provider they are less comfortable with. The practical effect: mean time to resolution (MTTR) for incidents on secondary providers runs 40 to 100% longer than on the primary provider.

For a company with four significant cloud incidents per month, where two happen on the primary cloud and two on secondary providers:

- Primary provider MTTR: 45 minutes average

- Secondary provider MTTR: 80 minutes average (78% longer)

- Extra incident time per month: 2 incidents x 35 extra minutes = 70 extra minutes of incident resolution

That is not catastrophic on its own. But multiply by 12 months, add in the additional context-switching that happens during the investigation, and factor in the cost of engineers on-call for multiple providers, and you are spending $15,000 to $40,000 per year in extra incident time. This does not count the business impact of longer outages.
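To see how much of that figure is raw clock time, here is a rough sketch of the example above. The responder count and hourly cost are assumptions chosen for illustration, and the model deliberately leaves out context switching, on-call coverage, and business impact.

```python
def extra_incident_minutes_per_year(secondary_incidents_per_month: int,
                                    primary_mttr_min: float,
                                    secondary_mttr_min: float) -> float:
    """Extra resolution minutes per year caused by slower secondary-provider MTTR."""
    extra_per_incident = secondary_mttr_min - primary_mttr_min
    return secondary_incidents_per_month * extra_per_incident * 12


def direct_responder_cost(extra_minutes: float,
                          responders: int,
                          hourly_cost: float) -> float:
    """Cost of responder time only; excludes context switching and outage impact."""
    return extra_minutes / 60 * responders * hourly_cost


# Figures from the text: 2 secondary incidents/month, 45 vs 80 minute MTTR.
minutes = extra_incident_minutes_per_year(2, 45, 80)
# Assumed for illustration: 3 responders per incident at $120/hour loaded cost.
cost = direct_responder_cost(minutes, responders=3, hourly_cost=120)
print(f"{minutes:.0f} extra minutes/year, about ${cost:,.0f} in direct responder time")
```

Under these assumptions the direct responder time is the smaller share of the total; most of the annual cost comes from the factors the sketch does not model.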

Cost 5: Recruiting and Retention Premium

Hiring engineers who are proficient in a single cloud provider is competitive. Hiring engineers who are proficient in two or three is measurably more expensive.

Reaching certification-level proficiency on AWS, Azure, or GCP takes 3 to 6 months per platform, and that skill has real market value. Engineers with multi-cloud expertise command 10 to 20% higher salaries than equivalent single-cloud engineers. For a 15-person engineering team with an average base salary of $120,000, that premium costs $180,000 to $360,000/year. At the senior salaries quoted earlier, it is higher.

Beyond salary, multi-cloud environments have higher turnover risk for two reasons. First, engineers comfortable with all three providers have more job options, giving them more leverage. Second, the operational complexity of managing multiple providers creates burnout faster than single-cloud environments.

Calculating Your Real Multi-Cloud TCO

Here is a framework to calculate your actual total cost of ownership. Work through this for your specific environment:

Infrastructure costs (the visible part):

- Monthly AWS invoice: $__

- Monthly Azure invoice: $__

- Monthly GCP invoice: $__

- Cross-cloud tooling subscriptions: $__

- Multi-cloud security tooling premium: $__

- Subtotal visible costs: $__/month

Hidden people and operational costs (the invisible part):

- Engineering team size: __ engineers

- Fully loaded cost per engineer: $__/year

- Multi-cloud overhead percentage (estimate 15-25%): __%

- Annual engineering overhead cost: $__

- Monthly engineering overhead: $__/month

- Additional recruiting/retention premium (10-20% of engineering salaries): $__/month

- Estimated additional compliance/audit costs: $__/month

- Additional SIEM/security tooling for multiple providers: $__/month

- MTTR premium for secondary provider incidents: $__/month

- Subtotal hidden costs: $__/month

Total real multi-cloud cost: $__/month

For most companies running three providers with a team of 10 or more engineers, the hidden costs equal or exceed the visible infrastructure costs. The full TCO is typically 1.8 to 2.5 times the invoice total.
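The worksheet above translates directly into a few lines of Python. This is a minimal sketch: the class and field names are made up to mirror the worksheet rows, and every number in the example is a placeholder, not a benchmark.

```python
from dataclasses import dataclass


@dataclass
class VisibleCosts:
    """Monthly costs that appear on an invoice or subscription (USD)."""
    aws_invoice: float
    azure_invoice: float
    gcp_invoice: float
    cross_cloud_tooling: float
    security_tooling_premium: float

    def invoices(self) -> float:
        return self.aws_invoice + self.azure_invoice + self.gcp_invoice

    def total(self) -> float:
        return self.invoices() + self.cross_cloud_tooling + self.security_tooling_premium


@dataclass
class HiddenCosts:
    """People and operational costs that never reach the cloud bill."""
    engineers: int
    loaded_cost_per_engineer: float  # USD per year
    overhead_rate: float             # fraction of capacity, e.g. 0.20
    recruiting_retention: float      # USD per month, as are the fields below
    compliance_audit: float
    extra_siem: float
    mttr_premium: float

    def engineering_overhead(self) -> float:
        # Annual overhead converted to a monthly figure.
        return self.engineers * self.loaded_cost_per_engineer * self.overhead_rate / 12

    def total(self) -> float:
        return (self.engineering_overhead() + self.recruiting_retention
                + self.compliance_audit + self.extra_siem + self.mttr_premium)


# Placeholder inputs; replace with your own worksheet values.
visible = VisibleCosts(40_000, 20_000, 10_000, 3_000, 2_000)
hidden = HiddenCosts(10, 240_000, 0.20, 15_000, 4_000, 1_000, 2_000)

total = visible.total() + hidden.total()
print(f"Visible: ${visible.total():,.0f}/month")
print(f"Hidden:  ${hidden.total():,.0f}/month")
print(f"Total:   ${total:,.0f}/month ({total / visible.invoices():.1f}x the invoices)")
```

With these placeholder inputs the total comes to about 2.0 times the three invoices combined. The useful part is the ratio on the last line, since that is the number most leadership teams have never seen.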

The Three-Option Decision Framework

Once you have your real TCO number, you have three paths. Here is how to decide which one applies to you.

Option 1: Strategic Consolidation

When it applies: Your multi-cloud setup exists for historical or accidental reasons rather than genuine technical necessity. Different teams chose different providers. You acquired a company on a different platform. Someone got convinced by a sales rep with attractive credits.

The consolidation math: Estimate the cost of migrating the secondary provider workloads to your primary provider. Consider engineering time for the migration, temporary dual-running costs during transition, and any refactoring needed to replace provider-specific services. Most migrations of non-trivial scale cost $50,000 to $200,000 in engineering time.

Then calculate annual savings: add up the eliminated infrastructure duplication, reduced tooling costs, and a conservative estimate of engineering overhead reduction (10 to 15% of your team's capacity freed up). For a 10-person team, that recovered capacity is worth $240,000 to $360,000/year.

Payback period: $150,000 migration cost / $300,000 annual savings = 6 months to break even. After that, you save $300,000 per year permanently.
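As a quick check on that arithmetic, a small helper (the name is illustrative) that returns the payback period in months:

```python
def payback_months(migration_cost: float, annual_savings: float) -> float:
    """Months of savings needed to recover a one-time migration cost."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive for a payback to exist")
    return migration_cost / (annual_savings / 12)


# Example from the text: $150,000 migration, $300,000/year in savings.
print(f"{payback_months(150_000, 300_000):.1f} months")
```

Run it with the low and high ends of your own migration estimate; the spread between the two results is usually more informative than a single midpoint.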

This math holds for the majority of accidental multi-cloud environments. The migration feels daunting, but the post-migration savings are substantial and permanent.

Option 2: Deliberate Optimization

When it applies: Your multi-cloud setup has genuine technical or business justification. BigQuery is significantly better for your analytics workload than the alternatives. You have Windows workloads benefiting from Azure Hybrid Benefit that would be expensive to move. You have regulatory requirements that genuinely mandate geographic separation.

In this case, the goal is not to eliminate multi-cloud but to stop paying the hidden overhead tax while keeping the legitimate technical advantages.

The key optimizations that reduce the TCO without migration:

- Centralize tooling on cross-cloud platforms rather than running native tools for each provider (reduces both subscription cost and engineering complexity)

- Assign provider ownership by team: each provider has a clear owner who is the expert for that platform, reducing context switching and improving incident response

- Standardize deployment patterns across providers using Terraform so the deployment skill transfers even when the underlying services differ

- Consolidate observability into a single cross-cloud dashboard (Datadog or Grafana) rather than running CloudWatch, Azure Monitor, and Cloud Monitoring separately

- Run a quarterly "provider rationalization" review asking whether each workload on each provider still belongs there

Option 3: Full FinOps Governance

When it applies: You are committed to multi-cloud for strategic reasons, the technical advantages are clear and quantified, and the goal is to minimize the cost overhead of running multiple providers without reducing the engineering agility that multi-cloud provides.

This is the most complex path and requires the most investment to execute well. It involves building a cross-cloud FinOps practice, implementing unified cost attribution, standardizing commitment strategies across providers, and creating engineering incentives that reward cost-efficient architecture decisions.

Our multi-cloud FinOps guide covers the full governance framework for this option.

The Commitment Renewal Trap During Consolidation

This is the most expensive mistake teams make when they decide to consolidate, and almost nobody talks about it.

When you decide to consolidate from three providers to one, the natural timeline is 12 to 18 months for a full migration. During that period, your existing Reserved Instances, Savings Plans, and Committed Use Discounts will expire.

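Here is the trap: commitments get renewed on autopilot. Renewal behavior varies by provider and commitment type. Some commitments simply expire, some can be set to renew automatically, and many teams re-purchase expiring coverage out of habit or through scheduled purchases. If nobody actively turns off renewals and stops re-purchasing on the provider you are leaving, you can spend another 12 months paying commitment fees for a provider you are winding down. One team we know paid $180,000 in renewed GCP Committed Use Discounts on instances that were migrated to AWS within the same quarter.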

The fix: the moment you decide to consolidate, inventory every active commitment on every provider. Map each one to its expiration date. Set calendar reminders to not renew commitments on the providers you are leaving. Let them expire naturally rather than renewing for 1-year terms on infrastructure you plan to shut down.
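One way to keep that inventory honest is to hold it as data instead of calendar entries. The sketch below assumes you have compiled the list by hand from each provider's console; the `Commitment` fields, the sample entries, and the 18-month horizon are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Commitment:
    provider: str     # e.g. "aws", "azure", "gcp"
    name: str         # free-form label from your own inventory
    expires: date
    auto_renew: bool  # whether renewal is switched on for this commitment


def commitments_to_watch(commitments, exiting_providers, today, horizon_days=548):
    """Commitments on providers being exited that expire within the horizon,
    soonest first."""
    watch = [
        c for c in commitments
        if c.provider in exiting_providers
        and 0 <= (c.expires - today).days <= horizon_days
    ]
    return sorted(watch, key=lambda c: c.expires)


# Hypothetical inventory, typed in by hand for illustration.
inventory = [
    Commitment("gcp", "cud-analytics-vms", date(2025, 9, 30), auto_renew=True),
    Commitment("aws", "compute-savings-plan", date(2025, 6, 30), auto_renew=False),
    Commitment("azure", "sql-reservation", date(2025, 4, 15), auto_renew=True),
]

for c in commitments_to_watch(inventory, {"gcp", "azure"}, today=date(2025, 1, 1)):
    action = "turn off renewal" if c.auto_renew else "do not re-purchase"
    print(f"{c.expires}  {c.provider:<5}  {c.name}: {action}")
```

The commitment on the provider you are keeping is filtered out on purpose, so the output is a short action list, not a full report.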

The Free Tier Dependency Lock-In

This one is sneaky and it compounds over time.

Cloud providers offer generous free tiers to attract new workloads. Some of these free tiers are permanent: GCP's Free Tier includes one e2-micro VM instance per month, 5GB of Cloud Storage, and a small BigQuery allowance. AWS has a similar set of always-free offers. These are genuinely useful for small workloads.

The problem: teams build dependencies on free-tier resources in secondary providers, making those providers sticky even when the rest of the reason for using them has gone away.

A team that uses GCP primarily for BigQuery might also have a dozen small development databases, a handful of Cloud Functions for lightweight automation, and a few development VMs, all sized to stay inside free-tier limits or to cost next to nothing. None of these cost meaningful money individually. Collectively, they represent 20 to 30 resources that would need to be migrated or recreated before you can fully exit GCP.

Free tier resources do not appear on cost reports because they cost zero. They do not show up in migration planning because nobody inventories zero-cost resources. They become visible only after you have already committed to migration and then discover you have dozens of loose ends to tie off.

Run a free tier audit on every secondary provider. Export all resources regardless of cost. Find the zero-cost resources and include them in any consolidation plan. They are often the last thing to get migrated and the most likely to extend your timeline.
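The audit itself is a simple filter once you have a full resource export. A minimal sketch, assuming a hypothetical export format of one record per resource with a `monthly_cost_usd` field; how you produce that export depends on your provider and tooling.

```python
from collections import Counter


def zero_cost_resources(inventory):
    """Resources whose billed monthly cost is zero (free tier or otherwise)."""
    return [r for r in inventory if float(r["monthly_cost_usd"]) == 0.0]


# Hypothetical export: one dict per resource, regardless of cost.
inventory = [
    {"provider": "gcp", "type": "vm", "name": "dev-box-1", "monthly_cost_usd": 0},
    {"provider": "gcp", "type": "function", "name": "nightly-sync", "monthly_cost_usd": 0},
    {"provider": "gcp", "type": "function", "name": "slack-alert", "monthly_cost_usd": 0},
    {"provider": "gcp", "type": "bigquery-dataset", "name": "warehouse", "monthly_cost_usd": 412.50},
]

loose_ends = zero_cost_resources(inventory)
by_type = Counter(r["type"] for r in loose_ends)
print(f"{len(loose_ends)} zero-cost resources to add to the migration plan: {dict(by_type)}")
```

Grouping by type matters because each type tends to need a different migration approach, and the counts feed straight into the effort estimate.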

The 90-Day Waste Cycle in Multi-Cloud Environments

Cloud waste does not accumulate randomly. It follows a predictable pattern, and multi-cloud environments have it in triplicate.

Here is how it works. Engineering teams work in quarterly cycles. Each quarter brings new initiatives, new proof-of-concepts, new experiments. Resources get provisioned. Initiatives complete or get cancelled. Resources frequently do not get cleaned up at the same cadence they get created, because cleanup is not tied to any deliverable or sprint story.

After 90 days, the orphaned resources from last quarter's initiatives are still running. After 180 days, two quarters of waste overlap. After a year, you have four layers of accumulated ghost infrastructure.

In a single-cloud environment, a monthly cleanup sweep finds this waste reasonably efficiently. In a multi-cloud environment, cleanup processes almost always run on the primary provider and are inconsistently applied to secondary providers. The result: secondary providers accumulate waste faster relative to their active resource count.

Teams auditing their secondary cloud for the first time consistently find 20 to 35% of that provider's running resources are orphaned or idle. This is not carelessness. It is the predictable result of running cleanup processes that were designed for one cloud on an environment that spans three.

The fix is systematic: automated ghost detection must run on every provider, not just the primary one. Our guide on eliminating ghost servers covers the specific CLI commands and automation patterns for all three major providers.

Building the Business Case for Consolidation

If the numbers point toward consolidation, you need to build a business case that finance and leadership will approve. Here is the structure that works.

Start with the full TCO calculation from the framework above. Show the visible infrastructure costs plus the hidden people and operational costs. Most leadership teams have never seen the complete number and are surprised by it.

Show the consolidation cost as a one-time investment. Engineering time for migration (hours x loaded cost per engineer), temporary dual-running period, and any refactoring or service replacement work. Be honest about the timeline and realistic about the effort.

Calculate the payback period. Total migration cost divided by monthly savings. For most accidental multi-cloud environments, this is 4 to 12 months.

Show the 3-year value. One-time migration cost subtracted from 3-year savings. For an organization spending $50,000/month on cloud infrastructure with 15 engineers, a typical consolidation produces $600,000 to $900,000 in 3-year savings after accounting for migration cost.
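A sketch of the 3-year calculation, with one added assumption that the text leaves open: savings only start once the migration is finished, so the months spent migrating are subtracted from the 36-month window. All input values are placeholders.

```python
def three_year_net_value(monthly_savings: float,
                         migration_cost: float,
                         migration_months: int = 0) -> float:
    """Savings over a 36-month window minus the one-time migration cost.

    Assumes no savings accrue during the migration itself.
    """
    saving_months = max(0, 36 - migration_months)
    return monthly_savings * saving_months - migration_cost


# Placeholder inputs: $25,000/month saved, $150,000 migration, 6 months to migrate.
net = three_year_net_value(25_000, 150_000, migration_months=6)
print(f"3-year net value: ${net:,.0f}")
```

Setting `migration_months` to zero gives the more optimistic figure; showing leadership both versions makes the timeline risk explicit.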

Address the risk. Leadership will ask what happens if the migration goes wrong. The honest answer: migrations of this type are well-understood, reversible at each step (you keep both environments running until migration is validated), and lower risk than the ongoing operational complexity of maintaining three providers indefinitely.

For help building this business case or executing the migration, our Cloud Migration team has done this calculation and transition for organizations at every scale.

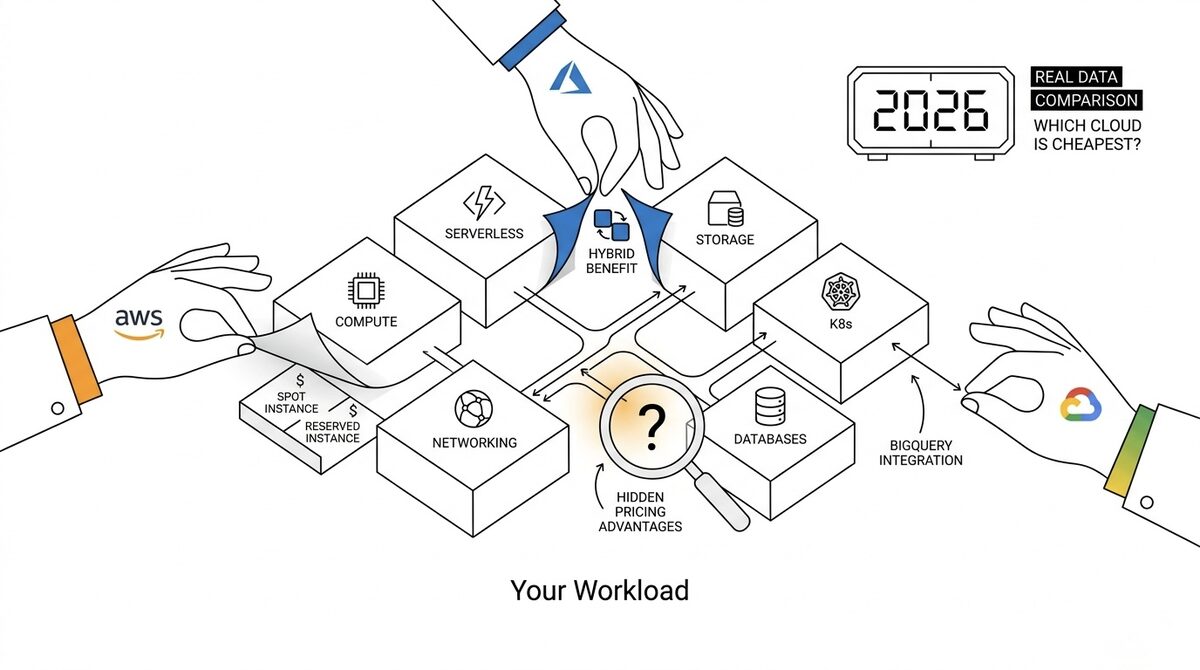

Multi-Cloud vs Single-Cloud: The Honest Financial Model

Everyone debates multi-cloud vs single-cloud in the abstract. Here is the concrete math for an organization with $5M in annual cloud spend.

| Factor | Single Cloud | Multi-Cloud | Multi-Cloud Overhead |
|---|---|---|---|
| Engineering team size needed | 10 | 14-16 (40-60% more) | +$960K-1.44M/year |
| Tool duplication (Terraform, monitoring, CI/CD) | $5K/month | $12-18K/month | +$84-156K/year |
| Egress between clouds | $0 | $0.02-0.09/GB | +$2.4K-108K/year (varies) |
| Training & certification | $20K/year | $50K/year | +$30K/year |
| Incident MTTR | 30 min avg | 45-60 min avg | +50-100% longer outages |
| Total overhead for $5M cloud spend | Baseline | +$1.1M-1.7M/year | 22-35% premium |

That 22-35% premium is the real price of multi-cloud. Not the infrastructure cost difference between providers (which is usually within 5-10%), but the organizational cost of operating across multiple platforms simultaneously.

If your multi-cloud strategy does not deliver at least $1.1M/year in quantifiable benefits (better pricing, regulatory compliance, genuine best-of-breed advantages), you are paying a premium for complexity.

When Multi-Cloud Actually Saves Money (3 Valid Scenarios)

Multi-cloud is not always wrong. Here are the three scenarios where the math genuinely works in favor of running multiple providers.

1. Best-of-Breed for Specific Workloads

GCP BigQuery for analytics costs $6.25/TB queried on demand, with no infrastructure to manage. Running the equivalent analytical workload on an always-on AWS Redshift cluster or self-managed infrastructure can cost many times more for teams processing 10-50TB/month, because the cluster bills whether or not queries are running.

The math: A data team processing 20TB/month on BigQuery pays $125/month (20TB x $6.25). The same workload on a Redshift cluster costs $3,000-8,000/month for equivalent performance. Savings: roughly $35K-95K/year on analytics alone.

Net after accounting for cross-cloud overhead: positive, even after $30K/year in cross-cloud egress and tooling duplication for the analytics workload. This is a valid multi-cloud use case because the savings on the specific workload exceed the overhead of maintaining a second provider.

2. Regulatory Requirement

Financial services organizations requiring data sovereignty in regions where one provider lacks adequate presence. Some countries mandate that certain data types reside within national borders, and not every provider has a region in every country.

This is not a cost play. It is a compliance mandate. The multi-cloud overhead is the cost of doing business in that regulatory environment. The relevant question is not "does multi-cloud save money?" but "does the revenue from operating in that jurisdiction exceed the multi-cloud overhead?"

3. Negotiation Leverage

Having production workloads on two or more clouds gives you real walk-away power during contract negotiations. Cloud sales teams know the difference between a customer who could theoretically move and a customer who has already proven they can run on an alternative provider.

Typical discount improvement from credible multi-cloud leverage: 5-15% on committed spend. On $5M annual spend, that translates to $250K-750K/year in better pricing.

The catch: you need real production workloads on the alternative provider, not just a proof of concept. Sales teams can tell the difference. The cost of maintaining that credible alternative (a meaningful workload running in production on a second provider) is roughly $100K-200K/year in overhead. If your total spend is above $3M/year, the negotiation leverage alone can justify the overhead.
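The break-even is easy to test against the ranges above. This sketch simply subtracts the overhead of keeping a credible alternative from the discount gained; the function name is illustrative, and the inputs are the ranges quoted in this section.

```python
def leverage_net_benefit(annual_spend: float,
                         discount_improvement: float,
                         leverage_overhead: float) -> float:
    """Annual discount gained from credible leverage minus the cost of keeping it."""
    return annual_spend * discount_improvement - leverage_overhead


for spend in (3_000_000, 5_000_000):
    worst = leverage_net_benefit(spend, 0.05, 200_000)  # low discount, high overhead
    best = leverage_net_benefit(spend, 0.15, 100_000)   # high discount, low overhead
    print(f"${spend:,}: net ${worst:,.0f} to ${best:,.0f} per year")
```

At $3M the worst case is a $50,000 loss and the best case a $350,000 gain, which is why leverage can justify the overhead at that spend level but does not guarantee it.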

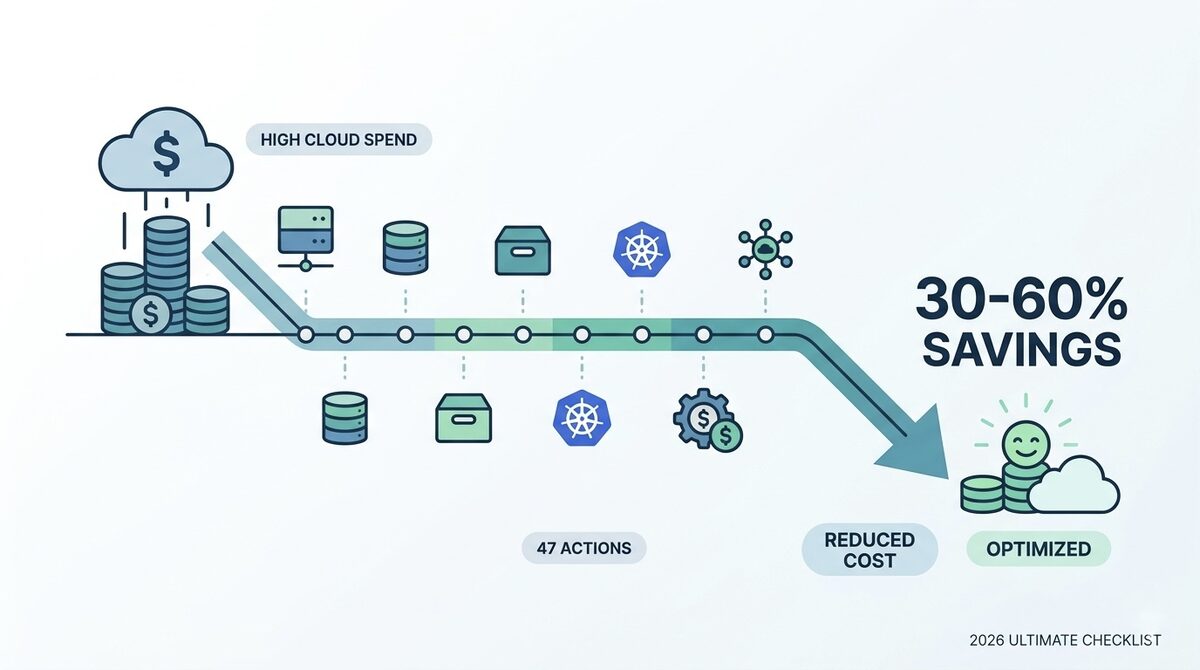

The Exit Plan Nobody Writes

If you are already multi-cloud and the numbers say consolidation makes sense, here is the phased approach that minimizes risk while capturing savings as quickly as possible.

Phase 1: Inventory (Month 1-2)

Catalog all workloads by cloud provider. Tag each workload with migration difficulty:

- Easy: Stateless services, scheduled jobs, containerized applications with no provider-specific dependencies. These run anywhere with a new deployment target and, at most, minor configuration changes.

- Medium: Stateful services with standard databases (PostgreSQL, MySQL, Redis). Data migration is straightforward but requires careful cutover planning.

- Hard: Workloads using vendor-locked services (DynamoDB, BigQuery, Azure Cognitive Services, Cloud Spanner). These require application refactoring or acceptance of a different service with different behavior.
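The three tiers above can be applied mechanically as a first pass over a workload catalog. A minimal sketch, assuming each workload is described by a list of dependency names; the two lookup sets contain only the examples named above and would need extending for a real inventory.

```python
from typing import Iterable

# Seeded with the examples from the list above; extend for your own environment.
VENDOR_LOCKED = {"dynamodb", "bigquery", "azure cognitive services", "cloud spanner"}
STANDARD_DATASTORES = {"postgresql", "mysql", "redis"}


def migration_difficulty(dependencies: Iterable[str]) -> str:
    """First-pass difficulty tag: the hardest dependency decides the tier."""
    deps = {d.lower() for d in dependencies}
    if deps & VENDOR_LOCKED:
        return "hard"
    if deps & STANDARD_DATASTORES:
        return "medium"
    # Anything unrecognized falls through to "easy", so review these by hand.
    return "easy"


print(migration_difficulty(["nginx"]))                   # stateless service
print(migration_difficulty(["Redis", "nginx"]))          # standard datastore
print(migration_difficulty(["DynamoDB", "PostgreSQL"]))  # vendor-locked wins
```

Treat the output as a starting tag for discussion, since an unrecognized dependency defaults to the easy tier.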

Phase 2: Quick Wins (Month 3-6)

Migrate "easy" workloads first. These deliver immediate savings with minimal risk. A typical organization has 30-40% of their secondary-provider workloads in the "easy" category.

Expected effort: 2-4 engineering days per service. Expected savings: immediate reduction in secondary provider spend plus reduced tooling overhead as fewer services depend on that provider.

Phase 3: Stateful Services (Month 6-12)

Migrate "medium" workloads. These require data migration planning, potentially using AWS DMS, GCP Database Migration Service, or manual pg_dump/restore depending on scale.

Key risk: data migration cutover windows. Mitigate by running dual-write patterns for 1-2 weeks before cutting over reads, then decommissioning the old database.
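The dual-write pattern is easier to reason about in code. The sketch below uses plain dictionaries as stand-ins for the old and new databases, and the class and method names are invented for illustration. A production version would also need to handle a write that succeeds on one side and fails on the other.

```python
class DualWriteStore:
    """Writes go to both stores; reads stay on the old store until cutover."""

    def __init__(self, old_store: dict, new_store: dict):
        self.old = old_store
        self.new = new_store
        self.reads_from_new = False

    def backfill(self) -> None:
        # Copy rows that existed before dual-writing began, without
        # overwriting anything the dual-write path has already written.
        for key, value in self.old.items():
            self.new.setdefault(key, value)

    def put(self, key, value) -> None:
        self.old[key] = value
        self.new[key] = value

    def get(self, key):
        source = self.new if self.reads_from_new else self.old
        return source[key]

    def cut_over_reads(self) -> None:
        # Refuse to switch reads while the two stores disagree.
        if self.old != self.new:
            raise RuntimeError("stores differ; backfill or reconcile first")
        self.reads_from_new = True


old_db = {"user:1": "alice"}
store = DualWriteStore(old_db, new_store={})
store.put("user:2", "bob")  # lands in both stores
store.backfill()            # copies user:1 into the new store
store.cut_over_reads()      # reads now come from the new store
print(store.get("user:1"))
```

The equality check before cutover is the important design choice: the 1-2 week dual-write window exists to give you time to prove the two stores agree before any reader depends on the new one.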

Phase 4: Evaluate Hard Workloads (Month 12-18)

For "hard" workloads (DynamoDB, BigQuery, provider-specific ML services), the decision is not always "migrate." Sometimes the correct answer is "keep this specific workload on the secondary provider indefinitely because the migration cost exceeds the lifetime savings."

Run the math for each hard workload:

- Cost of refactoring to remove provider dependency

- Ongoing cost of running that single workload on the secondary provider (including its share of multi-cloud overhead)

- If the ongoing annual cost is less than the refactoring cost amortized over 3 years, leave it alone
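That rule of thumb as a function. This is a sketch of the simplified comparison in the list above; it does not model what the workload would cost to run on the primary provider after migration, so treat a close result as a prompt for a fuller analysis. The example figures are hypothetical.

```python
def keep_on_secondary(annual_ongoing_cost: float,
                      refactor_cost: float,
                      amortization_years: int = 3) -> bool:
    """True when staying put is cheaper per year than the amortized refactor."""
    return annual_ongoing_cost < refactor_cost / amortization_years


# Hypothetical workload: $300,000 to refactor, so $100,000/year amortized.
print(keep_on_secondary(60_000, 300_000))   # cheaper to leave in place
print(keep_on_secondary(150_000, 300_000))  # cheaper to migrate
```

Remember that `annual_ongoing_cost` should include the workload's share of multi-cloud overhead, not just its line on the secondary provider's invoice.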

Expected Outcomes

- Timeline: 12-18 months for 80% consolidation

- Expected savings: $500K-1.5M/year for a $5M cloud spend (10-30% after migration costs)

- Break-even: 6-12 months after migration completion

- Residual multi-cloud: 10-20% of workloads may remain on the secondary provider permanently, and that is fine if the math supports it

The most common mistake in consolidation: trying to reach 100% on a single provider. The last 20% of workloads typically cost more to migrate than they save. Accept that "mostly single cloud with one or two justified exceptions" is often the optimal outcome.

The Full Picture Changes the Decision

Most multi-cloud cost conversations never get past the infrastructure invoices. That is why most multi-cloud environments never get meaningfully cheaper: the teams optimizing costs are looking at 40 to 60% of the actual number.

The hidden costs are real. The engineering overhead is the largest of them, and it is the one most directly within your control. Whether you fix it by consolidating to a single provider or by reducing the overhead of managing multiple providers through better tooling and clearer ownership, the path starts with seeing the full picture.

Run the TCO calculation. Most teams who do it are surprised by the total. And for most teams who run it, the consolidation math becomes obvious in a way it never was when they were only looking at the cloud bill.

To find out where your highest-impact savings are across your full cloud environment, take our free Cloud Waste and Risk Scorecard. And if you want expert help running the consolidation math for your specific environment, our Cloud Cost Optimization and FinOps team does exactly this.

Related reading:

- Multi-Cloud Cost Optimization: 7 Strategies to Stop Paying Double Across AWS, Azure, and GCP

- Multi-Cloud FinOps in 2026: How to Manage Costs Across AWS, Azure, and GCP

- AWS vs Azure vs GCP Cost Comparison: Which Cloud Is Actually Cheaper?

- Stop Paying for Ghost Servers: 12 Strategies to Eliminate Cloud Waste

- 7 Proven Ways Automated Cloud Cost Optimization Transforms Modern Infrastructure

- Cloud Financial Management in 2026: 7 FinOps Strategies That Cut Waste by 40%
