80% of Engineering Orgs Will Have Platform Teams by 2027. The Question Is Whether Yours Will Be One That Actually Works.

Gartner's prediction has a caveat nobody reads: 80% will have platform teams, but fewer than 30% will achieve measurable developer productivity gains from them. The gap between "we have a platform team" and "our platform genuinely accelerates engineering" is where most organizations live in 2026, spending $500K-2M annually on internal developer platforms that developers ignore in favor of the same Slack-and-ticket workflow they have always used.

The platform teams that do work share specific patterns. They treat their platform as a product. They measure developer satisfaction, not just adoption. They embed cost and security into the golden path rather than bolting them on as an afterthought. And increasingly in 2026, they are integrating AI assistants that make the platform smarter than a static service catalog.

This post identifies the 11 trends separating effective platform engineering from expensive infrastructure bureaucracy in 2026. Each trend includes specific tooling examples, adoption data, and implications for your platform strategy.

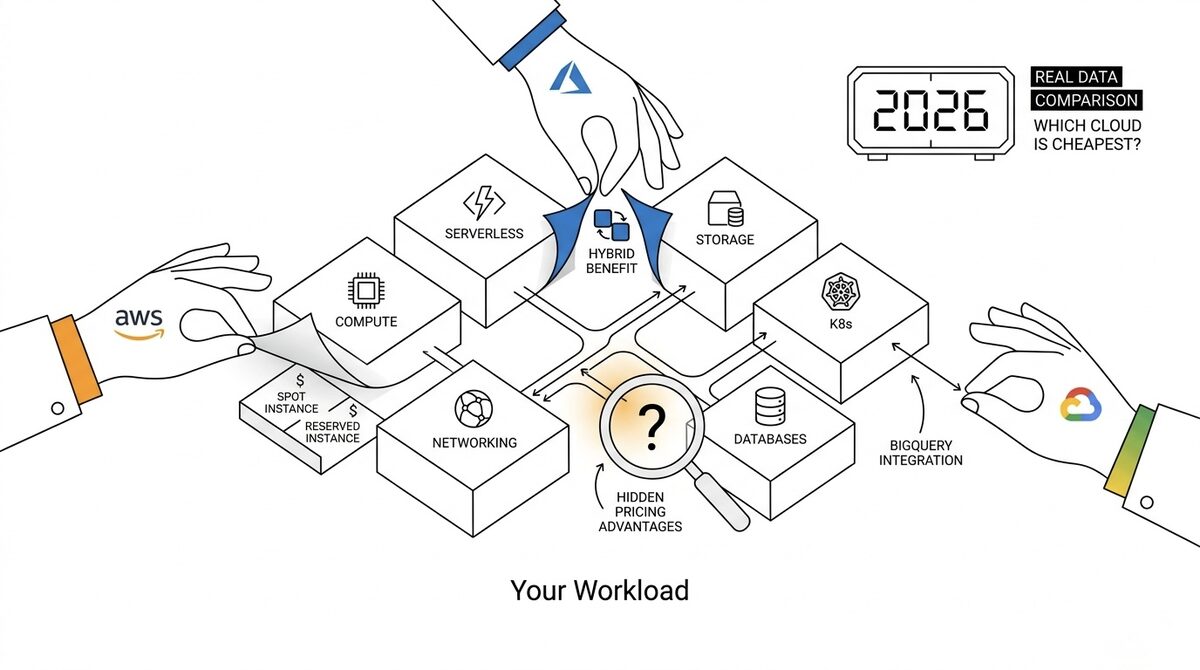

For context on how platform engineering intersects with cloud cost optimization, see our FinOps trends in 2026 post, which covers the financial governance side of this equation.

Trend 1: AI-Native Developer Platforms

This is the single biggest shift in platform engineering since Kubernetes became the common runtime. In 2026, 73% of platform teams have integrated AI assistants into at least one developer workflow (source: CNCF Platform Engineering Survey 2026).

What AI-Native Means in Practice

| Capability | 2024 State | 2026 State |
|---|---|---|
| Code review | Manual pull request reviews | AI pre-review flags issues before human review |
| Incident response | PagerDuty alert → human triage | AI summarizes incident context, suggests remediation |
| Infrastructure provisioning | Developer writes Terraform or clicks portal | Developer describes intent, AI generates infra spec |
| Documentation | Manually maintained (outdated) | AI generates from code, keeps synchronized |
| Onboarding | Read the wiki (good luck) | AI assistant answers contextual questions about the platform |

The Tooling Shift

- IDE-embedded assistants (GitHub Copilot, Cursor, Claude Code) are no longer optional productivity boosters. Platform teams are configuring them with organization-specific context: internal API docs, platform conventions, approved patterns.

- AI-powered service catalogs answer "how do I deploy a new service?" in natural language rather than requiring developers to navigate a portal. Backstage with AI plugin integrations is the most common implementation.

- Automated pull request analysis checks not just code quality but platform compliance: "This deployment does not use the approved database connection pattern" or "This Terraform plan provisions resources outside the allowed cost range."

What Top Teams Do Differently

The best platform teams are not just adding AI as a feature. They are making their platform AI-accessible: every capability the platform offers is available through a conversational interface as well as the traditional UI/API. This means the platform's internal APIs need to be well-documented, versioned, and describable to an LLM.

Implication for your strategy: If your internal developer platform does not have an AI interaction layer by end of 2026, your developers will build their own (worse) version using ChatGPT plus tribal knowledge. Get ahead of this.
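To make "describable to an LLM" concrete, here is a minimal sketch of a capability descriptor that renders a JSON-schema-style tool description. The `Capability` class, the `create_database` capability, and its parameters are hypothetical names chosen for illustration; a real platform would map these onto whatever tool-calling format its assistant expects.

```python
from dataclasses import dataclass, field


@dataclass
class Capability:
    """One platform operation, described well enough for an LLM to call it."""

    name: str
    description: str
    version: str
    parameters: dict[str, str] = field(default_factory=dict)  # param name -> description

    def to_tool_spec(self) -> dict:
        """Render the capability as a JSON-schema-style tool description."""
        return {
            "name": f"{self.name}_{self.version}",
            "description": self.description,
            "parameters": {
                "type": "object",
                "properties": {
                    param: {"type": "string", "description": desc}
                    for param, desc in self.parameters.items()
                },
                "required": sorted(self.parameters),
            },
        }


# Hypothetical capability; the name and parameters are illustrative only.
create_database = Capability(
    name="create_database",
    description="Provision a managed database from the approved catalog.",
    version="v1",
    parameters={
        "engine": "Database engine, for example postgres",
        "size": "T-shirt size: small, medium, or large",
    },
)

spec = create_database.to_tool_spec()
print(spec["name"])  # create_database_v1
```

Keeping the version in the tool name is one way to let the conversational layer and the traditional API evolve together without silently changing what the assistant calls.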

Trend 2: FinOps Guardrails Embedded at Provisioning Time

The most impactful platform engineering trend for CFOs: cost visibility moves from "invoice review after deployment" to "cost estimation before deployment." Platform teams are building cost guardrails directly into the infrastructure provisioning layer.

How It Works

```yaml
# Example: Platform-enforced cost annotation in a service manifest
apiVersion: platform.company.io/v1
kind: ServiceDeployment
metadata:
  name: recommendation-engine
spec:
  compute:
    size: medium  # Platform-defined T-shirt size
    # Platform auto-calculates: ~$180/month on current pricing
    estimatedMonthlyCost: $180
  costBudget: $500  # Fails deployment if estimated cost exceeds budget
  costOwner: team-ml-platform
```
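The budget check the manifest implies can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular platform implements it: the price table is hypothetical (only the medium figure of $180 comes from the manifest), and the spec is passed in as an already-parsed dictionary.

```python
# Hypothetical price table; only the "medium" figure comes from the manifest above.
SIZE_MONTHLY_USD = {"small": 60, "medium": 180, "large": 540}


def parse_usd(value: str) -> int:
    """Turn a string such as "$500" into the integer 500."""
    return int(value.lstrip("$").replace(",", ""))


def check_cost_budget(spec: dict) -> tuple[bool, str]:
    """Return (allowed, message) for the spec section of a ServiceDeployment."""
    estimated = SIZE_MONTHLY_USD[spec["compute"]["size"]]
    budget = parse_usd(spec["costBudget"])
    if estimated > budget:
        return False, f"Blocked: estimated ${estimated}/month exceeds budget ${budget}/month"
    return True, f"OK: estimated ${estimated}/month within budget ${budget}/month"


spec = {"compute": {"size": "medium"}, "costBudget": "$500", "costOwner": "team-ml-platform"}
allowed, message = check_cost_budget(spec)
print(message)  # OK: estimated $180/month within budget $500/month
```

Running the same check with `size: large` against the $500 budget would return `False`, which is the point where the deployment fails.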

The Data

| Metric | Without Platform FinOps | With Platform FinOps |
|---|---|---|
| Average time to discover cost anomaly | 18-26 days (invoice) | 0 days (blocked at deploy) |
| Developer awareness of resource cost | 12% know their service cost | 89% see cost at deploy time |
| Over-provisioning rate | 60-70% | 20-30% |
| Cloud waste reduction | Reactive (manual cleanup) | Preventive (right-sized by default) |

Implementation Patterns

- T-shirt sizing with cost labels. Instead of exposing raw instance types, the platform offers "small/medium/large" with cost ranges attached. Developers choose based on need and budget.

- Cost estimation in CI/CD. Before a Terraform plan applies, the pipeline shows: "This change adds $340/month to your team's cloud spend. Current budget remaining: $2,100/month."

- Automatic idle resource detection. The platform identifies services with zero traffic for 7+ days and proposes scale-to-zero or deletion via automated PR.

- Commitment management. The platform team centrally manages Reserved Instances and Savings Plans, allocating discounts to teams transparently.
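The idle resource pattern above is simple enough to sketch. The example below assumes the platform can already export daily request counts per service (the input format and service names are hypothetical); it only flags candidates and leaves the scale-to-zero or deletion proposal to a separate automated PR step.

```python
IDLE_DAYS = 7  # threshold taken from the pattern described above


def find_idle_services(daily_requests: dict[str, list[int]], idle_days: int = IDLE_DAYS) -> list[str]:
    """Return services whose most recent `idle_days` daily request counts are all zero.

    `daily_requests` maps a service name to request counts per day, oldest first.
    Services with fewer than `idle_days` days of data are skipped, so a freshly
    deployed service is never flagged.
    """
    idle = []
    for service, counts in daily_requests.items():
        recent = counts[-idle_days:]
        if len(recent) == idle_days and sum(recent) == 0:
            idle.append(service)
    return sorted(idle)


# Hypothetical traffic data for three services.
traffic = {
    "legacy-report": [3, 0, 0, 0, 0, 0, 0, 0],
    "checkout": [120] * 8,
    "new-service": [0, 0],
}
print(find_idle_services(traffic))  # ['legacy-report']
```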

Implication: Platform teams that ignore FinOps integration are leaving 30-40% cost optimization on the table. The most effective cloud cost reduction happens at provisioning time, not cleanup time. For a deeper dive into specific tools, see our best cloud cost optimization tools comparison.

Trend 3: Security as a Platform Capability (Not a Gate)

"Shift left" has been a security buzzword for years. In 2026, the mature version is "build in": security is a platform capability that developers consume automatically, not a checklist they must satisfy or a gate they must pass.

What This Looks Like

| Security Domain | Gate Model (Old) | Platform Capability (2026) |
|---|---|---|
| Secrets management | "Submit a ticket to get a secret" | Platform auto-provisions and rotates secrets for new services |
| Network policies | "Security team reviews network config" | Platform generates default-deny policies with service-mesh allowlists |
| Container scanning | "Scan fails CI, developer must fix" | Base images pre-scanned; platform maintains approved image registry |
| Compliance | "Quarterly audit finds violations" | Policy-as-code (OPA/Kyverno) prevents non-compliant deploys |
| Access control | "Request IAM permissions via ticket" | Platform grants least-privilege automatically based on service type |

The Cloud-Native Stack for Platform Security in 2026

- Open Policy Agent (OPA) / Gatekeeper: Enforce organizational policies at admission control

- Kyverno: Kubernetes-native policy engine (simpler than OPA for K8s-only environments)

- Sigstore / Cosign: Supply chain security (image signing and verification)

- Falco: Runtime threat detection as a platform service

- cert-manager: Automatic TLS certificate provisioning and rotation

- External Secrets Operator: Unified secrets interface across Vault, AWS SM, GCP SM

The key insight: when security is a gate, developers find workarounds. When security is a capability the platform provides transparently, compliance rates hit 95%+ because the path of least resistance is also the secure path.
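The logic behind an admission policy is easy to show in plain Python. The sketch below is not OPA or Kyverno syntax; it only illustrates the kind of rule those engines would enforce at admission time. The registry host and label names are hypothetical.

```python
APPROVED_REGISTRY = "registry.platform.example/"  # hypothetical registry host
REQUIRED_LABELS = {"cost-owner", "team"}  # hypothetical label names


def admission_violations(deployment: dict) -> list[str]:
    """Return the policy violations for a deployment; an empty list means admit."""
    violations = []
    for image in deployment.get("images", []):
        if not image.startswith(APPROVED_REGISTRY):
            violations.append(f"image not from approved registry: {image}")
    missing = REQUIRED_LABELS - set(deployment.get("labels", {}))
    for label in sorted(missing):
        violations.append(f"missing required label: {label}")
    return violations


compliant = {
    "images": ["registry.platform.example/base/python:3.12"],
    "labels": {"team": "ml-platform", "cost-owner": "team-ml-platform"},
}
non_compliant = {"images": ["docker.io/library/nginx:latest"], "labels": {"team": "web"}}

print(admission_violations(compliant))      # []
print(admission_violations(non_compliant))  # two violations: registry and cost-owner label
```

Because the golden-path templates would already produce the compliant shape, most developers never see a violation, which is what makes the secure path the path of least resistance.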

Trend 4: Platform-as-Product Maturity

The defining characteristic of successful platform teams in 2026 is that they operate like product teams, not infrastructure teams. This is not a new idea (Team Topologies introduced it in 2019), but 2026 is when the practices became mainstream.

Product Metrics for Platforms

| Metric | What It Measures | Target (Mature Teams) |
|---|---|---|
| Developer NPS | Satisfaction with platform experience | 40+ (on par with world-class products) |
| Time-to-first-deploy | How quickly a new developer ships their first change | Under 1 day |
| Golden path adoption | % of services using platform-recommended patterns | 80%+ |
| Self-service ratio | % of infra requests fulfilled without ticket | 90%+ |
| Mean time to provision | Time from "I need a database" to "it is running" | Under 15 minutes |
| Cognitive load score | Developer-reported complexity of platform interaction | Decreasing quarter-over-quarter |
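Two of these metrics reduce to simple arithmetic. The sketch below uses the standard NPS definition (percentage of promoters scoring 9-10 minus percentage of detractors scoring 0-6); the survey answers and request counts are made-up sample data.

```python
def net_promoter_score(scores: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), from 0-10 survey answers."""
    if not scores:
        raise ValueError("no survey responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def self_service_ratio(self_served: int, via_ticket: int) -> float:
    """Percentage of infrastructure requests fulfilled without a ticket."""
    total = self_served + via_ticket
    return 100 * self_served / total if total else 0.0


# Made-up survey answers: 6 promoters, 2 passives, 2 detractors.
survey = [10, 9, 9, 8, 7, 10, 6, 9, 3, 10]
print(net_promoter_score(survey))   # 40.0
print(self_service_ratio(450, 50))  # 90.0
```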

What Product Thinking Changes

Internal marketing. Yes, actually marketing your platform internally. The best platform teams run launch announcements, demo sessions, onboarding workshops, and even internal "release notes" newsletters. Developers cannot adopt what they do not know exists.

User research. Quarterly developer surveys, shadowing sessions (watching developers use the platform), and feedback channels that get responses within hours. Platform teams that skip user research build what they think developers need rather than what developers actually struggle with.

Roadmap transparency. Published quarterly roadmaps visible to all engineering teams. "We are building X next quarter based on feedback Y. If you need Z, here is the workaround until Q3." This builds trust and reduces shadow platform proliferation.

Deprecation management. Treating platform changes like API versioning: announce deprecation 6 months early, provide migration tooling, and never break consumers without warning.

Trend 5: Composable Platforms Over Monolithic IDPs

The "buy one platform that does everything" approach has failed for most organizations. In 2026, the winning pattern is composable: assemble your IDP from best-of-breed components connected by thin integration layers.

The Composable Stack

| Layer | Purpose | Common Tools (2026) |
|---|---|---|
| Developer Portal | Service catalog, docs, API discovery | Backstage, Port, Cortex |
| Infrastructure Provisioning | Self-service infra | Crossplane, Terraform + Atlantis, Pulumi |
| Delivery | Deploy and promote | ArgoCD, Flux, Spinnaker |
| Runtime | Container orchestration | Kubernetes (EKS, GKE, AKS) |
| Observability | Metrics, logs, traces | Grafana stack, Datadog, Honeycomb |
| Security | Policy, scanning, secrets | OPA, Kyverno, Vault, Trivy |
| Cost | FinOps integration | Kubecost, OpenCost, Infracost |
| AI | Developer assistance | Custom integrations, Copilot |

Why Composable Wins

- No vendor lock-in. Replace any component without rewriting the platform.

- Best-of-breed performance. ArgoCD is better at GitOps than any all-in-one platform's built-in deployment feature.

- Incremental adoption. Start with one layer (portal) and add capabilities over time.

- Team expertise. Your team probably already knows Terraform and ArgoCD. Building on familiar tools accelerates delivery.

The Backstage Ecosystem in 2026

Backstage (the open-source developer portal created at Spotify) remains the most popular portal layer, and its ecosystem has matured significantly:

- 200+ community plugins for integrating every tool in your stack

- Backstage Software Templates for golden-path scaffolding

- TechDocs for auto-generated documentation from markdown

- Backstage Search unified across services, docs, APIs, and teams

- Alternatives gaining traction: Port (SaaS, faster setup), Cortex (SaaS, scorecards-focused), OpsLevel (SaaS, service maturity)

The trend: organizations under 200 engineers increasingly choose SaaS portals (Port, Cortex) over self-hosting Backstage, because the maintenance burden of running Backstage itself has become a platform-team distraction.

Trend 6: Platform Engineering Extends to Data and ML

In 2026, platform engineering is no longer exclusively for application developers. Data engineers, ML engineers, and analysts are the next consumers of platform capabilities.

Data Platform Engineering

| Capability | Traditional | Platform-Enabled (2026) |
|---|---|---|
| Provision a database | Ticket to DBA team (days) | Self-service from approved catalog (minutes) |
| Create a data pipeline | Custom Airflow DAGs per team | Templated pipeline patterns from portal |
| Access production data | Manual permission grants | Policy-based access with automatic PII masking |
| Monitor data quality | Team-specific checks (or none) | Platform-provided data quality framework |
| Manage ML experiments | Each team chooses their own tooling | Unified experiment tracking as platform service |

Why This Matters Now

The same fragmentation problem that hit application development in 2018-2020 (every team with their own deployment scripts, monitoring stack, and on-call process) is now hitting data and ML teams. Platform engineering provides the same solution: standardize the how, let teams focus on the what.

Organizations that extended their platform to data/ML workflows report 40% faster time-to-production for ML models and a 60% reduction in "infrastructure friction" reported by data engineers.

Trend 7: Golden Paths That Are Actually Golden

The concept of golden paths (opinionated, platform-recommended ways to accomplish common tasks) is not new. What has changed in 2026 is that platform teams have learned what makes golden paths actually get adopted versus ignored.

Why Most Golden Paths Fail

- Too restrictive. Developers feel constrained and find workarounds.

- Too outdated. The golden path uses patterns from 18 months ago because nobody maintains it.

- No escape hatch. When the golden path does not fit a legitimate use case, there is no supported alternative.

- No migration tooling. Existing services cannot easily move onto the golden path.

What 2026 Golden Paths Look Like

- Opinionated defaults, optional overrides. "Here is how we recommend deploying a service. If you need to deviate, here is the supported escape hatch with clear tradeoffs documented."

- Continuously updated. Golden paths are treated as living code, updated quarterly based on lessons learned and new capabilities.

- Migration-first design. Every new golden path includes automated migration tooling for existing services. A golden path nobody can adopt is just documentation.

- Observable. The platform measures golden path adoption rates and investigates why teams deviate. Low adoption is a product failure, not a developer failure.
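"Opinionated defaults, optional overrides" and "observable" fit together naturally in code. In this sketch, the default values and the set of overridable keys are hypothetical; the idea is that a service config starts from the golden path, may deviate only through supported escape hatches, and every deviation is recorded so the platform team can investigate it.

```python
# Hypothetical golden-path defaults and the keys teams are allowed to override.
GOLDEN_PATH_DEFAULTS = {
    "runtime": "kubernetes",
    "replicas": 2,
    "database": "postgres",
    "ingress": "platform-gateway",
}
OVERRIDABLE = {"replicas", "database"}  # the supported escape hatches


def resolve_config(overrides: dict) -> tuple[dict, list[str]]:
    """Merge overrides onto the defaults and report which keys deviate."""
    unsupported = sorted(set(overrides) - OVERRIDABLE)
    if unsupported:
        raise ValueError(f"no supported escape hatch for: {', '.join(unsupported)}")
    config = {**GOLDEN_PATH_DEFAULTS, **overrides}
    deviations = sorted(k for k, v in overrides.items() if v != GOLDEN_PATH_DEFAULTS[k])
    return config, deviations


config, deviations = resolve_config({"replicas": 5})
print(config["replicas"], deviations)  # 5 ['replicas']
```

Aggregating `deviations` across services gives the adoption signal: a key that many teams override is a hint that the default, not the developers, needs to change.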

Trend 8: Platform Engineering Metrics That Matter

The industry has moved beyond "we shipped a portal" toward measuring actual outcomes. The metrics framework maturing in 2026:

The Four Pillars of Platform Effectiveness

| Pillar | Metrics | Why It Matters |
|---|---|---|
| Developer Experience | NPS, cognitive load score, time-to-first-deploy | Measures whether developers actually like using the platform |
| Delivery Performance | Deploy frequency, lead time, change failure rate, MTTR | DORA metrics prove the platform accelerates delivery |
| Efficiency | Self-service ratio, golden path adoption, cost per service | Measures operational leverage and cost optimization |
| Reliability | Platform uptime, incident rate from platform issues, blast radius | Ensures the platform itself is not causing outages |
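For the Delivery Performance pillar, three of the four DORA metrics can be derived from deploy records alone. The sketch below assumes a hypothetical `Deploy` record with a commit time, a deploy time, and a failure flag; MTTR is left out because it needs incident data rather than deploy data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deploy:
    committed_at: datetime
    deployed_at: datetime
    failed: bool  # True if the change caused a production failure


def delivery_metrics(deploys: list[Deploy], period_days: int) -> dict:
    """Compute deploy frequency, median lead time, and change failure rate."""
    if not deploys:
        raise ValueError("no deploys in period")
    lead_seconds = [(d.deployed_at - d.committed_at).total_seconds() for d in deploys]
    return {
        "deploys_per_day": len(deploys) / period_days,
        "median_lead_time_hours": median(lead_seconds) / 3600,
        "change_failure_rate_pct": 100 * sum(d.failed for d in deploys) / len(deploys),
    }


# Made-up sample: four deploys over two days with lead times of 2, 4, 6, and 8 hours.
start = datetime(2026, 1, 5, 9, 0)
sample = [
    Deploy(start, start + timedelta(hours=2), failed=False),
    Deploy(start, start + timedelta(hours=4), failed=False),
    Deploy(start, start + timedelta(hours=6), failed=True),
    Deploy(start, start + timedelta(hours=8), failed=False),
]
metrics = delivery_metrics(sample, period_days=2)
print(metrics)
```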

Anti-Metrics (What NOT to Measure)

- Number of services in the catalog. Quantity without quality is vanity.

- Portal page views. Traffic does not equal value.

- Tickets deflected. If your baseline is "lots of tickets," you are measuring improvement from a bad starting point.

- Features shipped. Platform teams shipping fast but not measuring adoption are building ghost features.

Trend 9: The Rise of Platform Engineering for Regulated Industries

Financial services, healthcare, and government organizations in 2026 are adopting platform engineering specifically to solve their compliance automation challenge. The insight: if the platform enforces compliance by default, audits become trivial.

Compliance-as-Code Through Platforms

| Regulation | Platform Implementation |
|---|---|
| SOC 2 | Audit logging auto-enabled for all services via platform |
| HIPAA | PHI encrypted at rest and in transit, enforced by platform storage and network policies |
| PCI DSS | Payment services auto-deployed in isolated, hardened runtime |
| GDPR | Data residency constraints enforced at provisioning (EU data stays in EU) |
| FedRAMP | Only approved images from platform registry can deploy to production |

The regulatory burden that historically slowed engineering teams becomes a non-issue when the platform guarantees compliance by construction. Developers do not need to think about SOC 2 logging because the platform provides it automatically. This alone justifies the platform investment for many regulated organizations.

Trend 10: Platform Team Structures That Work

After years of experimentation, clear organizational patterns have emerged for effective platform teams in 2026:

The Three Models

| Model | Team Size | Org Size | Description |
|---|---|---|---|
| Embedded | 2-4 engineers | 20-100 engineers | Platform responsibility shared with senior engineers who also ship product |
| Dedicated | 5-15 engineers | 100-500 engineers | Standalone platform team with product manager |
| Federated | 15-50+ engineers | 500+ engineers | Central platform team + domain-specific platform engineers in each vertical |

The Anti-Pattern: The Platform Team That Became a Bottleneck

The number one failure mode: a platform team that takes on so much scope that it becomes the new "infrastructure ticket queue." Signs you are heading here:

- 80%+ of the platform team's sprint goes to interrupt-driven requests

- Time-to-deliver new platform capabilities exceeds 3 months

- Developers bypass the platform because it is too slow to get what they need

- Platform team burnout and attrition exceed the organizational average

The fix: Ruthless scoping. A platform team should own the most leveraged 20% of capabilities (the ones that, if done well, make 80% of teams faster). Everything else is either self-service tooling or a supported-but-not-owned community contribution model.

Trend 11: Platform Engineering ROI Becomes Measurable

The hardest question in platform engineering has always been "what is the ROI?" In 2026, organizations finally have enough data to answer it:

Measured ROI From Mature Platform Teams

| Metric | Before Platform | After Platform (12+ months) | Source |
|---|---|---|---|
| Deploy frequency | Weekly | Multiple per day | DORA + internal data |
| Time for a new engineer to become productive | 2-4 weeks | 2-5 days | Internal surveys |
| Infrastructure provisioning time | 3-14 days | 15 minutes | Portal analytics |
| Incident MTTR | 45-90 minutes | 15-30 minutes | Incident management data |
| Cloud cost per engineer | $2,500-4,000/month | $1,500-2,500/month | FinOps data |
| Security compliance rate | 60-75% | 95%+ | Audit results |

The ROI Calculation

A typical platform team of 8 engineers (fully loaded cost: $2M/year) supporting 200 application engineers can demonstrate:

- Developer productivity gain: 200 engineers x 1 hour/day saved = 200 hours/day = 50,000 hours/year (at 250 working days) = $5M value (at $100/hour loaded cost)

- Cloud cost reduction: 20-40% savings on $500K+/month spend = $1.2-2.4M/year

- Security/compliance: Avoided audit failures, reduced incident response time = hard to quantify but real

- Attrition reduction: Better DX correlates with lower attrition (2-5% improvement = $200K-500K saved in hiring)

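Total measurable ROI: the quantified items above sum to $6.4-7.9M per year against a $2M team cost, or roughly 3-4x the platform team's cost within 12-18 months of maturity, before counting the security and compliance benefits.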
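The arithmetic is worth checking against your own numbers. The sketch below reproduces the calculation using the figures from this section as defaults; the 250 working days per year is an assumption implied by the 200 hours/day to 50,000 hours/year conversion, and security and compliance benefits are left out because they are not quantified here.

```python
WORKING_DAYS_PER_YEAR = 250  # assumption: implied by 200 hours/day -> 50,000 hours/year


def roi_range(
    engineers: int = 200,
    hours_saved_per_day: int = 1,
    loaded_rate_usd: int = 100,
    monthly_cloud_spend_usd: int = 500_000,
    cloud_savings_pct: tuple[int, int] = (20, 40),
    attrition_savings_usd: tuple[int, int] = (200_000, 500_000),
    platform_cost_usd: int = 2_000_000,
) -> tuple[float, float]:
    """Return the (low, high) annual value as a multiple of platform team cost."""
    productivity = engineers * hours_saved_per_day * WORKING_DAYS_PER_YEAR * loaded_rate_usd
    annual_spend = monthly_cloud_spend_usd * 12
    totals = [
        productivity + annual_spend * pct // 100 + attrition
        for pct, attrition in zip(cloud_savings_pct, attrition_savings_usd)
    ]
    return totals[0] / platform_cost_usd, totals[1] / platform_cost_usd


low, high = roi_range()
print(f"{low:.2f}x to {high:.2f}x")  # 3.20x to 3.95x
```

With these inputs the quantified benefits come to $6.4M at the low end and $7.9M at the high end. Halving the hours saved per engineer drops the low end to about 2x, which shows how sensitive the result is to the productivity estimate.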

The Bottom Line

Platform engineering in 2026 is not about building a portal. It is about building a product that makes your entire engineering organization faster, cheaper, and more secure by construction. The trends that matter: AI-native workflows, FinOps at provisioning time, security as a capability, product thinking with real metrics, and composable architectures that evolve with your needs.

The organizations winning are those that started their platform journey 18-24 months ago and are now seeing compounding returns. If you are starting now, the good news: the patterns are well-established, the tooling is mature, and you can learn from others' expensive mistakes.

The organizations losing are those with 10-person platform teams that built a portal nobody uses, measure adoption instead of satisfaction, and have not integrated cost or security into the developer workflow.

If your cloud costs are growing faster than your engineering output, platform engineering with embedded FinOps guardrails is the structural fix. Our cloud cost optimization team works with platform teams to embed cost visibility and optimization directly into the developer workflow, typically reducing cloud waste by 30-50% within 90 days while improving developer experience rather than restricting it.

Further reading: