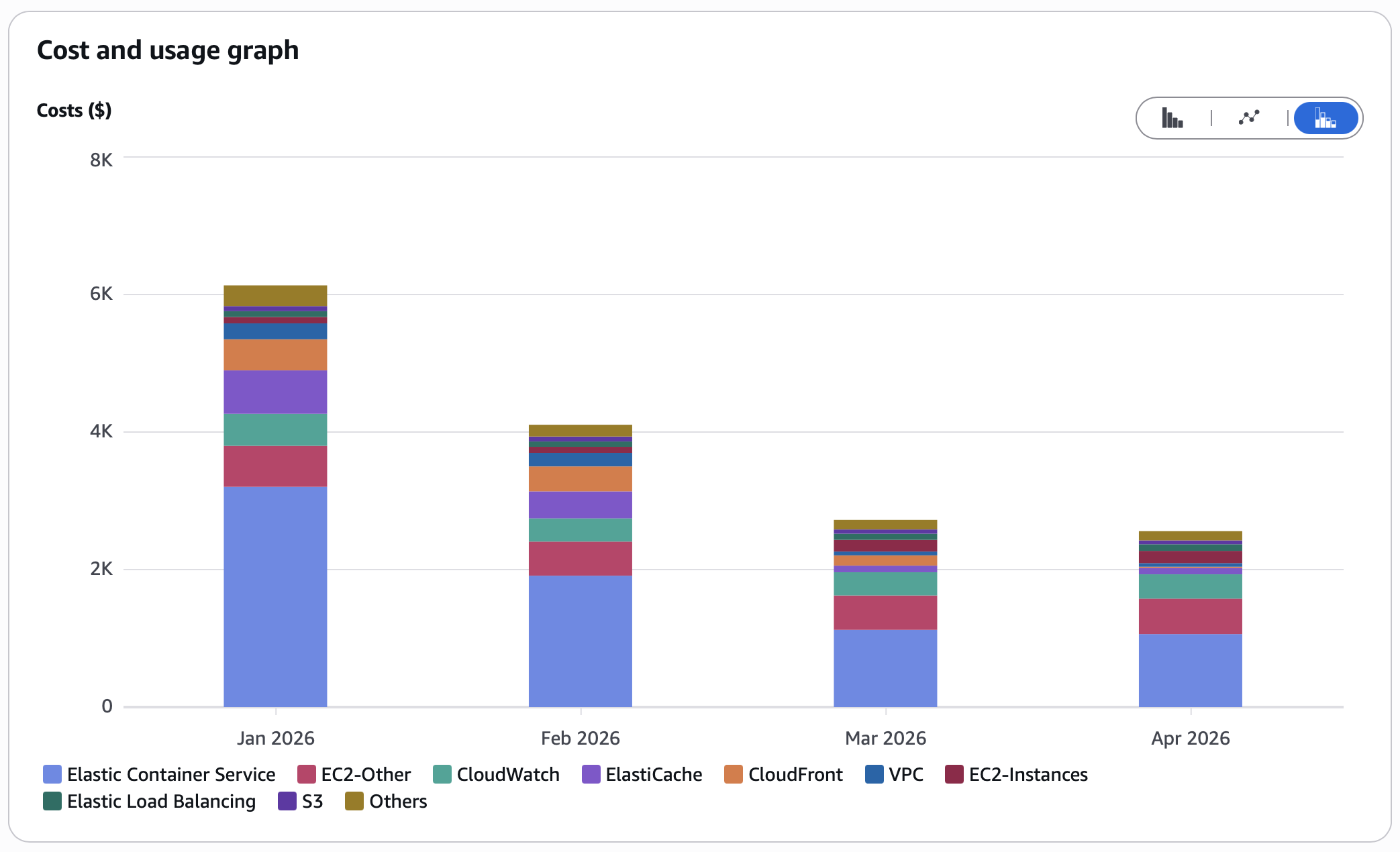

The Problem: A $6.1K/Month AWS Bill That Grew Every Quarter

This growth-stage SaaS company came to us with a familiar story: their AWS bill was climbing 15-20% every quarter while their actual traffic was flat. Engineering leadership knew they were overspending but couldn't identify where the waste was hiding without dedicating engineering time they didn't have.

Their infrastructure had been built by a fast-moving team optimizing for reliability over cost. Every service was oversized "just in case." Every instance ran 24/7 regardless of actual usage patterns. Every feature launch added resources that never got cleaned up after the initial spike.

The $6.1K/month bill broke down into what looked like reasonable individual line items. No single service screamed "waste." But in aggregate, they were paying 2-3x what the workload required.

What We Found: 6 Areas of Hidden Waste

1. ElastiCache: Paying for 5x the Memory They Actually Used ($632 → $92)

The caching layer had been provisioned during a period of rapid growth. Actual memory utilization was 18% on average and 34% at peak, so most of the provisioned capacity was never touched.

Our approach: We analyzed two weeks of peak usage patterns and applied our proprietary rightsizing methodology to match the caching layer precisely to real-world demand. Monthly cost dropped from $632 to $92 with zero impact on cache hit rates or latency.

Risk mitigation: We validated performance metrics in parallel before cutting over, confirming hit rates remained above 99.2% and P99 latency stayed under 2ms.
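To make the sizing logic concrete, here is a minimal sketch of headroom-based rightsizing. It is illustrative only and is not the methodology used in this engagement: the 100 GiB cluster size and the 70% target utilization are assumptions, and the function name is hypothetical. Note that cost does not scale linearly with memory, since node type and replica count also change the bill.

```python
def rightsized_capacity_gib(provisioned_gib: float,
                            peak_utilization: float,
                            target_utilization: float = 0.70) -> float:
    """Return the capacity at which the observed peak usage would sit
    at target_utilization (assumed headroom policy, not a fixed rule)."""
    if not 0 < peak_utilization <= 1 or not 0 < target_utilization <= 1:
        raise ValueError("utilization values must be in (0, 1]")
    peak_used_gib = provisioned_gib * peak_utilization
    return peak_used_gib / target_utilization


# Hypothetical 100 GiB cluster with the 34% peak utilization reported above.
needed = rightsized_capacity_gib(100.0, 0.34)
print(f"{needed:.1f} GiB")  # 48.6 GiB
```

Sizing against peak rather than average is the conservative choice: the 18% average would suggest an even smaller cluster, but it would leave no room for the busiest periods.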

2. ECS: Always-On Compute for Part-Time Workloads ($3.2K → $1K)

The largest waste was in compute tasks configured to run 24/7 for workloads that only needed processing during business hours. Batch processors, report generators, and internal tools were all running around the clock.

Our approach: We implemented intelligent scheduling policies that precisely match compute capacity to actual demand patterns. Workloads now run exactly when needed at exactly the capacity required.

Monthly ECS spend dropped from $3.2K to $1K without any change to application behavior or availability during business hours.
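The idea behind schedule-based scaling can be sketched in a few lines. This is an illustration under assumed values, not the policies deployed for this client: the business-hours window (weekdays, 08:00 to 20:00 local time), the task count, and the function names are all hypothetical. In practice a rule like this would typically be expressed as scheduled scaling actions on the ECS service rather than as application code.

```python
from datetime import datetime

BUSINESS_DAYS = range(0, 5)    # Monday=0 .. Friday=4 (assumed window)
BUSINESS_HOURS = range(8, 20)  # 08:00 through 19:59 local time (assumed)


def desired_task_count(now: datetime, business_count: int = 4) -> int:
    """Run business_count tasks inside the window, zero outside it."""
    if now.weekday() in BUSINESS_DAYS and now.hour in BUSINESS_HOURS:
        return business_count
    return 0


def weekly_running_fraction() -> float:
    """Share of the week's hours during which tasks are running."""
    return len(BUSINESS_DAYS) * len(BUSINESS_HOURS) / (7 * 24)


print(desired_task_count(datetime(2024, 1, 3, 10)))  # Wednesday 10:00 -> 4
print(desired_task_count(datetime(2024, 1, 6, 10)))  # Saturday 10:00 -> 0
print(f"{weekly_running_fraction():.1%}")            # 35.7%
```

Under this assumed window, a part-time workload runs for about a third of the week, which is why always-on scheduling is so expensive for batch jobs and internal tools.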

3. CloudFront: A CDN That Was Costing Money Instead of Saving It ($450+ → $17)

This was the most shocking finding. The CDN had a 5% cache hit ratio. 95% of requests were passing through to origin servers, meaning CloudFront was adding cost and latency rather than reducing it.

The root causes were configuration issues that had accumulated over multiple product launches. The CDN was effectively a passthrough proxy billing them $450+/month for nothing.

Our approach: We resolved the misconfigurations using our CDN optimization playbook. Cache hit ratio went from 5% to 94%. Monthly cost dropped from $450+ to $17 while actually improving end-user latency by 40%.
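A quick back-of-the-envelope calculation shows why the hit ratio matters so much. The request volume below is a hypothetical round number, not client data; only the 5% and 94% hit ratios come from this engagement.

```python
def origin_requests(total_requests: int, cache_hit_ratio: float) -> int:
    """Requests that miss the cache and are forwarded to the origin."""
    return round(total_requests * (1 - cache_hit_ratio))


total = 1_000_000  # hypothetical monthly request count

before = origin_requests(total, 0.05)  # 950000 requests reach the origin
after = origin_requests(total, 0.94)   # 60000 requests reach the origin
reduction = 1 - after / before

print(before, after, f"{reduction:.1%}")  # 950000 60000 93.7%
```

At the same traffic level, the origin handles roughly 94% fewer requests, which reduces both origin load and the data transferred between the origin and the CDN.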

4. RDS: Oversized Database for a Modest Workload ($100 → $39)

The production database was provisioned for a workload 3-4x larger than what it actually served. CPU never exceeded 12% and connections used less than 25% of available capacity.

Our approach: We performed a controlled migration to a right-sized instance during a maintenance window. Monthly cost: $100 → $39 with no measurable change in query performance.

5. VPC and Networking: Silent Drain from Idle Resources ($72 → $0)

We identified networking resources that had been provisioned for infrastructure that no longer existed. Individually the costs were small, but at $72/month combined they added up to $864/year in pure waste with zero functional purpose.

6. Redundant SaaS Subscriptions ($125+/month)

During the infrastructure audit, we discovered overlapping tools: duplicate monitoring platforms, unused seats, and services replicating functionality already available natively. Cancelling the redundancies saved an additional $125+/month ($1,500/year).

The Results: $43,200/Year in Sustained Savings

| Service | Before (monthly) | After (monthly) | Monthly Savings | Annual Savings |
|---|---|---|---|---|
| ElastiCache | $632 | $92 | $540 | $6,480 |
| ECS Fargate | $3,200 | $1,000 | $2,200 | $26,400 |
| CloudFront | $450+ | $17 | $433 | $5,196 |
| RDS | $100 | $39 | $61 | $732 |
| VPC/Networking | $72 | $0 | $72 | $864 |
| SaaS subscriptions | $125+ | $0 | $125 | $1,500 |
| Total | $6,100+ | $2,500 | $3,600+ | $43,200+ |
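For readers who want to check the arithmetic, the per-service rows can be recomputed as follows. The figures are copied from the table, with "$450+" and "$125+" treated as their lower bounds. The six itemized rows sum to less than the Total row; our reading, stated here as an assumption, is that the Total row covers the full bill, including line items not broken out above.

```python
# (before, after) monthly cost in USD, taken from the itemized table rows.
rows = {
    "ElastiCache": (632, 92),
    "ECS Fargate": (3200, 1000),
    "CloudFront": (450, 17),        # "$450+" treated as 450
    "RDS": (100, 39),
    "VPC/Networking": (72, 0),
    "SaaS subscriptions": (125, 0),  # "$125+" treated as 125
}

monthly_savings = {name: before - after for name, (before, after) in rows.items()}
itemized_monthly = sum(monthly_savings.values())
itemized_annual = itemized_monthly * 12

for name, saved in monthly_savings.items():
    print(f"{name}: ${saved}/month, ${saved * 12}/year")
print(f"Itemized total: ${itemized_monthly}/month, ${itemized_annual}/year")
# Itemized total: $3431/month, $41172/year
```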

The optimizations were completed in 4 weeks. The engagement paid for itself in the first month. Every month since has been pure savings.

What Made This Work

We did not guess. Every optimization was backed by real utilization data:

- Comprehensive monitoring analysis across all services

- Detailed cost attribution using AWS Cost Explorer

- Access pattern analysis to understand actual demand

- Performance validation before and after every change
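As an illustration of the cost attribution step, the sketch below groups monthly spend by service using the Cost Explorer `get_cost_and_usage` operation. It is a simplified example, not the tooling used in this engagement: the function name is hypothetical, the caller is assumed to pass in a Cost Explorer client (for example one created with `boto3.client("ce")`), and pagination via `NextPageToken` is deliberately left out.

```python
from collections import defaultdict


def monthly_cost_by_service(ce_client, start: str, end: str) -> dict[str, float]:
    """Sum unblended cost per service between start (inclusive) and
    end (exclusive), both given as YYYY-MM-DD strings."""
    response = ce_client.get_cost_and_usage(
        TimePeriod={"Start": start, "End": end},
        Granularity="MONTHLY",
        Metrics=["UnblendedCost"],
        GroupBy=[{"Type": "DIMENSION", "Key": "SERVICE"}],
    )
    totals: dict[str, float] = defaultdict(float)
    for period in response["ResultsByTime"]:
        for group in period["Groups"]:
            service = group["Keys"][0]
            amount = group["Metrics"]["UnblendedCost"]["Amount"]
            totals[service] += float(amount)
    # Largest spenders first, so the biggest line items are reviewed first.
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))
```

Sorting by spend keeps attention on the services where rightsizing is likely to pay off most, which in this engagement were compute and caching.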

No optimization went live without a rollback plan. Every change was deployed incrementally with monitoring gates. The client's engineering team reviewed and approved each change before execution.

Could Your AWS Bill Look Like This?

If your monthly AWS spend has grown faster than your traffic, you likely have similar patterns hiding in plain sight. The most common waste we find across clients:

- 60-80% of compute spend goes to resources sized for peak but running at average

- 70%+ of caching spend is on capacity that never gets used

- CDN misconfigurations often mean you pay for a service while getting no benefit from it

- Database instances are almost always significantly larger than the workload requires

Our cloud cost optimization service identifies and implements these optimizations with a 30% savings guarantee. If we don't find at least 30% in savings, you don't pay.

Get your free Cloud Waste Assessment and we'll show you exactly where your bill is bloated within one week.