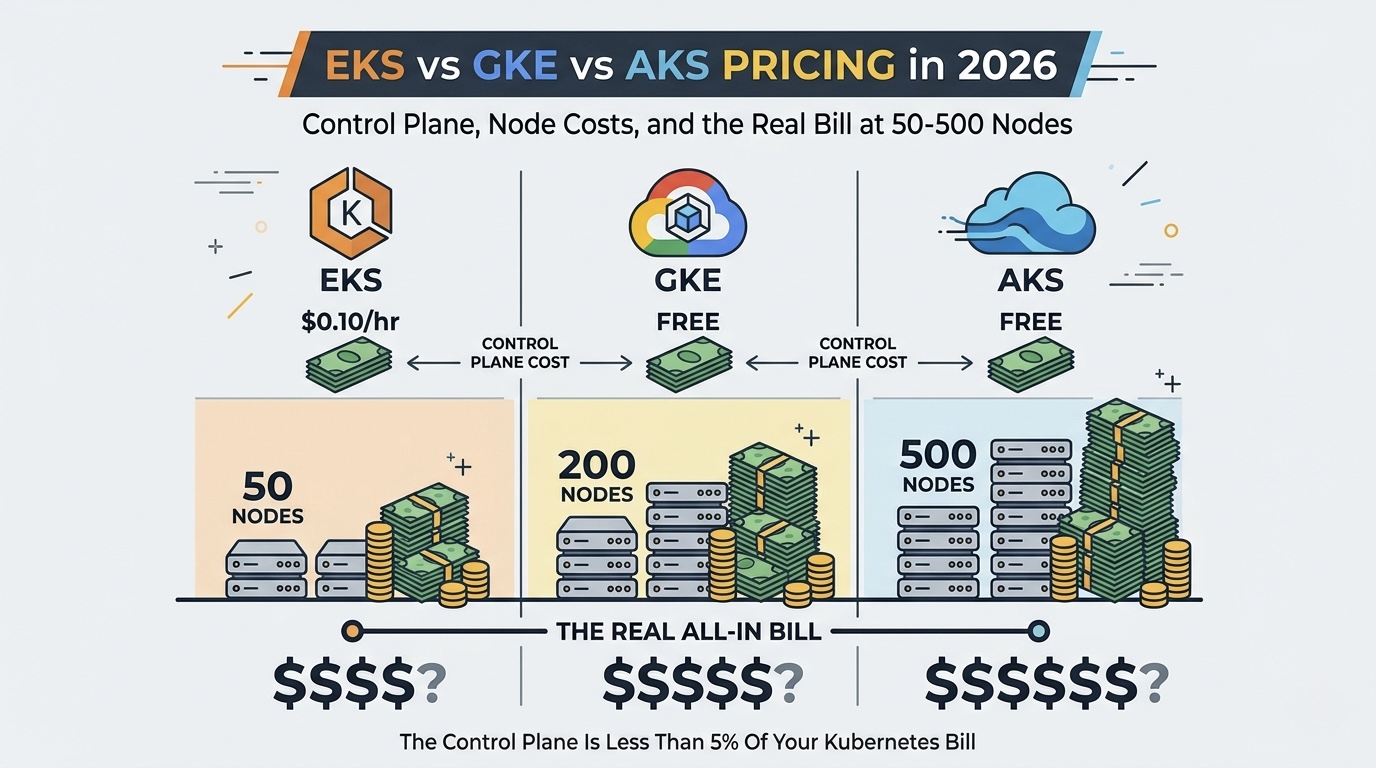

The Control Plane Fee Is a Distraction. Your Real Kubernetes Bill Is Hiding Elsewhere.

Every time someone asks "which managed Kubernetes is cheapest?" the conversation immediately goes to control plane pricing. EKS charges $73/month. GKE has a free tier. AKS is free. Decision made, right?

Not even close. The control plane represents less than 5% of your total Kubernetes spend. The other 95% comes from compute nodes, persistent storage, load balancers, NAT gateways, inter-zone traffic, container registry pulls, and logging. These "secondary" costs vary wildly between providers and are where teams actually overspend by 30-60%.

We have optimized Kubernetes clusters at LeanOps across all three platforms, and the provider that wins on control plane pricing frequently loses on total cost of ownership. A $73/month EKS cluster can be cheaper all-in than a "free" AKS cluster if AWS gives you better Spot availability for your instance types, or if your workloads are egress-heavy and hit Azure's higher cross-zone transfer fees.

This post models the complete cost stack for EKS, GKE, and AKS at three production scales so you can make a decision based on real numbers, not marketing pages.

Control Plane Pricing: The Simple Part

Let us get this out of the way first, since it is the only pricing component that is straightforward.

| Provider | Service | Control Plane Cost | Notes |

|---|---|---|---|

| AWS | EKS | $0.10/hour ($73/month) | Per cluster, no free tier |

| AWS | EKS on Fargate | $0.10/hour + Fargate pricing | Same control plane fee |

| GCP | GKE Standard (zonal) | Free | One free zonal cluster per account |

| GCP | GKE Standard (regional) | $0.10/hour ($73/month) | Multi-zone HA control plane |

| GCP | GKE Autopilot | $0.10/hour ($73/month) | Per cluster |

| Azure | AKS (Free tier) | Free | No SLA on control plane |

| Azure | AKS (Standard tier) | $0.10/hour ($73/month) | 99.95% SLA, mission-critical |

| Azure | AKS (Premium tier) | $0.10/hour ($73/month) | Long-term support + mission-critical |

The pricing convergence is striking: everyone effectively charges $73/month for production-grade control planes. GKE's free zonal cluster and AKS's free tier are for development only (no HA, limited SLA). For production workloads requiring multi-zone availability, all three providers land at $73/month per cluster.

The practical takeaway: Do not choose your Kubernetes platform based on control plane cost. At 50+ nodes, the $73/month is noise. Choose based on compute, networking, operational costs, and team expertise.

Compute Node Pricing: Where 80% of Your Bill Lives

Compute is the dominant cost in any Kubernetes deployment. The comparison here is really about the underlying VM pricing, Spot/preemptible discounts, and committed-use savings plans.

On-Demand Compute Comparison (General Purpose, 2026)

| Instance Type | Provider | vCPU | RAM | On-Demand/hr | Monthly |

|---|---|---|---|---|---|

| m7i.xlarge | AWS | 4 | 16 GB | $0.2016 | $147.17 |

| n2-standard-4 | GCP | 4 | 16 GB | $0.1942 | $141.77 |

| Standard_D4s_v5 | Azure | 4 | 16 GB | $0.1920 | $140.16 |

| m7i.2xlarge | AWS | 8 | 32 GB | $0.4032 | $294.34 |

| n2-standard-8 | GCP | 8 | 32 GB | $0.3884 | $283.53 |

| Standard_D8s_v5 | Azure | 8 | 32 GB | $0.3840 | $280.32 |

| m7i.4xlarge | AWS | 16 | 64 GB | $0.8064 | $588.67 |

| n2-standard-16 | GCP | 16 | 64 GB | $0.7769 | $567.14 |

| Standard_D16s_v5 | Azure | 16 | 64 GB | $0.7680 | $560.64 |

At the on-demand level, Azure is about 5% cheaper than AWS for equivalent general-purpose VMs, with GCP in between at about 4% cheaper. But nobody runs a production Kubernetes cluster on 100% on-demand pricing. The real competition is in discounting mechanisms.
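As a quick check on the table above, the monthly column can be reproduced from the hourly rates. This is a minimal sketch that assumes a 730-hour month (8,760 hours / 12), which is what the table's figures appear to use; the function names are illustrative only.

```python
HOURS_PER_MONTH = 730  # assumption: 8,760 hours per year / 12


def monthly_cost(hourly_rate: float) -> float:
    """Convert an hourly on-demand rate to a monthly figure."""
    return round(hourly_rate * HOURS_PER_MONTH, 2)


def percent_cheaper(rate: float, baseline: float) -> float:
    """How much cheaper `rate` is than `baseline`, in percent."""
    return round((1 - rate / baseline) * 100, 1)


# Hourly rates copied from the 4 vCPU / 16 GB rows of the table above.
rates = {
    "AWS m7i.xlarge": 0.2016,
    "GCP n2-standard-4": 0.1942,
    "Azure Standard_D4s_v5": 0.1920,
}

baseline = rates["AWS m7i.xlarge"]
for name, rate in rates.items():
    print(
        f"{name}: ${monthly_cost(rate):.2f}/month, "
        f"{percent_cheaper(rate, baseline):.1f}% below AWS"
    )
```

Swapping in the 8 vCPU or 16 vCPU rows gives the same relative gaps, since each provider's price scales linearly with instance size in this table.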

Spot/Preemptible Pricing Comparison

This is where the providers diverge significantly.

| Feature | AWS Spot | GCP Spot VMs | Azure Spot |

|---|---|---|---|

| Typical discount | 60-80% | 60-91% | 60-90% |

| Maximum discount | ~90% (rare) | 91% | 90% |

| Interruption notice | 2 minutes | 30 seconds | 30 seconds (eviction) |

| Price stability | Variable, market-based | Fixed discount per type | Variable, market-based |

| Availability | High (diversified pools) | Medium (popular types scarce) | Medium-High |

| Best integration | Karpenter | GKE NAP | AKS Node Autoprovisioning |

AWS Spot with Karpenter is the most mature and flexible Spot integration for Kubernetes. Karpenter automatically diversifies across instance types, availability zones, and capacity pools to maximize availability while minimizing interruptions. At scale, this typically delivers 65-75% savings with <2% interruption rate.

GCP Spot VMs offer the highest ceiling discount (91%) but availability can be inconsistent for popular instance families. GKE's Node Auto-Provisioning handles Spot selection, but with less flexibility than Karpenter.

Azure Spot offers aggressive pricing but historically has higher eviction rates than AWS during capacity crunches. AKS's cluster autoscaler handles Spot, but the integration is less sophisticated than Karpenter.

Committed-Use Discounts Comparison

| Mechanism | Provider | Term | Typical Savings | Flexibility |

|---|---|---|---|---|

| Compute Savings Plans | AWS | 1yr / 3yr | 30% / 50% | Any instance family, region |

| EC2 Instance Savings Plans | AWS | 1yr / 3yr | 35% / 55% | Specific family, any size |

| Committed Use Discounts | GCP | 1yr / 3yr | 20% / 35% | Specific machine family |

| Flexible CUDs | GCP | 1yr / 3yr | 15% / 28% | Cross-family within region |

| Reserved Instances | Azure | 1yr / 3yr | 30% / 50% | Specific VM series and region |

| Azure Savings Plans | Azure | 1yr / 3yr | 15% / 30% | Any VM family, flexible |

AWS's Compute Savings Plans offer the best balance of discount depth and flexibility. GCP's committed-use discounts are the weakest (20-35%) but their sustained-use discounts (automatic 30% after running a full month) partially compensate. Azure matches AWS on reservation depth but offers less flexibility in their savings plan tiers.

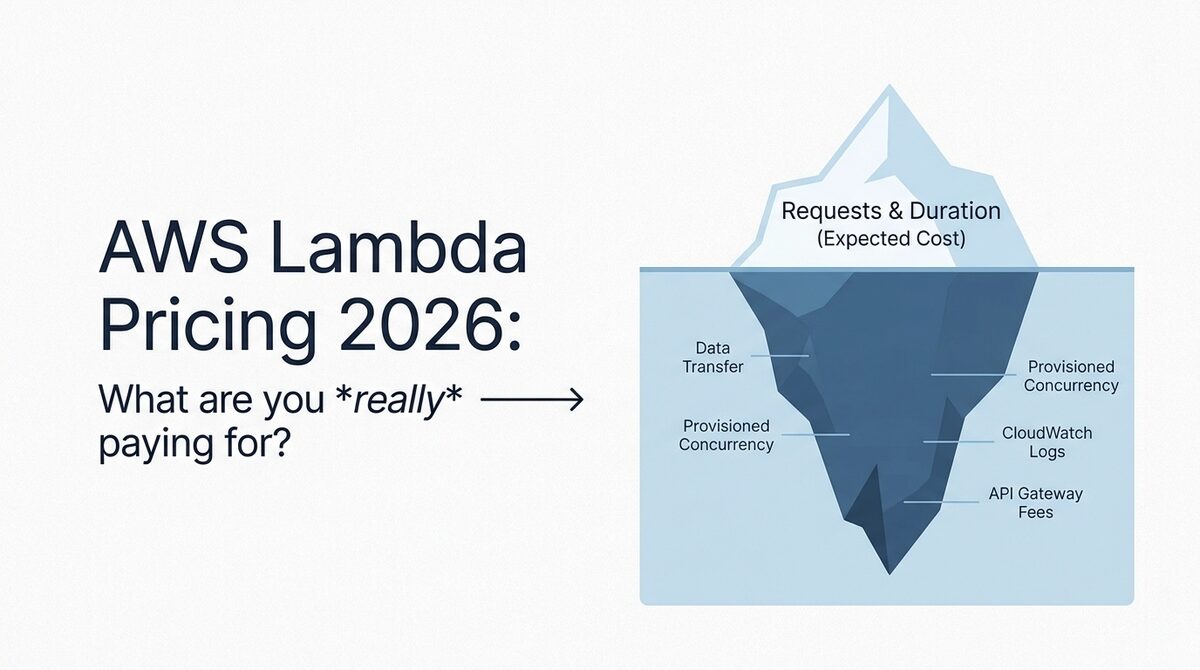

Networking Costs: The Hidden 15-25% of Your Kubernetes Bill

Networking is where cloud Kubernetes bills become genuinely confusing. Inter-zone traffic, NAT gateway fees, load balancer charges, and egress pricing all add up, and they vary substantially across providers.

NAT Gateway / Cloud NAT Pricing

Kubernetes pods in private subnets need NAT for outbound internet access. This is one of the most complained-about costs on AWS.

| Component | AWS (NAT Gateway) | GCP (Cloud NAT) | Azure (NAT Gateway) |

|---|---|---|---|

| Hourly charge | $0.045/hr ($32.85/mo) | $0.044/hr per gateway ($32.12/mo) | $0.045/hr ($32.85/mo) |

| Data processing | $0.045/GB | $0.045/GB | Free |

| Typical 50-node cost | $200-400/month | $180-350/month | $33-65/month |

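Azure wins decisively on NAT. No per-GB processing charge means your NAT costs scale with time, not traffic. At the rates above, a 200-node cluster pushing 2TB outbound through a single NAT gateway pays about $33/month on Azure (the hourly charge only) versus about $122/month on AWS or GCP (roughly $32 in hourly charges plus $90 in data processing). The difference adds up quickly for clusters running pods that call external APIs.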

Load Balancer Pricing

Every Kubernetes Service of type LoadBalancer provisions a cloud load balancer. At scale, clusters can have dozens.

| Component | AWS (ALB) | GCP (External LB) | Azure (Standard LB) |

|---|---|---|---|

| Hourly charge | $0.0225/hr ($16.43/mo) | $0.025/hr ($18.25/mo) | Free (5 rules included) |

| Per-rule charge | $0.008/hr per rule | Included | $0.01/hr per extra rule |

| Data processed | $0.008/LCU-hour | $0.008-0.012/GB | $0.005/GB |

| 10 LBs typical cost | $250-400/month | $300-450/month | $50-150/month |

Azure's Standard Load Balancer is free for the base configuration with 5 rules. This is a significant advantage for microservices architectures with many services, where EKS and GKE clusters can easily have 10-20 load balancers costing $200-400/month.

Inter-Zone Traffic

Kubernetes spreads pods across availability zones for resilience. Every cross-zone API call, database query, or service mesh hop incurs charges.

| Route | AWS | GCP | Azure |

|---|---|---|---|

| Same zone | Free | Free | Free |

| Cross-zone (same region) | $0.01/GB each direction | $0.01/GB each direction | $0.01/GB each direction (first 5TB free in some regions) |

| Cross-region | $0.02/GB | $0.01-0.08/GB | $0.02-0.05/GB |

| Internet egress (first 10TB) | $0.09/GB | $0.12/GB | $0.087/GB |

Cross-zone traffic is priced identically across providers ($0.01/GB), but it adds up fast in Kubernetes. A 200-node cluster with a service mesh (Istio, Linkerd) generating 500TB of cross-zone traffic per month pays about $5,000 in inter-zone fees alone at $0.01/GB, and twice that where both directions are billed. This is the same on all three providers.

Optimization tip: Use topology-aware routing (available on all three platforms) to prefer same-zone communication. This typically reduces cross-zone traffic by 60-80%.
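To see what that reduction is worth, here is a rough sketch of the cross-zone arithmetic. The $0.01/GB rate comes from the table above; the 10TB monthly volume is a hypothetical input chosen for illustration, and the function names are not from any provider SDK.

```python
def cross_zone_cost(gb_per_month: float, rate_per_gb: float = 0.01,
                    billed_both_directions: bool = False) -> float:
    """Monthly inter-zone transfer cost for a given traffic volume."""
    multiplier = 2 if billed_both_directions else 1
    return gb_per_month * rate_per_gb * multiplier


def after_topology_routing(gb_per_month: float, reduction: float) -> float:
    """Traffic left crossing zones after topology-aware routing.

    `reduction` is the fraction of cross-zone traffic removed (0.6 to 0.8
    per the estimate in the text).
    """
    return gb_per_month * (1 - reduction)


volume_gb = 10_000  # hypothetical: 10 TB of cross-zone traffic per month

before = cross_zone_cost(volume_gb)
for reduction in (0.6, 0.8):
    after = cross_zone_cost(after_topology_routing(volume_gb, reduction))
    print(f"{reduction:.0%} reduction: ${before:,.0f} -> ${after:,.0f} per month")
```

The saving scales linearly with traffic, so the same percentages apply at any cluster size.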

Real-World Cost Modeling: 50, 200, and 500 Nodes

Model Assumptions

- Compute mix: 70% on-demand/committed, 30% Spot/Preemptible

- Instance size: 8 vCPU, 32GB RAM (general purpose)

- Committed discount applied: 1-year term at platform rates

- Load balancers: 5 at 50 nodes, 10 at 200 nodes, 20 at 500 nodes

- Persistent storage: SSD (gp3/pd-ssd/Premium SSD), 5TB at 50 nodes, 20TB at 200 nodes, 50TB at 500 nodes

- NAT traffic: 500GB/month per 50 nodes

- Cross-zone traffic: 10TB/month per 50 nodes (billed at $0.01/GB)

- Logging: 200GB/month per 50 nodes (CloudWatch/Cloud Logging/Azure Monitor)

- Cluster count: 1 (multi-AZ production) at 50 and 200 nodes, 2 at 500 nodes

50-Node Cluster (Startup/Mid-Stage)

| Cost Component | EKS (AWS) | GKE Standard | GKE Autopilot | AKS |

|---|---|---|---|---|

| Control plane | $73 | $73 | $73 | $73 |

| Compute (35 committed + 15 Spot) | $10,302 | $9,919 | $11,400 | $9,810 |

| Persistent storage (5TB gp3/pd-ssd) | $400 | $850 | $850 | $460 |

| Load balancers (5) | $165 | $183 | $183 | $25 |

| NAT/egress | $265 | $255 | $255 | $65 |

| Cross-zone traffic | $100 | $100 | $100 | $100 |

| Logging & monitoring | $350 | $200 | $200 | $275 |

| Container registry | $50 | $30 | $30 | $40 |

| Total | $11,705 | $11,610 | $13,091 | $10,848 |

At 50 nodes, AKS wins by approximately 7% over EKS and GKE Standard, primarily from free load balancers and NAT without processing fees. GKE Autopilot costs about 13% more than GKE Standard due to the management premium on compute pricing.

200-Node Cluster (Growth-Stage/Enterprise)

| Cost Component | EKS (AWS) | GKE Standard | GKE Autopilot | AKS |

|---|---|---|---|---|

| Control plane | $73 | $73 | $73 | $73 |

| Compute (140 committed + 60 Spot) | $41,208 | $39,676 | $45,600 | $39,240 |

| Persistent storage (20TB) | $1,600 | $3,400 | $3,400 | $1,840 |

| Load balancers (10) | $330 | $365 | $365 | $50 |

| NAT/egress | $1,060 | $1,020 | $1,020 | $133 |

| Cross-zone traffic | $400 | $400 | $400 | $400 |

| Logging & monitoring | $1,400 | $800 | $800 | $1,100 |

| Container registry | $150 | $80 | $80 | $120 |

| Total | $46,221 | $45,814 | $51,738 | $42,956 |

At 200 nodes, AKS leads by about 6% over GKE Standard and 7% over EKS. The networking cost advantage compounds at scale. GKE's storage pricing (pd-ssd at $0.17/GB vs gp3 at $0.08/GB) is a meaningful disadvantage for storage-heavy workloads.

500-Node Cluster (Large Enterprise)

| Cost Component | EKS (AWS) | GKE Standard | GKE Autopilot | AKS |

|---|---|---|---|---|

| Control plane | $146 (2 clusters) | $146 | $146 | $146 |

| Compute (350 committed + 150 Spot) | $103,020 | $99,190 | $114,000 | $98,100 |

| Persistent storage (50TB) | $4,000 | $8,500 | $8,500 | $4,600 |

| Load balancers (20) | $660 | $730 | $730 | $100 |

| NAT/egress | $2,650 | $2,550 | $2,550 | $165 |

| Cross-zone traffic | $1,000 | $1,000 | $1,000 | $1,000 |

| Logging & monitoring | $3,500 | $2,000 | $2,000 | $2,750 |

| Container registry | $300 | $150 | $150 | $250 |

| Total | $115,276 | $114,266 | $129,076 | $107,111 |

At 500 nodes, AKS saves roughly $7,200-8,200/month over GKE Standard and EKS. That is about $86,000-98,000 annually. GKE's expensive SSD storage and GCP's higher egress rates erode its compute pricing advantage. GKE Autopilot at this scale costs 13% more than Standard, making it harder to justify unless operational simplicity is worth $15K/month.

The Hidden Costs Nobody Models

The tables above capture the big-ticket items. But there is an entire layer of costs that never appear in a pricing calculator because they stem from system behavior under production load. At 200-500 nodes, these "invisible" line items add 8-15% to your real bill.

Node Group Overhead: Bin-Packing Inefficiency

Kubernetes does not pack pods perfectly into nodes. There is always stranded capacity: CPU or memory left on a node that is too small for another pod to claim. This wasted headroom is real money.

| Autoscaler | Provider | Typical Bin-Packing Efficiency | Wasted Capacity | Monthly Waste (200 nodes) |

|---|---|---|---|---|

| Karpenter | EKS | 78-85% | 15-22% | $6,200-9,100 |

| GKE Autopilot | GKE | 85-92% | 8-15% | $3,600-7,600 |

| Cluster Autoscaler | AKS | 70-78% | 22-30% | $8,600-11,800 |

| AKS with KEDA + NAP | AKS | 76-83% | 17-24% | $6,700-9,400 |

Why GKE Autopilot wins here: Autopilot provisions exact pod-shaped compute. No node-level fragmentation. You pay per vCPU/GB requested, so there is zero stranded capacity by design. The 10-20% Autopilot premium is partially (or fully) offset by eliminating bin-packing waste.

Why AKS's default Cluster Autoscaler is worst: It provisions nodes in fixed-size node pools. If your pods request 3.2 vCPU and your nodes are 8 vCPU, you fit 2 pods per node with 1.6 vCPU (20%) permanently wasted. Karpenter's ability to select right-sized instances dynamically gives EKS a 5-8% edge over stock AKS.

Quantified at 500 nodes: A 500-node EKS cluster with Karpenter wastes approximately $15,500-22,700/month in stranded capacity. The same workload on GKE Autopilot wastes $0 in bin-packing (you pay exactly for what pods request). On AKS with standard Cluster Autoscaler, waste reaches $21,500-29,500/month. This single factor can flip the total cost ranking.
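The fixed-size node pool example above (3.2 vCPU pods on 8 vCPU nodes) can be checked with a few lines. This is a simplified sketch: it works in millicores to avoid floating-point rounding, looks at CPU only, and ignores capacity reserved for the kubelet and system daemons, as the text does. The 16 vCPU node is a hypothetical alternative added for comparison.

```python
def stranded_capacity(node_millicores: int, pod_millicores: int):
    """Return (pods per node, stranded millicores, stranded fraction).

    Assumes identical pods and CPU as the only binding constraint.
    """
    pods = node_millicores // pod_millicores
    stranded = node_millicores - pods * pod_millicores
    return pods, stranded, stranded / node_millicores


# The example from the text: 3.2 vCPU pods on 8 vCPU nodes.
pods, stranded, fraction = stranded_capacity(8000, 3200)
print(f"8 vCPU node: {pods} pods, {stranded}m stranded ({fraction:.0%})")

# Hypothetical alternative: the same pods on a 16 vCPU node pack exactly.
pods, stranded, fraction = stranded_capacity(16000, 3200)
print(f"16 vCPU node: {pods} pods, {stranded}m stranded ({fraction:.0%})")
```

An autoscaler that can choose among node sizes is effectively searching for the size that minimizes this remainder, which is the advantage attributed to Karpenter above.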

DNS Costs at Scale: CoreDNS Is Not Free

Every Kubernetes cluster runs CoreDNS for internal service discovery. At small scale it is negligible. At 500 nodes with thousands of services, DNS becomes a real line item.

EKS (CoreDNS self-managed):

- Default: 2 CoreDNS pods handling all cluster DNS

- At 200+ nodes: you need 6-10 CoreDNS pods to avoid latency spikes and dropped queries

- Each CoreDNS pod needs 0.5 vCPU + 512MB RAM minimum at scale

- Cost: 10 pods x (0.5 vCPU x $0.025/hr + 0.5GB x $0.003/hr) x 730 hours = $102/month

- Plus: NodeLocal DNSCache DaemonSet (recommended at 100+ nodes) adds another 0.1 vCPU + 128MB per node

- NodeLocal DNSCache at 500 nodes: 500 x (0.1 vCPU x $0.025/hr + 0.128GB x $0.003/hr) x 730 = $1,053/month

- Total EKS DNS cost at 500 nodes: ~$1,155/month
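The EKS figures above follow from a simple per-pod formula. A minimal sketch using the per-vCPU and per-GB hourly rates quoted in the list and a 730-hour month; the function name is illustrative.

```python
HOURS_PER_MONTH = 730
VCPU_HOUR = 0.025  # per-vCPU hourly rate used in the text
GB_HOUR = 0.003    # per-GB memory hourly rate used in the text


def monthly_pod_cost(replicas: int, vcpu: float, mem_gb: float) -> float:
    """Monthly compute cost of `replicas` identical pods running 24/7."""
    return replicas * (vcpu * VCPU_HOUR + mem_gb * GB_HOUR) * HOURS_PER_MONTH


coredns = monthly_pod_cost(replicas=10, vcpu=0.5, mem_gb=0.5)
node_local_cache = monthly_pod_cost(replicas=500, vcpu=0.1, mem_gb=0.128)

print(f"CoreDNS pods:       ${coredns:,.0f}/month")
print(f"NodeLocal DNSCache: ${node_local_cache:,.0f}/month")
print(f"Total:              ${coredns + node_local_cache:,.0f}/month")
```

Because NodeLocal DNSCache runs as a DaemonSet, its cost grows with node count rather than query volume, which is why it dominates the total at 500 nodes.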

GKE (Cloud DNS integration):

- GKE offers Cloud DNS for GKE at $0.04/zone/hour for cluster-local zones

- Typical cluster: 1 zone, $29.20/month

- Query charges: $0.40 per million queries (first billion/month free)

- At 500 nodes generating ~50M queries/day (~1.5 billion/month): about 0.5 billion queries fall outside the free tier, or ~$200/month at $0.40 per million

- Total GKE DNS cost at 500 nodes: ~$230/month

AKS (CoreDNS self-managed):

- Same CoreDNS scaling issues as EKS

- AKS does not have a Cloud DNS equivalent for in-cluster resolution

- Total AKS DNS cost at 500 nodes: ~$1,050/month (slightly cheaper compute)

At 500 nodes, GKE saves roughly $800-900/month on DNS alone. This is never in any pricing calculator.

Image Pull Costs: Container Registry Data Transfer

Every time a node starts (or a pod is scheduled to a new node), it pulls container images. At 500 nodes doing 100 deploys/week, the data transfer adds up.

Assumptions:

- Average image size: 500MB (compressed)

- 100 deploys/week = 14.3 deploys/day

- Each deploy pulls to ~30% of nodes (rolling update, 150 nodes)

- Weekly pull volume: 100 deploys x 150 nodes x 500MB = 7.5TB/week = 30TB/month

| Registry | Provider | Same-region pull cost | Cross-region pull cost | Monthly cost (30TB, same region) |

|---|---|---|---|---|

| ECR | AWS | Free | $0.09/GB | $0 |

| GCR/AR | GCP | Free | $0.12/GB | $0 |

| ACR | Azure | Free | $0.087/GB | $0 |

Good news: all three providers offer free same-region pulls. But here is what catches teams:

Multi-region clusters change everything. If you run nodes in us-east-1 and eu-west-1 with a single registry:

- ECR cross-region: 15TB x $0.09/GB = $1,350/month

- GCR cross-region: 15TB x $0.12/GB = $1,800/month

- ACR cross-region: 15TB x $0.087/GB = $1,305/month
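The pull-volume and cross-region numbers above can be reproduced as follows. This sketch uses decimal units (1TB = 1,000GB) and a 4-week month, as the text does. The 50% cross-region share is an assumption inferred from the 15TB figure (half of the 30TB monthly volume), not something the assumptions list states.

```python
def weekly_pull_gb(deploys_per_week: int, nodes_per_deploy: int,
                   image_gb: float) -> float:
    """Image data pulled per week across all deploys."""
    return deploys_per_week * nodes_per_deploy * image_gb


weekly_gb = weekly_pull_gb(deploys_per_week=100, nodes_per_deploy=150, image_gb=0.5)
monthly_gb = weekly_gb * 4             # the text rounds a month to 4 weeks
cross_region_gb = monthly_gb * 0.5     # assumption: half the pulls cross regions

# Cross-region per-GB rates from the registry table above.
rates = {"ECR": 0.09, "GCR/AR": 0.12, "ACR": 0.087}
costs = {name: round(cross_region_gb * rate) for name, rate in rates.items()}

print(f"Monthly pull volume: {monthly_gb / 1000:.0f} TB")
for name, cost in costs.items():
    print(f"{name} cross-region: ${cost:,}/month")
```

Image size is the most sensitive input here: halving the average image halves every figure.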

The real hidden cost is pull latency causing node startup delays. Cold pulls of 500MB images take 15-45 seconds depending on region and congestion. At 500 nodes with frequent scaling events, this translates to pods in Pending state, which means either you over-provision headroom (cost) or accept slower scaling (reliability). The fix is registry replication ($0.10/GB on ECR, included in ACR Premium at $1.67/day), which adds $150-300/month but eliminates the latency penalty.

ECR also charges for storage: $0.10/GB/month. A team with 200 images averaging 2GB each (with 5 tags per image) stores 2TB in ECR at $200/month. GCR and ACR have similar storage costs but ACR's Basic tier ($0.167/day = $5/month) includes 10GB free.

Commitment Strategy by Provider

The tables above use a blended compute rate (70% committed, 30% Spot). But HOW you commit matters enormously. The wrong commitment structure locks you into capacity you do not need or leaves savings on the table.

EKS: Savings Plans + Reserved Instances

AWS offers two main commitment vehicles for EKS workloads:

| Mechanism | 1-Year Savings | 3-Year Savings | Flexibility | Best For |

|---|---|---|---|---|

| Compute Savings Plans | 30% | 50% | Any instance family, any region | Growing clusters, uncertain mix |

| EC2 Instance Savings Plans | 35% | 55% | Specific family, any size/AZ | Stable workloads, known family |

| Standard Reserved Instances | 38% | 60% | Specific instance type + AZ | Ultra-stable baseline only |

The EKS play: Layer Compute Savings Plans at 60-70% of your baseline, then use Spot for the remaining 30-40%. Do not buy Standard RIs unless you have 12+ months of stable capacity data proving you will use specific instance types. The 3-5% extra discount on RIs over EC2 Instance Savings Plans (8-10% over Compute Savings Plans) is not worth the inflexibility risk.

1-year vs 3-year tradeoff: A 1-year Compute Savings Plan saves 30%. A 3-year saves 50%. The 3-year term is only worth it if you are confident your compute needs will not shrink AND you will stay on AWS. For Kubernetes workloads that might migrate or right-size aggressively, 1-year terms preserve optionality.

GKE: Committed Use Discounts (CUDs)

GCP's commitment model is simpler but less flexible:

| Mechanism | 1-Year Savings | 3-Year Savings | Flexibility | Best For |

|---|---|---|---|---|

| Resource-based CUDs | 28% | 46% | Specific machine family + region | Stable workloads, known family |

| Flexible CUDs | 15% | 28% | Cross-family within region | Variable workloads |

| Spend-based CUDs | 20% | 35% | Dollar commitment, any resource | Mixed compute + other services |

How CUDs interact with Autopilot: CUDs apply to Autopilot pod resources. If you commit to 100 vCPUs in n2 family, and your Autopilot pods run on n2 instances, the CUD discount applies automatically. This makes Autopilot + CUDs a powerful combination: you get pod-level scaling with committed pricing on the baseline.

The GKE play: Use resource-based CUDs for 50-60% of your steady-state Autopilot pod CPU/memory. Layer Spot pods for batch and fault-tolerant workloads. The 46% 3-year discount is the deepest standard commitment GCP offers and partially closes the gap with AWS Savings Plans.

AKS: Reserved VM Instances + Azure Savings Plans

Azure has the most layered commitment options:

| Mechanism | 1-Year Savings | 3-Year Savings | Flexibility | Best For |

|---|---|---|---|---|

| Reserved VM Instances | 36% | 60% | Specific VM series + region | Stable baseline, known series |

| Azure Savings Plans | 15% | 30% | Any VM family, any region | Variable workloads |

| Azure Hybrid Benefit | 40-72% | N/A (license) | Windows/SQL Server VMs | Windows container workloads |

The AKS play: Azure Reserved Instances at 3-year offer the deepest discount (60%) of any provider for committed baseline. Combine with Azure Savings Plans for the variable layer. If running Windows containers, Azure Hybrid Benefit (bring your own license) stacks with RIs for up to 72% total discount.

Concrete Calculation: 200-Node Cluster with 70% Committed

Let us model a 200-node cluster (8 vCPU, 32GB each, general purpose) with 140 nodes committed and 60 on Spot:

Without commitments (on-demand + Spot):

| Provider | 140 On-Demand (monthly) | 60 Spot (monthly) | Total Compute |

|---|---|---|---|

| EKS | 140 x $294 = $41,160 | 60 x $88 = $5,280 | $46,440 |

| GKE | 140 x $284 = $39,760 | 60 x $71 = $4,260 | $44,020 |

| AKS | 140 x $280 = $39,200 | 60 x $84 = $5,040 | $44,240 |

With 1-year commitments on the 140-node baseline:

| Provider | Commitment Type | Committed Rate | 140 Committed | 60 Spot | Total | Annual Savings |

|---|---|---|---|---|---|---|

| EKS | Compute Savings Plan 1yr | 30% off | $28,812 | $5,280 | $34,092 | $148,176 |

| GKE | Resource CUD 1yr | 28% off | $28,627 | $4,260 | $32,887 | $133,596 |

| AKS | Reserved Instances 1yr | 36% off | $25,088 | $5,040 | $30,128 | $169,344 |

With 3-year commitments on the 140-node baseline:

| Provider | Commitment Type | Committed Rate | 140 Committed | 60 Spot | Total | Annual Savings |

|---|---|---|---|---|---|---|

| EKS | Compute Savings Plan 3yr | 50% off | $20,580 | $5,280 | $25,860 | $246,960 |

| GKE | Resource CUD 3yr | 46% off | $21,470 | $4,260 | $25,730 | $219,480 |

| AKS | Reserved Instances 3yr | 60% off | $15,680 | $5,040 | $20,720 | $282,240 |

Read that table again. AKS with 3-year Reserved Instances saves $282,240/year on a 200-node cluster compared to on-demand pricing. That is $35,000/year more than EKS and $63,000/year more than GKE at the same commitment level. Azure's 60% RI discount is the most aggressive in the market.

The tradeoff: 3-year Azure RIs lock you to a specific VM series in a specific region. If Azure releases a better instance type in 18 months, you are stuck. EKS Compute Savings Plans let you shift between instance families freely, which is worth the 10% shallower discount for teams still optimizing their workload profiles.
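The arithmetic behind the commitment tables can be sketched as below. The per-node monthly rates and discount percentages are the ones used in the AKS rows above; the function and parameter names are illustrative only.

```python
def commitment_totals(on_demand_per_node: float, spot_per_node: float,
                      committed_nodes: int, spot_nodes: int, discount: float):
    """Return (monthly cost without commitment, monthly cost with it, annual savings).

    The discount applies only to the committed baseline; Spot nodes are
    billed the same either way.
    """
    baseline = committed_nodes * on_demand_per_node
    spot = spot_nodes * spot_per_node
    without = baseline + spot
    with_commitment = baseline * (1 - discount) + spot
    return without, with_commitment, (without - with_commitment) * 12


# AKS rows from the tables above: $280/node on-demand, $84/node Spot.
for label, discount in (("1-year RI (36% off)", 0.36), ("3-year RI (60% off)", 0.60)):
    without, committed, annual = commitment_totals(280, 84, 140, 60, discount)
    print(f"AKS {label}: ${committed:,.0f}/month, saves ${annual:,.0f}/year")
```

Plugging in the EKS or GKE per-node rates and discounts gives the other rows. Note that the savings depend only on the committed baseline, so a larger Spot share shrinks the absolute benefit of any commitment.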

When to Switch Providers (And When to Stay)

After reading the numbers above, the temptation is obvious: "We should move to AKS and save $84K/year." Maybe. But the migration itself has a cost that most teams severely underestimate.

Migration Cost Estimate

Moving a 200-node Kubernetes cluster between cloud providers is not a lift-and-shift. It involves rewriting cloud-specific integrations, re-architecting networking, migrating data, retraining teams, and running parallel environments during cutover.

| Migration Component | Effort (200-node cluster) | Cost Estimate |

|---|---|---|

| Infrastructure-as-code rewrite | 3-6 weeks | $50,000-100,000 |

| CI/CD pipeline migration | 2-4 weeks | $30,000-60,000 |

| Cloud-native service replacements | 4-8 weeks | $60,000-150,000 |

| Data migration (databases, queues) | 2-4 weeks | $30,000-80,000 |

| Testing and validation | 2-3 weeks | $20,000-50,000 |

| Parallel running costs | 1-2 months | $40,000-80,000 |

| Team training and ramp-up | Ongoing (3-6 months) | $20,000-40,000 |

| Total | 2-4 months | $250,000-560,000 |

"Cloud-native service replacements" is the wildcard. If your EKS cluster uses ALB Ingress Controller, AWS Secrets Manager, IAM Roles for Service Accounts (IRSA), RDS with IAM auth, SQS-triggered workers, and CloudWatch Container Insights, every one of those needs a GKE or AKS equivalent. Some are straightforward (Secrets Manager to Key Vault). Others require significant re-architecture (SQS triggers to Pub/Sub or Service Bus, IRSA to Workload Identity).

Break-Even Timeline at Different Cost Gaps

The question is simple: how long until the monthly savings pay off the upfront migration investment?

| Monthly savings after migration | Migration cost ($350K mid-range) | Break-even | 3-year net savings |

|---|---|---|---|

| $3,000/month | $350,000 | 9.7 years | -$242,000 (loss) |

| $5,000/month | $350,000 | 5.8 years | -$170,000 (loss) |

| $8,000/month | $350,000 | 3.6 years | -$62,000 (loss) |

| $10,000/month | $350,000 | 2.9 years | +$10,000 |

| $15,000/month | $350,000 | 1.9 years | +$190,000 |

| $20,000/month | $350,000 | 1.5 years | +$370,000 |

This table assumes zero productivity loss during migration, which is generous. In reality, teams spend 3-6 months operating at 60-70% velocity while learning the new platform. That opportunity cost is not captured above.
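The break-even figures in the table come from two one-line formulas. A small sketch, using the $350K mid-range migration cost from the table and, like the table, ignoring productivity loss and the time value of money:

```python
def break_even_years(migration_cost: float, monthly_savings: float) -> float:
    """Years until cumulative savings equal the upfront migration cost."""
    return migration_cost / monthly_savings / 12


def net_savings(migration_cost: float, monthly_savings: float,
                years: int = 3) -> float:
    """Cumulative savings over `years`, minus the migration cost."""
    return monthly_savings * 12 * years - migration_cost


MIGRATION_COST = 350_000  # mid-range estimate from the table above

for monthly in (3_000, 5_000, 8_000, 10_000, 15_000, 20_000):
    years = break_even_years(MIGRATION_COST, monthly)
    net = net_savings(MIGRATION_COST, monthly)
    print(f"${monthly:>6,}/month: break-even {years:.1f} years, 3-year net ${net:+,.0f}")
```

Rerunning this with your own migration estimate is more useful than the table itself, since the $250K-560K range moves the break-even point substantially.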

The Real Answer

If your cost gap between providers is under $5,000/month, do not migrate. The break-even is too far out, the risk is too high, and you will almost certainly find $5K/month in optimization within your current provider. Seriously. Right-sizing pods, deploying Karpenter (EKS) or switching to Autopilot (GKE), optimizing Spot usage, and fixing egress routing will close a $5K gap in weeks, not months.

If your gap is $5,000-10,000/month, optimize aggressively within your current provider first. If after 90 days of focused optimization the gap persists, then begin scoping a migration. But validate the gap is structural (provider pricing differences) not operational (your configuration is suboptimal).

If your gap exceeds $10,000/month and persists after optimization, migration is worth evaluating seriously. At $15K+/month savings, you break even within 2 years and gain $190K+ over 3 years. That justifies the disruption.

The scenarios where migration is clearly right:

- You are running 500+ nodes with a $15K+/month gap that is structural

- You are already planning a major re-architecture (microservices migration, K8s version upgrade, multi-region expansion) and can piggyback the cloud move

- Your existing provider is end-of-lifing a service you depend on

- Your enterprise agreement is expiring and the new provider offers a 30%+ EA discount that compounds with infrastructure savings

The scenarios where migration is clearly wrong:

- The cost gap is under $5K/month at any scale

- Your team has deep expertise on the current provider and would need 6+ months to reach equivalent proficiency

- You have 3-year commitments (Savings Plans, CUDs, RIs) with 18+ months remaining

- The gap is primarily from operational inefficiency, not structural pricing

For teams that decide to optimize within their current provider (which is most teams), our Kubernetes cost optimization service typically finds 30-50% savings without any migration. Start with a free Cloud Waste Assessment to quantify your optimization headroom before considering a provider switch.

GKE Autopilot vs Standard: When Is the Premium Worth It?

GKE Autopilot charges per pod resource request (vCPU and memory) rather than per node. Google manages the nodes, enforces security policies, and auto-scales automatically.

Autopilot Pricing Model

| Resource | Price/hour | Monthly (per unit, full utilization) |

|---|---|---|

| vCPU | $0.0445 | $32.49 |

| Memory (per GB) | $0.0049 | $3.58 |

| Ephemeral storage (per GB) | $0.000054 | $0.04 |

| Spot Pods vCPU | $0.0134 | $9.78 |

| Spot Pods Memory (per GB) | $0.0015 | $1.10 |
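To translate these per-unit rates into a per-pod bill, a rough sketch follows. The rates are taken from the table above; the 2 vCPU / 4GB pod is a hypothetical example, and ephemeral storage is left out because its contribution is negligible at these prices.

```python
HOURS_PER_MONTH = 730

# Hourly Autopilot rates from the table above.
AUTOPILOT = {"vcpu": 0.0445, "mem_gb": 0.0049}
AUTOPILOT_SPOT = {"vcpu": 0.0134, "mem_gb": 0.0015}


def pod_monthly_cost(vcpu: float, mem_gb: float, rates: dict,
                     hours: float = HOURS_PER_MONTH) -> float:
    """Monthly cost of one pod billed on its resource requests."""
    return (vcpu * rates["vcpu"] + mem_gb * rates["mem_gb"]) * hours


# Hypothetical pod requesting 2 vCPU and 4 GB of memory.
regular = pod_monthly_cost(2, 4, AUTOPILOT)
spot = pod_monthly_cost(2, 4, AUTOPILOT_SPOT)

print(f"Regular pod: ${regular:.2f}/month")
print(f"Spot pod:    ${spot:.2f}/month")
```

Because billing follows requests rather than usage, over-requested pods cost the same on Autopilot as fully utilized ones, so right-sizing requests matters just as much here as on node-based clusters.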

When Autopilot Wins

- Small teams (under 5 engineers): Eliminating node management saves 10-20 hours/week of operations work. At $150/hr engineering cost, that is $6,000-12,000/month in labor savings, more than offsetting the 10-20% compute premium.

- Variable workloads: Autopilot scales to zero during off-hours. A batch processing cluster that runs 8 hours/day pays 33% of a 24/7 Standard cluster.

- Security-conscious environments: Autopilot enforces hardened nodes, prevents privileged containers, and handles OS patching automatically.

When Standard Wins

- Large teams with platform engineers: If you already have a team managing nodes, the operational savings disappear and you are just paying a premium.

- GPU workloads: Autopilot GPU support is limited and more expensive than self-managed GPU nodes.

- Cost-optimized architectures: Standard mode lets you run Spot instances, right-size nodes, bin-pack aggressively, and use node pools tuned to workload profiles.

- Workloads with specific node requirements: DaemonSets, host networking, privileged access patterns that Autopilot restricts.

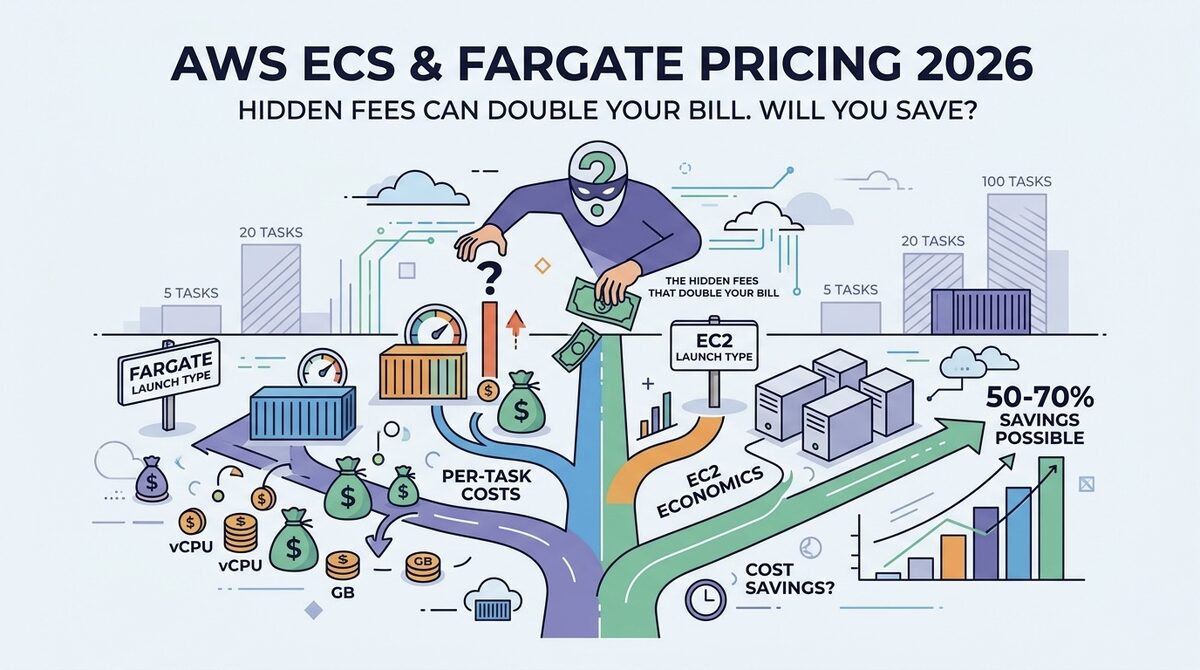

EKS on Fargate vs EC2: The Serverless K8s Tax

EKS on Fargate eliminates node management by running each pod on dedicated compute. The pricing:

| Resource | Fargate Price/hour | Equivalent EC2 (m7i) |

|---|---|---|

| vCPU | $0.04048 | ~$0.025 (with Savings Plan) |

| Memory (per GB) | $0.004445 | ~$0.003 (with Savings Plan) |

Fargate costs roughly 60% more per unit of compute compared to EC2 with Savings Plans. At the rates above, a pod requesting 2 vCPU and 4GB RAM costs about $2.37/day on Fargate vs about $1.49/day on EC2.

At 200 pods running 24/7 over a 30-day month, that is about $14,200/month on Fargate vs about $8,900/month on EC2. The delta is roughly $5,300/month for not managing nodes.

When Fargate makes sense:

- Batch jobs and cron workloads (pay only for execution time)

- Small clusters under 20 pods where node management overhead is disproportionate

- Dev/test environments that should scale to zero

When EC2 is clearly better:

- Steady-state production workloads above 20 pods

- Cost-sensitive environments where 60% premium is unjustifiable

- Workloads requiring DaemonSets, host volumes, or GPU access

For a deeper dive on ECS/Fargate pricing, see our AWS ECS and Fargate pricing breakdown.

Operational Cost: The Factor Nobody Models

Raw infrastructure pricing is only part of the Kubernetes total cost of ownership. Operational complexity varies significantly across providers, and that translates to engineering hours (and dollars).

Platform Maturity Comparison

| Capability | EKS | GKE | AKS |

|---|---|---|---|

| Managed upgrades | Manual (with managed add-ons) | Automatic (with release channels) | Automatic (with maintenance windows) |

| Node auto-scaling | Karpenter (best-in-class) | NAP (good) / Autopilot | Cluster Autoscaler / NAP (preview) |

| GPU scheduling | Full support (Karpenter) | Full support (GKE GPU pools) | Full support (GPU node pools) |

| Service mesh built-in | No (add-on required) | Anthos Service Mesh (included) | Open Service Mesh (deprecated) / Istio |

| Monitoring included | CloudWatch (extra cost) | Cloud Monitoring (generous free tier) | Azure Monitor (extra cost) |

| Secret management | AWS Secrets Manager ($0.40/secret/mo) | Secret Manager ($0.06/version/mo) | Key Vault ($0.03/10K operations) |

| Policy enforcement | Custom (OPA/Gatekeeper) | Policy Controller (built-in) | Azure Policy (built-in) |

| Cluster creation time | 10-15 minutes | 5-10 minutes | 5-10 minutes |

GKE has the most batteries-included experience. Cloud Monitoring free tier, built-in service mesh, release channels with automatic upgrades, and policy controller reduce operational overhead significantly. For teams without dedicated platform engineers, this can save 20-40 engineering hours per month.

EKS has the best ecosystem. Karpenter is genuinely the best node auto-scaler in the Kubernetes ecosystem. The AWS Marketplace, deep IAM integration (IRSA/Pod Identity), and massive community mean almost every tool and pattern has been battle-tested on EKS.

AKS is the cheapest to operate from a pure infrastructure cost perspective, with free load balancers, free NAT processing, and competitive compute pricing. But Azure's Kubernetes ecosystem is less mature, and some features lag 6-12 months behind EKS/GKE.

Cost Optimization Strategies by Provider

EKS Cost Optimization (Top 5)

- Deploy Karpenter (saves 30-50% on compute): Karpenter replaces Cluster Autoscaler. It automatically selects optimal instance types, uses Spot with diversification, and consolidates underutilized nodes. This is the single highest-impact EKS optimization. See our Kubernetes cost optimization guide for implementation details.

- Replace NAT Gateway traffic with VPC endpoints (saves $200-2000/month): For traffic to S3 and DynamoDB, gateway VPC endpoints are free. Other AWS services, including ECR, need interface VPC endpoints, which cost about $7.30/month each per Availability Zone plus $0.01/GB, but avoid the NAT Gateway's $0.045/GB processing fee.

- Use Compute Savings Plans (saves 30-50% on baseline): Cover your minimum steady-state compute with 1-year Compute Savings Plans. Layer Spot on top for variable capacity.

- Right-size resource requests (saves 20-40%): Most pods over-request CPU and memory by 3-5x. Use VPA recommendations or tools like Kubecost to right-size. Every unnecessary MB requested holds node capacity hostage.

- Consolidate clusters (saves $73/month per eliminated cluster): Many teams run separate EKS clusters for dev/staging/QA. Consolidate into namespaces within fewer clusters where isolation requirements allow.
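The NAT Gateway versus VPC endpoint trade-off above comes down to simple arithmetic. The sketch below uses only the two figures quoted in this post ($0.045/GB NAT processing and $7.30/month per interface endpoint). The function names and the traffic volumes are hypothetical, and per-GB charges on interface endpoints are ignored for simplicity, so treat the output as a rough upper bound rather than a quote.

```python
# Figures quoted in this post; verify against current AWS pricing for your region.
NAT_PROCESSING_PER_GB = 0.045       # NAT Gateway data processing fee, $/GB
INTERFACE_ENDPOINT_MONTHLY = 7.30   # fixed fee per interface endpoint, $/month


def monthly_endpoint_savings(gb_to_aws_services: float, interface_endpoints: int = 0) -> float:
    """Estimate monthly savings from moving AWS-service traffic off the NAT Gateway.

    Gateway endpoints are free, so only interface endpoints add a fixed cost.
    Assumption: per-GB interface endpoint charges are not modeled.
    """
    avoided_nat_fees = gb_to_aws_services * NAT_PROCESSING_PER_GB
    endpoint_fees = interface_endpoints * INTERFACE_ENDPOINT_MONTHLY
    return avoided_nat_fees - endpoint_fees


def break_even_gb(interface_endpoints: int) -> float:
    """Monthly GB at which avoided NAT fees equal the fixed endpoint fees."""
    return interface_endpoints * INTERFACE_ENDPOINT_MONTHLY / NAT_PROCESSING_PER_GB


if __name__ == "__main__":
    # Hypothetical cluster: 10 TB/month to AWS services, two interface endpoints.
    print(f"Estimated savings: ${monthly_endpoint_savings(10_000, 2):.2f}/month")
    print(f"Break-even per endpoint: {break_even_gb(1):.0f} GB/month")
```

Under these assumptions a single interface endpoint pays for itself at a little over 160 GB/month, which is why this optimization tends to matter for any cluster that pulls images or writes to object storage at volume.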

GKE Cost Optimization (Top 5)

- Use Spot VMs aggressively (saves 60-91%): GCP offers the deepest Spot discounts. Combine with Node Auto-Provisioning for automatic instance type selection and PodDisruptionBudgets for graceful preemption handling.

- Leverage sustained-use discounts (saves up to 30% automatically): GCP automatically applies a discount of up to 30% to eligible instances that run for a full month. No commitment required. Cover the baseline portion with CUDs instead, since usage covered by a CUD does not also earn sustained-use discounts.

- Consider Autopilot for variable workloads (saves 30-60% on idle time): Autopilot bills for the resources your pods request rather than for nodes, so workloads scaled to zero cost nothing. For batch, development, and overnight-idle workloads, this eliminates paying for idle nodes.

- Use regional persistent disks wisely (saves on redundant storage): Standard regional pd-standard costs $0.04/GB vs pd-ssd at $0.17/GB. Use standard disks for data that does not need SSD performance.

- Optimize egress with Cloud CDN (saves 50-70% on egress): If your workloads serve content to the internet, Cloud CDN reduces egress from $0.12/GB to $0.02-0.04/GB for cached content.
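How much Cloud CDN actually saves depends on your cache hit ratio, because only cached content gets the lower rate. The following is a minimal sketch of that blended cost, using the $0.12/GB and $0.02-0.04/GB figures from this post. The function names and the assumption that cache misses are billed at the plain $0.12/GB rate (with no separate cache-fill charge) are simplifications introduced here for illustration.

```python
ORIGIN_EGRESS_PER_GB = 0.12  # internet egress without CDN, $/GB (figure from this post)


def blended_egress_cost(gb: float, cache_hit_ratio: float, cdn_per_gb: float = 0.04) -> float:
    """Monthly egress cost when a fraction of traffic is served from CDN cache.

    Assumption: cache misses are billed at the origin egress rate.
    """
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    cached = gb * cache_hit_ratio * cdn_per_gb
    uncached = gb * (1.0 - cache_hit_ratio) * ORIGIN_EGRESS_PER_GB
    return cached + uncached


def egress_savings_pct(cache_hit_ratio: float, cdn_per_gb: float = 0.04) -> float:
    """Percentage saved versus sending every GB at the origin egress rate."""
    blended = blended_egress_cost(1.0, cache_hit_ratio, cdn_per_gb)
    return (ORIGIN_EGRESS_PER_GB - blended) / ORIGIN_EGRESS_PER_GB * 100.0


if __name__ == "__main__":
    for ratio in (0.5, 0.8, 0.9):
        print(f"hit ratio {ratio:.0%}: {egress_savings_pct(ratio):.1f}% saved")
```

With a 90% hit ratio at $0.04/GB, this model lands at about 60% savings, which sits inside the 50-70% range quoted above. Workloads with mostly uncacheable responses will see far less.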

AKS Cost Optimization (Top 5)

- Use Azure Spot VMs with multiple node pools (saves 60-90%): Create separate node pools for Spot and regular VMs. Use taints and tolerations to direct fault-tolerant workloads to Spot pools.

- Use Reserved Instances for baseline (saves 30-50%): Azure RIs offer roughly 30% (1yr) or 50% (3yr) savings. Cover your minimum steady-state with reservations and use Spot for burst capacity.

- Take advantage of free networking (already cheaper): AKS's free load balancer and free NAT processing are automatic. Make sure you are on Standard Load Balancer (not legacy Basic) to get the full feature set at no extra cost.

- Use Azure Container Registry with geo-replication wisely (saves 20-40% on pull costs): Place ACR in the same region as your cluster to eliminate cross-region pull fees. Use task-based builds in ACR to avoid expensive build agents.

- Scale to zero with KEDA (saves 40-70% for event-driven workloads): KEDA (Kubernetes Event-Driven Autoscaling) is available as a managed AKS add-on and scales deployments to zero replicas when idle. It is a good fit for queue processors and batch workloads.
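The Reserved Instance plus Spot pattern above can be estimated before you commit to anything. This sketch blends the two discounts using the ranges quoted in this post (30% or 50% for reservations, 60-90% for Spot). The function name, the `baseline_share` parameter, and the $10,000 example bill are hypothetical, and the model assumes every non-baseline hour can run on Spot, which real clusters rarely achieve.

```python
def blended_monthly_compute(
    on_demand_monthly: float,
    baseline_share: float,
    ri_discount: float = 0.30,    # 1-year reservation figure from this post
    spot_discount: float = 0.60,  # low end of the Spot range from this post
) -> float:
    """Monthly compute cost with the baseline on reservations and the rest on Spot.

    on_demand_monthly: what the whole fleet would cost at on-demand rates.
    baseline_share: fraction of that spend that is steady-state (0 to 1).
    """
    if not 0.0 <= baseline_share <= 1.0:
        raise ValueError("baseline_share must be between 0 and 1")
    baseline = on_demand_monthly * baseline_share * (1.0 - ri_discount)
    burst = on_demand_monthly * (1.0 - baseline_share) * (1.0 - spot_discount)
    return baseline + burst


if __name__ == "__main__":
    bill = 10_000.0  # hypothetical on-demand compute bill, $/month
    conservative = blended_monthly_compute(bill, baseline_share=0.6)
    aggressive = blended_monthly_compute(bill, 0.6, ri_discount=0.50, spot_discount=0.90)
    print(f"1yr RI + 60% Spot discount: ${conservative:,.0f}/month")
    print(f"3yr RI + 90% Spot discount: ${aggressive:,.0f}/month")
```

Running the numbers for a few values of `baseline_share` is a quick way to see how sensitive your bill is to the split before sizing a reservation.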

When to Choose Each Provider

Choose EKS If:

- Your team already has deep AWS expertise and existing AWS infrastructure

- You need the most mature Spot instance integration (Karpenter)

- Your workloads integrate heavily with AWS services (RDS, DynamoDB, SQS, Lambda)

- You need the largest ecosystem of third-party Kubernetes tools and operators

- You run GPU and accelerator workloads that need diverse instance types (P4d, P5, Inf2, Trn1)

Choose GKE If:

- You want the most managed experience with least operational burden

- Your team is small and cannot dedicate engineers to cluster operations

- You are already on GCP or use BigQuery/Vertex AI extensively

- Multi-cluster management is important to you (GKE Fleet, Anthos)

- You value automatic security patching and release channel management

Choose AKS If:

- Cost is the primary decision factor (free control plane, free LB, free NAT processing)

- You are in an enterprise with existing Microsoft/Azure agreements (EA discounts stack)

- Compliance requirements favor Azure (FedRAMP High, government clouds)

- Windows containers are part of your workload mix

- You need tight integration with Azure DevOps, Active Directory, or Azure Arc

Choose Multiple Providers If:

- Vendor lock-in risk outweighs operational complexity

- Geographic requirements demand presence in regions only available on specific clouds

- Negotiating leverage with cloud vendors requires multi-cloud credibility

- Team expertise is genuinely split across platforms

For multi-cloud Kubernetes cost management, see our multi-cloud FinOps guide.

The Bottom Line

The managed Kubernetes pricing war is surprisingly close on raw compute. The real differentiation is in networking overhead, operational complexity, and ecosystem maturity:

- AKS is cheapest all-in at every scale we modeled, saving 7-10% over EKS/GKE Standard primarily through free load balancers and zero NAT processing fees. At 500 nodes, that is $84,000+/year in savings.

- GKE Standard offers the best operations experience with automatic upgrades, built-in monitoring, and Autopilot as an easy button for teams that want zero node management.

- EKS has the best optimization ceiling thanks to Karpenter, the deepest Spot integration, and the largest Kubernetes ecosystem. Teams willing to invest in optimization can beat AKS pricing despite higher base costs.

The worst decision? Choosing a Kubernetes platform based solely on control plane pricing. The $73/month difference represents less than 0.5% of a 100-node cluster bill. Focus on compute optimization, networking architecture, and operational efficiency instead.
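To check where the control plane fee sits in your own bill, the arithmetic is a one-liner. The sketch below uses the $73/month fee from this post. The function names are hypothetical, and the per-node figure it derives is simply what the 0.5% claim implies, not a measured price.

```python
CONTROL_PLANE_MONTHLY = 73.0  # $0.10/hour control plane fee, $/month (from this post)


def control_plane_share_pct(total_monthly_bill: float) -> float:
    """Control plane fee as a percentage of the total monthly cluster bill."""
    if total_monthly_bill <= 0:
        raise ValueError("total_monthly_bill must be positive")
    return CONTROL_PLANE_MONTHLY / total_monthly_bill * 100.0


def bill_at_share(share_pct: float) -> float:
    """Total monthly bill at which the control plane fee equals share_pct percent."""
    if share_pct <= 0:
        raise ValueError("share_pct must be positive")
    return CONTROL_PLANE_MONTHLY / (share_pct / 100.0)


if __name__ == "__main__":
    threshold = bill_at_share(0.5)
    print(f"Fee drops below 0.5% once the bill exceeds ${threshold:,.0f}/month")
    print(f"That is ${threshold / 100:,.0f}/node/month on a 100-node cluster")
```

In other words, the fee falls under 0.5% as soon as a 100-node cluster averages more than about $146 per node per month all-in.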

If your Kubernetes bill exceeds $10,000/month on any platform, our Kubernetes cost optimization team typically finds 30-50% in savings within the first assessment. Start with a free Cloud Waste Assessment to benchmark your cluster efficiency against industry standards.

Further reading: