The GPU Bill That Blindsides Every AI Team

Here is a number worth sitting with: a single p3.2xlarge GPU instance on AWS costs $3.06 per hour. That is $2,203 per month. If it runs at 20% actual utilization, which is common for inference workloads without proper scaling, you are paying $2,203 for $440 worth of compute.

Now multiply that by 10 GPU nodes, which is modest for a production AI team, and you have $17,624 per month in waste. That is $211,000 per year on capacity that is technically "running" but not actually working.

The brutal part is that most teams do not realize this is happening. Their monitoring shows GPU utilization at 80% during peaks. What it does not show is that those peaks last 3 minutes per hour and the node is idle for the other 57. The metrics are not lying. They are just being read wrong.

This guide covers the specific mechanisms that make Kubernetes cost optimization for AI workloads different from standard K8s optimization, the tools that actually fix them, and the configurations that make the difference. Nothing generic. No surface-level advice you have already read three times.

Why Standard K8s Optimization Advice Fails AI Workloads

Generic Kubernetes cost optimization guides tell you to right-size your pods, use Spot instances, and enable autoscaling. That advice is not wrong. It is just incomplete for AI workloads, because AI on Kubernetes has three cost dynamics that standard workloads do not.

Dynamic 1: GPU utilization metrics are deceptive

The figure that nvidia-smi reports and that most dashboards display is GPU utilization: the percentage of the most recent sample period during which at least one kernel was executing on the GPU. A GPU training a model at 95% looks fully utilized in any single reading. But if the training loop stalls waiting for data to load from S3 every 30 seconds, the time-averaged utilization might be 25%.

The metric that matters is DCGM_FI_DEV_GPU_UTIL from NVIDIA's Data Center GPU Manager (DCGM), averaged over 5-minute windows rather than read as a point-in-time value. Teams running DCGM alongside Prometheus often find their "well-utilized" GPU clusters are actually running at 15 to 30% time-averaged utilization. That discovery is uncomfortable but valuable.
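To make the gap between a peak reading and a time average concrete, here is a minimal Python sketch. The sample values are invented to mirror the "3 busy minutes per hour" pattern described earlier; in a real cluster they would come from DCGM through Prometheus (for example with an `avg_over_time` query over a 5-minute range).

```python
def time_averaged_utilization(samples):
    """Mean of evenly spaced GPU utilization samples, in percent."""
    if not samples:
        raise ValueError("need at least one sample")
    return sum(samples) / len(samples)


# One sample per minute for an hour: a 3-minute burst at 80%, idle otherwise.
# These numbers are illustrative, not measurements.
hour = [80.0] * 3 + [0.0] * 57

peak = max(hour)
average = time_averaged_utilization(hour)
print(f"peak: {peak:.0f}%  time-averaged: {average:.0f}%")
# prints: peak: 80%  time-averaged: 4%
```

A dashboard that plots the peak tells you the GPU can be saturated; the average tells you how much of the bill is buying work.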

Dynamic 2: CPU request inflation compounds at AI cluster scale

Kubernetes CPU requests affect scheduling, not actual consumption. A pod requesting 8 CPUs holds 8 CPUs on the node for scheduling purposes, whether it uses them or not. AI workloads are particularly prone to inflated CPU requests because engineers add headroom for data preprocessing pipelines that only spike during certain training phases.

On a 50-node cluster of m5.4xlarge instances (16 vCPU, $0.768/hour), a 40% average request inflation rate means 320 vCPUs reserved but never used. At $0.048/vCPU-hour over a 720-hour month, that is $11,059 per month in phantom compute reservations before you count a single GPU.
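The reservation math can be sketched in a few lines of Python. The function name and the 720-hour month (24 × 30) are assumptions of this sketch; the node count, vCPU count, price, and inflation rate are the ones quoted above.

```python
def phantom_cpu_cost(nodes, vcpus_per_node, node_price_per_hour,
                     inflation_rate, hours_per_month=720):
    """Return (idle vCPUs, monthly cost) for CPU that is requested but unused."""
    total_vcpus = nodes * vcpus_per_node
    idle_vcpus = total_vcpus * inflation_rate
    price_per_vcpu_hour = node_price_per_hour / vcpus_per_node
    return idle_vcpus, idle_vcpus * price_per_vcpu_hour * hours_per_month


idle, monthly = phantom_cpu_cost(nodes=50, vcpus_per_node=16,
                                 node_price_per_hour=0.768, inflation_rate=0.40)
print(f"{idle:.0f} idle vCPUs cost about ${monthly:,.0f} per month")
```

Changing `inflation_rate` to your own measured value (for example from VPA recommendations) turns this into a quick sizing check.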

Dynamic 3: Training jobs and inference endpoints have completely opposite cost profiles

Training jobs are bursty, high-intensity, and short-lived. You want maximum compute for minimum time, with Spot instances absorbing interruption risk via checkpointing. Inference endpoints are persistent, variable-load services. You want scale-to-zero during quiet periods and fast scale-out during demand spikes.

Most teams manage both with the same node pool strategy, same scaling configuration, and same instance types. The result is suboptimal for both. Training jobs sit in queues waiting for capacity that inference endpoints are holding idle, and inference endpoints run on oversized nodes provisioned for training workloads.

Understanding GPU Cost Across the Three Major Clouds

Before optimizing, you need to understand what you are actually paying and where the pricing traps are.

| GPU Instance | AWS | GCP | Azure | Common Use Case |
|---|---|---|---|---|
| NVIDIA T4 (16GB) | g4dn.xlarge: $0.526/hr | n1 + T4: ~$0.35/hr | NC4as T4: $0.526/hr | Inference, fine-tuning small models |
| NVIDIA A10G (24GB) | g5.xlarge: $1.006/hr | Not available | NV6ads A10: $0.908/hr | Mid-size inference, diffusion models |
| NVIDIA A100 (40GB) | p4d.24xlarge: $32.77/hr (8x A100) | a2-highgpu-1g: $3.673/hr | NC24ads A100: $3.673/hr | Large model training |
| NVIDIA H100 (80GB) | p5.48xlarge: $98.32/hr (8x H100) | a3-highgpu-8g: ~$32.77/hr | NCH100 v5: available | Foundation model training |

The detail most teams miss on GCP: the A100 at $3.673/hour is per-GPU with no minimum cluster requirement. On AWS, the smallest A100 instance is p4d.24xlarge with 8 A100s at $32.77/hour. If you only need 2 A100s for a training run, you are paying for 8 on AWS. On GCP, you pay for exactly 2. That single difference in pricing granularity changes the TCO calculation significantly for teams running varied model sizes.
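A short Python sketch of the granularity effect, using only the two prices from the table above. The function names are made up for illustration, and the model ignores everything except the per-hour instance price.

```python
import math


def aws_p4d_cost_per_hour(gpus_needed, gpus_per_instance=8, instance_price=32.77):
    """A100s on AWS come in blocks of 8, so round up to whole instances."""
    instances = math.ceil(gpus_needed / gpus_per_instance)
    return instances * instance_price


def gcp_a2_cost_per_hour(gpus_needed, price_per_gpu=3.673):
    """a2-highgpu-1g is billed per single A100."""
    return gpus_needed * price_per_gpu


for n in (1, 2, 4, 8):
    print(f"{n} x A100: AWS ${aws_p4d_cost_per_hour(n):.2f}/hr, "
          f"GCP ${gcp_a2_cost_per_hour(n):.2f}/hr")
```

The gap closes as the job approaches a multiple of 8 GPUs, which is why the comparison depends on the mix of model sizes a team actually runs.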

NVIDIA MIG: The Optimization Most Teams Have Never Heard Of

NVIDIA A100 and H100 GPUs support Multi-Instance GPU (MIG) partitioning, which lets you carve one physical GPU into up to 7 independent GPU instances with dedicated memory and compute slices.

Why this matters for AI workloads on Kubernetes: most inference endpoints for small models (sub-7B parameters) do not saturate a full A100. They use 20 to 30% of the GPU memory and a fraction of the compute throughput. Without MIG, you pay for the entire A100 to serve one small model. With MIG enabled, you can run 4 to 7 separate inference endpoints on a single A100.

The cost math: an a2-highgpu-1g on GCP at $3.673/hour hosts one A100. With 7-way MIG partitioning, that same instance effectively hosts 7 independent inference workloads. Cost per inference endpoint drops from $3.673/hour to about $0.525/hour for the same GPU class.
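The same division for every partition count, as a small Python sketch. It assumes the GPU price is simply split evenly across MIG instances and that each endpoint fits in its slice, which is a simplification.

```python
def cost_per_endpoint(gpu_price_per_hour, endpoints_per_gpu):
    """Hourly cost per endpoint when one GPU is shared via MIG."""
    if not 1 <= endpoints_per_gpu <= 7:
        raise ValueError("MIG exposes between 1 and 7 instances per GPU")
    return gpu_price_per_hour / endpoints_per_gpu


A100_PRICE = 3.673  # a2-highgpu-1g on-demand price quoted above

for slices in range(1, 8):
    print(f"{slices} endpoint(s): ${cost_per_endpoint(A100_PRICE, slices):.3f}/hr each")
```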

The NVIDIA device plugin for Kubernetes supports MIG natively. The configuration looks like this:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: nvidia-mig-config
      namespace: kube-system
    data:
      config.yaml: |
        version: v1
        flags:
          migStrategy: single
        mig-configs:
          all-7g.40gb:
            - devices: all
              mig-enabled: true
              mig-devices:
                "7g.40gb": 1
          all-1g.5gb:
            - devices: all
              mig-enabled: true
              mig-devices:
                "1g.5gb": 7

Most teams running inference-heavy workloads on A100s are leaving the majority of the GPU's value on the table by not enabling MIG. This is the highest-leverage optimization available for inference cost reduction on modern NVIDIA hardware.

Karpenter vs Cluster Autoscaler: Why the Difference Matters for AI

Both tools scale your cluster. The gap between them is significant for GPU workloads.

The Cluster Autoscaler works with pre-defined node groups. When a pod cannot be scheduled, it picks a node group and adds a node from that group. The problem: you define node groups statically, so you end up creating groups for every GPU instance type you might need. This leads to node pool sprawl with 8 to 15 separate groups, each sitting at minimum capacity.

Karpenter reads the pod's resource requirements directly and selects the right instance type from a pool of allowed instance types at scheduling time. A pod requesting nvidia.com/gpu: 1 and memory: 16Gi gets exactly the right-sized GPU instance, not whatever was available in the nearest pre-defined node group.

For AI workloads, Karpenter's consolidation feature is where the real cost savings come from. It continuously evaluates whether the cluster can run on fewer or cheaper nodes by moving pods from underutilized nodes onto nodes that already run other pods, then terminating the now-empty nodes.

Here is a production-ready Karpenter NodePool configuration for mixed GPU workloads:

    # Written against the Karpenter v1 API. The older v1beta1 API did not accept
    # consolidateAfter together with an underutilization policy.
    apiVersion: karpenter.sh/v1
    kind: NodePool
    metadata:
      name: gpu-workloads
    spec:
      template:
        metadata:
          labels:
            workload-type: gpu
        spec:
          nodeClassRef:
            group: karpenter.k8s.aws
            kind: EC2NodeClass
            name: gpu-nodeclass
          requirements:
            # Only consider instance types with at least one GPU
            - key: karpenter.k8s.aws/instance-gpu-count
              operator: Gt
              values: ["0"]
            # Allow Spot with On-Demand as the fallback
            - key: karpenter.sh/capacity-type
              operator: In
              values: ["spot", "on-demand"]
            - key: karpenter.k8s.aws/instance-family
              operator: In
              values: ["g5", "g4dn", "p3"]
      disruption:
        consolidationPolicy: WhenEmptyOrUnderutilized
        consolidateAfter: 5m
      limits:
        # Cap the pool at 20 GPUs in total
        nvidia.com/gpu: "20"

The consolidateAfter: 5m setting tells Karpenter to wait until a node has gone 5 minutes without pods being added or removed before it consolidates that node, which keeps short lulls from churning GPU capacity. For bursty AI workloads that spike and then idle, consolidation alone can reduce GPU node costs by 30 to 50% compared to static node groups that hold capacity indefinitely.
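The idea behind consolidation can be illustrated with a toy bin-packing sketch in Python. This is not Karpenter's algorithm (which also weighs price, disruption budgets, and scheduling constraints); it is a first-fit-decreasing pass over hypothetical GPU requests, just to show why repacking frees nodes.

```python
def consolidate(pod_gpu_requests, gpus_per_node):
    """First-fit decreasing: pack GPU requests onto as few nodes as possible."""
    free = []  # free GPUs remaining on each node in use
    for req in sorted(pod_gpu_requests, reverse=True):
        if req > gpus_per_node:
            raise ValueError("pod does not fit on any node")
        for i, capacity in enumerate(free):
            if capacity >= req:
                free[i] -= req
                break
        else:
            # No existing node has room, so open a new one.
            free.append(gpus_per_node - req)
    return len(free)


# Six pods that each landed on their own 4-GPU node during a burst.
pods = [1, 1, 2, 1, 1, 2]
print("nodes before:", len(pods), "after:", consolidate(pods, 4))
# prints: nodes before: 6 after: 2
```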

Scale-to-Zero for Inference Endpoints: The Right Way to Do It

Out of the box, the Horizontal Pod Autoscaler scales on CPU and memory. For LLM inference, these are the wrong metrics. An LLM inference pod at 5% CPU utilization can be fully saturated in terms of throughput because all the work is happening on the GPU, which CPU and memory metrics do not capture.

KEDA (Kubernetes Event-Driven Autoscaling) solves this by scaling on custom metrics: Prometheus queue depth, SQS queue length, Redis list size, or any metric you expose. For inference endpoints, the correct scaling signal is request queue depth, not CPU.

Here is a KEDA ScaledObject configuration that scales an inference deployment based on a Prometheus metric tracking inference request queue depth:

    apiVersion: keda.sh/v1alpha1
    kind: ScaledObject
    metadata:
      name: llm-inference-scaler
    spec:
      scaleTargetRef:
        name: llm-inference-deployment
      minReplicaCount: 0
      maxReplicaCount: 10
      cooldownPeriod: 300
      triggers:
        - type: prometheus
          metadata:
            serverAddress: http://prometheus:9090
            metricName: inference_queue_depth
            threshold: "5"
            query: sum(inference_requests_pending{service="llm-api"})

The minReplicaCount: 0 is where the cost savings live. When no requests are queued for 5 minutes (cooldownPeriod), the deployment scales to zero replicas. Karpenter then detects the empty GPU node and terminates it within its configured consolidation window.
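As a rough mental model of how the threshold turns queue depth into replicas, here is a simplified Python sketch. It assumes the usual "total metric divided by per-replica target, rounded up" behaviour and deliberately ignores the cooldown period and HPA stabilization windows; the function name is hypothetical.

```python
import math


def desired_replicas(queue_depth, threshold, min_replicas, max_replicas):
    """Replica count for a queue-depth trigger, clamped to the configured range."""
    if queue_depth <= 0:
        return min_replicas  # with min_replicas=0 this is scale-to-zero
    wanted = math.ceil(queue_depth / threshold)
    return max(min_replicas, min(max_replicas, wanted))


# Values mirror the ScaledObject above: threshold 5, min 0, max 10.
for depth in (0, 1, 23, 500):
    print(f"queue depth {depth:>3} -> {desired_replicas(depth, 5, 0, 10)} replica(s)")
```

Reading the output against your own traffic gives a quick sanity check on whether a threshold of 5 pending requests per replica is too eager or too lazy for your latency target.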

For an inference endpoint that runs 8 hours per day instead of 24, the cost reduction is 67%. A g5.xlarge at $1.006/hour running 8 hours instead of 24 saves $483 per month per node.

Scale-to-Zero Savings Calculator

The formula is straightforward: monthly savings = instances × hourly cost × idle hours per day × 30.

Real example: A team with 3 p3.2xlarge instances idle 16 hours/day saves 3 × $3.06 × 16 × 30 = $4,406/month = $52,877/year.

Second example: 5 g5.xlarge for inference, idle 20 hours/day: 5 × $1.006 × 20 × 30 = $3,018/month = $36,216/year.

These numbers assume Karpenter provisions a node in under 2 minutes. Expect actual savings of 90 to 95% of the calculated figure because of spin-up overhead. Even with that haircut, scale-to-zero is the single highest-ROI change available for intermittent GPU workloads. The savings compound with every additional idle hour you eliminate.
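The calculator fits in one Python function. The `realization` parameter is an addition of this sketch to model the 90 to 95% haircut mentioned above; everything else follows the formula and the two worked examples.

```python
def scale_to_zero_savings(instances, hourly_cost, idle_hours_per_day,
                          days=30, realization=1.0):
    """Monthly savings from not paying for idle hours.

    realization is the fraction of the theoretical savings actually captured
    (for example 0.90 to allow for node spin-up overhead).
    """
    if not 0 <= idle_hours_per_day <= 24:
        raise ValueError("idle_hours_per_day must be between 0 and 24")
    return instances * hourly_cost * idle_hours_per_day * days * realization


p3 = scale_to_zero_savings(3, 3.06, 16)
g5 = scale_to_zero_savings(5, 1.006, 20)
print(f"3 x p3.2xlarge idle 16 h/day: ${p3:,.0f}/month, ${p3 * 12:,.0f}/year")
print(f"5 x g5.xlarge idle 20 h/day:  ${g5:,.0f}/month, ${g5 * 12:,.0f}/year")
print(f"p3 example at 90% realization: ${scale_to_zero_savings(3, 3.06, 16, realization=0.90):,.0f}/month")
```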

Spot Instances for Training Jobs: The Checkpointing Strategy That Makes It Work

Running training jobs on Spot instances saves 60 to 70% on GPU compute. The reason most teams avoid it is fear of interruption. That fear is justified without checkpointing, and completely manageable with it.

AWS gives 2 minutes notice before Spot reclamation. Two minutes is enough time to save model state if your training framework handles it correctly.

Both PyTorch Lightning and the Hugging Face Trainer support checkpoint-on-interrupt hooks. Here is how to configure a Kubernetes Job to handle Spot interruptions with proper checkpointing:

    apiVersion: batch/v1
    kind: Job
    metadata:
      name: model-training
    spec:
      template:
        spec:
          terminationGracePeriodSeconds: 120
          nodeSelector:
            karpenter.sh/capacity-type: spot
          containers:
            - name: trainer
              image: your-training-image:latest
              command:
                - python
                - train.py
                - --checkpoint-dir=s3://your-bucket/checkpoints/
                - --resume-from-checkpoint=latest
                - --save-steps=500
              lifecycle:
                preStop:
                  exec:
                    command:
                      ["/bin/sh", "-c", "python save_checkpoint.py && sleep 90"]
          restartPolicy: OnFailure

The terminationGracePeriodSeconds: 120 gives the preStop hook the full 2-minute Spot notice window to save state. The --resume-from-checkpoint=latest flag ensures the job picks up from the last saved checkpoint rather than starting over after an interruption.
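On the training side, one way the process itself might react to termination is to catch SIGTERM, finish the current step, save, and exit. The Python sketch below uses only the standard library and a JSON file as a stand-in for real model state; `StopFlag`, `train`, and the file layout are hypothetical names for illustration, not the API of PyTorch Lightning or the Hugging Face Trainer.

```python
import json
import os
import signal


class StopFlag:
    """Set by the SIGTERM handler; polled by the training loop."""

    def __init__(self):
        self.requested = False

    def __call__(self, signum, frame):
        self.requested = True


def save_checkpoint(path, state):
    # Write to a temp file first so an interrupted save never corrupts
    # the last good checkpoint.
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)


def load_checkpoint(path):
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0}


def train(path, total_steps, save_every, stop, on_step=None):
    state = load_checkpoint(path)          # resume if a checkpoint exists
    while state["step"] < total_steps:
        state["step"] += 1                 # stand-in for one real training step
        if on_step is not None:
            on_step(state["step"])
        if stop.requested:
            save_checkpoint(path, state)   # final save inside the grace period
            return state["step"]
        if state["step"] % save_every == 0:
            save_checkpoint(path, state)   # periodic save, like --save-steps
    save_checkpoint(path, state)
    return state["step"]


def install_sigterm_handler(stop):
    # Kubernetes sends SIGTERM to the container when the pod is terminated.
    signal.signal(signal.SIGTERM, stop)
```

In a real job you would call `install_sigterm_handler(stop)` once at startup and pass the same `stop` object to the loop. Polling a flag between steps, rather than saving inside the signal handler, avoids writing a checkpoint in the middle of an optimizer update.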

A training job that takes 8 hours and gets interrupted twice (losing 30 minutes of progress each time) but runs entirely on Spot still saves 55 to 60% compared to running on-demand, even accounting for the repeated work. The math almost always favors Spot for training jobs longer than 2 hours.
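The interruption math can be checked with a short Python sketch. It assumes the only cost of an interruption is the recomputed work and expresses everything relative to the on-demand hourly price; the function name is made up.

```python
def spot_savings(job_hours, interruptions, hours_lost_each, spot_discount):
    """Fraction saved versus on-demand, counting recomputed work."""
    spot_hours = job_hours + interruptions * hours_lost_each
    on_demand_cost = job_hours                      # in units of the on-demand price
    spot_cost = spot_hours * (1 - spot_discount)
    return 1 - spot_cost / on_demand_cost


# The scenario above: 8-hour job, 2 interruptions, 30 minutes lost each time.
for discount in (0.60, 0.65):
    saved = spot_savings(8, 2, 0.5, discount)
    print(f"Spot discount {discount:.0%}: effective savings {saved:.1%}")
```

Raising `interruptions` until the result reaches zero shows how much rework a given discount can absorb before on-demand becomes cheaper.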

Spot GPU Price Table (May 2026)

Current Spot pricing ranges for the most common GPU instances on AWS. These fluctuate by region and time of day, but these ranges represent typical us-east-1 and us-west-2 pricing over the past 90 days:

| Instance | On-Demand | Spot Range | Typical Savings |
|---|---|---|---|
| p3.2xlarge | $3.06/hr | $0.92-1.22/hr | 60-70% |
| p3.8xlarge | $12.24/hr | $3.67-4.90/hr | 60-70% |
| g5.xlarge | $1.006/hr | $0.30-0.50/hr | 50-70% |
| g5.2xlarge | $1.212/hr | $0.36-0.61/hr | 50-70% |
| p4d.24xlarge | $32.77/hr | $9.83-14.80/hr | 55-70% |

The g5 family consistently offers the best Spot availability because of higher fleet capacity. The p4d.24xlarge has the most variable Spot pricing and lowest availability, so always configure Karpenter with on-demand fallback for large training runs on this instance type.

The Control Plane Cost Nobody Talks About

Teams with multiple clusters pay for control plane compute in ways that vary significantly by provider:

| Provider | Control Plane Pricing | For 5 Clusters |
|---|---|---|
| EKS | $0.10/hour = $72/month per cluster | $360/month |
| GKE Standard | $0.10/hour = $72/month per cluster | $360/month |
| GKE Autopilot | No control plane charge | $0/month |
| AKS | Free control plane | $0/month |

GKE Autopilot adds something more interesting than just a free control plane: you pay per pod-second of requested CPU, memory, and GPU rather than for underlying node capacity. For bursty AI workloads, this means you only pay while pods are actually running, not for the idle node capacity that Karpenter would eventually terminate, but only after some delay.

For a team running 5 EKS clusters, switching to AKS or GKE Autopilot saves $360/month in control plane fees alone, before touching a single workload optimization. Across a 3-year period, that is $12,960 in savings that needs no workload tuning, though the migration itself still has to be planned.

VPA Recommendations: The Safe Way to Right-Size Production Pods

The Vertical Pod Autoscaler (VPA) in Kubernetes can automatically adjust CPU and memory requests based on observed usage history. The problem with running it in Auto mode on AI workloads is that applying resource changes requires pod restarts, which interrupts running training jobs.

The pattern that works: run VPA in Recommendation mode only. It collects historical data and surfaces right-sizing recommendations without applying them automatically. Review the recommendations weekly, validate against recent peak usage patterns, and apply them during planned maintenance windows.

    apiVersion: autoscaling.k8s.io/v1
    kind: VerticalPodAutoscaler
    metadata:
      name: training-job-vpa
    spec:
      targetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: training-job-deployment
      updatePolicy:
        updateMode: "Off"
      resourcePolicy:
        containerPolicies:
          - containerName: trainer
            minAllowed:
              cpu: 1
              memory: 4Gi
            maxAllowed:
              cpu: 16
              memory: 128Gi

With updateMode: "Off", VPA only generates recommendations. Check kubectl describe vpa training-job-vpa to see what it recommends. Over 2 to 4 weeks of data collection, VPA recommendations typically reveal 30 to 50% request over-provisioning in AI workloads compared to actual peak consumption.
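To turn a recommendation into an over-provisioning percentage, you need to compare Kubernetes quantity strings such as `4500m` or `40Gi`. The Python sketch below handles only the common suffixes and uses invented request and recommendation values; the recommended figures would come from the `target` field that VPA reports for each container.

```python
_MEMORY_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40}


def parse_cpu(quantity):
    """'500m' -> 0.5 cores, '2' -> 2.0 cores."""
    q = str(quantity)
    return float(q[:-1]) / 1000 if q.endswith("m") else float(q)


def parse_memory(quantity):
    """'4Gi' -> bytes. Only binary suffixes are handled in this sketch."""
    q = str(quantity)
    for suffix, factor in _MEMORY_SUFFIXES.items():
        if q.endswith(suffix):
            return float(q[:-2]) * factor
    return float(q)


def over_provisioning(requested, recommended):
    """Fraction of the request that the recommendation says is unnecessary."""
    return (requested - recommended) / requested


# Hypothetical container: requests 8 CPU / 64Gi, VPA target 4500m / 40Gi.
cpu_waste = over_provisioning(parse_cpu("8"), parse_cpu("4500m"))
mem_waste = over_provisioning(parse_memory("64Gi"), parse_memory("40Gi"))
print(f"CPU over-provisioned by {cpu_waste:.0%}, memory by {mem_waste:.0%}")
```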

Namespace Cost Attribution: The Foundation of Accountability

You cannot optimize what you cannot measure at the right granularity. For Kubernetes AI clusters, the minimum viable labeling strategy covers four dimensions: team, project, environment, and model version.

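    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: gpt-inference-api
      labels:
        team: nlp-platform
        project: customer-support-ai
        environment: production
        model-version: gpt-j-6b-v2
    # Repeat the same labels under spec.template.metadata.labels so the pods
    # carry them; labels on the Deployment object do not propagate to its pods.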

Kubecost uses these labels to allocate costs by namespace, team, and project. Without them, you see aggregate cluster spend but cannot answer the question every engineering leader eventually asks: "Which team's model is responsible for the $85,000 spike last month?"

The allocation model in Kubecost charges each pod for the greater of its resource requests and its actual usage. A pod requesting 4 CPUs and 32GB RAM is allocated at least the cost of those resources for its running duration, even if it consumes far less. This is why fixing request inflation directly reduces the cost numbers that show up in chargeback reports, creating a visible incentive for teams to right-size their pods.
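A stripped-down version of label-based allocation looks like the Python sketch below. It bills on requests only, which is a simplification, and the per-GiB memory price and the pod records are hypothetical; it is meant to show the grouping logic, not to reproduce Kubecost's numbers.

```python
from collections import defaultdict

CPU_PRICE_PER_HOUR = 0.048      # $/vCPU-hour, the m5.4xlarge figure used earlier
MEM_PRICE_PER_GIB_HOUR = 0.006  # $/GiB-hour, illustrative assumption


def allocate_by_label(pods, label):
    """Sum request-based cost per value of the given label."""
    totals = defaultdict(float)
    for pod in pods:
        owner = pod["labels"].get(label, "unlabeled")
        hourly = (pod["cpu_request"] * CPU_PRICE_PER_HOUR
                  + pod["mem_request_gib"] * MEM_PRICE_PER_GIB_HOUR)
        totals[owner] += hourly * pod["hours"]
    return dict(totals)


pods = [
    {"labels": {"team": "nlp-platform"}, "cpu_request": 4, "mem_request_gib": 32, "hours": 720},
    {"labels": {"team": "vision"}, "cpu_request": 8, "mem_request_gib": 64, "hours": 100},
    {"labels": {}, "cpu_request": 2, "mem_request_gib": 8, "hours": 10},
]

for team, cost in allocate_by_label(pods, "team").items():
    print(f"{team}: ${cost:,.2f}")
```

The `unlabeled` bucket is the useful part: its size tells you how much spend nobody currently owns.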

The Descheduler: The Tool Most Teams Have Never Deployed

The Kubernetes Descheduler is a Kubernetes SIG Scheduling project that typically runs as a CronJob and evicts pods from underutilized nodes, allowing Karpenter or the Cluster Autoscaler to consolidate those nodes and terminate the empty ones.

The standard Kubernetes scheduler makes placement decisions once, at pod creation time. It never revisits those decisions. A pod placed on a node that later becomes underutilized stays there indefinitely. The Descheduler finds these situations and proactively moves pods to better-utilized nodes.

For AI clusters, the most relevant Descheduler plugin is HighNodeUtilization, which is designed to work alongside node autoscaling. (The similarly named LowNodeUtilization plugin does the opposite: it evicts pods from busy nodes to spread load onto underutilized ones.)

    apiVersion: "descheduler/v1alpha2"
    kind: "DeschedulerPolicy"
    profiles:
      - name: default
        pluginConfig:
          - name: "HighNodeUtilization"
            args:
              thresholds:
                cpu: 30
                memory: 30
                pods: 10
        plugins:
          balance:
            enabled:
              - "HighNodeUtilization"

This configuration treats a node as underutilized when its CPU, memory, and pod count are all below the listed thresholds, and evicts that node's pods so the scheduler can pack them onto fewer nodes. Utilization here is computed from pod requests, not live usage, and the packing works best when the scheduler scores nodes with the MostAllocated strategy. Combined with Karpenter's consolidation, the Descheduler closes the gap that Karpenter misses: pods that are running but on underutilized nodes that Karpenter cannot consolidate because the pods are technically schedulable where they are.
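The threshold check can be sketched in Python. This is a simplified reading of how a node gets classified as underutilized (every tracked resource below its threshold), using the cpu/memory/pods values from the policy above and invented node figures expressed as percentages of allocatable capacity.

```python
THRESHOLDS = {"cpu": 30, "memory": 30, "pods": 10}  # percent, from the policy above


def is_underutilized(node_pct, thresholds=THRESHOLDS):
    """True when every tracked resource is below its threshold."""
    return all(node_pct[resource] < limit for resource, limit in thresholds.items())


# Hypothetical request-based utilization per node, in percent.
nodes = {
    "gpu-node-a": {"cpu": 12, "memory": 18, "pods": 4},
    "gpu-node-b": {"cpu": 55, "memory": 20, "pods": 6},
    "gpu-node-c": {"cpu": 22, "memory": 25, "pods": 30},
}

candidates = [name for name, pct in nodes.items() if is_underutilized(pct)]
print("eviction candidates:", candidates)
```

Only `gpu-node-a` qualifies here: a single resource above its threshold is enough to keep a node off the list, which is why the pod-count threshold deserves as much attention as CPU and memory.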

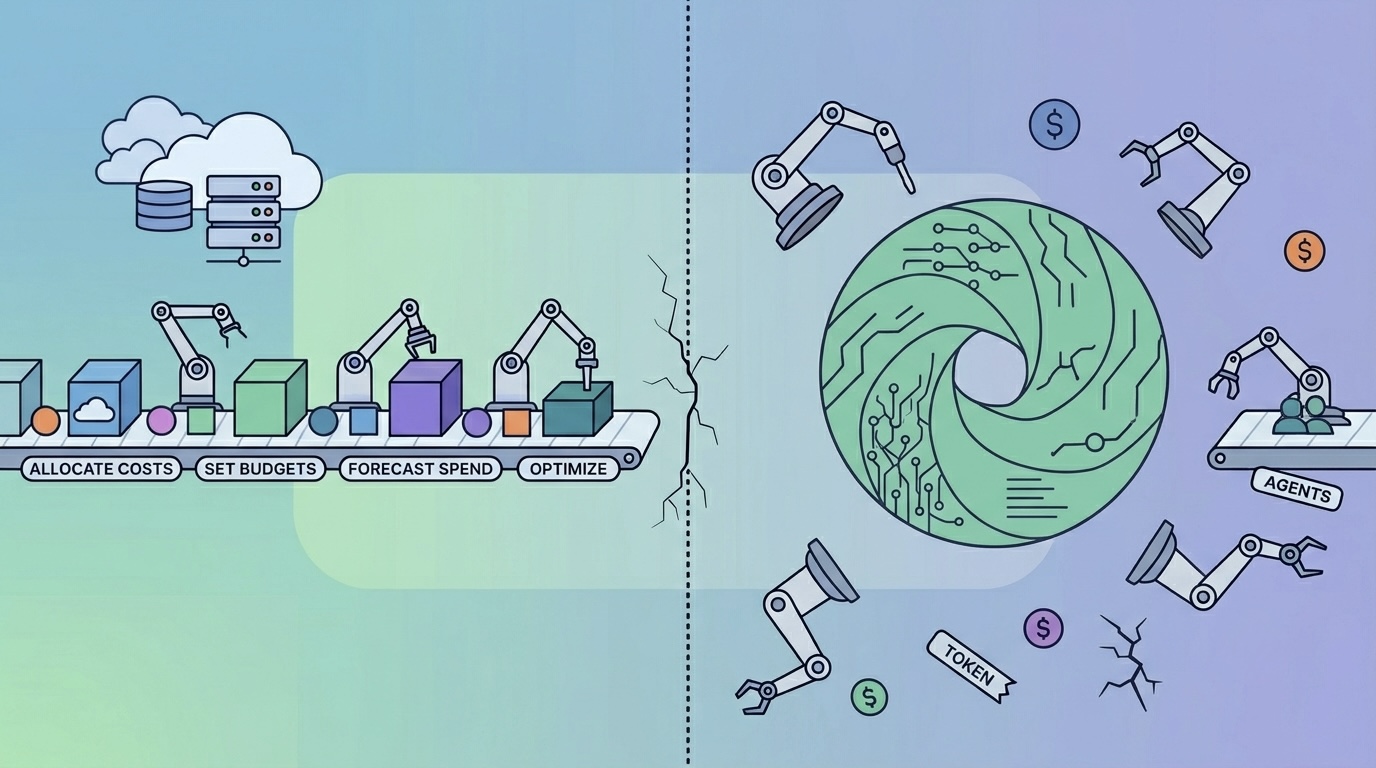

The FinOps Operating Model for AI Kubernetes Clusters

Technical optimizations work. They work even better when the organizational structure around them creates incentives and accountability.

The operating model that works for AI-heavy engineering organizations:

Weekly: GPU utilization review across all clusters. Surface any namespace with time-averaged GPU utilization below 25% for the week. Present these in the weekly engineering sync as a list with owner names attached. The visibility alone changes behavior within 2 to 3 weeks.

Bi-weekly: VPA recommendation review. Pull VPA recommendations for your top 10 highest-spend deployments. Validate against recent peak usage. Schedule right-sizing changes for the next sprint.

Monthly: Spot coverage analysis. For each GPU node pool, calculate the percentage of node-hours that ran on Spot vs On-Demand. Set a target of 60%+ Spot for training workloads and 40%+ for inference. Track progress monthly.

Per model deployment: Require every new model deployment to include a KEDA ScaledObject with scale-to-zero configured for non-production environments, and a minimum idle time threshold for production. Add cost approval gates for anything requesting more than 2 GPU hours per day.
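The monthly Spot coverage check is easy to automate. The Python sketch below uses the 60% and 40% targets from the list above; the pool names and node-hour figures are invented, and in practice they would come from your billing export or cost tool.

```python
SPOT_TARGETS = {"training": 0.60, "inference": 0.40}


def spot_coverage(spot_hours, on_demand_hours):
    """Share of node-hours that ran on Spot."""
    total = spot_hours + on_demand_hours
    return spot_hours / total if total else 0.0


def pools_below_target(pools, targets=SPOT_TARGETS):
    """Return (pool name, coverage) for pools that miss their workload's target."""
    flagged = []
    for pool in pools:
        coverage = spot_coverage(pool["spot_hours"], pool["on_demand_hours"])
        if coverage < targets[pool["workload"]]:
            flagged.append((pool["name"], round(coverage, 2)))
    return flagged


pools = [
    {"name": "train-a100", "workload": "training", "spot_hours": 900, "on_demand_hours": 300},
    {"name": "infer-g5", "workload": "inference", "spot_hours": 200, "on_demand_hours": 520},
]
print(pools_below_target(pools))
```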

For teams that want structured FinOps governance applied to AI workloads, FinOps consulting provides the frameworks and tooling to implement this operating model without building it from scratch.

Complete Configuration Reference

| Optimization | Tool | Expected Savings | Complexity |
|---|---|---|---|
| DCGM GPU utilization monitoring | Prometheus + DCGM Exporter | Visibility only; enables all other optimizations | Low |
| VPA Recommendation mode | VPA | 20-50% compute cost reduction | Low |
| Karpenter with consolidation | Karpenter | 25-45% node cost reduction | Medium |
| KEDA scale-to-zero for inference | KEDA | 50-70% for sporadic endpoints | Medium |
| MIG partitioning for small inference | NVIDIA device plugin + MIG | 50-85% GPU cost reduction | Medium-High |
| Spot for training with checkpointing | Karpenter + training framework | 60-70% training compute cost | Medium |
| Descheduler for node consolidation | Descheduler CronJob | 10-20% additional node cost | Low |
| Namespace cost attribution | Kubecost labels | Enables chargeback accountability | Low |

The right starting point is not the highest-savings item on this list. It is the lowest-complexity item that gives you visibility: DCGM monitoring and Kubecost namespace attribution. Every other optimization builds on being able to see what is actually happening.

Connecting K8s Cost Optimization to Broader Cloud Cost Strategy

Kubernetes is one layer of a larger cloud cost picture. The GPU instances your pods run on are AWS, GCP, or Azure resources subject to the same commitment and pricing optimization strategies as any other compute.

Kubernetes-level optimizations (right-sizing, scale-to-zero, Karpenter consolidation) reduce the number of GPU node-hours you consume. Cloud-level optimizations (Savings Plans, Committed Use Discounts, Reserved Instances) reduce the per-hour cost of the GPU node-hours you do consume. Both levers need to be pulled.

For the full picture on cloud-level cost optimization across AWS, Azure, and GCP, the real-time cloud cost optimization tools guide covers how to instrument visibility at the infrastructure layer, and the AWS cost optimization playbook details specific EC2 and GPU instance commitment strategies that apply directly to EKS clusters.

For teams running AI workloads alongside RAG pipelines and vector databases, the patterns for RAG and vector database cost optimization address the data layer costs that often dwarf the training costs people fixate on.

For cloud operations support in implementing these configurations in production clusters, the operational patterns here translate directly to real infrastructure changes.

Combined Savings: The Full Math

Here is the complete math for a realistic scenario: a team running 5 p3.2xlarge GPU instances 24/7 for mixed training and inference workloads.

Before optimization: 5 × p3.2xlarge × $3.06/hr × 24hr × 30 days = $11,016/month

After scale-to-zero (16 hours idle per day): 5 instances × $3.06/hr × 16hr × 30 = -$7,344/month eliminated

After Spot for training (remaining 8 active hours): the 3 instances that stay on p3.2xlarge cost 3 × $3.06/hr × 8hr × 30 = $2,203/month; × 65% Spot savings = -$1,432/month additional reduction

After right-sizing 2 instances from p3.2xlarge to g5.xlarge: 2 × ($3.06 - $1.006) × 8hr × 30 = -$986/month

Plus those 2 right-sized instances also get Spot: 2 × $1.006 × 8 × 30 × 0.60 = -$290/month additional

Total monthly savings: ~$10,052 → 91% reduction

The remaining spend of ~$964/month covers 3 p3.2xlarge instances running 8 hours/day on Spot pricing, plus 2 g5.xlarge instances running 8 hours/day on Spot. That is sufficient compute for the same workload that previously cost $11,016.

Even conservative implementation (12 hours scale-to-zero + Spot only for batch training jobs) saves 60%+. The 91% figure requires full commitment to all three strategies, but 60% is achievable in the first 2-week sprint with just scale-to-zero and Spot for training.
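The whole scenario can be reproduced in a few lines of Python, which makes it easy to swap in your own instance counts and discounts. The function and key names are made up for this sketch; the prices, hours, and Spot percentages are the ones used in the walkthrough above.

```python
P3_PRICE = 3.06    # p3.2xlarge on-demand, $/hour
G5_PRICE = 1.006   # g5.xlarge on-demand, $/hour
DAYS = 30


def combined_savings(active_hours=8, p3_spot_discount=0.65, g5_spot_discount=0.60):
    idle_hours = 24 - active_hours
    baseline = 5 * P3_PRICE * 24 * DAYS
    steps = {
        # All 5 instances stop paying for idle hours.
        "scale_to_zero": 5 * P3_PRICE * idle_hours * DAYS,
        # The 3 instances that stay on p3.2xlarge move to Spot.
        "spot_p3": 3 * P3_PRICE * active_hours * DAYS * p3_spot_discount,
        # 2 instances move from p3.2xlarge to g5.xlarge ...
        "right_size": 2 * (P3_PRICE - G5_PRICE) * active_hours * DAYS,
        # ... and those 2 g5.xlarge instances also run on Spot.
        "spot_g5": 2 * G5_PRICE * active_hours * DAYS * g5_spot_discount,
    }
    total = sum(steps.values())
    return baseline, steps, total, baseline - total


baseline, steps, total, remaining = combined_savings()
print(f"baseline:  ${baseline:,.0f}/month")
for name, amount in steps.items():
    print(f"  {name:<14} -${amount:,.0f}")
print(f"saved:     ${total:,.0f}/month ({total / baseline:.0%})")
print(f"remaining: ${remaining:,.0f}/month")
```

Calling `combined_savings(active_hours=12)` gives a feel for the more conservative case where endpoints stay up half the day.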

The Bottom Line on AI Kubernetes Cost Optimization

The gap between what engineering teams pay for AI infrastructure and what they should pay is not primarily a tooling problem. The tools exist, most of them are open source, and the configurations are not secret.

The gap exists because teams move fast to build and deploy models, and the cost optimization work gets scheduled for "later." Later becomes never. The GPU bill grows. Finance asks questions. Engineering scrambles.

The teams that avoid this cycle build cost optimization into how they deploy AI workloads from the start: DCGM monitoring before the first GPU node comes online, KEDA scale-to-zero as part of every inference deployment template, Karpenter instead of static node groups from day one, and VPA in Recommendation mode as a permanent fixture of cluster operations.

None of these are large initiatives. Each is a configuration decision made once that pays dividends indefinitely. The compounding effect of getting all four right is the difference between an AI infrastructure budget that grows with your models and one that grows ahead of your business.

For broader context on cloud infrastructure cost control, see the cloud cost optimization for modern infrastructure guide.

External resources: Karpenter documentation, KEDA documentation, NVIDIA MIG user guide, and DCGM Exporter for Kubernetes.