$6.25 Per Terabyte Sounds Reasonable Until Someone Runs SELECT * on Your 50TB Table

BigQuery is one of those services that feels almost free when you start. The first terabyte of queries each month is free. Storage is $0.02/GB. There is no infrastructure to manage, no clusters to size, no indexes to maintain. You write SQL, you get results in seconds. It is genuinely impressive.

Then your data team grows. Your tables grow. Someone joins a 500GB fact table to a 2TB dimension table without a partition filter, and a single query costs $15.60. Another analyst runs a dashboard that refreshes every 15 minutes, scanning 200GB each time. That is $1.25 per refresh, $120/day, $3,600/month from one dashboard.

We have seen BigQuery bills go from $200/month to $15,000/month in a single quarter, with no change in the underlying data volume. The data did not get more expensive. The queries got lazier.

The good news: BigQuery is one of the most optimizable cloud services we work with at LeanOps. Partitioning, clustering, materialized views, and the right pricing model can cut BigQuery costs by 50-80% without changing what your team actually does with the data. This post breaks down every BigQuery cost in 2026, models real-world bills, and gives you the specific optimizations that make the biggest difference.

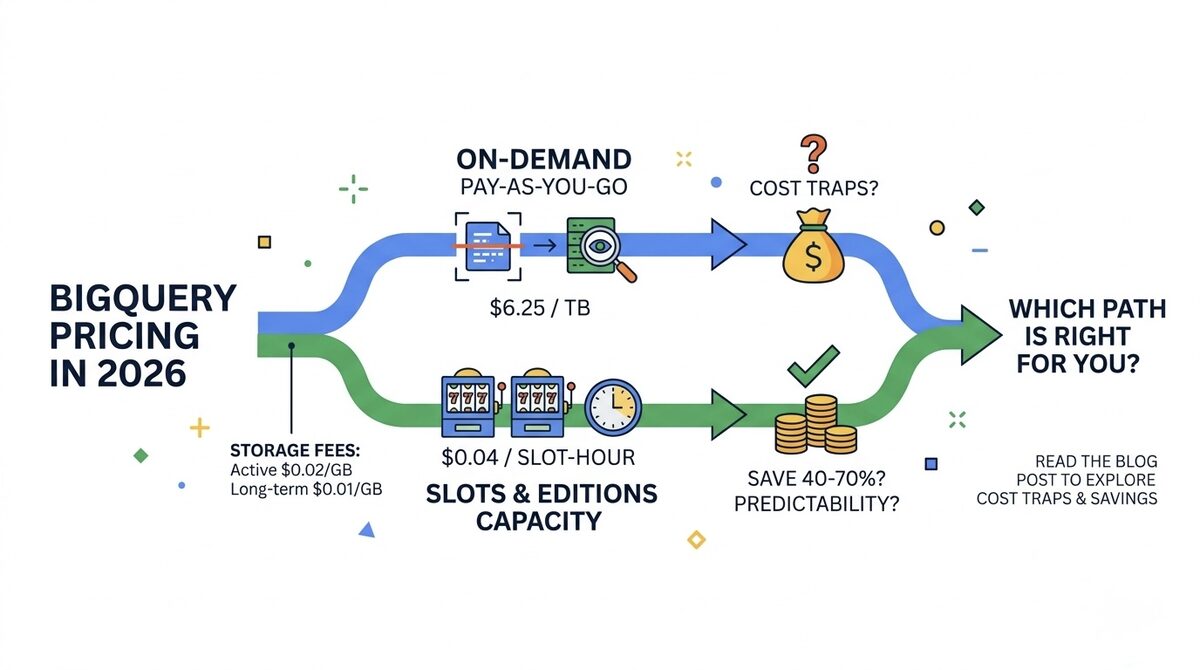

BigQuery Compute Pricing: On-Demand vs Editions

BigQuery offers two fundamentally different pricing models for compute (query processing). Choosing the wrong one is the single most expensive mistake teams make.

On-Demand Pricing

| Component | Rate | Free Tier |

|---|---|---|

| Queries (data scanned) | $6.25 per TB | 1 TB/month |

| Multi-statement queries | $6.25 per TB (each statement) | Included in 1TB free |

| BI Engine (in-memory) | $0.0416 per GB-hour | 1 GB free |

| Scheduled queries | Same per-TB rate | N/A |

How it works: You pay based on the number of bytes your query reads from tables. A query that scans 500GB costs $3.13. A query that scans 50GB costs $0.31. If your table is partitioned and your WHERE clause limits the scan to 5% of the table, you pay for 5% of the data.

Best for: Teams scanning under 20TB/month, exploratory analytics with unpredictable query patterns, development and testing environments, teams that want zero commitment.

BigQuery Editions (Capacity Pricing)

BigQuery Editions replaced the old flat-rate pricing in 2023. You purchase compute capacity measured in "slots" (virtual CPUs for query processing).

| Edition | Slot-Hour Rate | Autoscale | Baseline Commitment | Key Features |

|---|---|---|---|---|

| Standard | $0.04/slot-hour | Yes | None (pay-as-you-go) | Basic analytics, no materialized views |

| Enterprise | $0.06/slot-hour | Yes | Optional (1yr saves 25%) | Materialized views, row-level security, streaming |

| Enterprise Plus | $0.10/slot-hour | Yes | Optional (1yr/3yr) | CMEK, multi-region failover, advanced security |

How slots work: One slot = one virtual CPU that can process queries. A simple query might use 10 slots for 3 seconds. A complex JOIN across billions of rows might use 2,000 slots for 30 seconds. Autoscaling adjusts your slot count based on demand, up to a maximum you configure.

The math that determines which model wins:

On-demand: 1TB costs $6.25, regardless of how fast it runs.

Editions: Cost = (slots used) x (time in hours) x (per-slot-hour rate).

At $0.04/slot-hour (Standard), 100 slots running for 1 hour costs $4.00. Those 100 slots can process roughly 5-15TB of data per hour depending on query complexity. So the effective per-TB cost on Editions is approximately $0.27-0.80/TB when slots are fully utilized.

That is 8-23x cheaper than on-demand at $6.25/TB. The catch: if your slots sit idle, you are paying for unused capacity.

When to Switch from On-Demand to Editions

| Monthly Query Volume | On-Demand Cost | Editions Cost (Standard, avg 50% utilization) | Savings |

|---|---|---|---|

| 5 TB/month | $31.25 | ~$60 (min baseline) | On-demand wins |

| 20 TB/month | $125 | ~$90 | Editions saves 28% |

| 50 TB/month | $312.50 | ~$140 | Editions saves 55% |

| 100 TB/month | $625 | ~$200 | Editions saves 68% |

| 500 TB/month | $3,125 | ~$600 | Editions saves 81% |

The crossover point is roughly 15-25 TB/month depending on your query patterns and slot utilization. Below that, on-demand is simpler and cheaper. Above that, Editions saves serious money.

BigQuery Storage Pricing

Storage is billed separately from compute and applies whether or not you query the data.

Storage Rates

| Storage Type | Rate | Trigger |

|---|---|---|

| Active storage | $0.02/GB/month ($20/TB) | Tables modified in last 90 days |

| Long-term storage | $0.01/GB/month ($10/TB) | Tables untouched for 90+ days |

| Time travel storage | $0.02/GB/month | Default 7-day window, configurable |

| Fail-safe storage | $0.02/GB/month | 7-day non-configurable window |

Key details:

- Long-term pricing is automatic. If a table (or partition) is not modified for 90 consecutive days, its storage price drops by 50%. You do not need to do anything. This makes BigQuery surprisingly cheap for archival data.

- Time travel lets you query data as it existed up to 7 days ago. You pay for the delta (changed data) stored for this feature. For tables with frequent updates, time travel storage can add 20-50% to your storage bill.

- Fail-safe is a non-configurable 7-day recovery window after time travel expires. You cannot turn it off, and you pay $0.02/GB for it. For large tables with frequent updates, this is a hidden cost.

- Partitioned tables get long-term pricing per partition. A daily-partitioned table with 365 partitions will have 275 partitions at the $0.01 long-term rate (those older than 90 days) and 90 at the $0.02 active rate. This is another reason to always partition.

Storage Cost Example

A 10TB analytics warehouse with:

- 2TB active data (recent 90 days): 2,000GB x $0.02 = $40/month

- 8TB long-term data: 8,000GB x $0.01 = $80/month

- Time travel overhead (~500GB of deltas): 500GB x $0.02 = $10/month

- Total storage: $130/month

That is genuinely cheap for 10TB of fully queryable data. Storage is rarely the problem in BigQuery. Compute is where the money goes.

Data Ingestion Pricing

Getting data into BigQuery has several pricing options depending on the method.

| Ingestion Method | Rate | Notes |

|---|---|---|

| Batch load (from GCS) | Free | No charge for loading from Cloud Storage |

| Batch load (from local) | Free | Up to 10GB/day per project |

| Streaming inserts (legacy) | $0.01 per 200MB | Real-time row-by-row inserts |

| Storage Write API | $0.025 per 200MB | Newer, more efficient streaming |

| BigQuery Data Transfer | Free (transfer service) | Charges may apply for source extraction |

| Cross-region data transfer | $0.01-0.08/GB | Depends on source/destination regions |

The most important thing to know: Batch loading from Google Cloud Storage is completely free. If you can tolerate even a 5-minute delay (micro-batching instead of true streaming), you can avoid streaming insert costs entirely. For most analytics workloads, micro-batching is the right answer.

Streaming inserts at $0.01/200MB sound cheap, but at scale they add up. A pipeline ingesting 1TB/day via streaming inserts costs $50/day ($1,500/month). The same data batch-loaded from GCS costs $0.

Real-World Cost Modeling

Let us model BigQuery costs for three realistic scenarios.

Scenario 1: Small Analytics Team (Startup)

- 500GB data warehouse

- 5 analysts running ad-hoc queries

- ~5TB scanned per month

- Batch loading from GCS

| Component | Monthly Cost |

|---|---|

| Storage (500GB, mostly active) | $10 |

| Compute (5TB on-demand, minus 1TB free) | $25 |

| Ingestion (batch from GCS) | $0 |

| Total | $35/month |

BigQuery is absurdly cheap for small teams. The 1TB free tier covers exploratory queries, and batch loading is free. Hard to beat $35/month for a fully managed data warehouse.

Scenario 2: Growth-Stage Company

- 10TB data warehouse

- 20 analysts and data scientists

- ~80TB scanned per month

- Mix of batch and streaming ingestion (200GB/day streaming)

| Component | Monthly Cost |

|---|---|

| Storage (10TB, 70% long-term) | $130 |

| Compute (80TB on-demand) | $500 |

| OR Compute (Editions Standard, 100 slots avg) | $292 |

| Streaming inserts (6TB/month) | $300 |

| Total (on-demand) | $930/month |

| Total (Editions) | $722/month |

At this scale, switching to Editions saves $208/month (22%). But the real savings come from reducing the 80TB scanned. If partitioning and clustering reduce scans by 60%, on-demand drops to $200/month, making Editions unnecessary.

Scenario 3: Enterprise Data Platform

- 100TB data warehouse

- 100+ users (analysts, scientists, BI dashboards)

- ~500TB scanned per month

- Heavy streaming (2TB/day)

- BI Engine for dashboards (50GB reservation)

| Component | Monthly Cost |

|---|---|

| Storage (100TB, 80% long-term) | $1,200 |

| Compute (Editions Enterprise, 500 slots avg) | $2,190 |

| Streaming (60TB/month Storage Write API) | $7,500 |

| BI Engine (50GB) | $1,500 |

| Total | $12,390/month |

For comparison, the same 500TB on on-demand pricing would cost $3,125 just in compute. At 500 slots average utilization on Enterprise Editions, compute is $2,190. But the big cost driver at this scale is streaming ingestion at $7,500/month. Converting even 50% of that streaming to micro-batch loading saves $3,750/month.

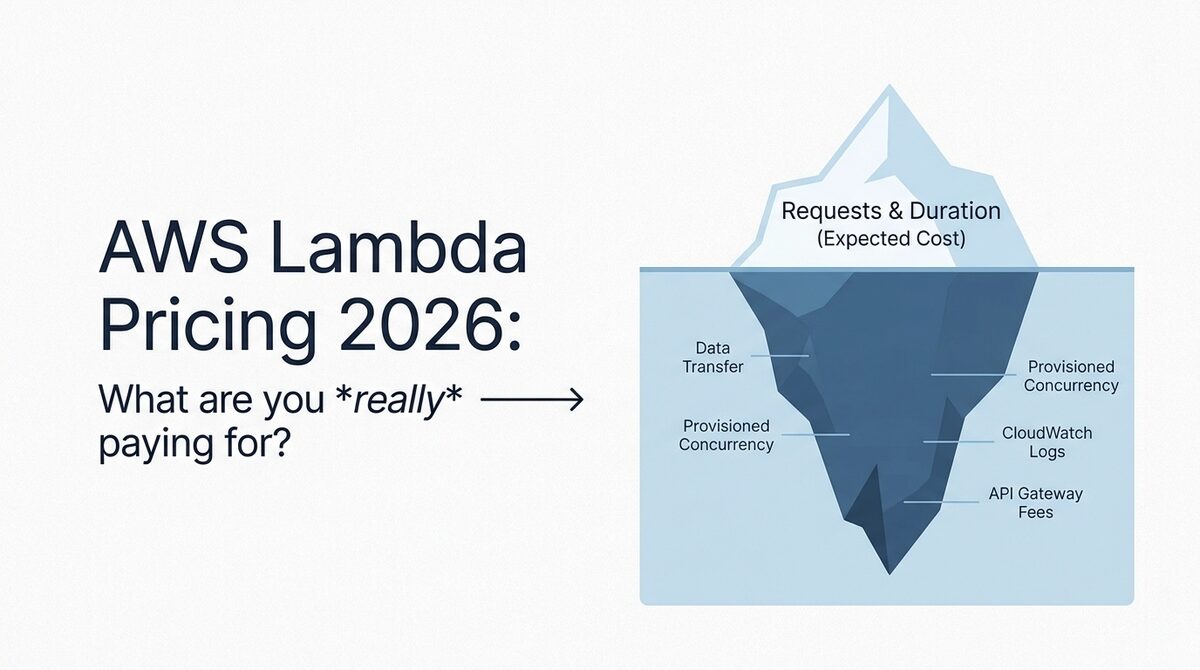

The 5 Biggest BigQuery Cost Traps (And How to Avoid Them)

1. SELECT * on Large Tables

Every column you SELECT causes BigQuery to scan the entire column across all rows (BigQuery uses columnar storage). A SELECT * on a 5TB table with 200 columns costs $31.25 even if you only look at the first 10 rows in the preview.

Fix: Always specify columns. SELECT col1, col2, col3 FROM table scans only those three columns. If col1, col2, and col3 together represent 5% of the table width, your query costs $1.56 instead of $31.25.

2. Missing Partition Filters

A table partitioned by date but queried without a date filter still scans all partitions. The partition exists but provides zero cost benefit unless your WHERE clause references it.

Fix: Set require_partition_filter = true on large partitioned tables. This forces all queries to include a partition filter, preventing accidental full-table scans.

ALTER TABLE `project.dataset.events`

SET OPTIONS (require_partition_filter = true);

3. Dashboards That Refresh Too Frequently

A BI dashboard with 10 panels, each scanning 50GB, refreshing every 15 minutes:

- Per refresh: 500GB = $3.13

- Per hour: $12.50

- Per day: $300

- Per month: $9,000

Fix: Use materialized views (Enterprise Edition), BI Engine (caches results in memory), or reduce refresh frequency. Most dashboards do not need 15-minute freshness. Hourly or 4x daily refresh cuts this cost by 75-96%.

4. Streaming When Batch Would Work

Streaming inserts cost $0.01/200MB ($50/TB). Batch loading from GCS is free. If your data can tolerate even a 5-minute delay, micro-batch loading saves 100% of ingestion costs.

Fix: Use Dataflow or a simple Cloud Function to buffer records into GCS files, then batch-load them into BigQuery every 5-10 minutes. This pattern gives near-real-time freshness at zero ingestion cost.

5. No Cost Controls or Budgets

BigQuery on-demand has no default spending cap. A single runaway query or a misconfigured scheduled query can scan hundreds of TB before anyone notices. We have seen a single rogue query cost $2,000 because it joined two large tables without filters in a loop.

Fix: Set project-level and user-level custom quotas:

-- Set maximum bytes billed per query (1TB limit)

ALTER PROJECT `my-project`

SET OPTIONS (

`region-us.default_query_job_timeout_ms` = 600000,

`region-us.default_query_bytes_billed_limit` = 1099511627776

);

Also set billing alerts at 50%, 80%, and 100% of your expected monthly BigQuery budget.

BigQuery Optimization Playbook: Cut Costs 50-80%

Partitioning (Saves 60-90% on Query Costs)

Partition every table over 1GB by the most commonly filtered column (usually a date/timestamp).

| Partition Type | Best For | Example |

|---|---|---|

| Date/Timestamp | Event data, logs, time-series | Partition by event_date |

| Integer range | Sequential IDs, tenant IDs | Partition by customer_id range |

| Ingestion time | Data without a natural partition key | Partition by _PARTITIONTIME |

A 10TB table partitioned by day with 365 days of data: querying a single day scans ~27GB instead of 10TB. That is $0.17 instead of $62.50 per query.

Clustering (Saves 30-70% on Top of Partitioning)

Clustering sorts data within each partition by up to 4 columns. When you filter or join on clustered columns, BigQuery reads only the relevant data blocks.

CREATE TABLE `project.dataset.events`

PARTITION BY DATE(event_timestamp)

CLUSTER BY user_id, event_type

AS SELECT * FROM `project.dataset.raw_events`;

Partitioning + clustering together typically reduce bytes scanned by 80-95% for well-structured queries.

Materialized Views (Saves Repeated Query Costs)

If multiple users run the same expensive aggregation daily, a materialized view computes it once and serves cached results for subsequent queries.

CREATE MATERIALIZED VIEW `project.dataset.daily_revenue`

PARTITION BY date

CLUSTER BY product_category

AS

SELECT

DATE(order_timestamp) as date,

product_category,

SUM(revenue) as total_revenue,

COUNT(*) as order_count

FROM `project.dataset.orders`

GROUP BY 1, 2;

BigQuery automatically refreshes materialized views when base data changes, and queries that match the view pattern are automatically rewritten to use the view (zero-cost reads from cached results).

Query Scheduling and Caching

BigQuery caches query results for 24 hours. If the same exact query runs again and the underlying data has not changed, the cached result is returned at zero cost. Design your scheduled queries and dashboards to leverage this:

- Run expensive scheduled queries once, then have dashboards read from the results table

- Use

CREATE TABLE ... AS SELECTfor expensive transformations, then query the output table

BigQuery vs Redshift vs Snowflake: Quick Cost Comparison

| Feature | BigQuery | Redshift Serverless | Snowflake |

|---|---|---|---|

| On-demand compute | $6.25/TB scanned | $0.375/RPU-hour | Credits ($2-4/credit) |

| Minimum cost when idle | $0 | $0 | $0 (auto-suspend) |

| Storage | $0.02/GB active | $0.024/GB (RMS) | $23/TB/month |

| Streaming ingestion | $0.01/200MB | Included | $0.005/file + compute |

| Free tier | 1TB queries + 10GB storage | None | $400 in credits (trial) |

| Auto-optimization | Automatic clustering, caching | Manual SORTKEY/DISTKEY | Automatic clustering |

| Separation of compute/storage | Yes (native) | Yes (serverless) | Yes (native) |

The honest take: BigQuery wins for teams that want zero infrastructure management and have unpredictable query patterns. Snowflake wins for multi-cloud data teams that need more control over compute resources. Redshift Serverless wins for teams already deep in the AWS ecosystem who want tight integration with other AWS analytics services.

None of them is universally cheapest. The right choice depends on your cloud provider ecosystem, query patterns, and team expertise.

The Bottom Line

BigQuery pricing is straightforward on paper ($6.25/TB on-demand, $0.02/GB storage) but complex in practice. The gap between a well-optimized BigQuery deployment and an unoptimized one can be 10-20x in monthly cost.

The three highest-impact optimizations, in order:

- Partition and cluster every table over 1GB (reduces query costs 60-95%)

- Switch to Editions if you consistently scan over 20TB/month (saves 40-70%)

- Replace streaming inserts with micro-batch loading where possible (saves 100% of ingestion cost)

If your BigQuery bill is climbing and nobody on your team has audited partition coverage, query patterns, or pricing model fit, there is almost certainly 40-60% waste waiting to be eliminated. Our cloud cost optimization team includes GCP-certified engineers who specialize in BigQuery and Dataflow cost optimization. Start with a free Cloud Waste Assessment and we will include BigQuery in the analysis.

Further reading: