$0.008/vCPU-Hour vs $0.096/vCPU-Hour. Same AWS. Same Workload. Different Architecture Choices.

The cost difference between well-architected and naively-deployed parallel computing on AWS is not 20-30%. It is 10-12x. We have seen genomics pipelines costing $4,800/run on on-demand instances drop to $400/run on optimized Spot Graviton architecture. We have seen video rendering farms spending $15,000/month move to $2,200/month with identical throughput and negligible increase in completion time.

These are not theoretical savings. They come from three architectural decisions that most teams get wrong because AWS's documentation buries the cost-optimal path under 47 different service pages:

- Instance selection: Graviton (ARM) instances deliver 20% better price-performance than x86 for most parallel workloads, yet most teams default to m5/m6i because that is what the tutorial used.

- Pricing model: Spot instances provide 60-90% discount and are perfectly suited for parallel work (jobs can retry on interruption), yet most teams use on-demand "because Spot sounds risky."

- Orchestration: AWS Batch is free and purpose-built for parallel job scheduling, yet teams pay for Step Functions, self-managed Kubernetes, or hand-rolled SQS+Lambda architectures that cost more and do less.

This post gives you the exact cost model for parallel computing on AWS in 2026 at three scales (100, 1,000, and 10,000 vCPU-hours), compares every orchestration method, and provides the architecture blueprint that achieves $0.008-0.015/vCPU-hour.

AWS Parallel Computing: The Cost Landscape in 2026

Instance Pricing for Parallel Workloads (us-east-1, May 2026)

| Instance | vCPU | RAM (GB) | On-Demand/hr | Spot/hr (avg) | Per vCPU-hr (On-Demand) | Per vCPU-hr (Spot) |

|---|---|---|---|---|---|---|

| c7g.xlarge (Graviton) | 4 | 8 | $0.1088 | $0.034 | $0.0272 | $0.0085 |

| c7g.4xlarge (Graviton) | 16 | 32 | $0.4352 | $0.131 | $0.0272 | $0.0082 |

| c7g.16xlarge (Graviton) | 64 | 128 | $1.7408 | $0.522 | $0.0272 | $0.0082 |

| c6i.xlarge (x86) | 4 | 8 | $0.136 | $0.048 | $0.0340 | $0.0120 |

| c6i.4xlarge (x86) | 16 | 32 | $0.544 | $0.163 | $0.0340 | $0.0102 |

| m6i.xlarge (General) | 4 | 16 | $0.192 | $0.058 | $0.0480 | $0.0145 |

| r6g.xlarge (Memory, Graviton) | 4 | 32 | $0.2016 | $0.060 | $0.0504 | $0.0150 |

| p4d.24xlarge (GPU) | 96 + 8xA100 | 1152 | $32.77 | $12.00 | $0.341 | $0.125 |

| hpc7g.16xlarge (HPC) | 64 | 128 | $1.6832 | N/A (limited) | $0.0263 | N/A |

Key insight: Graviton c7g instances deliver the lowest per-vCPU-hour cost ($0.0082 Spot) while also providing 20-40% better single-threaded performance than equivalent x86 for many workloads (compilation, genomics alignment, Monte Carlo simulation). You save money AND finish faster.
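The per-vCPU-hour columns are simply the hourly price divided by the vCPU count. A minimal sketch of that arithmetic, using a few rows copied from the table above (the `InstancePrice` class is a hypothetical helper, not an AWS API):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstancePrice:
    """One row of the pricing table (hypothetical helper for illustration)."""
    name: str
    vcpus: int
    on_demand_hr: float  # USD per instance-hour
    spot_hr: float       # USD per instance-hour (average quoted in the table)

    def per_vcpu_hour(self, spot: bool = True) -> float:
        hourly = self.spot_hr if spot else self.on_demand_hr
        return hourly / self.vcpus


# Rows copied from the table above.
PRICES = [
    InstancePrice("c7g.xlarge", 4, 0.1088, 0.034),
    InstancePrice("c7g.4xlarge", 16, 0.4352, 0.131),
    InstancePrice("c6i.xlarge", 4, 0.136, 0.048),
    InstancePrice("m6i.xlarge", 4, 0.192, 0.058),
]

cheapest = min(PRICES, key=lambda p: p.per_vcpu_hour(spot=True))
for p in PRICES:
    print(f"{p.name:12s} on-demand ${p.per_vcpu_hour(False):.4f}  "
          f"spot ${p.per_vcpu_hour(True):.4f}")
print("cheapest Spot per vCPU-hour:", cheapest.name)
```

Swapping in current prices for your own region is the only change needed to re-run the comparison.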

Orchestration Layer Costs

| Service | Pricing | Cost for 10,000 Jobs | Best For |

|---|---|---|---|

| AWS Batch | Free (pay only for compute) | $0 | Batch parallel, HPC, ML training |

| Step Functions (Standard) | $0.025/1,000 state transitions | $0.25 (minimal) | Complex workflows with branching |

| Step Functions (Express) | $1.00/1M requests + $0.00001667/GB-second | Variable | Short, high-volume workflows |

| Lambda (self-orchestrated) | $0.20/1M requests + duration | $2-20 | Short tasks under 15 min |

| EKS + Job Queue | $0.10/hour control plane + compute | $73/month + compute | K8s-native teams |

| SQS + EC2 Workers | $0.40/1M messages | $0.004 | Custom architectures |

AWS Batch's zero orchestration cost is the reason it wins for pure parallel computing. You are not paying for job scheduling, queue management, retry logic, or compute environment scaling. You pay only for the EC2 instances (or Fargate tasks) your jobs actually run on.

Cost Modeling: Three Scales of Parallel Computing

Scenario 1: 100 vCPU-Hours (Small Batch Job)

Example: Nightly data processing pipeline, CI/CD test parallelization, small rendering batch.

| Architecture | Compute Cost | Orchestration Cost | Total | Per vCPU-Hour |

|---|---|---|---|---|

| AWS Batch + c7g Spot | $0.82 | $0 | $0.82 | $0.0082 |

| AWS Batch + c7g On-Demand | $2.72 | $0 | $2.72 | $0.0272 |

| Lambda (256MB, 60sec tasks) | $2.50 | $0.02 | $2.52 | $0.0252 |

| Step Functions + Lambda | $2.50 | $0.25 | $2.75 | $0.0275 |

| EKS + Spot Nodes | $0.82 | $2.44 (share of the $73/month control plane, amortized) | $3.26 | $0.0326 |

| EC2 On-Demand (m6i) | $4.80 | $0 | $4.80 | $0.0480 |

At 100 vCPU-hours, the absolute cost difference is small ($0.82 vs $4.80), but the per-vCPU-hour efficiency establishes the pattern. AWS Batch + Graviton Spot is 6x more cost-efficient than naive on-demand EC2.
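Every row in these scenario tables follows the same formula: compute cost is vCPU-hours times the per-vCPU-hour rate, and the orchestration cost is added on top. A small sketch of that model, with `run_cost` as a hypothetical helper and the rates taken from the pricing table:

```python
def run_cost(vcpu_hours: float, price_per_vcpu_hour: float,
             orchestration: float = 0.0) -> dict:
    """Total and effective per-vCPU-hour cost of one run (all values in USD)."""
    compute = vcpu_hours * price_per_vcpu_hour
    total = compute + orchestration
    return {
        "compute": compute,
        "orchestration": orchestration,
        "total": total,
        "per_vcpu_hour": total / vcpu_hours,
    }


# Scenario 1: 100 vCPU-hours.
batch_spot = run_cost(100, 0.0082)  # AWS Batch + c7g Spot
naive = run_cost(100, 0.0480)       # EC2 On-Demand (m6i)
ratio = naive["total"] / batch_spot["total"]

print(f"Batch + c7g Spot: ${batch_spot['total']:.2f}")
print(f"m6i On-Demand:    ${naive['total']:.2f}")
print(f"ratio:            {ratio:.1f}x")
```

The same function reproduces the larger scenarios by changing `vcpu_hours` and passing a non-zero `orchestration` value where one applies.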

Scenario 2: 1,000 vCPU-Hours (Medium HPC Workload)

Example: Genomics secondary analysis (GATK), Monte Carlo financial simulation, ML hyperparameter sweep.

| Architecture | Compute Cost | Orchestration Cost | Total | Per vCPU-Hour |

|---|---|---|---|---|

| AWS Batch + c7g Spot | $8.20 | $0 | $8.20 | $0.0082 |

| AWS Batch + c7g On-Demand | $27.20 | $0 | $27.20 | $0.0272 |

| AWS Batch + c6i Spot (x86) | $12.00 | $0 | $12.00 | $0.0120 |

| AWS ParallelCluster + Spot | $9.50 | $2.50 (head node) | $12.00 | $0.0120 |

| EKS + Karpenter Spot | $8.50 | $73 (control plane) | $81.50 | $0.0815 |

| Self-managed EC2 On-Demand | $48.00 | $5.00 (management) | $53.00 | $0.0530 |

At 1,000 vCPU-hours, the savings become significant: $8.20 vs $53.00 (6.5x cheaper). Note that EKS becomes expensive for one-off batch workloads because the $73/month control plane cost dominates. EKS makes sense only if the cluster runs other workloads too.
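To see why the fixed control plane fee dominates at low volume, it helps to spread it over the vCPU-hours run in a month. The sketch below assumes the cluster runs nothing else, and uses the $0.0085/vCPU-hour rate implied by the EKS + Karpenter row ($8.50 for 1,000 vCPU-hours):

```python
EKS_CONTROL_PLANE_MONTHLY = 73.0       # USD per month, figure quoted in the tables
KARPENTER_SPOT_PER_VCPU_HOUR = 0.0085  # USD, implied by $8.50 / 1,000 vCPU-hours


def eks_effective_rate(monthly_vcpu_hours: float) -> float:
    """Per-vCPU-hour cost once the fixed control plane fee is spread over usage."""
    fixed_share = EKS_CONTROL_PLANE_MONTHLY / monthly_vcpu_hours
    return KARPENTER_SPOT_PER_VCPU_HOUR + fixed_share


for volume in (1_000, 10_000, 100_000):
    print(f"{volume:>7,} vCPU-hours/month -> ${eks_effective_rate(volume):.4f}/vCPU-hour")
```

The fixed fee adds $0.073 per vCPU-hour at 1,000 vCPU-hours a month but well under a tenth of a cent at 100,000, which is why sharing the cluster with other workloads changes the picture.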

Scenario 3: 10,000 vCPU-Hours (Large-Scale HPC)

Example: Full genome sequencing pipeline, CFD simulation, movie VFX rendering, large-scale ML training.

| Architecture | Compute Cost | Orchestration Cost | Total | Per vCPU-Hour | Time (64-node) |

|---|---|---|---|---|---|

| AWS Batch + c7g.16xl Spot | $82 | $0 | $82 | $0.0082 | ~2.5 hours |

| AWS Batch + c7g.16xl On-Demand | $272 | $0 | $272 | $0.0272 | ~2.5 hours |

| AWS ParallelCluster + hpc7g | $263 | $10 (head node) | $273 | $0.0273 | ~2.5 hours |

| AWS Batch + c6i Spot (x86) | $120 | $0 | $120 | $0.0120 | ~3.1 hours |

| EKS + Karpenter Spot | $85 | $73 | $158 | $0.0158 | ~2.5 hours |

| Self-managed EC2 On-Demand | $480 | $15 | $495 | $0.0495 | ~2.5 hours |

At 10,000 vCPU-hours, the optimized architecture saves $413 per run compared to naive on-demand ($82 vs $495). If you run this daily, that is $12,390 in savings over a 30-day month, or roughly $150,000/year.
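The projection is a straight multiplication of the per-run difference by the number of runs. A short sketch, assuming one run per day and a 30-day month (`projected_savings` is a hypothetical helper):

```python
def projected_savings(optimized_per_run: int, naive_per_run: int, runs: int) -> int:
    """Savings in USD over a given number of runs."""
    return (naive_per_run - optimized_per_run) * runs


per_run = projected_savings(82, 495, runs=1)
monthly = projected_savings(82, 495, runs=30)   # one run per day, 30-day month
yearly = projected_savings(82, 495, runs=365)   # one run per day

print(f"per run: ${per_run:,}")
print(f"monthly: ${monthly:,}")
print(f"yearly:  ${yearly:,}")
```

Teams that run weekly rather than daily can change `runs` to see how quickly the absolute figure shrinks even though the ratio stays the same.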

The Cost-Optimal Architecture: AWS Batch + Graviton Spot

Here is the blueprint for the cheapest reliable parallel computing on AWS in 2026:

Architecture Diagram

┌─────────────┐    ┌─────────────┐    ┌──────────────────────┐
│ S3 (input)  │───▶│  AWS Batch  │───▶│   Spot Fleet (c7g)   │
│             │    │  Job Queue  │    │ Multi-AZ, auto-scale │
└─────────────┘    └─────────────┘    └──────────────────────┘
                          │                      │
                          ▼                      ▼
                   ┌─────────────┐    ┌──────────────────────┐
                   │  Job Defn   │    │S3 (output/checkpoint)│
                   │ (container) │    │                      │
                   └─────────────┘    └──────────────────────┘

Key Configuration Decisions

1. Compute Environment: Mixed Spot Fleet

{
  "computeEnvironment": {
    "type": "MANAGED",
    "computeResources": {
      "type": "SPOT",
      "allocationStrategy": "SPOT_PRICE_CAPACITY_OPTIMIZED",
      "instanceTypes": [
        "c7g.xlarge",
        "c7g.2xlarge",
        "c7g.4xlarge",
        "c7g.8xlarge"
      ],
      "minvCpus": 0,
      "maxvCpus": 10000,
      "spotIamFleetRole": "arn:aws:iam::ACCOUNT:role/AWSBatchSpotFleetRole"
    }
  }
}

Why this works:

- SPOT_PRICE_CAPACITY_OPTIMIZED selects from the pools with the highest available capacity while also weighing price, minimizing interruption

- Multiple instance sizes allow AWS to find capacity across more pools

- minvCpus: 0 means you pay nothing when no jobs are queued

- maxvCpus: 10000 allows massive burst without pre-provisioning
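For teams that create the environment from code, the settings above map onto the request shape that boto3's Batch client accepts in `create_compute_environment`. The sketch below only builds the request dictionary, so it runs without AWS credentials. The function name, environment name, subnet IDs, security group IDs, and role names are all placeholders, not values from this article:

```python
from typing import Optional, Sequence


def spot_compute_environment(
    name: str,
    subnets: Sequence[str],
    security_group_ids: Sequence[str],
    instance_role: str,
    spot_fleet_role: Optional[str] = None,
    max_vcpus: int = 10000,
) -> dict:
    """Build a managed Spot compute environment request (hypothetical helper)."""
    resources = {
        "type": "SPOT",
        "allocationStrategy": "SPOT_PRICE_CAPACITY_OPTIMIZED",
        "minvCpus": 0,           # scale to zero when the queue is empty
        "maxvCpus": max_vcpus,   # upper bound on burst capacity
        "instanceTypes": ["c7g.xlarge", "c7g.2xlarge", "c7g.4xlarge", "c7g.8xlarge"],
        "subnets": list(subnets),  # one subnet per AZ you want Spot capacity from
        "securityGroupIds": list(security_group_ids),
        "instanceRole": instance_role,
    }
    if spot_fleet_role is not None:
        resources["spotIamFleetRole"] = spot_fleet_role
    return {
        "computeEnvironmentName": name,
        "type": "MANAGED",
        "state": "ENABLED",
        "computeResources": resources,
    }


request = spot_compute_environment(
    name="spot-graviton-env",                            # placeholder
    subnets=["subnet-aaa", "subnet-bbb", "subnet-ccc"],  # placeholders
    security_group_ids=["sg-placeholder"],               # placeholder
    instance_role="ecsInstanceRole",                     # placeholder
)
print(request["computeResources"]["allocationStrategy"])
```

With credentials configured, the dictionary could be passed as `boto3.client("batch").create_compute_environment(**request)`. Supplying subnets in several Availability Zones is what gives the allocation strategy more pools to choose from.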

2. Job Definition: Containerized with Checkpointing

{
  "jobDefinition": {
    "type": "container",
    "containerProperties": {
      "image": "ACCOUNT.dkr.ecr.us-east-1.amazonaws.com/parallel-worker:latest",
      "vcpus": 4,
      "memory": 8192,
      "command": [
        "python",
        "worker.py",
        "--checkpoint-s3",
        "s3://bucket/checkpoints/"
      ],
      "environment": [{ "name": "CHECKPOINT_INTERVAL", "value": "300" }]
    },
    "retryStrategy": {
      "attempts": 3
    },
    "timeout": {
      "attemptDurationSeconds": 7200
    }
  }
}

Why checkpointing matters: Spot instances can be interrupted with a 2-minute notice. If your job checkpoints every 5 minutes to S3, you lose at most 5 minutes of work per interruption. With 3 retry attempts, a 2-hour job completes reliably even with 1-2 interruptions.
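The article does not show `worker.py`, so the following is only a sketch of the checkpoint-and-resume pattern it describes. To stay runnable without AWS access it writes checkpoints to a local directory instead of S3. `CheckpointStore`, `run_job`, and `Interrupted` are hypothetical names, and a real worker would swap the file operations for S3 reads and writes:

```python
import json
import os
import tempfile
import time


class CheckpointStore:
    """Local-directory stand-in for an S3 checkpoint prefix (hypothetical)."""

    def __init__(self, directory: str):
        self.path = os.path.join(directory, "checkpoint.json")

    def load(self) -> dict:
        if not os.path.exists(self.path):
            return {"next_item": 0}
        with open(self.path) as f:
            return json.load(f)

    def save(self, state: dict) -> None:
        tmp = self.path + ".tmp"
        with open(tmp, "w") as f:
            json.dump(state, f)
        os.replace(tmp, self.path)  # atomic swap: a kill mid-write keeps the old checkpoint


def run_job(store, total_items, process, checkpoint_interval=300.0, clock=time.monotonic):
    """Process items in order, resuming from the last saved checkpoint."""
    state = store.load()
    last_saved = clock()
    for i in range(state["next_item"], total_items):
        process(i)
        state["next_item"] = i + 1
        if clock() - last_saved >= checkpoint_interval:
            store.save(state)
            last_saved = clock()
    store.save(state)
    return state


class Interrupted(Exception):
    """Simulates a Spot interruption in this demo."""


processed = []


def make_worker(fail_at=None):
    def process(i):
        if i == fail_at:
            raise Interrupted
        processed.append(i)
    return process


with tempfile.TemporaryDirectory() as d:
    store = CheckpointStore(d)
    try:
        # First attempt dies at item 6; interval 0 checkpoints after every item.
        run_job(store, 10, make_worker(fail_at=6), checkpoint_interval=0)
    except Interrupted:
        pass
    # The retry picks up at item 6 instead of starting over.
    final = run_job(store, 10, make_worker(), checkpoint_interval=0)

print(processed, final)
```

With a 300-second interval, as in the job definition above, the retry would redo whatever was processed since the last save, so each item should be safe to process twice.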

3. Array Jobs for Embarrassingly Parallel Work

# Submit 10,000 parallel jobs with one API call
aws batch submit-job \
  --job-name "genome-alignment" \
  --job-queue "spot-graviton-queue" \
  --job-definition "parallel-worker:7" \
  --array-properties size=10000

Each array job runs independently with its index available as AWS_BATCH_JOB_ARRAY_INDEX. Your worker reads this index to determine which shard of the input to process.
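One way a worker might turn its index into a shard is to split the input list into contiguous slices of near-equal size. In this sketch `shard_for_index` and the `SHARD_COUNT` variable are assumptions: the sketch expects you to set `SHARD_COUNT` yourself in the job definition to match the array size, and only `AWS_BATCH_JOB_ARRAY_INDEX` comes from the article.

```python
import os


def shard_for_index(items: list, array_index: int, array_size: int) -> list:
    """Return the contiguous slice of `items` owned by one array child.

    Shard sizes differ by at most one, and every item lands in exactly one shard.
    """
    base, extra = divmod(len(items), array_size)
    start = array_index * base + min(array_index, extra)
    end = start + base + (1 if array_index < extra else 0)
    return items[start:end]


# AWS Batch sets AWS_BATCH_JOB_ARRAY_INDEX for each child of an array job.
# SHARD_COUNT is a hypothetical variable this sketch expects in the job definition.
index = int(os.environ.get("AWS_BATCH_JOB_ARRAY_INDEX", "0"))
shard_count = int(os.environ.get("SHARD_COUNT", "3"))

inputs = [f"sample-{n:04d}.fastq" for n in range(10)]  # placeholder file names
my_shard = shard_for_index(inputs, index, shard_count)
print(f"child {index} of {shard_count} processes {my_shard}")
```

When the array size equals the number of input files, each child gets exactly one file and the helper reduces to `items[index]`.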

Orchestration Comparison: When to Use What

AWS Batch: Best for Pure Parallel Compute

Use when:

- Jobs are compute-heavy (minutes to hours per task)

- Work is embarrassingly parallel (each job independent)

- Spot tolerance is acceptable (can checkpoint and retry)

- You want zero orchestration cost

- Job count is high (hundreds to thousands)

Cost advantage: Free orchestration + native Spot management + automatic scaling to zero between workloads.

Step Functions: Best for Complex Workflows

Use when:

- Workflow has conditional branching (if job A fails, run job B)

- Tasks mix compute with AWS service calls (S3, DynamoDB, SQS)

- Individual steps are short (under 15 minutes each)

- You need visual workflow monitoring and debugging

- State must persist between steps

Cost at scale: 10,000 state transitions = $0.25. Cheap for workflow logic, but compute still costs separately (usually Lambda or Fargate).

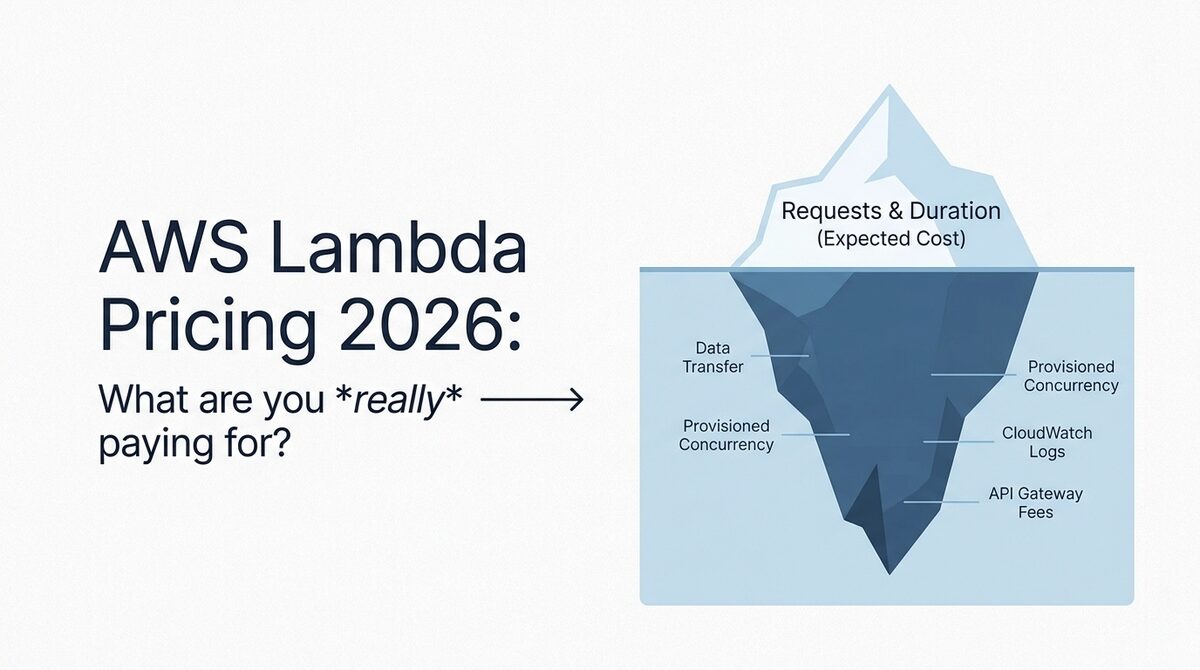

Lambda: Best for Short Parallel Tasks

Use when:

- Individual tasks complete in under 15 minutes

- Memory needs are under 10GB per task

- Cold start latency is acceptable (or use provisioned concurrency)

- Tasks are event-driven (S3 upload triggers processing)

- You want per-millisecond billing (no idle capacity)

Cost efficiency for parallel work:

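- 256MB, 60-second tasks: about $0.0000042 per second of execution, or roughly $0.00025 per task (15 GB-seconds at $0.0000166667/GB-second, plus the request charge)

- At 100,000 tasks/day: roughly $25/day (about $750/month)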

- Equivalent EC2 compute: $0.30-0.80/day (cheaper at scale but requires management)

Lambda wins for high-volume, short-duration parallel work (image thumbnailing, PDF processing, API fan-out). It loses for anything over 15 minutes or requiring more than 10GB memory.

EKS with Karpenter: Best for Teams Already on Kubernetes

Use when:

- You already run an EKS cluster for other workloads

- Parallel work needs GPU (Karpenter provisions GPU nodes on-demand)

- Team has Kubernetes expertise

- You want to use Kubernetes Jobs/CronJobs for scheduling

- Workload mixes long-running services with batch jobs

Hidden cost: The $73/month EKS control plane and the operational overhead of managing Kubernetes make this the worst choice for teams that would only use K8s for batch. Only efficient when the cluster serves multiple purposes.

Instance Selection Guide for Parallel Workloads

CPU-Bound (Genomics, Simulation, Compilation)

| Recommendation | Instance | Why |

|---|---|---|

| Best cost-efficiency | c7g (Graviton) | 20% cheaper, often 20-40% faster for single-thread |

| Best absolute performance | c7i.metal-48xl (x86) | Highest clock speed for latency-sensitive HPC |

| Best for tightly-coupled MPI | hpc7g.16xlarge | EFA networking, cluster placement group |

Memory-Bound (Analytics, Graph Processing, In-Memory Databases)

| Recommendation | Instance | Why |

|---|---|---|

| Best cost-efficiency | r7g (Graviton) | $0.015/vCPU-hour Spot, high memory:CPU ratio |

| Best total memory | x2gd.metal | 1TB RAM, Graviton, local NVMe |

| Cheapest per-GB RAM | r6g Spot | $0.060/hour for 32GB (4 vCPU) |

GPU-Bound (ML Training, Rendering, Molecular Dynamics)

| Recommendation | Instance | Why |

|---|---|---|

| Best cost/TFLOP (training) | p4d.24xlarge Spot | 8x A100, $12/hr Spot vs $32.77 on-demand |

| Best for inference batching | g5.xlarge Spot | 1x A10G, $0.40/hr Spot |

| Best for rendering | g6.xlarge | Ada Lovelace GPU, good single-GPU perf |

IO-Bound (Data Processing, ETL, Log Analysis)

| Recommendation | Instance | Why |

|---|---|---|

| Best cost-efficiency | c7gd (Graviton + local NVMe) | Local SSD avoids EBS IOPS charges |

| Highest throughput | i4i.metal | 30TB local NVMe, massive IO bandwidth |

| Best for S3-heavy pipelines | Any (S3 Express One Zone) | Use S3 Express for low-latency object access |

The Five Cost Optimization Levers (Ordered by Impact)

1. Spot Instances (Saves 60-90%)

This is the single biggest lever. Parallel workloads are ideal for Spot because:

- Jobs can be retried on interruption (embarrassingly parallel)

- Checkpointing makes interruption cheap (lose minutes, not hours)

- Multiple instance types provide deep Spot pools

- AWS Batch handles Spot lifecycle automatically

Real-world interruption rates: In 2026, c7g Spot instances in us-east-1 experience roughly 5-10% interruption rate per month. If interruptions are spread evenly across the month, the probability that a given 2-hour job is interrupted is well under 1% (on the order of 0.01-0.03%, since 2 hours is about 1/365 of a month). With 3 retries, your effective failure rate is near zero.

2. Graviton/ARM Instances (Saves 20% + Performance Bonus)

Graviton instances cost 20% less AND deliver better performance for many workloads:

| Workload Type | c7g vs c6i Performance | Cost Saving |

|---|---|---|

| Single-threaded compute | +15-25% faster | 20% cheaper |

| Java/JVM workloads | +10-20% faster | 20% cheaper |

| Compression/encoding | +20-40% faster | 20% cheaper |

| Python numerical (NumPy) | Comparable | 20% cheaper |

| Legacy x86-only binaries | N/A (incompatible) | N/A |

The only reason NOT to use Graviton is if your code has x86-specific dependencies (x86 SIMD intrinsics, closed-source x86 binaries, or Windows containers).

3. Right-Sized Instance Selection (Saves 15-30%)

Using general-purpose m6i when your workload is CPU-bound (should be c7g) wastes 40% of your spend on RAM you never use. Conversely, using compute-optimized c7g for a memory-heavy workload forces you to over-provision vCPUs to get enough RAM.

Rule of thumb:

- CPU-bound (high CPU, low memory): c7g family

- Memory-bound (low CPU, high memory): r7g family

- Balanced: m7g family (only when genuinely balanced)

- GPU: p-family (training), g-family (inference/rendering)
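For the three CPU families, the rule of thumb can be reduced to a memory-per-vCPU check, since c7g, m7g, and r7g provide 2, 4, and 8 GiB per vCPU respectively. A sketch under that assumption, with `pick_family` as a hypothetical helper that ignores GPU, network, and local-disk needs:

```python
# GiB of RAM per vCPU for each Graviton family, smallest ratio first.
FAMILIES = [("c7g", 2.0), ("m7g", 4.0), ("r7g", 8.0)]


def pick_family(peak_vcpus: float, peak_memory_gib: float) -> str:
    """Pick the leanest family whose memory:vCPU ratio covers the measured peak."""
    ratio = peak_memory_gib / peak_vcpus
    for family, gib_per_vcpu in FAMILIES:
        if ratio <= gib_per_vcpu:
            return family
    # Above 8 GiB per vCPU none of the three fits without buying extra vCPUs;
    # r7g is returned as the closest match.
    return "r7g"


print(pick_family(4, 8))    # CPU-bound profile
print(pick_family(4, 16))   # balanced profile
print(pick_family(4, 30))   # memory-bound profile
```

The inputs should come from measured peak usage of a representative job rather than from what the job definition currently requests.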

4. Scale to Zero Between Workloads (Saves Idle Cost)

AWS Batch with minvCpus: 0 automatically terminates instances when the job queue is empty. If your parallel workload runs 4 hours/day, you pay for 4 hours, not 24. This sounds obvious but many teams leave EC2 instances running 24/7 for workloads that are active 2-6 hours/day.

Annual waste from not scaling to zero:

- 4 c7g.4xlarge running 24/7: $15,200/year

- Same instances running only during 4-hour batch window: $2,533/year

- Savings: $12,667/year (83% reduction)
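These figures can be checked from the on-demand price in the pricing table ($0.4352/hour for c7g.4xlarge). The sketch below lands within rounding of the numbers above; the small differences suggest the article rounded the annual totals:

```python
C7G_4XLARGE_ON_DEMAND = 0.4352  # USD per hour, from the pricing table


def annual_cost(instances: int, hourly: float, hours_per_day: float) -> float:
    """Yearly cost in USD of running `instances` for `hours_per_day` every day."""
    return instances * hourly * hours_per_day * 365


always_on = annual_cost(4, C7G_4XLARGE_ON_DEMAND, 24)
windowed = annual_cost(4, C7G_4XLARGE_ON_DEMAND, 4)
saved = always_on - windowed
saved_pct = 100 * saved / always_on

print(f"24/7:           ${always_on:,.0f}/year")
print(f"4-hour window:  ${windowed:,.0f}/year")
print(f"savings:        ${saved:,.0f}/year ({saved_pct:.0f}%)")
```

The percentage depends only on the ratio of active hours to 24, so a 6-hour window saves 75% regardless of instance type.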

5. S3 Express One Zone for Data-Intensive Pipelines (Saves 50% on Latency, Reduces Compute Time)

If your parallel jobs read and write heavily from S3, S3 Express One Zone (single-digit millisecond latency, up to 10x faster data access than S3 Standard) can reduce total job duration by 30-50% for IO-bound workloads. Shorter duration means less compute cost, even though S3 Express storage is more expensive per GB.

When it pays off: Parallel workloads reading 100GB+ of input data where S3 GET latency is a bottleneck. The compute time saved (and therefore cost saved) typically exceeds the higher S3 Express storage cost.

Real-World Use Case: Genomics Pipeline Cost Optimization

A genomics company running secondary analysis (BWA alignment + GATK variant calling) on 30x whole genome sequences:

| Metric | Before Optimization | After Optimization |

|---|---|---|

| Architecture | Self-managed EC2 m5.4xlarge on-demand | AWS Batch c7g.4xlarge Spot |

| Per-genome cost | $47.50 | $8.20 |

| Per-genome time | 4.2 hours | 3.1 hours (Graviton faster) |

| Monthly volume (200 genomes) | $9,500/month | $1,640/month |

| Annual savings | -- | $94,320/year |

The changes: Graviton (20% cheaper + faster), Spot (70% discount), compute-optimized instead of general-purpose (15% savings), and S3 Express for reference genome reads (reduced IO wait). Total: 83% cost reduction with 26% faster completion.
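The summary percentages follow directly from the table. A short sketch that recomputes them from the per-genome cost, per-genome time, and monthly volume:

```python
GENOMES_PER_MONTH = 200

before_cost, after_cost = 47.50, 8.20   # USD per genome
before_hours, after_hours = 4.2, 3.1    # hours per genome

monthly_before = before_cost * GENOMES_PER_MONTH
monthly_after = after_cost * GENOMES_PER_MONTH
annual_savings = (monthly_before - monthly_after) * 12

cost_reduction_pct = 100 * (1 - after_cost / before_cost)
time_reduction_pct = 100 * (1 - after_hours / before_hours)

print(f"monthly: ${monthly_before:,.0f} -> ${monthly_after:,.0f}")
print(f"annual savings: ${annual_savings:,.0f}")
print(f"cost reduction: {cost_reduction_pct:.0f}%, time reduction: {time_reduction_pct:.0f}%")
```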

Common Mistakes That Waste Money

Mistake 1: Using Lambda for Jobs Over 5 Minutes

Lambda's per-millisecond billing sounds efficient, but for jobs over 5 minutes with consistent resource needs, EC2 Spot is 3-5x cheaper. Lambda's value is sub-minute, bursty, event-driven work, not sustained parallel compute.

Mistake 2: Single Instance Type in Spot Fleet

Requesting only c7g.4xlarge limits your Spot pool to one capacity bucket. If that pool is low, you get interrupted frequently or cannot launch at all. Always specify 4-6 instance sizes across at least 3 AZs.

Mistake 3: No Checkpointing

Without checkpointing, a Spot interruption at 95% completion means 100% rework. With 5-minute checkpoints to S3, the worst case is 5 minutes of rework. The S3 PUT cost for checkpointing is negligible ($0.000005 per checkpoint).

Mistake 4: Over-Provisioning Container Resources

AWS Batch jobs requesting 16 vCPU / 32GB when they use 4 vCPU / 8GB at peak wastes 75% of capacity. Profile your workload first: run a job at the smallest viable size and measure actual CPU/memory utilization before scaling your job definition.

Mistake 5: Ignoring Data Transfer Costs

A 10,000-job parallel workload where each job reads 1GB from S3 and writes 500MB generates 15TB of S3 transfer. Within the same region this is free, but cross-region S3 access at $0.02/GB adds $300 per run. Always colocate your compute and data in the same region (and same AZ when using S3 Express).
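The transfer estimate is job count times bytes moved per job times the per-GB rate. A sketch using the figures from the paragraph above (`transfer_cost` is a hypothetical helper, and 1 TB is counted as 1,000 GB):

```python
def transfer_cost(jobs: int, read_gb: float, write_gb: float, usd_per_gb: float):
    """Return (total GB moved, cost in USD) for one run."""
    total_gb = jobs * (read_gb + write_gb)
    return total_gb, total_gb * usd_per_gb


total_gb, cross_region = transfer_cost(10_000, read_gb=1.0, write_gb=0.5, usd_per_gb=0.02)
_, same_region = transfer_cost(10_000, read_gb=1.0, write_gb=0.5, usd_per_gb=0.0)

print(f"data moved:   {total_gb / 1000:.0f} TB")
print(f"cross-region: ${cross_region:,.2f} per run")
print(f"same region:  ${same_region:,.2f} per run")
```

At the Spot rates discussed earlier, a cross-region charge of this size can exceed the compute bill for the run itself.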

The Bottom Line

Parallel computing on AWS is either one of the cheapest compute workloads you can run (at $0.008/vCPU-hour with Spot Graviton) or one of the most wasteful (at $0.048+/vCPU-hour with on-demand general-purpose instances). The difference is not compute power. It is architecture.

The cost-optimal recipe for 2026:

- AWS Batch for orchestration (free)

- Graviton c7g for CPU-bound work (20% cheaper + faster)

- Spot instances with multi-type fleet (60-90% off)

- Checkpointing to S3 every 5 minutes (makes Spot reliable)

- Scale to zero between workloads (no idle cost)

If your parallel computing bill exceeds $5,000/month and you are not using all five of these optimizations, you are overpaying by at least 50%. Probably more.

