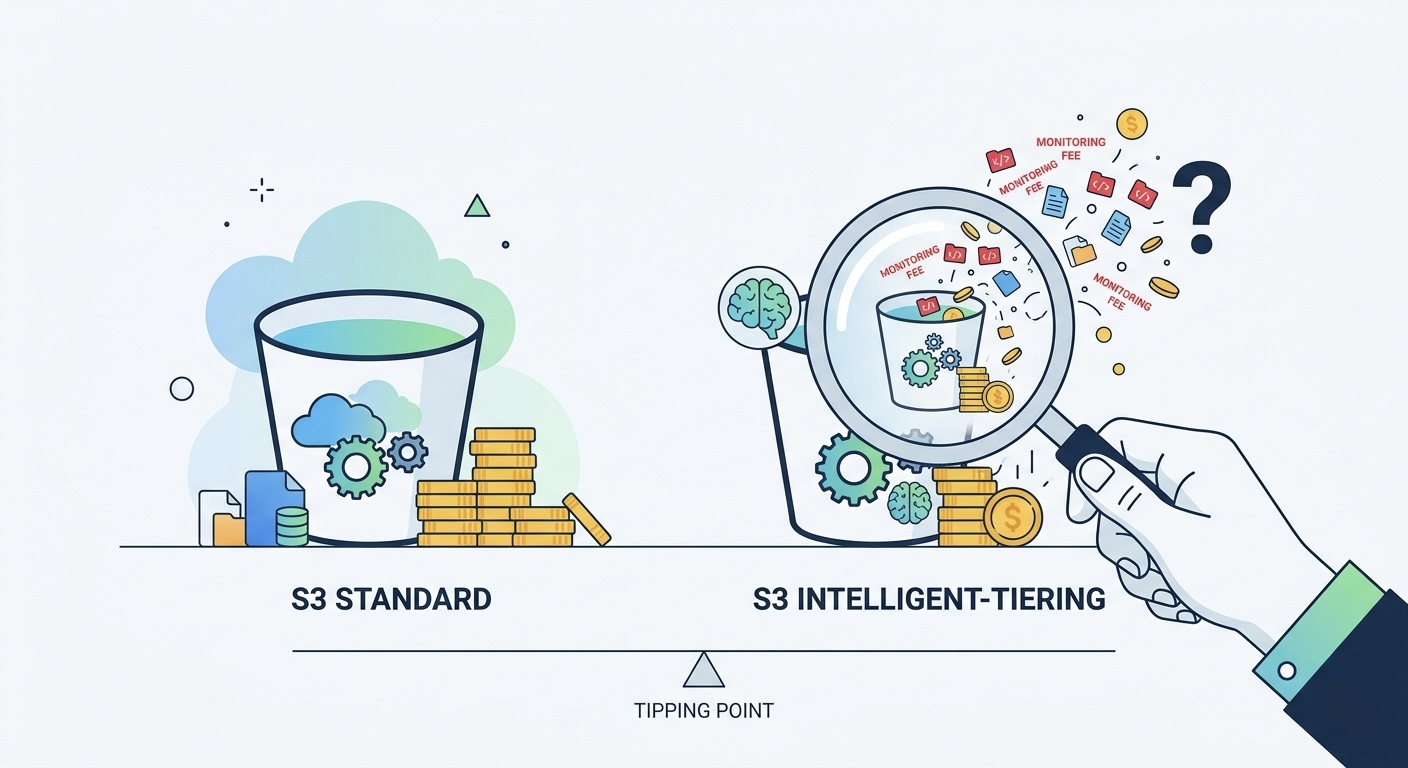

The "Set It and Forget It" Trap That Inflates Your S3 Bill

AWS markets Intelligent-Tiering as automatic cost optimization. The pitch is simple: objects move between access tiers automatically, and you save without lifting a finger. No retrieval fees. No minimum storage duration. Sounds like free money.

It is not.

After reviewing over 140 S3 buckets across our client base, we found that 61% of buckets using Intelligent-Tiering were paying more than they would on Standard storage. The culprit is almost always the same: the per-object monitoring fee eating savings that never materialize because objects are either too small, accessed too frequently, or both.

The monitoring fee is $0.0025 per 1,000 objects per month. That sounds trivial until you have 50 million log fragments, JSON chunks, or image thumbnails sitting in a bucket. Suddenly you are paying $125/month in monitoring fees for objects that AWS will never actually transition because they are under 128KB.

This post breaks down the exact math so you can decide whether Intelligent-Tiering is actually saving you money, or whether you are subsidizing AWS's automation layer for nothing.

How S3 Intelligent-Tiering Actually Works (The Parts AWS Glosses Over)

S3 Intelligent-Tiering has five internal access tiers:

| Tier | Activates After | Storage Cost per Month (us-east-1) | Retrieval Fee |
|---|---|---|---|
| Frequent Access | Default | $0.023/GB | None |
| Infrequent Access | 30 days no access | $0.0125/GB | None |
| Archive Instant Access | 90 days no access | $0.004/GB | None |
| Archive Access | 90+ days (opt-in) | $0.0036/GB | $0.03/GB |
| Deep Archive | 180+ days (opt-in) | $0.00099/GB | $0.02/GB |

The Frequent Access tier costs exactly the same as S3 Standard: $0.023/GB. So if an object never transitions, you are paying Standard pricing plus monitoring fees.

Here is what AWS does not emphasize:

- Objects under 128KB are never transitioned but still incur monitoring fees in many configurations

- The monitoring fee is per-object, not per-GB, which punishes buckets with many small files

- Archive Access and Deep Archive require opt-in configuration, so most buckets only use the first three tiers

- There is no "savings" until an object sits untouched for 30+ days
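To make the "Standard pricing plus monitoring fees" point concrete, here is a minimal sketch of the monthly bill for a bucket on Intelligent-Tiering. It uses the us-east-1 prices from the table above and, like the rest of this post's math, assumes every object is billed the monitoring fee. The function and constant names are illustrative, not part of any AWS SDK.

```python
# Per-GB monthly storage prices (us-east-1), copied from the tier table in this post.
PRICE_PER_GB = {
    "frequent": 0.023,
    "infrequent": 0.0125,
    "archive_instant": 0.004,
}
# Assumption: the fee applies to every object, as this post's calculations do.
MONITORING_FEE_PER_OBJECT = 0.0025 / 1000  # USD per object per month


def intelligent_tiering_monthly_cost(gb_by_tier: dict[str, float], object_count: int) -> float:
    """Return storage cost plus monitoring fee in USD per month."""
    storage = sum(PRICE_PER_GB[tier] * gb for tier, gb in gb_by_tier.items())
    return storage + object_count * MONITORING_FEE_PER_OBJECT


# 10,000 GB that never leaves Frequent Access, spread over 2 million objects.
cost = intelligent_tiering_monthly_cost({"frequent": 10_000}, 2_000_000)
print(f"${cost:.2f}")  # $235.00: the $230.00 Standard would cost, plus $5.00 monitoring
```

If nothing ever goes cold, the only difference from Standard is the monitoring line.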

The Breakeven Math: When Monitoring Fees Exceed Savings

Let's model the actual economics. The savings from transitioning one object from Frequent to Infrequent Access tier is:

Savings per object per month = Object Size x ($0.023 - $0.0125) = Object Size x $0.0105/GB

Monitoring cost per object per month = $0.0025 / 1000 = $0.0000025
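The two formulas combine into a single net figure per object. The sketch below assumes decimal units (1 GB = 1,000,000 KB), which is what the per-object table in this post appears to use. The function name is illustrative.

```python
FREQUENT_PRICE = 0.023      # USD per GB-month
INFREQUENT_PRICE = 0.0125   # USD per GB-month
MONITORING_PER_OBJECT = 0.0025 / 1000  # USD per object per month
KB_PER_GB = 1_000_000  # assumption: decimal units


def net_monthly_savings(size_kb: float) -> float:
    """Net USD saved per month for one object sitting in Infrequent Access."""
    tiering_savings = size_kb / KB_PER_GB * (FREQUENT_PRICE - INFREQUENT_PRICE)
    return tiering_savings - MONITORING_PER_OBJECT


for size_kb in (10, 100, 256, 1_000, 10_000):
    print(f"{size_kb:>6} KB: {net_monthly_savings(size_kb):+.7f} USD/month")
```

A negative result means the monitoring fee outweighs the tiering discount for an object of that size.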

Breakeven by Object Size

For a single object that transitions to IA after 30 days:

| Object Size | Monthly Tiering Savings | Monthly Monitoring Cost | Net Savings | Verdict |
|---|---|---|---|---|
| 10KB | $0.000000105 | $0.0000025 | -$0.0000024 | Loses money |
| 100KB | $0.00000105 | $0.0000025 | -$0.0000015 | Loses money |
| 256KB | $0.0000027 | $0.0000025 | +$0.0000002 | Barely breaks even |
| 1MB | $0.0000105 | $0.0000025 | +$0.0000080 | Saves money |
| 10MB | $0.000105 | $0.0000025 | +$0.000103 | Clear win |

The breakeven point is approximately 240KB per object ($0.0000025 / $0.0105 per GB ≈ 0.000238 GB), assuming the object actually transitions (sits untouched for 30+ days).

But here is the catch: if only 50% of your objects actually transition (the other half are accessed within 30 days), the breakeven size doubles to ~500KB. If only 25% transition, you need 1MB+ average objects to come out ahead.
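The scaling with transition rate can be checked directly. This sketch assumes decimal units and treats the transition fraction as the share of objects that reach Infrequent Access; at 100% transition it lands at roughly 238KB, in line with the approximate breakeven quoted above. The function name is hypothetical.

```python
def breakeven_size_kb(transition_fraction: float) -> float:
    """Average object size (decimal KB) at which expected savings equal the monitoring fee."""
    if not 0 < transition_fraction <= 1:
        raise ValueError("transition_fraction must be in (0, 1]")
    saving_per_gb = 0.023 - 0.0125          # Frequent minus Infrequent, USD per GB-month
    monitoring_per_object = 0.0025 / 1000   # USD per object per month
    # Only the transitioning share of objects earns the per-GB saving.
    breakeven_gb = monitoring_per_object / (saving_per_gb * transition_fraction)
    return breakeven_gb * 1_000_000


for fraction in (1.0, 0.5, 0.25):
    print(f"{fraction:.0%} transition: ~{breakeven_size_kb(fraction):.0f} KB")
```

Halving the transition rate doubles the object size needed to break even, which is the effect described above.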

Real-World Scenarios: Who Wins and Who Loses

Scenario 1: Application Logs (Intelligent-Tiering Loses)

A typical logging pipeline writes millions of small JSON log entries:

- 100 million objects, average 50KB each (~5TB total)

- 80% of logs never accessed after day 1

- Seems perfect for Intelligent-Tiering, right?

Cost comparison:

| Strategy | Storage Cost | Monitoring Cost | Total Monthly |
|---|---|---|---|
| Intelligent-Tiering | $50.00 (IA, 4TB) + $23.00 (FA, 1TB) | $250.00 | $323.00 |
| Standard + Lifecycle to Glacier after 30d | $115.00 (first 30d) + $5.00 (Glacier) | $0 | $120.00 |
| Standard + Lifecycle to One Zone-IA after 7d | $26.45 + $47.50 | $0 | $73.95 |

Intelligent-Tiering costs about 4.4x more than the cheapest lifecycle strategy here because the $250/month monitoring fee dwarfs the storage cost of 100M small objects.

Scenario 2: Media Assets (Intelligent-Tiering Wins)

A SaaS platform storing user-uploaded images and videos:

- 2 million objects, average 5MB each (~10TB total)

- Unpredictable access: some files go viral, others are forgotten within a week

- No clear pattern for lifecycle rules

Cost comparison:

| Strategy | Storage Cost | Monitoring Cost | Total Monthly |
|---|---|---|---|
| Intelligent-Tiering | $148.00 (mixed tiers) | $5.00 | $153.00 |
| Standard (no optimization) | $230.00 | $0 | $230.00 |
| Lifecycle to IA at 30d (risks retrieval fees) | $125.00 + retrieval overhead | $0 | ~$145-180 |

Here, Intelligent-Tiering saves 33% over Standard with zero operational risk because objects are large and access patterns are genuinely unpredictable.

Scenario 3: Data Lake Partitions (It Depends)

A data lake with Parquet files partitioned by date:

- 20 million objects, average 128MB each (~2.5PB total)

- Objects older than 90 days are queried ~2x per quarter

- Access pattern is highly predictable

Cost comparison:

| Strategy | Storage Cost | Monitoring Cost | Total Monthly |
|---|---|---|---|
| Intelligent-Tiering with Archive Instant | $12,800 | $50.00 | $12,850 |
| Lifecycle to IA at 30d + Archive Instant at 90d | $11,200 | $0 | $11,200 |

Lifecycle rules come out $1,650/month cheaper (about 13%) because the access pattern is predictable. When you know what happens to your data, manual rules win: you skip the monitoring fee and pick the exact transition timing.

The Decision Framework: A 4-Question Test

Before enabling Intelligent-Tiering on any bucket, answer these four questions:

Question 1: What is your average object size?

- Under 256KB: Do not use Intelligent-Tiering. The monitoring fee will exceed any savings. Use lifecycle rules or leave on Standard.

- 256KB to 1MB: Marginal. Only use if access patterns are truly random and you cannot predict which objects go cold.

- Over 1MB: Intelligent-Tiering is viable. Proceed to Question 2.

Question 2: What percentage of objects are accessed within 30 days?

- Over 80%: Do not use Intelligent-Tiering. Most objects stay in Frequent tier, so you pay monitoring for nothing. Leave on Standard.

- 50-80%: Consider Intelligent-Tiering only if objects are 1MB+. Otherwise, manual rules targeting the cold 20-50% are cheaper.

- Under 50%: Intelligent-Tiering can work well. Proceed to Question 3.

Question 3: Is the access pattern predictable?

- Yes (e.g., time-based decay): Use lifecycle rules. You will save 40-68% more than Intelligent-Tiering because you skip monitoring fees and can target exact transition timing.

- No (e.g., user-driven, viral content): Intelligent-Tiering is your best option. The automation handles what you cannot predict.

Question 4: How many objects are in the bucket?

- Under 1 million: Monitoring fees are under $2.50/month. Use Intelligent-Tiering freely if Q1-Q3 passed.

- 1-10 million: Check the ratio. If (monitoring cost) / (potential savings from tiering) exceeds 30%, use lifecycle rules instead.

- Over 10 million: The monitoring fee becomes a significant line item. You need large objects (5MB+) and low access rates to justify this.
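The four questions can be read as a short decision function. This is one possible encoding of the thresholds above, not a definitive policy: the function name, parameter names, and return strings are made up for illustration, and the handling of the "over 10 million objects" case is our reading of Question 4.

```python
def recommend_storage_strategy(
    avg_object_kb: float,
    pct_accessed_within_30d: float,
    predictable_access: bool,
    object_count: int,
) -> str:
    """Apply Questions 1-4 in order and return a rough recommendation."""
    # Question 1: average object size
    if avg_object_kb < 256:
        return "lifecycle rules or Standard"
    # Question 2: share of objects accessed within 30 days
    if pct_accessed_within_30d > 80:
        return "Standard"
    if pct_accessed_within_30d >= 50 and avg_object_kb < 1_000:
        return "lifecycle rules"
    # Question 3: predictability of the access pattern
    if predictable_access:
        return "lifecycle rules"
    # Question 4: object count (interpretation: very large buckets need 5MB+ objects)
    if object_count > 10_000_000 and avg_object_kb < 5_000:
        return "Intelligent-Tiering not justified without 5MB+ objects"
    if object_count >= 1_000_000:
        return "Intelligent-Tiering if monitoring fee is under 30% of tiering savings"
    return "Intelligent-Tiering"


# The log bucket from Scenario 1: small objects fail at Question 1.
print(recommend_storage_strategy(50, 20, True, 100_000_000))
# A media bucket like Scenario 2, assuming 40% of objects are read within 30 days.
print(recommend_storage_strategy(5_000, 40, False, 2_000_000))
```

The 40% access figure in the second call is an assumption for the example; the scenario itself does not state one.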

Lifecycle Rules: The Boring Strategy That Saves More

For predictable workloads, lifecycle rules outperform Intelligent-Tiering every time. Here is why:

| Factor | Intelligent-Tiering | Lifecycle Rules |
|---|---|---|
| Monitoring fee | $0.0025/1000 objects/mo | $0 |
| Transition precision | After 30/90/180 days | Any day count you choose |
| Manual configuration | None | Requires access analysis |
| Retrieval from IA tier | Free | $0.01/GB (S3 Standard-IA) |
| Works for unpredictable access | Yes | No |
| Per-object overhead | Yes | No |
| Minimum duration charge | None | 30 days (Standard-IA, One Zone-IA), 90 days (Glacier Instant/Flexible Retrieval), 180 days (Glacier Deep Archive) |

Recommended Lifecycle Configurations

For application logs:

Transition to One Zone-IA: 7 days

Transition to Glacier Instant Retrieval: 30 days

Transition to Glacier Deep Archive: 90 days

Delete: 365 days

Saves 70-80% vs. Standard. Zero monitoring fees.

For data lake partitions:

Transition to Standard-IA: 30 days

Transition to Glacier Instant Retrieval: 90 days

Saves 55-65% vs. Standard. Access older partitions on demand with millisecond latency.
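As a sketch, the data lake configuration above could be expressed as the dictionary that the S3 lifecycle API accepts. The rule ID, prefix, and bucket name are hypothetical placeholders. The boto3 call is left commented out because it needs credentials and a real bucket.

```python
import json

# Hypothetical rule ID and prefix; adjust both to your bucket layout.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "data-lake-partitions-tiering",
            "Status": "Enabled",
            "Filter": {"Prefix": "partitions/"},
            "Transitions": [
                {"Days": 30, "StorageClass": "STANDARD_IA"},
                {"Days": 90, "StorageClass": "GLACIER_IR"},
            ],
        }
    ]
}

print(json.dumps(lifecycle_configuration, indent=2))

# Applying it with boto3 (not executed here; requires AWS credentials):
# import boto3
# boto3.client("s3").put_bucket_lifecycle_configuration(
#     Bucket="example-bucket",  # hypothetical bucket name
#     LifecycleConfiguration=lifecycle_configuration,
# )
```

Printing the JSON first makes it easy to review the rule, or to pass it to the AWS CLI, before applying it.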

For backup/compliance archives:

Transition to Glacier Deep Archive: 1 day

Saves 95%+ vs. Standard. $0.00099/GB for data you hope you never need.

The One Zone-IA Opportunity Most Teams Miss

S3 One Zone-Infrequent Access costs $0.01/GB, which is 57% cheaper than Standard and 20% cheaper than Standard-IA. The tradeoff is data exists in one AZ only.

For reproducible data (build artifacts, processed outputs, cached transformations, thumbnails), losing one AZ does not matter. You can regenerate the data. Yet we consistently find teams paying Standard or Intelligent-Tiering prices for data that could safely live in One Zone-IA.

A quick win: audit your buckets for objects with these prefixes:

cache/,tmp/,processed/,thumbnails/,builds/,artifacts/

Move them to One Zone-IA via lifecycle rule. No monitoring fee. Instant 57% savings on those objects.
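To size the opportunity before writing a rule, a rough estimate can be computed from any object listing. The sketch below assumes you already have (key, size) pairs, for example from an S3 Inventory report, and compares Standard against One Zone-IA at the prices quoted above. It ignores One Zone-IA's 128KB minimum billable object size and 30-day minimum storage duration, so treat the result as an upper bound for buckets of tiny or short-lived objects. All names are illustrative.

```python
REPRODUCIBLE_PREFIXES = ("cache/", "tmp/", "processed/", "thumbnails/", "builds/", "artifacts/")
STANDARD_PRICE = 0.023     # USD per GB-month
ONE_ZONE_IA_PRICE = 0.01   # USD per GB-month


def one_zone_ia_savings(objects: list[tuple[str, int]]) -> float:
    """Estimate monthly USD saved by moving reproducible objects to One Zone-IA.

    `objects` is a list of (key, size_in_bytes) pairs.
    """
    candidate_bytes = sum(
        size for key, size in objects if key.startswith(REPRODUCIBLE_PREFIXES)
    )
    return candidate_bytes / 1e9 * (STANDARD_PRICE - ONE_ZONE_IA_PRICE)


# Hypothetical listing: 500 GB of cache data and 1 TB of primary data.
sample = [("cache/render-001.bin", 500_000_000_000), ("data/orders.parquet", 1_000_000_000_000)]
print(f"${one_zone_ia_savings(sample):.2f}/month")
```

Only keys under the listed prefixes count toward the estimate; everything else is assumed to stay where it is.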

Audit Your Buckets: A 15-Minute Process

Run this against your S3 buckets to find monitoring fee waste:

aws s3api list-buckets --query 'Buckets[].Name' --output text | tr '\t' '\n' | while read -r bucket; do

count=$(aws s3api list-objects-v2 --bucket "$bucket" --query "length(Contents[?StorageClass=='INTELLIGENT_TIERING'])" --output json 2>/dev/null)

count=${count:-0}

if [ "$count" -gt 0 ]; then

echo "$bucket: $count Intelligent-Tiering objects, monitoring: \$$(echo "scale=2; $count * 0.0000025" | bc)/mo"

fi

done

For each bucket where monitoring cost exceeds $10/month, run S3 Storage Lens to check:

- Average object size (if under 256KB, disable Intelligent-Tiering)

- Percentage of objects in Frequent tier after 60 days (if over 70%, you are overpaying)

- Whether a simple time-based lifecycle rule would achieve the same result

When Intelligent-Tiering Is the Right Call

To be clear, Intelligent-Tiering is not always wrong. It is the right choice when:

- Objects are 1MB or larger on average

- Access patterns are genuinely unpredictable (user-driven, not time-based)

- You have fewer than 5 million objects per bucket

- Your team does not have bandwidth to analyze access patterns and write lifecycle policies

- The workload involves mixed access where some objects stay hot for months while others go cold in days

The problem is not the feature itself. The problem is applying it indiscriminately to every bucket, which is exactly what most "enable Intelligent-Tiering everywhere" recommendations suggest.

The Bottom Line

S3 Intelligent-Tiering is a tool, not a strategy. Applying it blindly costs more than it saves for the majority of buckets we audit. The per-object monitoring fee is a tax on complexity that manual lifecycle rules do not charge.

The rule is simple: if you can predict when objects go cold, lifecycle rules save 40-68% more. If you cannot predict access patterns and objects are large, Intelligent-Tiering earns its monitoring fee.

If your S3 bill is growing and you are not sure whether Intelligent-Tiering is helping or hurting, our cloud cost optimization team runs a free storage assessment. We typically find 30-60% savings in the first bucket audit alone. Run a free Cloud Waste Scorecard to see where your biggest storage cost leaks are.
