The Distributed Vector Database Built for Billions of Vectors (Priced Like It Too)

Milvus is the vector database you graduate to. Most teams start with Pinecone (simple, managed, fast to set up) or Qdrant (cheap, fast, Rust-native). Then their dataset hits 50 million vectors, they need multi-node distribution, and they discover that neither Pinecone nor Qdrant was designed for true horizontal scaling the way Milvus was.

Milvus was built from day one as a distributed system. It separates storage (MinIO/S3), metadata (etcd), message queuing (Pulsar/Kafka), and query/index nodes. Each layer scales independently. That architecture is overkill for 1 million vectors. It is exactly right for 1 billion.

The managed version is Zilliz Cloud, built by the same team that maintains the open-source Milvus project. Zilliz Cloud handles all the distributed complexity (etcd clusters, MinIO backends, node orchestration) and charges you in Compute Units.

We have deployed Milvus at LeanOps for clients running large-scale recommendation systems, image similarity search, and multi-modal RAG pipelines. The pattern is consistent: teams below 20M vectors are usually better served by Pinecone or Qdrant. Teams above 50M vectors where horizontal scaling and high availability matter? Milvus wins on architecture and total cost of ownership.

This post gives you the complete Zilliz Cloud pricing in 2026, models real costs at production scale, compares against every alternative, and provides a framework for deciding when Milvus justifies its added complexity.

Zilliz Cloud Pricing in 2026: Complete Breakdown

Zilliz Cloud offers three deployment models: Serverless, Dedicated, and Enterprise. Each uses Compute Units (CUs) as the core billing metric.

Deployment Options

| Plan | Target | Architecture | Starting Cost |

|---|---|---|---|

| Free Tier | Prototyping | Serverless, shared | $0 (100 CU-hours + 5GB) |

| Serverless | Variable workloads | Auto-scaling, multi-tenant | $0.096/CU-hour + storage |

| Dedicated | Production | Single-tenant, fixed capacity | From 1 CU ($70/month) |

| Enterprise | Large-scale | Custom, SLA, VPC | Custom pricing |

Compute Pricing (CU-Hours)

| Component | Serverless Rate | Dedicated Rate | Notes |

|---|---|---|---|

| Query CU | $0.096/CU-hour | $0.096/CU-hour (reserved) | Scales with query volume and complexity |

| Index CU | $0.096/CU-hour | Included in cluster | Consumed during index building |

| Ingestion CU | $0.096/CU-hour | Included in cluster | Consumed during data loading |

How Compute Units work: A CU represents a unit of compute capacity (roughly equivalent to 1 vCPU + 4GB RAM). On Serverless, CUs scale automatically based on demand. On Dedicated, you provision a fixed number of CUs that run continuously.

The math:

- 1 CU running 24/7 for a month = 720 CU-hours = $69.12/month

- 2 CUs for a month = $138.24/month

- 4 CUs for a month = $276.48/month

On Serverless, you only pay for CU-hours consumed during active queries and indexing. Idle time costs nothing for compute (you still pay storage).
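The arithmetic above can be sketched as a small helper. This is a minimal illustration, not an official Zilliz calculator: the function names are made up for this example, and the $0.096 rate and 720-hour month are simply the figures used in this post.

```python
CU_HOUR_RATE = 0.096   # USD per CU-hour, from the compute pricing table above
HOURS_PER_MONTH = 720  # 30 days x 24 hours, the convention used in this post


def dedicated_monthly_cost(cus: int) -> float:
    """Cost of a Dedicated cluster whose CUs run continuously for a month."""
    return round(cus * HOURS_PER_MONTH * CU_HOUR_RATE, 2)


def serverless_monthly_cost(cu_hours_per_day: float, days: int = 30) -> float:
    """Cost of Serverless compute, billed only for CU-hours actually consumed."""
    return round(cu_hours_per_day * days * CU_HOUR_RATE, 2)


for cus in (1, 2, 4):
    print(f"{cus} CU dedicated: ${dedicated_monthly_cost(cus):.2f}/month")
print(f"4 CU-hours/day serverless: ${serverless_monthly_cost(4):.2f}/month")
```

Storage is billed separately in both models, so add it on top of whichever figure applies.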

Storage Pricing

| Storage Type | Rate | Notes |

|---|---|---|

| Standard (SSD) | $0.02/GB/month | Vector data + metadata + indexes |

| Warm storage | $0.008/GB/month | Infrequently accessed collections |

| Backup storage | $0.015/GB/month | Automated snapshots |

Storage math for vectors (1536 dimensions, float32):

| Vector Count | Raw Data Size | With Index Overhead | Monthly Storage Cost |

|---|---|---|---|

| 1M | 6.1 GB | ~12 GB | $0.24 |

| 10M | 61 GB | ~120 GB | $2.40 |

| 50M | 305 GB | ~600 GB | $12.00 |

| 100M | 610 GB | ~1.2 TB | $24.00 |

Index overhead roughly doubles the raw vector size because Milvus stores both the raw vectors and the index structures (IVF, HNSW, or DiskANN depending on configuration).
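To reproduce the storage table for a different vector count or dimensionality, a sketch like the following works. It assumes float32 vectors, decimal gigabytes, and the flat 2x index overhead described above; the helper names are illustrative. Because the table rounds sizes (for example 12.3 GB to ~12 GB), results can differ from it by a cent or so.

```python
BYTES_PER_FLOAT32 = 4
STANDARD_RATE_PER_GB = 0.02  # USD per GB-month, from the storage pricing table
INDEX_OVERHEAD = 2.0         # "roughly doubles", per the paragraph above


def raw_size_gb(vector_count: int, dims: int = 1536) -> float:
    """Raw float32 vector data in decimal gigabytes."""
    return vector_count * dims * BYTES_PER_FLOAT32 / 1e9


def monthly_storage_cost(vector_count: int, dims: int = 1536) -> float:
    """Standard-tier storage cost including the assumed index overhead."""
    return raw_size_gb(vector_count, dims) * INDEX_OVERHEAD * STANDARD_RATE_PER_GB


for count in (1_000_000, 10_000_000, 50_000_000, 100_000_000):
    print(f"{count:>11,} vectors: {raw_size_gb(count):7.1f} GB raw, "
          f"${monthly_storage_cost(count):.2f}/month")
```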

Free Tier Details

| Feature | Limit |

|---|---|

| Compute | 100 CU-hours/month |

| Storage | 5 GB |

| Vector capacity | ~820K vectors (1536-dim) |

| Collections | Unlimited |

| API rate limit | Moderate |

| Duration | Permanent (no expiration) |

| Regions | 1 (US, EU, or Asia) |

The free tier is generous compared to competitors. Pinecone gives 2GB of storage. Qdrant gives 1GB of RAM. Zilliz gives 5GB of storage plus 100 CU-hours of compute. For prototyping a RAG application with under 500K vectors, the free tier lasts months without hitting limits.

Additional Costs

| Feature | Cost |

|---|---|

| Cross-region replication | 2x compute + storage in each region |

| GPU-accelerated search | $0.35/GPU-CU-hour (T4) to $1.20/GPU-CU-hour (A100) |

| VPC peering | Enterprise plan only |

| Priority support | Enterprise plan (custom) |

| RBAC/Security features | Included in all plans |

| Monitoring (Grafana dashboards) | Included |

Real-World Cost Modeling: What Zilliz Cloud Actually Costs

Scenario 1: Small RAG Application (1M Vectors, Low Traffic)

A knowledge base, FAQ search, or small product catalog with 1M vectors at 1536 dimensions, queried a few hundred times per day.

| Component | Calculation | Monthly Cost |

|---|---|---|

| Storage | 12 GB x $0.02 | $0.24 |

| Compute (Serverless) | ~3-5 CU-hours/day x 30 x $0.096 | $9-14 |

| Backups | 12 GB x $0.015 | $0.18 |

| Total (Serverless, minimal traffic) | | ~$10-15/month |

| Total (Serverless, moderate traffic) | | ~$80-150/month |

The range is wide because compute dominates and scales directly with query volume and complexity. A few hundred simple searches per day stays in the $10-15 range. Add filtering, hybrid search, or 10,000+ daily queries and costs jump to $80-150.

Comparison at this scale:

- Pinecone Serverless: $35-60/month

- Qdrant Cloud: $25-45/month (or free tier for very low traffic)

- Weaviate Cloud: $45-80/month

- Self-hosted Milvus: $40-80/month (but massive overengineering for 1M vectors)

Verdict: Milvus is overbuilt for 1M vectors. Use Pinecone or Qdrant instead.

Scenario 2: Production Workload (10M Vectors, Moderate Traffic)

A recommendation engine, semantic search platform, or enterprise RAG with 10M vectors and 10,000-50,000 queries per day.

| Component | Calculation | Monthly Cost |

|---|---|---|

| Storage | 120 GB x $0.02 | $2.40 |

| Compute (Dedicated, 2 CU) | 2 x 720 hours x $0.096 | $138 |

| Backups | 120 GB x $0.015 | $1.80 |

| Total (Dedicated) | | ~$140-300/month |

| Total (Serverless, moderate) | | ~$250-500/month |

Why is Serverless more expensive here? Because sustained moderate traffic on Serverless accumulates more CU-hours than a fixed Dedicated cluster. If your traffic is predictable and steady, Dedicated saves money. If your traffic is bursty with idle periods, Serverless wins.

Comparison at this scale:

- Pinecone Serverless: $170-370/month

- Qdrant Cloud: $120-180/month

- Weaviate Cloud: $200-400/month

- Self-hosted Milvus: $100-200/month (3-node cluster on EKS)

Verdict: At 10M vectors, Qdrant Cloud is cheapest for pure vector search. Zilliz Dedicated is competitive if you need Milvus-specific features (GPU search, DiskANN, streaming inserts at scale).

Scenario 3: Large-Scale Production (100M+ Vectors, High Traffic)

An enterprise search platform, large-scale recommendation system, or multi-tenant SaaS serving 100M+ vectors with 500,000+ queries per day.

| Component | Calculation | Monthly Cost |

|---|---|---|

| Storage | 1.2 TB x $0.02 | $24 |

| Compute (Dedicated, 8 CU) | 8 x 720 hours x $0.096 | $553 |

| Backups | 1.2 TB x $0.015 | $18 |

| Total (Dedicated) | | ~$600-1,200/month |

| Total (Enterprise, high availability) | | ~$1,500-3,500/month |

At this scale, Milvus architecture begins to shine. Its segment-based storage distributes data across nodes and allows independent scaling of query and indexing capacity.

Comparison at this scale:

- Pinecone Pods (p2.x4 x 3): $5,760/month

- Pinecone Serverless: $800-1,500/month

- Qdrant Cloud (XLarge x 2): $1,800-2,500/month

- Self-hosted Milvus on EKS: $300-600/month

Verdict: Self-hosted Milvus wins decisively at 100M+ vectors. If you must use managed, Zilliz Dedicated ($600-1,200/month) is roughly 5-10x cheaper than Pinecone Pods ($5,760/month).

Self-Hosted Milvus: Production Architecture at $300-600/Month

The $300-600/month number above is not hypothetical. Here is the exact 3-node EKS cluster architecture we deploy for clients running 100M+ vectors in production.

Infrastructure Breakdown (100M Vectors, 1536 Dimensions)

| Component | Instance/Service | Pricing | Monthly Cost |

|---|---|---|---|

| Query node | 1x r6g.xlarge (32GB RAM) | $0.20/hr Spot | $144 |

| Index node | 1x r6g.large (16GB RAM) | $0.10/hr Spot | $72 |

| Coordinator + etcd + proxy | 1x m6g.large (8GB RAM) | $0.077/hr OD | $56 |

| EKS control plane | Managed | Flat fee | $73 |

| S3 storage (segments + indexes) | ~1.2TB | $0.023/GB | $25 |

| Total infrastructure | | | $370 |

The query node runs on r6g.xlarge because it needs RAM for loaded segments. With DiskANN or IVF_SQ8, 32GB handles 100M vectors comfortably. The index node only bursts during index builds, so Spot pricing fits it well. The coordinator node runs On-Demand because etcd availability is critical.

Why This Beats Zilliz Dedicated

| Metric | Self-Hosted ($370/mo) | Zilliz Dedicated ($600-1,200/mo) |

|---|---|---|

| Cost savings | Baseline | 1.6-3.2x more expensive |

| Query latency (p99) | 8-15ms | 5-10ms |

| Scaling flexibility | Full (add nodes) | CU increments only |

| GPU support | Yes (add GPU node) | Yes (at $0.35-1.20/GPU-CU-hr) |

| Version control | Pin any release | Zilliz-managed upgrades |

The 1.6-3.2x savings are real, but they come with operational cost.

The Operational Reality

Budget 8-16 hours/month of SRE time for:

- etcd maintenance (4-6 hrs/month): Compaction, defragmentation, backup verification. etcd is the single point of failure in Milvus. If it corrupts, you lose collection metadata.

- Compaction monitoring (2-4 hrs/month): Milvus segments grow and need periodic compaction. Uncompacted segments degrade query performance and waste storage.

- Version upgrades (2-4 hrs/month averaged): Milvus releases monthly. Not every release requires immediate upgrade, but falling more than 2 versions behind makes upgrades painful.

- Capacity planning (1-2 hrs/month): Monitor segment loading times, query latency percentiles, and memory utilization trends to plan scaling.

At $150/hour SRE cost, 12 hours/month = $1,800/month in labor, which brings the true cost of the $370 cluster to about $2,170/month. Self-hosting is therefore economically viable only when the infrastructure savings exceed the labor cost. The break-even: if Zilliz Dedicated would cost $2,200+/month, self-hosting saves money even with SRE labor factored in. At 100M vectors, Zilliz Dedicated runs $600-1,200/month, so self-hosting wins only if your SRE time is already allocated (an existing platform team) or if you value the control regardless of labor cost.
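The break-even reasoning can be written down as a short calculation. This is a sketch using this post's figures as defaults ($370 infrastructure, 12 SRE hours, $150/hour); the function names are illustrative, and you should substitute your own numbers.

```python
def self_hosted_monthly_cost(infra_usd: float, sre_hours: float, sre_rate_usd: float) -> float:
    """Infrastructure plus the labor needed to operate it."""
    return infra_usd + sre_hours * sre_rate_usd


def self_hosting_saves_money(
    managed_bill_usd: float,
    infra_usd: float = 370,
    sre_hours: float = 12,
    sre_rate_usd: float = 150,
) -> bool:
    """True when the managed bill exceeds the fully loaded self-hosted cost."""
    return managed_bill_usd > self_hosted_monthly_cost(infra_usd, sre_hours, sre_rate_usd)


print(self_hosted_monthly_cost(370, 12, 150))        # fully loaded self-hosted cost
print(self_hosting_saves_money(1_200))               # top of the Zilliz Dedicated range
print(self_hosting_saves_money(1_200, sre_hours=0))  # SRE time already paid for elsewhere
```

Setting `sre_hours=0` models the case where an existing platform team absorbs the work, which is the situation where the infrastructure savings alone decide the question.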

Spot Instance Strategy

The query and index nodes use Spot pricing (60-70% discount). To handle Spot interruptions:

- Run the query node in a 2-instance Auto Scaling Group across 3 AZs

- Enable Milvus replica groups so a second query node can serve traffic during Spot reclamation

- Keep the coordinator on On-Demand (etcd cannot tolerate interruptions)

This adds ~$144/month (a second r6g.xlarge query node on Spot, at the rate in the table above) but removes the single-query-node availability risk. Total with HA: $514/month.

DiskANN: The Game-Changer for 100M+ Vectors

At 100M vectors and above, the choice of index algorithm determines whether your infrastructure bill is $400/month or $4,000/month. DiskANN is what makes large-scale vector search economically viable.

How DiskANN Works

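Traditional HNSW stores the entire graph structure and all vectors in RAM. The figures in this section work out to roughly 640 bytes per stored vector, which is consistent with reduced-dimension or compressed embeddings rather than raw 1536-dimension float32. At that size, 100M vectors require approximately 64GB of RAM just for vectors, plus another 20-30GB for the HNSW graph. Total: 80-90GB RAM minimum. Uncompressed 1536-dimension float32 vectors are about ten times larger (the 610 GB raw size in the storage table earlier), so scale the RAM figures up accordingly if you keep full-precision embeddings in memory.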

DiskANN takes a different approach:

- Vectors are stored on NVMe SSD (not RAM)

- A compressed navigation graph (PQ-compressed) stays in RAM for traversal

- During search, the algorithm navigates the in-memory graph to identify candidate vectors, then fetches full vectors from SSD for final distance computation

The RAM footprint drops from 80-90GB to 12-16GB because only the compressed graph (product quantization) lives in memory. The full vectors sit on fast NVMe storage.
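The two-stage idea (cheap compressed comparison in memory, exact comparison only for a shortlist fetched from disk) can be illustrated with a toy sketch. This is not DiskANN itself and not Milvus code: it swaps in 8-bit scalar quantization where DiskANN uses product quantization, does a brute-force scan of the codes where DiskANN walks a graph, and uses a plain Python list to stand in for NVMe storage. All names are hypothetical.

```python
import math
import random


def quantize(vec, lo=-1.0, hi=1.0):
    """Compress floats in [lo, hi] to one byte each (a stand-in for PQ codes)."""
    scale = 255 / (hi - lo)
    return bytes(min(255, max(0, round((x - lo) * scale))) for x in vec)


def approx_dist(code_a, code_b):
    """Cheap squared distance computed on the compressed codes only."""
    return sum((a - b) ** 2 for a, b in zip(code_a, code_b))


class TwoStageIndex:
    def __init__(self, vectors):
        self.codes = [quantize(v) for v in vectors]  # small, kept "in RAM"
        self.disk = list(vectors)                    # stands in for NVMe storage
        self.disk_reads = 0

    def search(self, query, k=3, candidates=10):
        qcode = quantize(query)
        # Stage 1: shortlist by compressed distance (real DiskANN traverses a graph here).
        shortlist = sorted(
            range(len(self.codes)),
            key=lambda i: approx_dist(qcode, self.codes[i]),
        )[:candidates]
        # Stage 2: fetch full vectors only for the shortlist and re-rank exactly.
        scored = []
        for i in shortlist:
            self.disk_reads += 1
            scored.append((math.dist(query, self.disk[i]), i))
        scored.sort()
        return [i for _, i in scored[:k]]


random.seed(0)
vectors = [[random.uniform(-1, 1) for _ in range(16)] for _ in range(200)]
index = TwoStageIndex(vectors)
print(index.search(vectors[7]), "full-vector reads:", index.disk_reads)
```

The point of the sketch is the read count: only the shortlist touches the "disk", which is why the in-memory footprint can stay small while the final ranking still uses full-precision vectors.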

Cost Impact at Scale

| Scale | HNSW (all RAM) | DiskANN (RAM + NVMe) | Savings |

|---|---|---|---|

| 100M vectors | 64GB RAM = $460/month | 16GB RAM + 500GB NVMe = $180 | 61% |

| 250M vectors | 160GB RAM = $1,150/month | 32GB RAM + 1.2TB NVMe = $320 | 72% |

| 500M vectors | 320GB RAM = $2,300/month | 64GB RAM + 2.5TB NVMe = $580 | 75% |

| 1B vectors | 640GB RAM = $4,600/month | 128GB RAM + 5TB NVMe = $1,100 | 76% |

At 500M vectors, DiskANN is the only economically viable option under $1,000/month. HNSW at that scale requires a $2,300/month RAM-optimized cluster or multiple r6g.4xlarge instances.
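The savings column is simply one minus the cost ratio. A short check, using only the monthly figures from the table above (the dictionary layout is just for illustration):

```python
# (HNSW monthly cost, DiskANN monthly cost) in USD, copied from the table above
MONTHLY_COSTS = {
    "100M": (460, 180),
    "250M": (1150, 320),
    "500M": (2300, 580),
    "1B": (4600, 1100),
}


def savings_pct(hnsw_usd: float, diskann_usd: float) -> int:
    """Percentage saved by moving from HNSW to DiskANN, rounded to a whole percent."""
    return round((1 - diskann_usd / hnsw_usd) * 100)


for scale, (hnsw, diskann) in MONTHLY_COSTS.items():
    print(f"{scale}: {savings_pct(hnsw, diskann)}% cheaper with DiskANN")
```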

Latency Tradeoff

DiskANN is not a free lunch. The SSD reads add latency:

| Metric | HNSW (in-memory) | DiskANN (NVMe SSD) | Acceptable For |

|---|---|---|---|

| p50 latency | 1-3ms | 3-8ms | All use cases |

| p99 latency | 2-5ms | 5-15ms | Search, recommendations, RAG |

| p999 latency | 5-10ms | 15-40ms | Anything except real-time bidding |

For most search use cases (semantic search, RAG, product recommendations, content discovery), 5-15ms p99 is indistinguishable from 2-5ms in user experience. The 3-10ms difference is invisible when the LLM response takes 500-2000ms anyway.

When DiskANN Does Not Work

- Real-time bidding / ad serving: Needs sub-2ms p99. Stay with HNSW in RAM.

- Extremely high QPS (100K+ queries/second): SSD IOPS become the bottleneck. At 100K QPS, you need multiple NVMe drives in RAID-0 or fall back to in-memory.

- Frequent updates: DiskANN index rebuilds are slower than HNSW. If your vectors change hourly, the rebuild overhead makes DiskANN impractical.

DiskANN on Self-Hosted Milvus: The Under-$350/Month 100M-Vector Setup

| Component | Spec | Monthly Cost |

|---|---|---|

| Query node (r6g.xlarge) | 32GB RAM (16GB for PQ) | $144 (Spot) |

| NVMe storage (i3.large) | 475GB NVMe local | $108 (Spot) |

| OR: EBS io2 (500GB, 10K IOPS) | Provisioned IOPS | $65 |

| Coordinator + etcd | m6g.large | $56 |

| S3 (backup/cold segments) | 1.2TB | $25 |

| Total (EBS path) | | $290 |

| Total (i3 NVMe path) | | $333 |

The EBS io2 path is cheaper but gives lower IOPS (10K provisioned vs 100K+ on NVMe). For most workloads under 50K QPS, EBS io2 is sufficient. For higher throughput, the i3 instance with local NVMe wins on raw performance.

Either way: 100M vectors, sub-15ms p99, under $350/month. Compare to Zilliz Dedicated at $600-1,200/month or Pinecone at $800-1,500/month for the same scale.

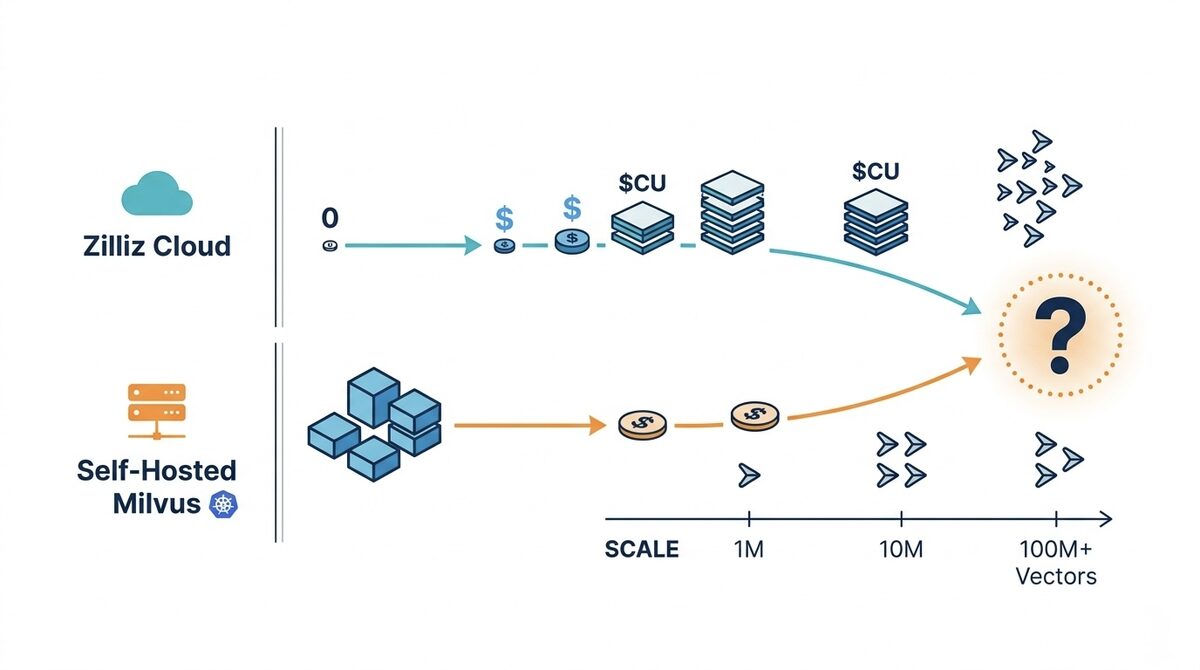

Zilliz Cloud vs Self-Hosted Milvus: The Architecture and Cost Trade-Off

Milvus is fully open source (Apache 2.0 license). Running it yourself is a legitimate production option. But Milvus self-hosting is not like deploying a single binary. It is a distributed system with multiple components.

Milvus Architecture (What You Are Managing)

| Component | Purpose | Infrastructure Needed |

|---|---|---|

| Query Nodes | Execute searches | 1-N pods (scale with QPS) |

| Data Nodes | Handle inserts/deletes | 1-N pods (scale with write throughput) |

| Index Nodes | Build and maintain indexes | 1-N pods (CPU/GPU intensive, burst) |

| Proxy | API gateway and routing | 1-2 pods |

| Root Coord | Metadata coordination | 1 pod |

| etcd | Metadata storage | 3-pod cluster (HA) |

| MinIO/S3 | Object storage for segments | S3 bucket or MinIO cluster |

| Pulsar/Kafka | Message queue for WAL | 3-node cluster (or managed Kafka) |

That is at minimum 10-15 pods for a production deployment, which is a serious operational footprint.

Self-Hosted Cost Model (AWS EKS)

| Scale | Nodes | Monthly Infra Cost | Compared to Zilliz |

|---|---|---|---|

| 10M vectors | 3 x m5.xlarge (4 vCPU, 16GB) | $150-200/month | Zilliz: $140-300 |

| 50M vectors | 3 x m5.2xlarge (8 vCPU, 32GB) | $300-450/month | Zilliz: $400-800 |

| 100M vectors | 5 x m5.2xlarge + 2 x m5.4xlarge | $500-800/month | Zilliz: $600-1,200 |

| 500M vectors | 8-12 nodes mixed sizes | $1,500-3,000/month | Zilliz: $3,000-6,000 |

The hidden costs of self-hosting Milvus:

- etcd is fragile. If your etcd cluster goes down, Milvus cannot serve queries. etcd requires careful monitoring, backup, and occasional compaction.

- Index building is expensive. Building an IVF or HNSW index on 100M vectors requires significant CPU (or GPU) for hours. On Zilliz, this happens transparently. Self-hosted, you provision index nodes and manage the scheduling.

- Version upgrades are complex. Milvus has a rapid release cycle. Upgrading a distributed system with etcd schema changes, storage format changes, and API changes requires planning and testing.

- MinIO/S3 costs add up. At 100M vectors, your segment storage in S3 can reach 1-2TB. S3 costs are minimal ($23/TB/month), but the GET/PUT operations during compaction and segment loading add $10-50/month.

Break-Even Analysis

| Factor | Zilliz Cloud | Self-Hosted Milvus |

|---|---|---|

| < 10M vectors | Usually cheaper (less overhead) | Overengineered, not worth it |

| 10-50M vectors | Comparable (convenience premium) | 30-50% cheaper in infra |

| 50M+ vectors | Expensive at scale | 50-70% cheaper in infra |

| Engineering time | Zero | 8-20 hours/month minimum |

| Time to production | Hours | Days to weeks |

| Disaster recovery | Built-in | You build and test it |

The simple rule: if your monthly Zilliz bill exceeds $500 and your team has Kubernetes expertise, evaluate self-hosting. Below $500/month, the managed convenience is almost always worth it.

Milvus/Zilliz vs Competitors: When Each Wins

Cost and Feature Matrix at 10M Vectors

| Factor | Zilliz Cloud | Pinecone Serverless | Qdrant Cloud | Weaviate Cloud |

|---|---|---|---|---|

| Monthly cost (10M vectors) | $140-300 (Dedicated), $250-500 (Serverless) | $170-370 | $120-180 | $200-400 |

| Architecture | Distributed (multi-node) | Serverless (proprietary) | Single-node or replicated | Single-node or replicated |

| Max vector count | Billions | Hundreds of millions | Hundreds of millions | Tens of millions |

| GPU-accelerated search | Yes (NVIDIA) | No | No | No |

| DiskANN (on-disk vectors) | Yes | No | Limited | No |

| Streaming inserts | Yes (Kafka/Pulsar WAL) | Yes (limited throughput) | Yes | Yes |

| Hybrid search (sparse+dense) | Yes | Limited | No | Yes (BM25) |

| Multi-vector (ColBERT) | Yes | No | No | No |

| Scale-to-zero | Serverless only | Yes | No | Near-zero |

| Open source | Apache 2.0 | No | Apache 2.0 | BSD-3 |

| Self-host path | Full feature parity | N/A | Full parity | Full parity |

Choose Milvus/Zilliz When:

- Your dataset will exceed 100M vectors. Milvus distributed architecture scales horizontally better than any competitor. Adding query nodes increases QPS linearly.

- You need GPU-accelerated search. For sub-millisecond latency at scale, Milvus supports NVIDIA GPUs for index building and query execution. No competitor offers this.

- You want DiskANN. Storing vectors on disk instead of RAM cuts the RAM requirement by roughly 5-7x (80-90GB down to 12-16GB at 100M vectors in the example above). Milvus DiskANN maintains >95% recall with disk-based indexes.

- Streaming inserts matter. If your data changes continuously (real-time recommendations, live content indexing), Milvus WAL-based architecture handles concurrent reads and writes better than eventually-consistent alternatives.

- You plan to self-host at scale. Milvus on Kubernetes gives you complete control, full feature parity with managed, and 50-70% cost savings at scale.

Do Not Choose Milvus When:

- Your dataset is under 10M vectors. The distributed architecture is overkill. You will pay more and take on more complexity.

- Your team lacks Kubernetes expertise. Self-hosted Milvus is complex. Zilliz Cloud removes this, but then you pay a premium over simpler alternatives.

- You want the simplest API. Pinecone's API is simpler. Qdrant's API is simpler. Milvus has more concepts (collections, partitions, segments, fields, indexes) that take time to learn.

- Cost at small scale matters most. At 10M vectors and below, Qdrant Cloud ($120-180/month) beats Zilliz Serverless ($250-500/month) consistently.

Zilliz Cloud Cost Optimization: 6 Strategies

1. Use DiskANN for Large Collections

If recall > 95% is acceptable, DiskANN stores vectors on SSD instead of RAM. At 100M vectors:

- HNSW (in-memory): requires 8-16 CUs ($550-1,100/month in compute)

- DiskANN (on-disk): requires 2-4 CUs ($140-280/month in compute) + extra SSD storage

That is a 60-75% compute reduction with minimal latency impact for most workloads.

2. Choose Dedicated Over Serverless for Steady Workloads

If your queries run 16+ hours per day at consistent volume, Dedicated clusters are cheaper than Serverless pay-per-CU-hour. The crossover:

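- Serverless at 15 CU-hours/day = $43/month

- Dedicated 1 CU (24/7) = $69/month

- Serverless at 30 CU-hours/day = $86/month

- Dedicated 2 CU (24/7) = $138/month

At the same $0.096 rate, one Dedicated CU running 24/7 costs the same as 24 Serverless CU-hours per day. If your average daily consumption stays below that line for the cluster size you would otherwise provision, Serverless is cheaper. If sustained traffic pushes Serverless consumption past it, Dedicated is cheaper because its cost is capped at the provisioned CUs.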
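The crossover can be sketched as a comparison of the two bills. The helper names are illustrative, and the comparison assumes (hypothetically) that the chosen number of Dedicated CUs can actually serve the same load that Serverless would bill for.

```python
CU_HOUR_RATE = 0.096  # USD per CU-hour, the same rate for both models in this post
DAYS_PER_MONTH = 30


def serverless_cost(cu_hours_per_day: float) -> float:
    """Monthly Serverless compute bill for a given average daily consumption."""
    return cu_hours_per_day * DAYS_PER_MONTH * CU_HOUR_RATE


def dedicated_cost(cus: int) -> float:
    """Monthly bill for a Dedicated cluster running 24/7."""
    return cus * 24 * DAYS_PER_MONTH * CU_HOUR_RATE


def break_even_cu_hours_per_day(cus: int) -> int:
    """Daily Serverless consumption at which both bills are equal."""
    return cus * 24


def cheaper_option(cu_hours_per_day: float, cus: int) -> str:
    """Assumes `cus` Dedicated CUs are enough to serve the same workload."""
    if serverless_cost(cu_hours_per_day) < dedicated_cost(cus):
        return "serverless"
    return "dedicated"


for daily in (15, 24, 30):
    print(f"{daily} CU-hours/day vs 1 CU dedicated: {cheaper_option(daily, 1)}")
```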

3. Partition Your Collections

Milvus supports partition keys that physically separate data. Benefits:

- Queries against a single partition skip all other data (less compute)

- Partitions can be loaded/released independently (memory savings)

- Ideal for multi-tenant workloads (partition per customer)

At 50M vectors with 100 partitions, queries that target one partition search 500K vectors instead of 50M. That is a 100x reduction in compute cost per query.
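The effect is easy to see in a toy model. The class below is a plain-Python stand-in for a partitioned collection, not the Milvus API; the class and method names are hypothetical, and it assumes evenly sized partitions, as the 100x figure above does.

```python
from collections import defaultdict


class PartitionedCollection:
    """Toy stand-in for a collection split by a partition key (not the Milvus API)."""

    def __init__(self):
        self._partitions = defaultdict(list)

    def insert(self, partition_key, vector):
        self._partitions[partition_key].append(vector)

    def total_vectors(self):
        return sum(len(p) for p in self._partitions.values())

    def vectors_scanned(self, partition_key=None):
        """How many vectors a brute-force query would have to touch."""
        if partition_key is None:
            return self.total_vectors()
        return len(self._partitions.get(partition_key, []))


collection = PartitionedCollection()
for tenant in range(100):
    for i in range(50):
        collection.insert(f"tenant-{tenant}", [float(tenant), float(i)])

full = collection.vectors_scanned()
scoped = collection.vectors_scanned("tenant-7")
print(f"full scan: {full}, single partition: {scoped}, reduction: {full // scoped}x")
```

With skewed tenants the reduction varies per partition, so the 100x figure is a best case for uniformly distributed data.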

4. Use Scalar Quantization

Milvus supports IVF_SQ8 (scalar quantization) that compresses vectors from float32 to int8:

- Memory reduction: 4x

- Recall impact: typically less than 2% loss

- Cost impact: fewer CUs needed for the same dataset size

At 100M vectors, SQ8 means you can run on 4 CUs instead of 16 CUs. Annual savings: roughly $10,000 (12 fewer CUs x $69.12/month x 12 months).
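To make the 4x figure concrete, here is a minimal sketch of 8-bit scalar quantization. It is a simplification for illustration: it derives the value range from each vector's own min and max, which is an assumption of this example and not a description of how Milvus implements IVF_SQ8 internally. Function names are hypothetical.

```python
import array
import random


def sq8_encode(vec):
    """Map each float to one byte using the vector's own min/max range."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for constant vectors
    codes = bytes(min(255, round((x - lo) / scale)) for x in vec)
    return codes, lo, scale


def sq8_decode(codes, lo, scale):
    """Reconstruct approximate floats from the 8-bit codes."""
    return [lo + c * scale for c in codes]


random.seed(42)
vector = [random.uniform(-1.0, 1.0) for _ in range(1536)]
codes, lo, scale = sq8_encode(vector)

float32_bytes = len(array.array("f", vector).tobytes())  # 4 bytes per dimension
max_error = max(abs(a - b) for a, b in zip(vector, sq8_decode(codes, lo, scale)))
print(f"float32: {float32_bytes} bytes, SQ8: {len(codes)} bytes, max error: {max_error:.4f}")
```

Each dimension shrinks from four bytes to one, and the reconstruction error per dimension is bounded by half a quantization step, which is why recall typically drops only slightly.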

5. Offload Cold Collections to Warm Storage

Collections not queried in the last 30+ days should move to warm storage ($0.008/GB vs $0.02/GB). That is a 60% reduction in storage costs for archival data that you still want available for occasional queries.

6. Right-Size Index Parameters

Milvus index parameters dramatically affect compute consumption:

| Parameter | Effect of Over-Sizing | Recommended Approach |

|---|---|---|

| nlist (IVF) | Higher = more clusters to train and store | Start at sqrt(N), tune up if recall is low |

| ef_construction (HNSW) | Higher = slower index, better recall | 128-256 for most workloads |

| M (HNSW) | Higher = more memory per vector | 16-32 for most workloads |

| nprobe (query) | Higher = more clusters scanned per query | Start at nlist/10, tune for latency budget |

Over-provisioning these parameters wastes CU-hours on both index building and query execution.
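The starting points in the table can be turned into a small helper. This only encodes the rules of thumb from this post (sqrt(N) for nlist, nlist/10 for nprobe); the function name is illustrative, and the results are starting values to tune against your own recall and latency measurements, not recommended settings.

```python
import math


def starting_ivf_params(n_vectors: int) -> dict:
    """Initial IVF parameters following the heuristics in the table above."""
    nlist = max(1, round(math.sqrt(n_vectors)))
    nprobe = max(1, nlist // 10)
    return {"nlist": nlist, "nprobe": nprobe}


for n in (1_000_000, 10_000_000, 100_000_000):
    print(f"{n:>11,} vectors -> {starting_ivf_params(n)}")
```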

The Bottom Line

Milvus is not a vector database for everyone. It is a vector database for teams building at scale with demanding requirements around distribution, GPU acceleration, and streaming ingestion. At 100M+ vectors, nothing matches its architecture.

At smaller scales (under 10M vectors), you are paying a complexity premium. The distributed architecture that makes Milvus shine at billions of vectors is unnecessary overhead at millions. Use Qdrant Cloud for the cheapest managed vector search, or Pinecone Serverless for the simplest developer experience.

If you are running vector database infrastructure that costs more than $500/month and growing, our team at LeanOps specializes in AI infrastructure cost optimization. We audit vector database deployments, recommend architecture changes, and typically cut costs by 40-60% within 60 days. Get a free Cloud Waste Assessment to find out where your AI infrastructure budget is going.
