The Open-Source Vector Database With a Cloud That Actually Makes Sense Financially

Qdrant has quietly become the vector database of choice for teams that care about cost. While Pinecone grabs headlines with its marketing budget, Qdrant has been winning on three fronts that matter to engineering teams: it is open source (you can leave anytime), it is written in Rust (genuinely fast), and its managed cloud pricing does not punish you for growing.

We have deployed Qdrant for several clients at LeanOps as part of AI infrastructure cost optimization projects. The typical scenario: a team starts on Pinecone, their vector count grows past 10 million, and their monthly bill crosses $300-500. They ask "is there something cheaper?" The answer is usually Qdrant, either self-hosted or on Qdrant Cloud, at 40-60% of the Pinecone cost.

But "cheaper" has nuances. Qdrant Cloud is not always the right choice. This post gives you the real pricing in 2026, honest cost modeling at different scales, and a clear framework for deciding between Qdrant Cloud, self-hosted Qdrant, and Pinecone.

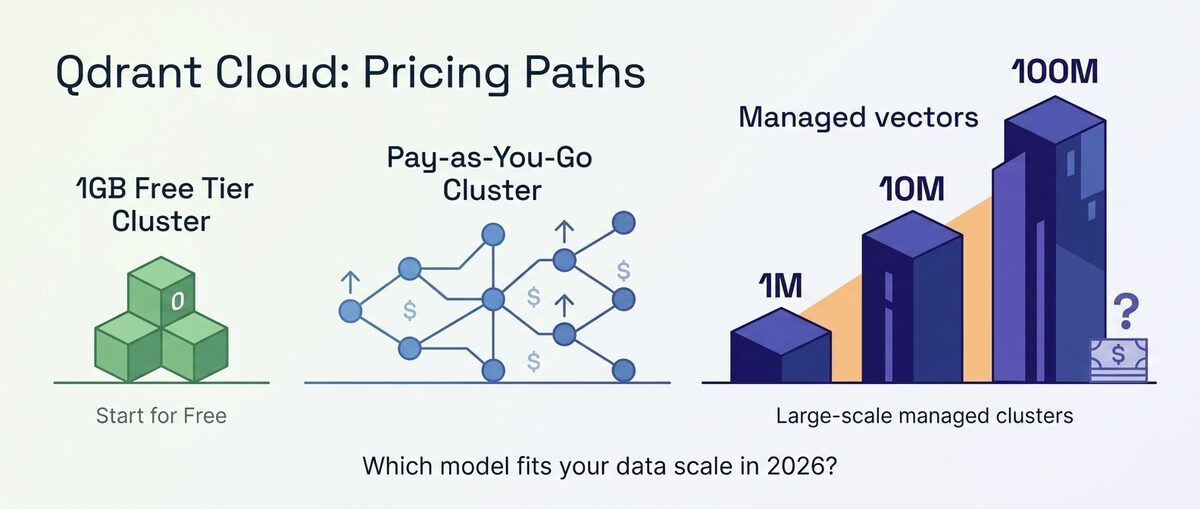

Qdrant Cloud Pricing in 2026: Complete Breakdown

Qdrant Cloud uses a resource-based pricing model. You pay for the cluster resources you provision (RAM, CPU, disk), not per query or per vector.

Cluster Pricing

| Resource | Rate | Monthly Equivalent |
|---|---|---|
| RAM | $0.078/GB-hour | ~$57/GB/month |
| vCPU | Bundled with RAM tier | Included |
| Disk (SSD) | $0.00015/GB-hour | ~$0.11/GB/month |
| Disk (NVMe) | $0.00025/GB-hour | ~$0.18/GB/month |
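The monthly equivalents follow from the hourly rates. A minimal sketch of the conversion, assuming a 730-hour month (8,760 hours / 12); the function name is illustrative and not part of any Qdrant tooling:

```python
HOURS_PER_MONTH = 730  # assumption: 8,760 hours per year divided by 12


def monthly_cost(rate_per_gb_hour: float, gigabytes: float) -> float:
    """Convert an hourly per-GB rate into an approximate monthly cost."""
    return rate_per_gb_hour * gigabytes * HOURS_PER_MONTH


ram_per_gb = monthly_cost(0.078, 1)    # about 56.94, shown as ~$57 in the table
ssd_per_gb = monthly_cost(0.00015, 1)  # about 0.11
small_cluster = round(ram_per_gb) * 2  # 2 GB tier at the rounded $57/GB rate

print(f"RAM: ${ram_per_gb:.2f}/GB/month")
print(f"SSD: ${ssd_per_gb:.2f}/GB/month")
print(f"Small (2 GB): ${small_cluster}/month")
```

The cluster prices in the next table are multiples of the rounded $57/GB figure, which is why a 2 GB cluster lands at $114.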

Pre-Configured Cluster Sizes

| Cluster Size | RAM | vCPU | Disk | Monthly Cost (approx) |
|---|---|---|---|---|
| Free | 1 GB | 0.5 | 4 GB | $0 |
| Small | 2 GB | 0.5 | 8 GB | $114 |
| Medium | 4 GB | 1 | 16 GB | $228 |
| Large | 8 GB | 2 | 32 GB | $456 |
| XLarge | 16 GB | 4 | 64 GB | $912 |
| Custom | Configurable | Configurable | Configurable | Varies |

Free Tier Details

| Feature | Limit |
|---|---|
| Cluster size | 1 GB RAM, 0.5 vCPU, 4 GB disk |
| Vector capacity | ~250K vectors (768-dim) or ~125K (1536-dim) |
| Collections | Unlimited |
| API requests | No rate limit (limited by cluster size) |
| Duration | Permanent (no expiration) |
| Regions | 1 |
| Backups | Manual only |

The free tier is genuinely usable. Unlike some "free" tiers that throttle you into upgrading within a week, Qdrant's 1GB cluster runs without time pressure. For a side project or a low-traffic RAG app, it is legitimately free forever.

Additional Costs

| Feature | Cost |
|---|---|
| Automated backups | $0.03/GB/month |
| Cross-region replication | 2x cluster cost (replica in second region) |
| Private networking (VPC peering) | Included in Enterprise |
| Support (Standard) | Included |
| Support (Priority) | Custom pricing |

How Qdrant Cloud Sizing Works

How you size a Qdrant cluster determines your cost. The key constraint is RAM: all vector data (or at least the HNSW index) must fit in RAM for low-latency queries.

RAM Requirements Per Vector

| Dimension | Bytes per Vector (float32) | Vectors per GB RAM | With Scalar Quantization |
|---|---|---|---|
| 384 | 1,536 bytes | ~650,000 | ~1,300,000 |
| 768 | 3,072 bytes | ~325,000 | ~650,000 |
| 1024 | 4,096 bytes | ~245,000 | ~490,000 |
| 1536 | 6,144 bytes | ~163,000 | ~326,000 |
| 3072 | 12,288 bytes | ~81,000 | ~162,000 |

The float32 per-GB figures are raw vector storage (10^9 bytes divided by bytes per vector), so they do not yet include HNSW index overhead, which adds roughly 30-40% on top. Real-world capacity is typically 60-70% of the theoretical maximum once you leave headroom for the index, metadata, payload storage, and query processing.

Practical rule of thumb: For 1536-dimension vectors with metadata, budget approximately 100,000-120,000 vectors per GB of RAM in production. This leaves sufficient headroom for stable query performance.
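The arithmetic behind the table and the rule of thumb can be sketched in a few lines. This assumes decimal gigabytes (10^9 bytes), which reproduces the float32 column above, and applies the 60-70% headroom factor from the text; the function names are illustrative:

```python
BYTES_PER_FLOAT32 = 4
GB = 10**9  # assumption: decimal gigabytes, which matches the table's figures


def raw_vectors_per_gb(dim: int, bytes_per_value: int = BYTES_PER_FLOAT32) -> int:
    """Vectors that fit in 1 GB counting only raw vector bytes."""
    return GB // (dim * bytes_per_value)


def production_vectors_per_gb(dim: int, headroom=(0.60, 0.70)) -> tuple:
    """Apply the 60-70% real-world capacity factor to the raw figure."""
    raw = raw_vectors_per_gb(dim)
    return int(raw * headroom[0]), int(raw * headroom[1])


for dim in (384, 768, 1024, 1536, 3072):
    low, high = production_vectors_per_gb(dim)
    print(f"{dim:>5} dims: {dim * BYTES_PER_FLOAT32:>6} bytes/vector, "
          f"raw {raw_vectors_per_gb(dim):>7}/GB, production {low}-{high}/GB")
```

For 1536 dimensions this gives roughly 98,000-114,000 vectors per GB, in line with the 100,000-120,000 budget above.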

Sizing Examples

| Vector Count | Dimension | Recommended Cluster | Monthly Cost |
|---|---|---|---|
| 100K | 1536 | Free (1GB) | $0 |
| 500K | 1536 | Small (2GB) | $114 |
| 1M | 1536 | Medium (4GB) | $228 |
| 2M | 1536 | Large (8GB) | $456 |
| 5M | 1536 | XLarge (16GB) | $912 |
| 10M | 1536 | 2x XLarge or Custom (32GB) | $1,824 |

Wait. $1,824/month for 10M vectors? That seems high. Let me be transparent about what happens here: at larger scales, you should use quantization and disk-based indexes to reduce RAM requirements significantly.

With Scalar Quantization (Recommended for 5M+ Vectors)

Scalar quantization reduces each float32 dimension to uint8, cutting RAM usage by roughly 75% while losing less than 1% recall in most benchmarks.
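To make the float32-to-uint8 idea concrete, here is a minimal pure-Python sketch of scalar quantization. It illustrates the general technique only and is not Qdrant's internal implementation; the fixed bounds `lo` and `hi` are an assumption (Qdrant derives its bounds from the data, controlled by the `quantile` setting):

```python
def scalar_quantize(vector, lo, hi):
    """Map each float to a uint8 code in [0, 255], clipping values outside [lo, hi]."""
    scale = (hi - lo) / 255
    return bytes(min(255, max(0, round((x - lo) / scale))) for x in vector)


def dequantize(codes, lo, hi):
    """Approximate the original floats from their uint8 codes."""
    scale = (hi - lo) / 255
    return [lo + c * scale for c in codes]


vector = [-0.42, 0.0, 0.13, 0.87, -1.0, 1.0]
codes = scalar_quantize(vector, -1.0, 1.0)
restored = dequantize(codes, -1.0, 1.0)
max_error = max(abs(a - b) for a, b in zip(vector, restored))

print(f"{len(vector) * 4} bytes as float32 -> {len(codes)} bytes as uint8")
print(f"largest reconstruction error: {max_error:.5f}")
```

Each value now costs 1 byte instead of 4, and the reconstruction error is bounded by half a quantization step, which is why recall loss tends to be small.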

| Vector Count | Dimension | With Quantization Cluster | Monthly Cost |
|---|---|---|---|
| 5M | 1536 | Medium (4GB) + disk index | $228 |
| 10M | 1536 | Large (8GB) + disk index | $456 |
| 50M | 1536 | Custom (32GB) + disk index | $1,824 |
| 100M | 1536 | Custom (64GB) + disk index | $3,648 |

That is more reasonable. Quantization is not optional for cost-effective Qdrant at scale. It is essential.

Real-World Cost Modeling: Qdrant Cloud vs Pinecone vs Self-Hosted

Let us compare the three most common options at realistic scales.

1M Vectors, 1536 Dimensions, 100K Queries/Day

| Solution | Monthly Cost | Notes |
|---|---|---|
| Qdrant Cloud (Medium) | $228 | 4GB cluster, includes all queries |
| Qdrant Cloud (Small + quantization) | $114 | 2GB with scalar quantization |
| Pinecone Serverless | $25-60 | Depends on RU consumption per query |
| Self-hosted Qdrant (AWS t3.medium) | $35 | 4GB RAM, you manage it |
| Pinecone Pods (p1.x2) | $192 | Fixed capacity, includes queries |

At 1M vectors with moderate query volume, Pinecone Serverless is cheapest because its pay-per-query model works well at low scale. Qdrant Cloud's fixed cluster cost makes it more expensive for small workloads. Self-hosted is cheapest overall but adds operational burden.

10M Vectors, 1536 Dimensions, 500K Queries/Day

| Solution | Monthly Cost | Notes |
|---|---|---|
| Qdrant Cloud (Large + quantization) | $456 | 8GB with scalar quantization |
| Pinecone Serverless | $370 | $120 storage + $250 read units |
| Self-hosted Qdrant (AWS r6g.large) | $80 | 16GB RAM, Spot pricing |
| Pinecone Pods | $700-1,200 | Multiple pods needed |

At 10M vectors, the picture shifts. Pinecone Serverless is still competitive, but Qdrant Cloud with quantization is only 23% more expensive while giving you unlimited queries (no per-read-unit charges). Self-hosted is roughly 4.6-5.7x cheaper than either managed option.

50M Vectors, 1536 Dimensions, 2M Queries/Day

| Solution | Monthly Cost | Notes |
|---|---|---|
| Qdrant Cloud (Custom 32GB + quant) | $1,824 | Scalar quantization + disk index |
| Pinecone Serverless | $2,700 | $600 storage + $2,100 read units |
| Self-hosted Qdrant (AWS r6g.2xlarge) | $200 | 64GB RAM, Spot, handles 50M easily |
| Pinecone Pods | $3,000+ | Enterprise tier required |

At 50M vectors, Qdrant Cloud saves 32% versus Pinecone Serverless and significantly more versus Pinecone Pods. But the real story here is self-hosted: a $200/month Spot instance handles 50M vectors comfortably. The managed options cost 9-14x more.
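The percentages and multiples quoted in these comparisons can be checked with a short script. The figures are the monthly costs from the 10M and 50M tables above; the helper names are illustrative:

```python
def percent_more(a: float, b: float) -> float:
    """How much more (positive) or less (negative) a costs than b, in percent."""
    return (a - b) / b * 100


def times_cheaper(cheap: float, expensive: float) -> float:
    """How many times cheaper the first option is than the second."""
    return expensive / cheap


# Monthly costs in USD, copied from the comparison tables above.
scenarios = {
    "10M": {"qdrant_cloud": 456, "pinecone_serverless": 370, "self_hosted": 80},
    "50M": {"qdrant_cloud": 1824, "pinecone_serverless": 2700, "self_hosted": 200},
}

for scale, cost in scenarios.items():
    delta = percent_more(cost["qdrant_cloud"], cost["pinecone_serverless"])
    vs_qdrant = times_cheaper(cost["self_hosted"], cost["qdrant_cloud"])
    vs_pinecone = times_cheaper(cost["self_hosted"], cost["pinecone_serverless"])
    print(f"{scale}: Qdrant Cloud vs Pinecone Serverless {delta:+.0f}%, "
          f"self-hosted {vs_qdrant:.1f}x / {vs_pinecone:.1f}x cheaper")
```

A positive percentage means Qdrant Cloud costs more than Pinecone Serverless at that scale; a negative one means it costs less.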

The Crossover Summary

| Scale | Cheapest Option | Second Cheapest |
|---|---|---|
| Under 500K vectors | Pinecone Serverless (or Qdrant Free) | Self-hosted |
| 500K - 5M vectors | Self-hosted | Tie (Qdrant Cloud vs Pinecone) |
| 5M - 20M vectors | Self-hosted | Qdrant Cloud (with quantization) |
| 20M+ vectors | Self-hosted | Qdrant Cloud (40-50% cheaper than Pinecone) |

Optimization Playbook: Cut Qdrant Cloud Costs 50-75%

The pricing tables above assume default configurations. In practice, teams that apply quantization and indexing strategies cut their Qdrant Cloud bill by half or more. Here is what actually moves the needle.

Scalar Quantization: 75% RAM Reduction, Direct Cost Cut

Scalar quantization converts each float32 dimension to uint8 (1 byte instead of 4). RAM usage drops by 75%, and since Qdrant Cloud bills by RAM, your cluster cost drops proportionally.

At 10M vectors (1536 dimensions):

| Configuration | Cluster Size | Monthly Cost |
|---|---|---|
| Default (float32, HNSW) | 32GB | $1,824 |
| Scalar quantization (int8) | 8GB | $456 |
| Scalar + disk index | 2GB | $114 |

That is a 94% cost reduction from default to fully optimized ($1,824 down to $114). The $114/month configuration maintains >99% recall for most embedding models (OpenAI text-embedding-3, Cohere embed-v3, BGE).

Binary Quantization: 32x Compression for Coarse Retrieval

For use cases that tolerate 2-3% recall loss (initial candidate retrieval followed by re-ranking, recommendation pre-filtering), binary quantization reduces each dimension to a single bit. That is 32x compression versus float32.

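At 10M vectors (1536 dimensions), binary quantization shrinks the searchable vectors to about 1.9GB (10M x 192 bytes), down from roughly 61GB as raw float32. That does not fit the 1GB free tier, but it runs on a Medium ($228/month) cluster with headroom when the original vectors stay on disk for re-ranking.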

The trade-off is real: binary quantization works best with high-dimensional embeddings (1536+) and requires a re-ranking step with original vectors for precision-sensitive applications. But for coarse ranking in a two-stage retrieval pipeline, the economics are unbeatable.
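The two-stage pattern described here can be sketched in pure Python: sign-bit codes and Hamming distance for the coarse pass, then exact dot products on the original vectors for the re-rank. This is an illustration of the technique with toy 4-dimensional data, not Qdrant's implementation; all names are hypothetical:

```python
def binarize(vector):
    """Pack the sign of each dimension into an int bitmask (1 bit per dimension)."""
    bits = 0
    for i, x in enumerate(vector):
        if x > 0:
            bits |= 1 << i
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two binary codes."""
    return bin(a ^ b).count("1")


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def two_stage_search(query, vectors, codes, oversample, top_k):
    q_code = binarize(query)
    # Stage 1: cheap candidate retrieval on the 1-bit codes.
    candidates = sorted(range(len(vectors)),
                        key=lambda i: hamming(q_code, codes[i]))[:oversample]
    # Stage 2: re-rank the candidates with the original float vectors.
    return sorted(candidates, key=lambda i: dot(query, vectors[i]), reverse=True)[:top_k]


vectors = [
    [0.9, 0.1, -0.3, 0.4],
    [-0.8, -0.2, 0.5, -0.1],
    [0.7, 0.3, -0.2, 0.6],
    [0.1, -0.9, 0.8, -0.4],
]
codes = [binarize(v) for v in vectors]
top = two_stage_search([0.8, 0.2, -0.1, 0.5], vectors, codes, oversample=3, top_k=2)
print("top matches:", top)

# Per-vector footprint at 1536 dimensions: 1 bit each vs 4 bytes each.
binary_bytes = 1536 // 8
float32_bytes = 1536 * 4
print(f"{float32_bytes} bytes -> {binary_bytes} bytes ({float32_bytes // binary_bytes}x smaller)")
```

The `oversample` parameter controls how many coarse candidates reach the re-ranker; raising it trades extra stage-2 work for better recall.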

Disk-Based Indexing: Trade 2-5ms Latency for 60-80% RAM Savings

By default, Qdrant keeps the entire HNSW graph in RAM. Disk-based indexing moves the graph to SSD and keeps only hot segments (frequently accessed entry points) in memory.

Impact:

- RAM reduction: 60-80% depending on access patterns

- Latency increase: 2-5ms added to p99 (from ~3ms to ~5-8ms)

- Use case fit: Any application where 10ms p99 is acceptable (most RAG, semantic search, recommendations)

Combined with scalar quantization, disk-based indexing is what makes the $114/month price point possible at 10M vectors.
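As a rough sketch, the combined configuration can be expressed as the JSON body of a collection-creation request. The field names follow Qdrant's REST API as I understand it (`on_disk` under `vectors` and `hnsw_config`, `scalar` under `quantization_config`); verify them against the documentation for your Qdrant version. The collection name and local URL are placeholders:

```python
import json


def collection_config(dim: int) -> dict:
    """Request body for a collection with scalar quantization and on-disk storage."""
    return {
        # Original float32 vectors are memory-mapped from disk.
        "vectors": {"size": dim, "distance": "Cosine", "on_disk": True},
        # The HNSW graph is stored on disk instead of fully in RAM.
        "hnsw_config": {"on_disk": True},
        # Compact int8 copies stay in RAM and serve the search itself.
        "quantization_config": {
            "scalar": {"type": "int8", "quantile": 0.99, "always_ram": True}
        },
    }


body = collection_config(1536)
print(json.dumps(body, indent=2))
# Assumed usage: PUT http://localhost:6333/collections/my_collection with this body.
```

Keeping the quantized vectors in RAM while pushing the originals and the graph to disk is what shifts most of the footprint off the billed resource.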

Batch Ingestion: Reduce Resource Consumption During Peak

Streaming writes during peak query hours force Qdrant to rebuild index segments while serving traffic, consuming extra resources and potentially requiring a larger cluster.

Instead:

- Batch ingestion during off-peak hours (2-6 AM in your primary traffic timezone)

- Use wait=false for bulk upserts to avoid blocking

- Set optimizers_config.indexing_threshold higher during ingestion, then lower it after

This does not reduce your cluster size directly, but it prevents the need to over-provision for concurrent read/write peaks.
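A minimal sketch of the batching side of this, using only the standard library. It builds the request descriptions rather than sending them; the endpoint paths and body shapes follow Qdrant's REST API as I understand it, and the batch size and threshold value are illustrative assumptions to tune for your workload:

```python
from itertools import islice


def batched(points, batch_size):
    """Yield successive lists of at most batch_size points."""
    it = iter(points)
    while True:
        chunk = list(islice(it, batch_size))
        if not chunk:
            return
        yield chunk


def upsert_requests(points, batch_size=1000, collection="my_collection"):
    """Describe one non-blocking upsert request per batch (wait=false)."""
    for chunk in batched(points, batch_size):
        yield {
            "method": "PUT",
            "path": f"/collections/{collection}/points?wait=false",
            "body": {"points": chunk},
        }


def threshold_patch(value: int) -> dict:
    """Body for PATCH /collections/{name} that changes the indexing threshold."""
    return {"optimizers_config": {"indexing_threshold": value}}


points = [{"id": i, "vector": [0.0, 0.0, 0.0]} for i in range(2500)]
requests_to_send = list(upsert_requests(points))
print(len(requests_to_send), "requests,",
      [len(r["body"]["points"]) for r in requests_to_send], "points each")
print("before ingestion:", threshold_patch(200_000))  # hypothetical raised value
```

Sending the raised threshold before the bulk load and restoring the previous value afterwards keeps index rebuilds out of the ingestion window.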

The Combined Impact

With scalar quantization alone, the 10M vector scenario drops from the $1,824/month default to $456/month. Adding disk-based indexing brings it to $114/month. That is now cheaper than Pinecone Serverless at $370/month for the same scale, with unlimited queries included.

| Optimization Stack | 10M Vectors Cost | vs Pinecone ($370) |
|---|---|---|
| Qdrant Cloud (default) | $1,824/month | 4.9x more |
| + Scalar quantization | $456/month | 1.2x more |
| + Scalar quant + disk index | $114/month | 3.2x cheaper |
| Self-hosted (r6g.large, Spot) | $80/month | 4.6x cheaper |

Self-Hosted Qdrant: The $80/Month Setup That Handles 10M Vectors

For teams with basic AWS/DevOps capability, self-hosted Qdrant on a single instance is the cheapest path to production vector search. Here is the exact setup we deploy for clients at LeanOps.

Instance Selection

AWS r6g.large (ARM Graviton2):

- 16GB RAM, 2 vCPU

- Spot price: ~$0.11/hour = $80/month

- On-Demand fallback: $0.20/hour = $144/month

- ARM-native Qdrant binary available (no performance penalty)

Why r6g.large: Qdrant is memory-bound, not CPU-bound. The 16GB RAM on an r6g.large holds 10M vectors at 1536 dimensions with scalar quantization enabled (requires ~6-8GB effective). The remaining RAM serves as OS cache and query buffer.

Docker Compose Configuration

    services:
      qdrant:
        image: qdrant/qdrant:latest
        ports:
          - "6333:6333"   # REST API
          - "6334:6334"   # gRPC API
        volumes:
          - ./qdrant_storage:/qdrant/storage
        environment:
          - QDRANT__STORAGE__PERFORMANCE__MAX_OPTIMIZATION_THREADS=1
        deploy:
          resources:
            limits:
              memory: 14G

The 14GB memory limit leaves 2GB for the OS and prevents OOM kills. The single optimization thread prevents CPU contention during index rebuilds.

Monitoring: Know When to Scale

Set a CloudWatch alarm at 80% RAM utilization. At typical growth rates (adding 500K-1M vectors per month), you have 4-6 months of headroom before needing to upgrade to r6g.xlarge (32GB, $160/month Spot).

Key metrics to monitor:

- app_info_memory_usage_bytes: primary scaling signal

- collections_total_segments: if segments grow past 20, trigger optimization

- grpc_responses_duration_seconds (p99): latency degradation signals memory pressure
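The checks above can be sketched as a small script that parses Prometheus-style metrics text and applies the 80% RAM and 20-segment thresholds. The sample payload is hypothetical and uses the metric names listed in this section; confirm the exact names your Qdrant version exposes before relying on them:

```python
def parse_metrics(text: str) -> dict:
    """Parse 'name value' lines from Prometheus text format, skipping comments."""
    metrics = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        name, _, value = line.rpartition(" ")
        metrics[name] = float(value)
    return metrics


def needs_scaling(used_bytes: float, limit_bytes: float, threshold: float = 0.80) -> bool:
    """True once memory use reaches the alarm threshold."""
    return used_bytes / limit_bytes >= threshold


# Hypothetical scrape output; real values come from the instance's metrics endpoint.
sample = """\
# TYPE app_info_memory_usage_bytes gauge
app_info_memory_usage_bytes 12500000000
collections_total_segments 14
"""

metrics = parse_metrics(sample)
memory_limit = 14 * 2**30  # the 14G container limit from the Compose file
scale_up = needs_scaling(metrics["app_info_memory_usage_bytes"], memory_limit)
optimize = metrics["collections_total_segments"] > 20

print(f"scale up: {scale_up}, trigger optimization: {optimize}")
```

In this sample, memory sits at about 83% of the limit, so the scaling alarm fires while the segment count stays under the optimization trigger.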

Backup Strategy

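Daily snapshots to S3 cost about $0.80/month at 10M vectors:

    # Cron job: daily at 3 AM UTC (a crontab entry must stay on a single line)
    # Assumes snapshots are visible on the host at this path; adjust it to your snapshots_path and volume mount
    0 3 * * * curl -sf -X POST http://localhost:6333/collections/my_collection/snapshots && aws s3 cp /qdrant/storage/snapshots/ s3://my-qdrant-backups/ --recursive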

Snapshot size at 10M vectors with quantization: ~4-6GB compressed. S3 Standard costs $0.023/GB/month. Seven daily snapshots = ~35GB = $0.80/month.
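The backup figure is simple arithmetic. A quick sketch using the S3 Standard rate quoted above and a 5GB snapshot as the midpoint of the 4-6GB range; the function name is illustrative:

```python
S3_STANDARD_PER_GB_MONTH = 0.023  # rate quoted in the text


def retained_backup_cost(snapshot_gb: float, retained: int,
                         rate: float = S3_STANDARD_PER_GB_MONTH) -> float:
    """Monthly storage cost for a fixed number of retained snapshots."""
    return snapshot_gb * retained * rate


cost = retained_backup_cost(5, 7)      # midpoint: 7 snapshots of 5 GB = 35 GB
low = retained_backup_cost(4, 7)
high = retained_backup_cost(6, 7)
print(f"~${cost:.2f}/month (range ${low:.2f}-${high:.2f})")
```

This only holds if old snapshots are pruned to seven; without a lifecycle rule or cleanup step, storage grows by one snapshot per day.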

Total Self-Hosted Cost Breakdown

| Component | Monthly Cost |
|---|---|
| r6g.large (Spot) | $80 |
| EBS gp3 (100GB) | $8 |
| S3 backups | $0.80 |
| CloudWatch alarms | $0.30 |
| Total | ~$89 |

Compare this to $456/month on Qdrant Cloud (with quantization) or $370/month on Pinecone Serverless for the same 10M vector workload. Self-hosted is 4-5x cheaper with approximately 2 hours/month of maintenance time.

Qdrant Cloud vs Pinecone: Beyond Pricing

Cost is not the only factor. Here is where each platform wins on capabilities.

Qdrant Cloud Advantages

- No per-query billing. Once your cluster is running, queries are unlimited. This makes costs predictable and eliminates surprise bills from traffic spikes.

- Open-source portability. If Qdrant Cloud gets too expensive, you can download your data and self-host the exact same software. No vendor lock-in.

- Quantization built-in. Scalar quantization reduces memory requirements by 4x and binary quantization by up to 32x, with recall loss that is typically small. This directly reduces cluster costs.

- Payload filtering. Rich filtering on metadata without the "read unit multiplier" that Pinecone applies to filtered queries.

- Multitenancy. Built-in tenant isolation without the namespace overhead of Pinecone.

Pinecone Advantages

- True serverless (scale to zero). When nobody queries, you pay only storage ($2/GB). Qdrant Cloud clusters run 24/7 at their provisioned size.

- Zero sizing decisions. You never think about RAM, CPU, or disk. Pinecone handles all capacity planning automatically.

- Faster cold-start for new projects. Create an index, upsert vectors, query. No cluster provisioning, no quantization configuration, no capacity planning.

- Larger ecosystem of integrations. LangChain, LlamaIndex, and most AI frameworks have Pinecone as a first-class integration. Qdrant support is growing but slightly less mature.

When to Choose Each

Choose Qdrant Cloud if:

- You have 5M+ vectors and want predictable monthly costs

- Your query volume is high and variable (unlimited queries on a fixed cluster is better economics)

- You value the escape hatch of self-hosting later

- You want fine control over quantization and indexing configuration

- Your team understands basic cluster sizing

Choose Pinecone if:

- You have under 2M vectors with sporadic, low-volume queries

- Your team has zero infrastructure experience

- You need the fastest possible time-to-production (minutes, not hours)

- Your query traffic is highly bursty (scale-to-zero saves money during idle periods)

5 Strategies to Reduce Qdrant Cloud Costs

1. Enable Scalar Quantization (Save 50-75% on RAM)

This is the single most impactful optimization. Scalar quantization converts float32 vectors to uint8, reducing memory per vector by 4x. Recall loss is typically under 1% for most embedding models.

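    from qdrant_client import QdrantClient
    from qdrant_client.models import ScalarQuantization, ScalarQuantizationConfig, ScalarType

    # Assumes a Qdrant instance at this URL; use your own cluster URL and API key
    client = QdrantClient(url="http://localhost:6333")

    client.update_collection(
        collection_name="my_collection",
        quantization_config=ScalarQuantization(
            scalar=ScalarQuantizationConfig(
                type=ScalarType.INT8,  # store each dimension as 1 byte instead of 4
                quantile=0.99,         # ignore the most extreme 1% of values when setting bounds
                always_ram=True,       # keep the quantized vectors in RAM for fast search
            )
        ),
    )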

A collection that needs 16GB of RAM without quantization drops to 4GB with it. That is a 75% cost reduction ($912/month to $228/month).

2. Use On-Disk Indexes for Cold Data

Qdrant supports storing the HNSW index on disk rather than entirely in RAM. For collections where sub-10ms latency is not required (batch processing, offline recommendations), disk-based indexes let you use a smaller, cheaper cluster.

3. Right-Size Your Cluster Monthly

Qdrant Cloud lets you resize clusters. If your vector count grew from 2M to 3M but you provisioned for 5M, you are over-paying. Monitor actual memory usage via Qdrant's metrics endpoint and review your cluster size every month.
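One way to turn that review into a repeatable check is to map observed memory usage onto the pre-configured tiers. This sketch uses the tier sizes and prices from the cluster table earlier in the post and assumes an 80% target utilization (borrowed from the alarm threshold in the self-hosted section); the function name is illustrative:

```python
# (name, RAM in GB, monthly cost in USD) from the pre-configured cluster table.
TIERS = [
    ("Free", 1, 0),
    ("Small", 2, 114),
    ("Medium", 4, 228),
    ("Large", 8, 456),
    ("XLarge", 16, 912),
]


def smallest_tier(observed_ram_gb: float, target_utilization: float = 0.80):
    """Smallest tier that keeps observed usage at or below the target utilization."""
    for name, ram_gb, cost in TIERS:
        if observed_ram_gb <= ram_gb * target_utilization:
            return name, ram_gb, cost
    return "Custom", None, None


current = ("XLarge", 16, 912)
recommended = smallest_tier(5.5)  # e.g. 5.5 GB observed on a 16 GB cluster
savings = current[2] - recommended[2]
print(f"recommended: {recommended[0]} ({recommended[1]} GB), saves ${savings}/month")
```

Feed it the peak memory reading from the last month rather than the average, so a downsize does not land you above the alarm threshold at busy times.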

4. Use Binary Quantization for Coarse Ranking

For re-ranking architectures (coarse retrieval + fine re-ranking), binary quantization reduces vectors to 1 bit per dimension. This is 32x smaller than float32 and enables dramatically smaller clusters for the initial retrieval stage. Combine with a smaller, high-accuracy re-ranker for final results.

5. Consider Hybrid (Cloud + Self-Hosted)

Run your hot, frequently-queried collections on Qdrant Cloud for managed reliability. Move cold or archival collections to a cheap self-hosted instance. This hybrid approach can reduce total costs by 30-50% while keeping critical paths fully managed.

The Bottom Line

Qdrant Cloud is the most cost-effective managed vector database for workloads above 5M vectors in 2026. Its resource-based pricing model (pay for RAM, not per query) rewards teams that optimize their memory footprint with quantization and smart indexing. In the modeling above, it costs about 32% less than Pinecone Serverless at 50M vectors, and at 10M vectors it undercuts Pinecone once quantization is combined with disk-based indexing, all with unlimited query throughput.

The trade-off is clear: Qdrant Cloud requires more upfront sizing decisions. You need to understand your vector dimensions, choose quantization settings, and select the right cluster size. Pinecone abstracts all of that away at a higher price.

For teams where vector search infrastructure cost is a growing concern, the combination of Qdrant's open-source flexibility and managed cloud convenience hits a sweet spot. And if costs grow further, the exit path to self-hosted is clean because it is the same software.

If your AI infrastructure costs are climbing and you want to understand whether Qdrant, Pinecone, or self-hosted makes the most financial sense at your scale, our cloud cost optimization team works with AI/ML teams on exactly this analysis. Start with a free Cloud Waste Assessment.

For a broader comparison of vector database pricing, see our vector database cost comparison for 2026 and our breakdown of Pinecone pricing in 2026.
