Your XS Warehouse Costs $3/Hour. Your Team Is Running a Medium 24/7. Do the Math.

Here is a conversation we have at least twice a month with new clients. "Our Snowflake bill is $15,000 this month and we genuinely do not understand why. We only have 2TB of data."

The answer is always the same: Snowflake's cost has almost nothing to do with how much data you store. It has everything to do with how many warehouses are running, how large they are, and how long they stay on between queries.

A single Medium warehouse left running 24/7 on Enterprise edition burns 4 credits/hour x 24 hours x 30 days x $3/credit = $8,640/month. That same workload, right-sized to Small with auto-suspend at 60 seconds, would cost under $800/month as long as the warehouse is billed for fewer than about 133 hours a month (a Small costs $6/hour), with comparable query performance. Same data. Same queries. Same results. 90% less cost.

Snowflake's credit-based pricing model is elegant in theory but punishing in practice. Unlike BigQuery's on-demand model (which charges per byte scanned and costs nothing when idle), Snowflake charges for time. Every second a warehouse is "on" costs money, whether it is running a query or waiting for the next one. Most teams wildly over-provision warehouse sizes "just in case" and set auto-suspend to 5-10 minutes, bleeding credits around the clock.

This post breaks down Snowflake's complete pricing model in 2026, models real-world costs at three different team sizes, shows you exactly where money leaks, and gives you the specific settings that save 40-60% within a single billing cycle.

If you are also evaluating BigQuery, see our complete BigQuery pricing breakdown for a direct comparison.

Snowflake Pricing Model: How Credits Actually Work

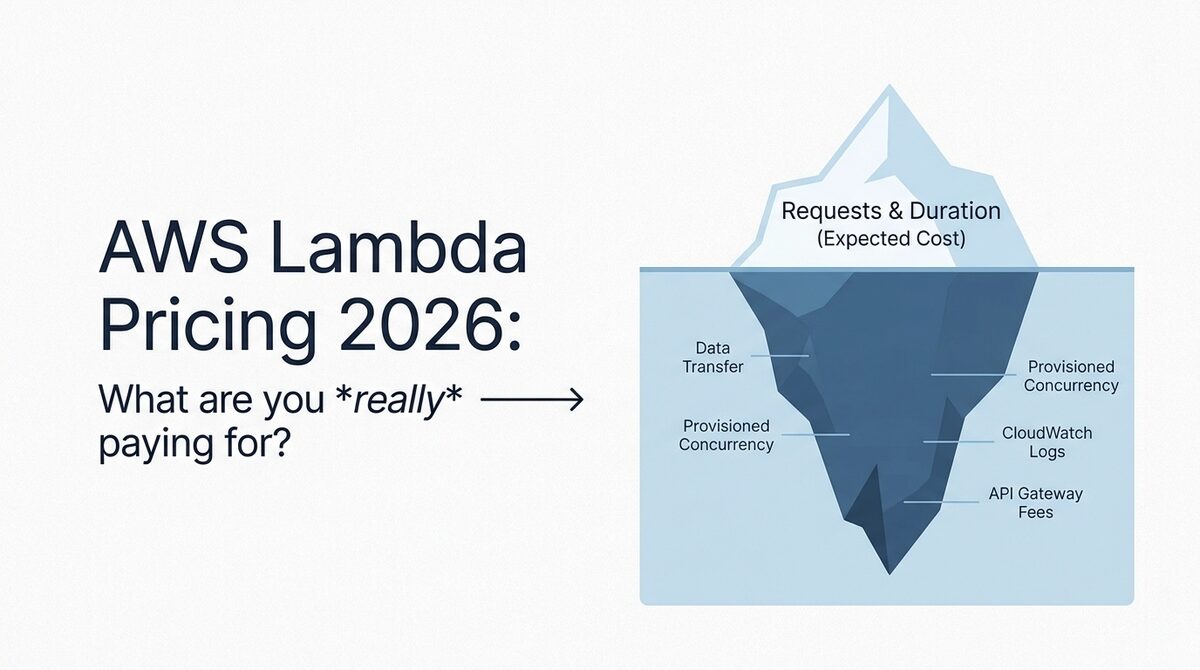

Snowflake bills on three dimensions: compute (credits), storage (per TB), and data transfer (per TB moved). Compute is where 70-85% of your bill lives.

Credit Pricing by Edition (2026)

| Edition | AWS Price/Credit | Azure Price/Credit | GCP Price/Credit | Key Feature |
|---|---|---|---|---|
| Standard | ~$2.00 | ~$2.10 | ~$2.15 | Basic analytics, 1 day Time Travel |
| Enterprise | ~$3.00 | ~$3.15 | ~$3.20 | Multi-cluster warehouses, 90-day Time Travel, masking |
| Business Critical | ~$4.00 | ~$4.20 | ~$4.30 | HIPAA, PCI DSS, failover, encryption everywhere |
| Virtual Private (VPS) | Custom | Custom | Custom | Dedicated infrastructure, highest isolation |

Critical context on credit pricing:

- These are on-demand (pay-as-you-go) rates. Pre-purchased capacity commitments reduce rates by 15-30% depending on volume and term length.

- A 1-year commitment typically saves 15-20%. A 3-year commitment saves 25-30%.

- Per-credit prices vary by cloud, region, and contract. The rates above are approximations based on our experience across 40+ client accounts in 2026. Your actual rate depends on negotiation.

- Credits are consumed by warehouses (queries), Snowpipe (continuous loading), automatic clustering, materialized view maintenance, and serverless features. Not all credits are equal in terms of what triggers them.

Warehouse Sizes and Credit Consumption

This is the table that determines your actual bill. Every warehouse size doubles the credits consumed per hour (and doubles the compute power).

| Warehouse Size | Credits/Hour | Credits/Day (24h) | On-Demand Cost/Hour (Enterprise) | Monthly Cost (24/7) |
|---|---|---|---|---|
| X-Small (XS) | 1 | 24 | $3.00 | $2,160 |
| Small (S) | 2 | 48 | $6.00 | $4,320 |
| Medium (M) | 4 | 96 | $12.00 | $8,640 |
| Large (L) | 8 | 192 | $24.00 | $17,280 |
| X-Large (XL) | 16 | 384 | $48.00 | $34,560 |
| 2X-Large | 32 | 768 | $96.00 | $69,120 |
| 3X-Large | 64 | 1,536 | $192.00 | $138,240 |
| 4X-Large | 128 | 3,072 | $384.00 | $276,480 |

Read that "Monthly Cost (24/7)" column again. A Medium warehouse running continuously costs more per month than most companies' entire S3 bill. And we regularly see teams with 3-5 Medium or Large warehouses running around the clock for workloads that actually need them for 4-6 hours per day.
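The table follows directly from the doubling rule. Here is a minimal Python sketch of that arithmetic, assuming the approximate $3.00 Enterprise on-demand rate used in this post and a 30-day month. The names `CREDITS_PER_HOUR` and `monthly_cost` are ours for illustration, not part of any Snowflake API.

```python
# Credits per hour double with each size step, starting at 1 for X-Small.
SIZES = ["XS", "S", "M", "L", "XL", "2XL", "3XL", "4XL"]
CREDITS_PER_HOUR = {size: 2 ** step for step, size in enumerate(SIZES)}


def monthly_cost(size, hours_per_day=24.0, price_per_credit=3.00, days=30):
    """Estimated monthly on-demand cost in dollars for one warehouse.

    price_per_credit defaults to the approximate Enterprise rate quoted
    above; substitute your own contracted rate.
    """
    return CREDITS_PER_HOUR[size] * hours_per_day * days * price_per_credit


if __name__ == "__main__":
    for size in SIZES:
        print(f"{size:>3}: ${monthly_cost(size):>10,.0f} at 24/7, "
              f"${monthly_cost(size, hours_per_day=6):>9,.0f} at 6 h/day")
```

Under these assumptions, a Medium that is billed for 6 hours a day instead of 24 drops from $8,640 to $2,160 a month.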

Per-Second Billing (With a Catch)

Snowflake bills per second with a 60-second minimum each time a warehouse resumes. This means:

- A query that takes 5 seconds? You pay for 60 seconds.

- A query that takes 90 seconds? You pay for 90 seconds.

- A warehouse that resumes, runs a 3-second query, suspends, then resumes again 2 minutes later? You pay for at least 120 seconds (60-second minimum x 2 resume events).

This 60-second minimum is why auto-suspend timing matters so much. If you set auto-suspend to 60 seconds and your queries come in bursts every 45 seconds, the warehouse never suspends. But if they come every 90 seconds, you pay the 60-second minimum on every resume. Finding the right auto-suspend interval for your query pattern is one of the highest-ROI optimizations.
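The billing rule itself is a one-line floor. The sketch below is a simplified model, not Snowflake's billing code: `uptime_seconds` is the time between a resume and the following suspend, which in practice includes any idle time spent waiting for auto-suspend. The function names are illustrative.

```python
MIN_BILLED_SECONDS = 60  # floor applied each time a warehouse resumes


def billed_seconds(uptime_seconds):
    """Seconds billed for one resume-to-suspend interval."""
    return max(MIN_BILLED_SECONDS, uptime_seconds)


def billed_credits(uptime_seconds, credits_per_hour):
    """Credits billed for one interval at the warehouse's hourly rate."""
    return billed_seconds(uptime_seconds) * credits_per_hour / 3600


# The three cases from the list above
print(billed_seconds(5))                      # 60
print(billed_seconds(90))                     # 90
print(billed_seconds(3) + billed_seconds(3))  # 120
```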

Storage Pricing: The Cheaper Part of Your Bill

Snowflake storage pricing is straightforward and typically represents only 10-20% of total costs. But it has nuances worth understanding.

Storage Rates (2026)

| Component | On-Demand Rate | Capacity Rate | Notes |
|---|---|---|---|
| Active storage | ~$40/TB/month | ~$23/TB/month | Compressed size, not raw |
| Time Travel storage | Same rate | Same rate | Data retained for recovery |
| Fail-safe storage | Same rate | Same rate | 7 days beyond Time Travel |
| Stage storage (internal) | Same rate | Same rate | Files in @stages |

Key things to understand about Snowflake storage:

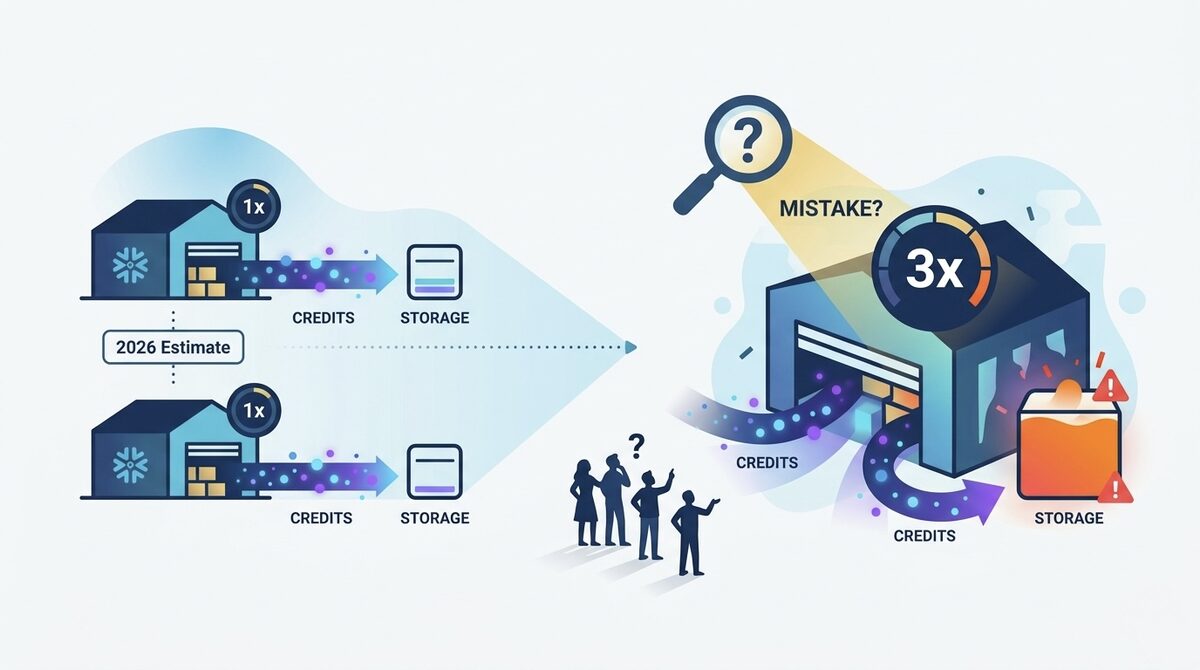

- Compression is aggressive. Snowflake's columnar micro-partitions compress data 3-5x typically. So 1TB of raw CSV data might consume only 200-330GB of billed storage (roughly $5-8/month on capacity pricing, $8-13/month on-demand). This makes Snowflake storage genuinely cheap per raw TB.

- Time Travel adds up. Enterprise edition allows up to 90 days of Time Travel. If you modify large tables frequently, each modification stores a snapshot of the changed data. A 100GB table that gets 10% of rows updated daily accumulates ~10GB/day of Time Travel storage. Over 90 days, that is 900GB of Time Travel storage for a 100GB table.

- Fail-safe is 7 non-configurable days beyond your Time Travel setting. You cannot disable it or reduce it on permanent tables (transient and temporary tables have no Fail-safe period). Budget for ~7 days of additional retention on all modified data.

- Capacity pricing requires commitment. The $23/TB rate requires pre-purchasing storage capacity. On-demand at $40/TB is about 75% higher. For any production workload, the capacity commitment is worth it.

Storage Cost Example

| Scenario | Raw Data | Compressed (3.5x) | Time Travel (90-day, 5% daily churn) | Total Billed | Monthly Cost (Capacity) |
|---|---|---|---|---|---|
| Small team | 500GB | 143GB | ~640GB | 783GB | $18/month |
| Mid-size | 5TB | 1.4TB | ~6.3TB | 7.7TB | $177/month |
| Enterprise | 50TB | 14.3TB | ~64TB | 78.3TB | $1,801/month |
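The storage figures can be reproduced with a few lines of Python. This is a rough sketch under the table's own assumptions (3.5x compression, 5% daily churn, 90 days of Time Travel, $23/TB capacity rate, 1TB = 1,000GB). It ignores Fail-safe, and the function names are hypothetical.

```python
def billed_storage_gb(raw_gb, compression=3.5, daily_churn=0.05,
                      time_travel_days=90):
    """Active plus Time Travel storage in GB, before Fail-safe."""
    active = raw_gb / compression
    # Each day, the churned fraction of the active data is retained.
    time_travel = active * daily_churn * time_travel_days
    return active + time_travel


def monthly_storage_cost(raw_gb, rate_per_tb=23.0, **assumptions):
    """Monthly cost in dollars at a flat per-TB rate (1 TB = 1000 GB)."""
    return billed_storage_gb(raw_gb, **assumptions) / 1000 * rate_per_tb


if __name__ == "__main__":
    for label, raw_gb in [("Small team", 500), ("Mid-size", 5_000),
                          ("Enterprise", 50_000)]:
        print(f"{label}: {billed_storage_gb(raw_gb):,.0f} GB billed, "
              f"${monthly_storage_cost(raw_gb):,.0f}/month")
```

The results land within a few dollars of the table, which rounds the intermediate values. Dropping `time_travel_days` to 7 for a high-churn table shows how much of the bill is retention rather than live data.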

Compare this to compute. A single Medium warehouse running 8 hours/day on Enterprise edition costs $2,880/month. Storage for 50TB of raw data costs less. This is why compute optimization matters 5-10x more than storage optimization in Snowflake.

The Hidden Costs: Serverless Features That Burn Credits Silently

Snowflake has an increasing number of serverless features that consume credits without running a visible warehouse. These are the line items that surprise teams on their first real invoice.

Serverless Credit Consumers

| Feature | Credit Consumption | Typical Monthly Cost | Notes |
|---|---|---|---|
| Snowpipe (continuous ingest) | Per file loaded (~0.06 credits per 1,000 files, plus load compute) | $50-500/month | Scales with file frequency, not size |
| Automatic Clustering | Varies with table size and DML frequency | $100-2,000+/month | Runs continuously on clustered tables |
| Materialized View maintenance | Triggered on base table DML | $50-500/month | Every INSERT/UPDATE/DELETE refreshes MVs |
| Search Optimization Service | Background maintenance | $100-1,000/month | Maintains search access paths |
| Replication | Per-credit for secondary refreshes | $200-5,000/month | Cross-region/cross-cloud replication |
| Serverless Tasks | Per-second, similar to XS warehouse | Varies widely | Background scheduled SQL |

Automatic Clustering is the biggest surprise. Teams enable clustering on large tables for better query performance (which genuinely helps), then forget that Snowflake continuously re-clusters data as new rows arrive. A heavily-updated 1TB table with clustering enabled can consume 500-2,000 credits/month in background maintenance alone. That is $1,500-6,000/month on Enterprise edition.

Snowpipe's overhead is charged per file, not per byte. If your pipeline sends 10,000 small files per hour (common with event streaming into S3), the file overhead alone is roughly 0.6 credits/hour at 0.06 credits per 1,000 files, or about 430 credits/month (around $1,300 on Enterprise) before any load compute. Batching files into fewer, larger loads before triggering Snowpipe cuts this overhead by 80-90%.

Real-World Cost Modeling

Here is what Snowflake actually costs at three different scales, with realistic usage patterns.

Startup Analytics Team (5 Users)

| Component | Configuration | Monthly Cost |
|---|---|---|
| Compute (queries) | 1 XS warehouse, ~6 hours/day active, auto-suspend 120s | $540 |
| Compute (loading) | 1 XS warehouse for ELT, 2 hours/day | $180 |
| Storage | 200GB raw (57GB compressed) | $5 |
| Snowpipe | 500 files/day | $15 |
| Data transfer | Minimal (same region) | $5 |
| Total | | $745/month |

This is the "well-configured" startup. The poorly-configured version (Medium warehouses, 5-minute auto-suspend, no resource monitors) typically costs $2,500-4,000/month for the same workload.

Mid-Size Data Team (20 Users)

| Component | Configuration | Monthly Cost |
|---|---|---|
| Compute (BI/reporting) | 1 Small warehouse, 10 hours/day | $1,800 |
| Compute (ad-hoc analytics) | 1 Small warehouse, 8 hours/day | $1,440 |
| Compute (ELT/loading) | 1 Medium warehouse, 4 hours/day | $1,440 |
| Compute (data science) | 1 Medium warehouse, 6 hours/day | $2,160 |
| Storage | 5TB raw (1.4TB compressed + Time Travel) | $177 |
| Snowpipe | 5,000 files/day | $45 |
| Automatic clustering | 3 large tables | $300 |
| Data transfer | Moderate | $50 |
| Total | | $7,412/month |

At this scale, the right-sizing conversation becomes critical. Many teams at this stage have warehouses one or two sizes too large and auto-suspend set too generously. We commonly see this same workload running at $12,000-18,000/month before optimization.
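To see how quickly "one size too large" adds up, the mid-size compute rows can be modeled as a small fleet. This is a sketch assuming the $3.00 Enterprise rate and a 30-day month. The warehouse labels and the `resize` helper are hypothetical, and it assumes active hours do not shrink when the warehouse grows, which is typical when idle time dominates.

```python
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}

# (label, size, active hours per day) taken from the mid-size table
MID_SIZE_FLEET = [
    ("bi_reporting", "S", 10),
    ("adhoc_analytics", "S", 8),
    ("elt_loading", "M", 4),
    ("data_science", "M", 6),
]


def fleet_compute_cost(fleet, price_per_credit=3.00, days=30):
    """Monthly compute cost in dollars for a list of warehouses."""
    return sum(CREDITS_PER_HOUR[size] * hours * days * price_per_credit
               for _label, size, hours in fleet)


def resize(fleet, steps):
    """Return the fleet with every warehouse moved `steps` sizes up."""
    order = list(CREDITS_PER_HOUR)
    return [(label, order[min(order.index(size) + steps, len(order) - 1)], hours)
            for label, size, hours in fleet]


if __name__ == "__main__":
    print(fleet_compute_cost(MID_SIZE_FLEET))             # 6840.0
    print(fleet_compute_cost(resize(MID_SIZE_FLEET, 1)))  # 13680.0
```

Adding the $572 of non-compute line items gives $7,412 for the right-sized fleet and $14,252 for the same fleet one size up, before any extra idle time from a generous auto-suspend.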

Enterprise Data Platform (100+ Users)

| Component | Configuration | Monthly Cost |
|---|---|---|
| Compute (BI dashboards) | 2 Medium multi-cluster (max 3), 12 hours/day | $8,640 |
| Compute (self-service analytics) | 1 Large multi-cluster (max 4), 10 hours/day | $7,200 |
| Compute (ELT pipelines) | 2 Large warehouses, 6 hours/day | $8,640 |
| Compute (data science/ML) | 1 XL warehouse, 4 hours/day | $5,760 |
| Compute (operational reporting) | 1 Small, 16 hours/day | $2,880 |
| Storage | 50TB raw (14.3TB compressed + Time Travel) | $1,801 |
| Snowpipe | 50,000 files/day | $450 |
| Automatic clustering | 10+ large tables | $2,000 |
| Materialized views | 5 MVs on frequently updated tables | $500 |
| Cross-region replication | DR to second region | $3,000 |
| Data transfer | Cross-region + external tools | $800 |
| Total | | $41,671/month |

Enterprise Snowflake bills of $40,000-80,000/month are common. The difference between $40K and $80K is almost always warehouse sizing and auto-suspend configuration, not data volume.

Snowflake vs BigQuery vs Redshift: 2026 Cost Comparison

This is the comparison most data teams need when choosing a cloud data warehouse. We modeled the same workload on each platform: 20 analysts, 5TB of data, and the equivalent of 50TB scanned by queries per month.

| Dimension | Snowflake (Enterprise) | BigQuery (Enterprise) | Redshift Serverless |
|---|---|---|---|
| Pricing model | Credits (time-based) | Per TB scanned or slots | RPU-hours |
| Idle cost | $0 (with auto-suspend) | $0 (on-demand) | $0 (serverless) |
| Compute for 50TB equivalent/month | ~$7,000-9,000 | ~$8,000-10,000 (slots) | ~$6,000-8,000 |
| Storage (5TB raw) | ~$177 (capacity) | ~$100 | ~$120 |
| Concurrency handling | Multi-cluster auto-scale | Slot autoscaling | Auto-scaling RPUs |
| Best for | Predictable workloads, multi-cloud | Ad-hoc exploration, GCP-native | AWS-native, existing Redshift users |
| Worst for | Idle-heavy workloads with bursts | Wide table full scans | Cross-cloud needs |
| Cost predictability | Medium (depends on usage) | Low on-demand, high on slots | Medium |
| Estimated monthly total | $7,500-9,500 | $8,500-10,500 | $6,500-8,500 |

The honest assessment:

- Snowflake wins when you need cross-cloud portability, fine-grained warehouse isolation per team, and predictable performance SLAs. It also wins when your team is comfortable with warehouse management.

- BigQuery wins when queries are sporadic and unpredictable, when you want zero infrastructure management, and when you are already on GCP. The 1TB/month free tier is excellent for prototyping.

- Redshift wins on raw price for heavy, sustained workloads within AWS, especially with reserved node pricing on provisioned clusters. But it requires more operational expertise.

For teams spending $10,000+/month on any data warehouse, our cloud cost optimization service typically finds 30-50% in savings through right-sizing, scheduling, and pricing model optimization.

7 Strategies to Cut Snowflake Costs by 40-60%

These are the exact optimizations we implement for clients during FinOps engagements. Listed in order of impact, fastest wins first.

1. Set Auto-Suspend to 60 Seconds (Minimum Viable)

The default auto-suspend for new warehouses is 600 seconds (10 minutes). For most analytics workloads, the sweet spot is 60-120 seconds. Every second beyond your last query is pure waste.

    ALTER WAREHOUSE analytics_wh SET AUTO_SUSPEND = 60;
    ALTER WAREHOUSE reporting_wh SET AUTO_SUSPEND = 60;
    ALTER WAREHOUSE etl_wh SET AUTO_SUSPEND = 120;

Expected savings: 20-40% for teams with bursty query patterns.

The one exception: if your warehouse resumes 100+ times per day for queries under 5 seconds each, every resume is billed for at least 60 seconds and starts with a cold local cache, so very aggressive suspension can cost more than keeping the warehouse warm between closely spaced queries. Monitor WAREHOUSE_METERING_HISTORY to find the optimal balance.
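One rough way to compare candidate settings before changing anything is to replay a query timeline against a simplified billing model. The sketch below is our own approximation, not Snowflake's billing logic: it assumes the warehouse resumes instantly, suspends exactly `auto_suspend` seconds after the last query finishes, and that every resume-to-suspend interval is billed per second with a 60-second floor. Real suspension timing is less precise, and cache warm-up is not modeled.

```python
def simulate_billed_seconds(queries, auto_suspend, minimum=60):
    """Total billed seconds for a list of (start, duration) queries."""
    total = 0
    resumed_at = None  # start of the current billing interval
    busy_until = None  # when the last running query finishes
    for start, duration in sorted(queries):
        if resumed_at is not None and start >= busy_until + auto_suspend:
            # The warehouse suspended before this query arrived.
            total += max(minimum, busy_until + auto_suspend - resumed_at)
            resumed_at = None
        if resumed_at is None:
            resumed_at, busy_until = start, start + duration
        else:
            busy_until = max(busy_until, start + duration)
    if resumed_at is not None:
        # Close the final interval once the last query has finished.
        total += max(minimum, busy_until + auto_suspend - resumed_at)
    return total


# Hypothetical traces: 100 three-second queries at a fixed spacing.
every_90s = [(i * 90, 3) for i in range(100)]
every_30s = [(i * 30, 3) for i in range(100)]

if __name__ == "__main__":
    for setting in (60, 120):
        print("90s gaps", setting, simulate_billed_seconds(every_90s, setting))
    for setting in (10, 60):
        print("30s gaps", setting, simulate_billed_seconds(every_30s, setting))
```

In this model, a 3-second query every 90 seconds bills 6,300 seconds at AUTO_SUSPEND = 60 and 9,033 at 120. The 60-second minimum only dominates when the warehouse suspends and resumes inside a minute: with a hypothetical 10-second setting and queries every 30 seconds, the model bills 6,000 seconds versus 3,033 at 60.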

2. Right-Size Every Warehouse Down One Step

The dirty secret of Snowflake sizing: a Small warehouse often runs queries only 10-30% slower than a Medium, at half the credit cost. For ad-hoc analytics and BI dashboards where a 5-second query taking 7 seconds is acceptable, the savings are enormous.

    -- Check current warehouse utilization over the last 14 days
    SELECT warehouse_name,
           AVG(avg_running)     AS avg_concurrent_queries,
           AVG(avg_queued_load) AS avg_queued
    FROM snowflake.account_usage.warehouse_load_history
    WHERE start_time > DATEADD('day', -14, CURRENT_TIMESTAMP())
    GROUP BY warehouse_name;

If avg_concurrent_queries is below 0.5 and avg_queued is 0, your warehouse is oversized. Drop it one size.

Expected savings: 30-50% (since each size step is exactly 2x cost).
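If you export the result of the utilization query, the rule of thumb above is easy to apply in bulk. A minimal sketch follows. The row layout mirrors the query's three output columns, while the function name and sample rows are made up for illustration.

```python
def downsize_candidates(rows, max_running=0.5):
    """Names of warehouses that match the rule of thumb above.

    rows: iterable of (warehouse_name, avg_concurrent_queries, avg_queued)
    tuples, for example the exported result of the utilization query.
    """
    return [name for name, running, queued in rows
            if running < max_running and queued == 0]


# Hypothetical sample data, not real measurements
sample = [
    ("ANALYTICS_WH", 0.21, 0.0),  # mostly idle, nothing queued
    ("ETL_WH", 1.80, 0.4),        # busy and queueing: leave alone
    ("REPORTING_WH", 0.35, 0.1),  # light, but queries sometimes queue
]
print(downsize_candidates(sample))  # ['ANALYTICS_WH']
```

Treat the output as a shortlist to test, since a 14-day average can hide a daily peak that still needs the larger size.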

3. Implement Resource Monitors With Hard Limits

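Resource monitors are Snowflake's built-in guardrail against runaway warehouse usage blowing your budget. They track warehouse credits only, so serverless features such as Snowpipe and automatic clustering need separate monitoring.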

    -- SUSPEND lets running queries finish; SUSPEND_IMMEDIATE cancels them
    CREATE RESOURCE MONITOR monthly_limit
      WITH CREDIT_QUOTA = 5000
      TRIGGERS
        ON 75 PERCENT DO NOTIFY
        ON 90 PERCENT DO NOTIFY
        ON 100 PERCENT DO SUSPEND;

    ALTER WAREHOUSE analytics_wh SET RESOURCE_MONITOR = monthly_limit;

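This does not directly save money, but it puts a hard ceiling on surprise bills. For scale, an engineer accidentally running a cartesian join on a Large warehouse for 6 hours costs 8 credits x 6 hours x $3 = $144 on Enterprise; the $15,000 surprises come from mistakes like that repeating unnoticed, which is exactly what a quota stops.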

4. Schedule Batch Jobs With Smaller Warehouses

ETL and batch processing do not need to finish in 5 minutes. A pipeline that takes 30 minutes on a Medium warehouse can run for 60 minutes on a Small at half the hourly rate (same work and roughly the same credits consumed, but spread over more time). This only works if you have scheduling flexibility.

    -- Use a smaller warehouse during off-peak hours
    ALTER WAREHOUSE etl_wh SET WAREHOUSE_SIZE = 'SMALL';

    -- Schedule at night when time pressure is lowest
    CREATE TASK nightly_transform
      WAREHOUSE = etl_wh
      SCHEDULE = 'USING CRON 0 2 * * * America/New_York'
    AS
      CALL run_daily_transforms();

    -- Tasks are created suspended; resume it so the schedule takes effect
    ALTER TASK nightly_transform RESUME;

Important nuance: Snowflake bills credits per second of warehouse time, and a larger warehouse processes more data per second. A query that scans 100GB takes roughly the same total credits whether you run it on Small (slower, more seconds) or Large (faster, fewer seconds). The savings come from reducing idle time between queries, not from the queries themselves. Use smaller warehouses when the workload is a stream of small queries with gaps between them.
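The nuance is easier to see with numbers. The sketch below assumes perfectly linear scaling for the batch job (half the size, twice the runtime) and, for the second case, a hypothetical day of 20 busy minutes plus 100 billed idle minutes that does not shrink on a larger warehouse. The function name is illustrative.

```python
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8}


def credits_used(size, billed_minutes):
    """Credits for a warehouse of `size` billed for `billed_minutes`."""
    return CREDITS_PER_HOUR[size] * billed_minutes / 60


# A batch job that scales linearly: half the size, twice the runtime.
medium_job = credits_used("M", 30)  # 2.0 credits
small_job = credits_used("S", 60)   # 2.0 credits

# A stream of short queries with gaps: the same billed minutes either way.
medium_day = credits_used("M", 20 + 100)  # 8.0 credits
small_day = credits_used("S", 20 + 100)   # 4.0 credits

print(medium_job, small_job, medium_day, small_day)
```

The batch job costs the same on either size, while the idle-heavy day costs twice as much on the Medium. That idle-heavy case is where downsizing pays.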

5. Consolidate Light Warehouses

Many teams create per-department or per-project warehouses that each run 1-2 hours per day. Each one hits the 60-second minimum on every resume. Consolidating 5 lightly-used XS warehouses into 1 Small warehouse often reduces total credits because you eliminate hundreds of 60-second minimums.

    -- Before: 5 XS warehouses, each resuming 50x/day
    -- Cost: 5 x 50 x 60 seconds = 250 minutes/day of minimums = 4.2 credits/day wasted
    -- After: 1 Small warehouse shared with routing
    -- Same queries, but warehouse stays warm between users
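The arithmetic in the comment generalizes to any fleet. This minimal sketch treats the full 60-second minimum of every resume as overhead, which makes it an upper bound, since part of each minute does useful work. The function name and defaults are ours.

```python
def resume_minimum_credits(warehouses, resumes_per_day,
                           credits_per_hour=1, minimum_seconds=60):
    """Daily credits billed for per-resume minimums alone (upper bound)."""
    billed_seconds = warehouses * resumes_per_day * minimum_seconds
    return billed_seconds / 3600 * credits_per_hour


daily = resume_minimum_credits(warehouses=5, resumes_per_day=50)
print(round(daily, 2))       # 4.17 credits/day
print(round(daily * 30, 1))  # 125.0 credits/month
```

At the approximate $3/credit Enterprise rate, 125 credits is about $375 a month spent on minimums across the five warehouses.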

6. Fix Clustering Costs

If automatic clustering is consuming more than 10% of your total credits, you are likely clustering tables that do not benefit from it or clustering on the wrong columns.

    -- Check clustering credit consumption over the last 30 days
    SELECT table_name,
           SUM(credits_used) AS total_credits
    FROM snowflake.account_usage.automatic_clustering_history
    WHERE start_time > DATEADD('day', -30, CURRENT_TIMESTAMP())
    GROUP BY table_name
    ORDER BY total_credits DESC;

Drop clustering on tables where queries already have good pruning (check SYSTEM$CLUSTERING_INFORMATION). For append-only tables with natural date ordering, clustering on the date column gives diminishing returns because partitions are already sorted.

7. Negotiate Committed Capacity Pricing

If you are spending more than $5,000/month on Snowflake on-demand, you should be on a capacity commitment. The savings are straightforward:

| Commitment Term | Typical Discount | Break-Even vs On-Demand |
|---|---|---|
| 1 year | 15-20% off | Month 1 |
| 2 year | 20-25% off | Month 1 |
| 3 year | 25-30% off | Month 1 |

There is virtually no risk in committing if your usage is stable or growing. Snowflake also allows "rolling over" unused credits to subsequent periods within the contract term in most agreements. Always negotiate rollover and true-up terms.
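"Stable or growing" can be made concrete. A commitment beats on-demand only if you consume more than (1 - discount) of what you committed to, as this small sketch shows. It ignores rollover and true-up terms, and both function names are hypothetical.

```python
def annual_savings(on_demand_monthly, discount):
    """Dollars saved per year if all committed usage is consumed."""
    return on_demand_monthly * discount * 12


def break_even_utilization(discount):
    """Share of the commitment you must use to beat on-demand.

    Using U of N committed credits costs (1 - discount) * N * price on a
    commitment and U * price on demand, so the two are equal when
    U / N = 1 - discount.
    """
    return 1 - discount


print(round(annual_savings(5000, 0.15)))         # 9000
print(round(break_even_utilization(0.20), 2))    # 0.8
```

At $5,000/month, a 15% discount is worth roughly $9,000 a year. With a 20% discount, the commitment still wins as long as actual usage stays above 80% of the committed amount.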

When Snowflake Is NOT Worth the Cost

We believe in honest recommendations, even when they mean less work for us.

Your workload is purely ad-hoc and unpredictable. If your data team runs 5-20 queries per day with wildly varying complexity and no scheduled jobs, BigQuery on-demand is almost certainly cheaper. You pay $0 when idle and only for bytes scanned. Snowflake's per-second billing still costs you at least 60 seconds per warehouse resume.

You are under 500GB of data with 1-2 users. At this scale, a PostgreSQL instance on RDS ($50-100/month) handles analytical queries fine. Snowflake's minimum viable monthly spend is realistically $200-500/month once you account for any sustained usage.

You need real-time sub-second queries on hot data. Snowflake's query latency floor is 1-3 seconds for warehouse resume plus query compilation. If your application needs sub-100ms responses, use a purpose-built OLAP engine like ClickHouse, Apache Druid, or a materialized cache layer. Snowflake is a batch and interactive analytics tool, not a real-time serving layer.

Your budget is under $500/month total. At this price point, consider DuckDB for local analytics, MotherDuck for cloud DuckDB, or BigQuery on-demand with the 1TB free tier. Snowflake's platform overhead (governance, access control, multi-cluster) provides value at scale that is wasted at startup budgets.

The Bottom Line

Snowflake's credit-based pricing is powerful once you understand it, but it punishes careless configuration harder than almost any other cloud service. A warehouse left one size too large or auto-suspend set 5 minutes too long can double your bill with zero change in query performance or data volume.

The fastest path to savings: set auto-suspend to 60 seconds on every warehouse, right-size down one step, and implement resource monitors today. These three changes take 15 minutes and typically save 30-50% in the next billing cycle.

If your Snowflake bill has grown faster than your data volume and you want a clear picture of where credits are leaking, take our free Cloud Waste and Risk Scorecard. We analyze your ACCOUNT_USAGE views, identify the specific warehouses and features consuming excess credits, and deliver a prioritized savings plan within 48 hours.

For teams spending $10,000+/month on Snowflake, our FinOps consulting engagement typically delivers 40-60% cost reduction with a 30% savings guarantee. The optimization pays for itself within the first month.
